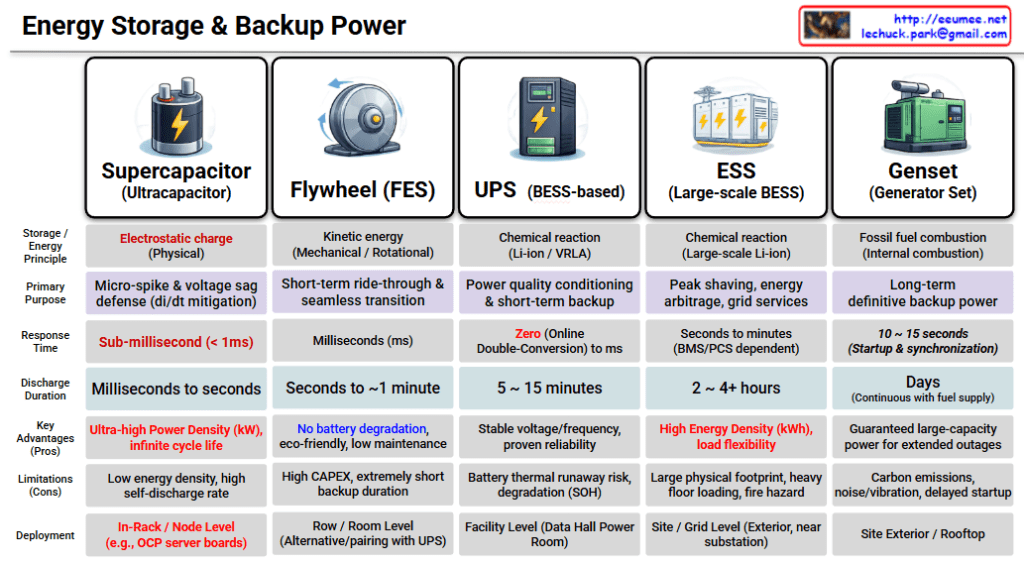

Energy Storage & Backup Power Comparison

This infographic compares the energy storage and backup power technologies used in mission-critical infrastructure such as data centers. Moving from left to right, response time gets slower while backup duration gets much longer.

1. Supercapacitor (Ultracapacitor)

- Energy Principle: Electrostatic charge (Physical)

- Primary Purpose: Micro-spike & voltage sag defense (di/dt mitigation)

- Response Time: Sub-millisecond (< 1ms)

- Discharge Duration: Milliseconds to seconds

- Key Advantages: Very high power density (kW/kg), very long cycle life (typically hundreds of thousands of cycles or more)

- Limitations: Low energy density, high self-discharge rate

- Deployment: In-Rack / Node Level (e.g., OCP server boards)
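To see why the discharge duration stays in the milliseconds-to-seconds range, here is a minimal sketch of the standard capacitor energy relation, E = ½·C·(V_max² − V_min²). The function names and the module figures (100 F, 48 V down to 24 V, 10 kW load) are hypothetical values chosen for illustration, not data from the infographic.

```python
def supercap_usable_energy_j(capacitance_f: float, v_max: float, v_min: float) -> float:
    """Usable energy in joules when discharging from v_max down to v_min."""
    if v_min < 0 or v_min > v_max:
        raise ValueError("expected 0 <= v_min <= v_max")
    return 0.5 * capacitance_f * (v_max ** 2 - v_min ** 2)


def ride_through_s(energy_j: float, load_w: float) -> float:
    """Seconds a constant load can be carried, ignoring conversion losses."""
    if load_w <= 0:
        raise ValueError("load_w must be positive")
    return energy_j / load_w


if __name__ == "__main__":
    # Hypothetical module: 100 F, usable window 48 V -> 24 V, 10 kW rack load.
    energy = supercap_usable_energy_j(100.0, 48.0, 24.0)
    print(f"usable energy: {energy:.0f} J")
    print(f"ride-through:  {ride_through_s(energy, 10_000.0):.2f} s")
```

Even a fairly large module only carries a rack-scale load for seconds, which is why supercapacitors are used for spikes and sags rather than backup.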

2. Flywheel (FES – Flywheel Energy Storage)

- Energy Principle: Kinetic energy (Mechanical / Rotational)

- Primary Purpose: Short-term ride-through & seamless transition

- Response Time: Milliseconds (ms)

- Discharge Duration: Seconds to ~1 minute

- Key Advantages: No battery degradation, eco-friendly, low maintenance

- Limitations: High CAPEX, extremely short backup duration

- Deployment: Row / Room Level (Used as an alternative or paired with UPS)
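The same back-of-the-envelope check works for a flywheel, using the rotational kinetic energy relation E = ½·I·ω². This is a sketch under assumed numbers: the rotor inertia (10 kg·m²), the speed window (10,000 down to 5,000 rpm), and the 250 kW load are hypothetical and do not describe any specific product.

```python
import math


def flywheel_usable_energy_j(inertia_kg_m2: float, rpm_max: float, rpm_min: float) -> float:
    """Usable kinetic energy in joules when slowing from rpm_max to rpm_min."""
    if rpm_min < 0 or rpm_min > rpm_max:
        raise ValueError("expected 0 <= rpm_min <= rpm_max")
    # Convert revolutions per minute to angular velocity in rad/s.
    omega_max = rpm_max * 2.0 * math.pi / 60.0
    omega_min = rpm_min * 2.0 * math.pi / 60.0
    return 0.5 * inertia_kg_m2 * (omega_max ** 2 - omega_min ** 2)


if __name__ == "__main__":
    # Hypothetical rotor: 10 kg*m^2, usable speed window 10,000 -> 5,000 rpm.
    energy = flywheel_usable_energy_j(10.0, 10_000.0, 5_000.0)
    load_w = 250_000.0  # assumed 250 kW critical load, losses ignored
    print(f"usable energy: {energy / 1e6:.2f} MJ")
    print(f"ride-through:  {energy / load_w:.1f} s")
```

Because energy scales with the square of speed, most of the stored energy sits in the top of the speed range, and the result still lands in the seconds-to-a-minute window listed above.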

3. UPS (BESS-based)

- Energy Principle: Chemical reaction (Li-ion / VRLA)

- Primary Purpose: Power quality conditioning & short-term backup

- Response Time: Zero transfer time (online double-conversion) to a few milliseconds (line-interactive or standby topologies)

- Discharge Duration: 5 ~ 15 minutes

- Key Advantages: Stable voltage/frequency, proven reliability

- Limitations: Battery thermal runaway risk, degradation (SOH – State of Health)

- Deployment: Facility Level (Data Hall Power Room)

4. ESS (Large-scale BESS)

- Energy Principle: Chemical reaction (Large-scale Li-ion)

- Primary Purpose: Peak shaving, energy arbitrage, grid services

- Response Time: Seconds to minutes (BMS/PCS dependent)

- Discharge Duration: 2 ~ 4+ hours

- Key Advantages: High energy capacity (kWh to MWh), load flexibility

- Limitations: Large physical footprint, heavy floor loading, fire hazard

- Deployment: Site / Grid Level (Exterior, near substation)

5. Genset (Generator Set)

- Energy Principle: Fossil fuel combustion (Internal combustion)

- Primary Purpose: Long-term definitive backup power

- Response Time: 10 ~ 15 seconds (Startup & synchronization)

- Discharge Duration: Days (Continuous with fuel supply)

- Key Advantages: Guaranteed large-capacity power for extended outages

- Limitations: Carbon emissions, noise/vibration, delayed startup

- Deployment: Site Exterior / Rooftop

Summary of the Spectrum

The hierarchy demonstrates a “Layered Defense” strategy for power reliability:

- Immediate (ms): Supercapacitors and Flywheels handle transient spikes and sags.

- Short-term (mins): UPS systems bridge the gap until secondary power kicks in.

- Long-term (hours/days): ESS shifts load through peak shaving and energy arbitrage, while Gensets provide the final safety net for prolonged outages.
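The layered idea can be sketched as a simple handoff check: each tier must keep delivering power until the next, slower tier is online. The `Tier` class and `handoff_gaps` function are hypothetical names for illustration. The numbers are taken from the ranges listed above, with assumptions where the source gives only an order of magnitude (1 s for "seconds", 10 ms for "milliseconds", 0 s for an online double-conversion UPS). ESS is left out because its listed purpose is peak shaving and grid services rather than outage backup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    response_s: float  # time after the outage before the tier delivers power
    duration_s: float  # how long the tier can then sustain the load


# Illustrative figures based on the ranges above; see the stated assumptions.
TIERS = [
    Tier("Supercapacitor", 0.001, 1.0),
    Tier("Flywheel", 0.01, 60.0),
    Tier("UPS", 0.0, 15 * 60.0),
    Tier("Genset", 15.0, float("inf")),
]


def handoff_gaps(tiers):
    """Return (start, end) intervals where no tier is supplying the load.

    Coverage is measured from the moment the fastest tier responds.
    """
    ordered = sorted(tiers, key=lambda t: t.response_s)
    covered_until = ordered[0].response_s + ordered[0].duration_s
    gaps = []
    for tier in ordered[1:]:
        if tier.response_s > covered_until:
            gaps.append((covered_until, tier.response_s))
        covered_until = max(covered_until, tier.response_s + tier.duration_s)
    return gaps


if __name__ == "__main__":
    print("all tiers:", handoff_gaps(TIERS))
    # Without a flywheel or UPS, the supercapacitor runs out long before
    # the generator has started and synchronized.
    print("supercap + genset only:", handoff_gaps([TIERS[0], TIERS[3]]))
```

With all tiers present the check finds no gap. Removing the middle layers exposes the interval between the end of the supercapacitor discharge and the generator coming online, which is the gap the UPS or flywheel exists to bridge.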
