The revised slide conveys the technical philosophy we discussed through a clear visual narrative. Below is a structured breakdown of the slide, organized by its logical flow, which can serve directly as a presentation script or an executive summary.

Slide Overview: The Absolute Value of “Definition” in the AI Era

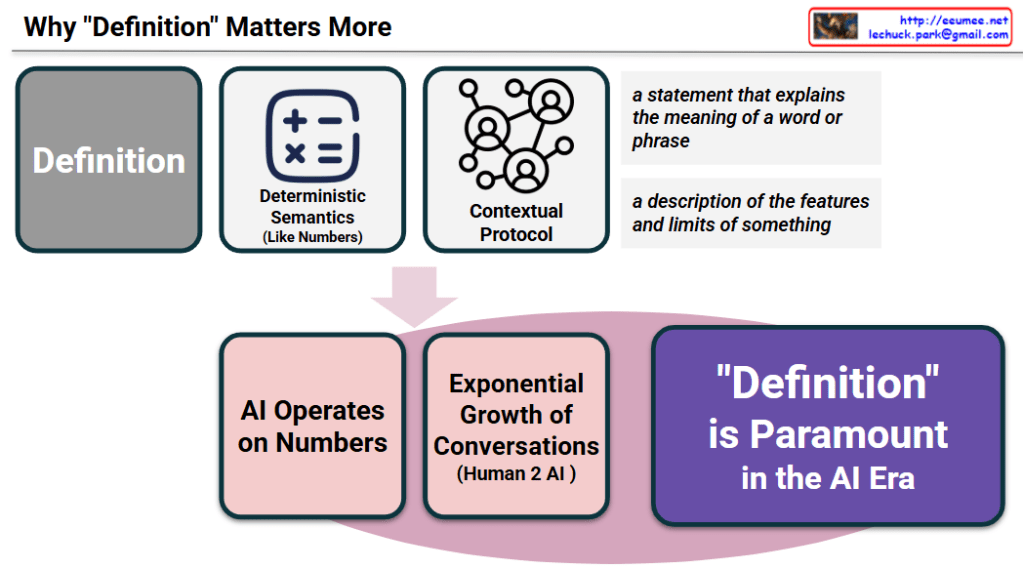

This slide illustrates why the traditional concept of a “definition” becomes critically important when applied to the new technological landscape of Artificial Intelligence. It follows a three-step logical progression: [The Nature of Concepts ➔ Characteristics of the AI Environment ➔ Final Conclusion].

1. Top Section: The Intrinsic Nature of a “Definition”

The upper half of the slide establishes the role of a “definition” from a system architecture perspective.

- Deterministic Semantics (Like Numbers): As noted in the dictionary excerpts on the right, a definition explains meanings and boundaries. In an AI system, a definition must function like a mathematical symbol ($+, -, \times, =$): an absolute, unchanging standard whose meaning is as exact as a number's. That is what “deterministic semantics” means here.

- Contextual Protocol: The network node icon signifies that definitions are no longer just dictionary entries. They act as fundamental “communication protocols” that govern, align, and regulate information exchange across complex networks and multiple AI agents.
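
As a rough illustration of a definition acting as a protocol between agents, here is a minimal Python sketch. It is not taken from the slide: the `Term` vocabulary, the `DEFINITIONS` wording, and the `parse_message` helper are all hypothetical names chosen for the example. The idea shown is that every term has exactly one fixed meaning, and a message using an undefined term is rejected rather than guessed at.

```python
from dataclasses import dataclass
from enum import Enum


class Term(Enum):
    """Hypothetical shared vocabulary: each member has exactly one meaning."""
    ACTIVE_USER = "active_user"
    CHURNED_USER = "churned_user"


# One fixed definition per term (illustrative wording, not from the slide).
DEFINITIONS = {
    Term.ACTIVE_USER: "Logged in at least once in the last 30 days",
    Term.CHURNED_USER: "No login for more than 90 days",
}


@dataclass(frozen=True)
class Message:
    """A message exchanged between agents, keyed by a defined term."""
    sender: str
    term: Term
    value: int


def parse_message(sender: str, raw_term: str, value: int) -> Message:
    """Accept a message only if its term belongs to the shared vocabulary."""
    try:
        term = Term(raw_term)  # exact match on the enum value, no fuzzy lookup
    except ValueError:
        raise ValueError(f"undefined term: {raw_term!r}") from None
    return Message(sender=sender, term=term, value=value)


if __name__ == "__main__":
    msg = parse_message("agent_a", "active_user", 42)
    print(msg.term.name, "means:", DEFINITIONS[msg.term])
```

In this sketch a near-miss such as `"active users"` raises an error instead of being silently mapped to something similar, which is one simple way to keep meaning deterministic across agents.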

2. Bottom-Left Section: The New Paradigm of the AI Environment

Moving through the central arrow, the slide transitions to the unique conditions of the current AI era where these definitions must be applied.

- AI Operates on Numbers: AI does not comprehend text or context through human intuition; it processes information strictly as vectorized, numerical data.

- Exponential Growth of Conversations (Human 2 AI): Concurrently, the frequency and volume of interactions, especially between humans and AI and increasingly among AI agents themselves, are growing at a rapidly accelerating rate.
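
To make "AI operates on numbers" concrete, here is a small Python sketch of comparing words as vectors. The three-dimensional vectors in `EMBEDDINGS` are made up for illustration and do not come from any real model; real embeddings are learned and have far more dimensions. The `cosine_similarity` function is the standard formula, written out by hand.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up toy vectors (an assumption for this example, not real embeddings).
EMBEDDINGS = {
    "definition": [0.9, 0.1, 0.0],
    "meaning": [0.8, 0.2, 0.1],
    "banana": [0.0, 0.1, 0.9],
}

if __name__ == "__main__":
    for word in ("meaning", "banana"):
        score = cosine_similarity(EMBEDDINGS["definition"], EMBEDDINGS[word])
        print(f"definition vs {word}: {score:.3f}")
```

With these toy values, "definition" scores much closer to "meaning" than to "banana". The system never sees the words themselves, only how close their numbers are, which is why a loosely defined term can drift toward an unintended neighbor.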

3. Bottom-Right Section: The Core Conclusion

- “Definition” is Paramount in the AI Era: When machines process information numerically and the volume of communication grows exponentially, even a small conceptual discrepancy can propagate across many interactions and surface as a system failure or a hallucination. Establishing clear definitions to structure data and strictly control meaning is therefore the paramount requirement for a stable, reliable, and functional AI ecosystem.

Overall Summary

As AI exponentially scales the volume of our daily communications and processes them as numerical vectors, linguistic ambiguity becomes the greatest systemic risk. A strictly defined semantic baseline, the “Definition”, is no longer just a linguistic tool but an essential engineering protocol for preventing AI hallucinations and ensuring precise, automated operations.

#ArtificialIntelligence #DataArchitecture #DeterministicSemantics #SemanticAnchor #DataGovernance #Definition

With Gemini