The Evolution of LLM Utilization: Toward Autonomous Agents

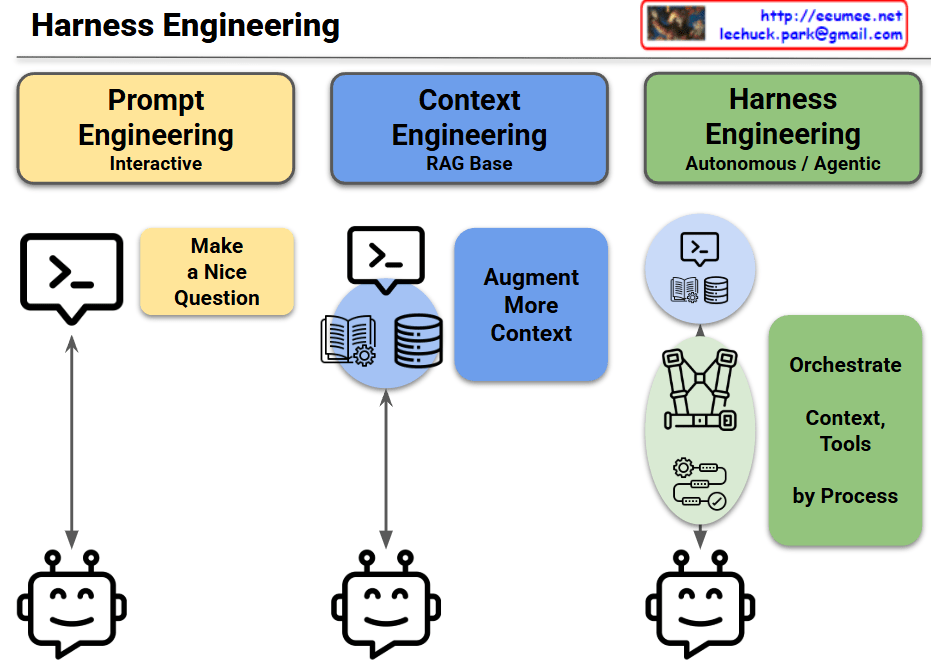

This slide illustrates a roadmap for adopting Large Language Models (LLMs) within enterprise operations, moving from basic user prompts to fully automated, agentic workflows. The roadmap is broken down into three distinct phases:

- Phase 1: Prompt Engineering (Interactive). This represents the foundational stage of LLM interaction. At this level, the quality of the output depends entirely on human input: the ability to “Make a Nice Question.” It is a strictly interactive, 1:1 process that relies solely on the model’s pre-trained knowledge, which limits its capability to resolve complex, real-time operational issues.

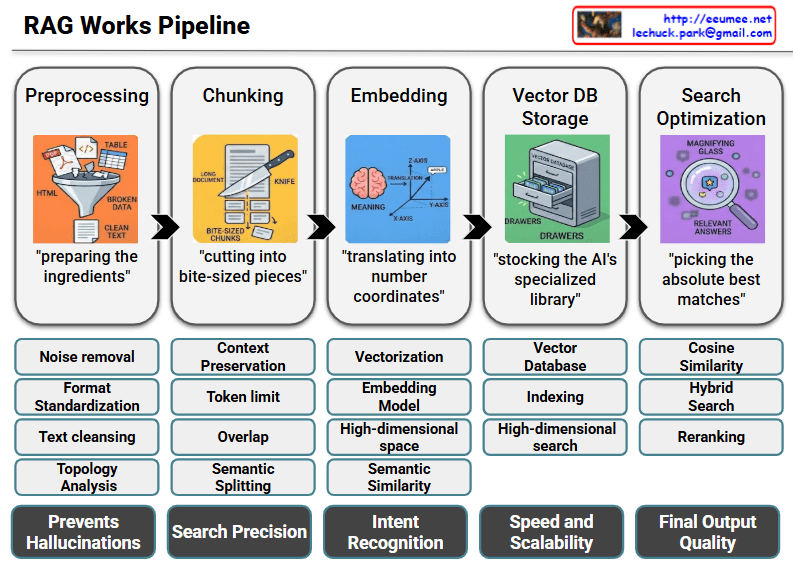

- Phase 2: Context Engineering (RAG Base). The second stage addresses the limitations of a standalone LLM by injecting trusted external data. Using a Retrieval-Augmented Generation (RAG) base, the system retrieves specific domain knowledge (represented by the manual and database icons) to “Augment More Context.” This grounds the model's answers in the retrieved sources, which can reduce hallucinations and make the insights more accurate and domain-specific.

- Phase 3: Harness Engineering (Autonomous / Agentic). This is the ultimate target state. Moving beyond simply generating text, the AI evolves into a proactive agent. The “harness” icon symbolizes a secure, controlled framework where the AI can independently “Orchestrate Context, Tools by Process.” In this autonomous phase, the system not only understands the problem but also safely executes predefined workflows and controls physical or software tools to resolve issues with minimal human intervention.
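
To make the three phases more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not something taken from the slide: `stub_llm`, `KNOWLEDGE_BASE`, `ALLOWED_TOOLS`, and `plan` are all hypothetical names, the model call is a stub that only echoes the prompt size, retrieval is a simple keyword match rather than a real vector search, and the planner is hard-coded where a real agent would ask the model to choose its steps.

```python
from typing import Callable, Dict, List


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; it only reports prompt size."""
    return f"[model answer based on {len(prompt)} chars of prompt]"


# Phase 1: prompt engineering. The question alone is sent to the model.
def phase1_prompt(question: str) -> str:
    return stub_llm(question)


# Phase 2: context engineering. Retrieve trusted snippets, then augment the prompt.
# The runbook entries below are made-up sample data.
KNOWLEDGE_BASE: Dict[str, str] = {
    "disk": "Runbook: if disk usage exceeds 90%, rotate the application logs.",
    "latency": "Runbook: if latency is high, check the connection pool size.",
}


def retrieve(question: str) -> List[str]:
    """Naive keyword retrieval (assumption: a real system would use a search index)."""
    q = question.lower()
    return [doc for key, doc in KNOWLEDGE_BASE.items() if key in q]


def phase2_rag(question: str) -> str:
    context = "\n".join(retrieve(question))
    return stub_llm(f"Context:\n{context}\n\nQuestion: {question}")


# Phase 3: harness engineering. The agent may only run tools on an allowlist.
ALLOWED_TOOLS: Dict[str, Callable[[], str]] = {
    "rotate_logs": lambda: "logs rotated",
    "check_pool": lambda: "pool size is 10",
}


def plan(question: str) -> List[str]:
    """Hypothetical hard-coded planner standing in for model-driven planning."""
    q = question.lower()
    steps: List[str] = []
    if "disk" in q:
        steps.append("rotate_logs")
    if "latency" in q:
        steps.append("check_pool")
    # Deliberately unsafe step, included only to show the harness blocking it.
    steps.append("delete_database")
    return steps


def phase3_harness(question: str) -> List[str]:
    """Run each planned step, refusing anything outside the allowlist."""
    log: List[str] = []
    for step in plan(question):
        tool = ALLOWED_TOOLS.get(step)
        if tool is None:
            log.append(f"{step}: blocked (not in allowlist)")
        else:
            log.append(f"{step}: {tool()}")
    return log


if __name__ == "__main__":
    question = "Disk is almost full, what should we do?"
    print(phase1_prompt(question))
    print(phase2_rag(question))
    print(phase3_harness(question))
```

The design point is in the third function: the harness, not the model, decides which actions are permitted, so an unexpected step is rejected and logged rather than executed.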

#LLM #AIArchitecture #AIOps #AutonomousAgents #RAG #ContextEngineering #HarnessEngineering #AgenticAI #ITOperations #TechLeadership

With Gemini