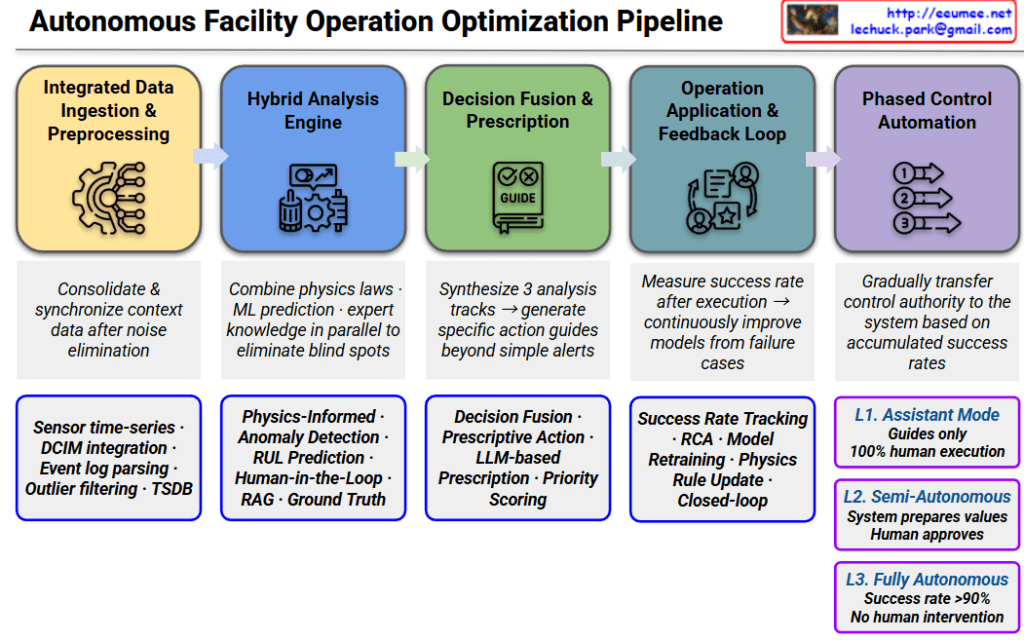

Autonomous Facility Operation Optimization Pipeline

This pipeline is a five-stage workflow for moving facility management from manual oversight to AI-driven autonomy in controlled phases. It relies on hybrid modeling (physical laws, machine learning, and expert knowledge) to keep its recommendations reliable.

1. Integrated Data Ingestion & Preprocessing

- Role: Consolidates diverse data streams into a synchronized, high-fidelity format, filtering out noise and outliers before storage.

- Key Components: Sensor time-series data, DCIM integration, Event log parsing, Outlier filtering, and TSDB (Time Series Database).
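
As an illustration of the outlier-filtering step, here is a minimal sketch in Python. It assumes readings arrive as `(timestamp, value)` pairs and uses a median/MAD-based modified z-score. The function name `filter_outliers` and the `3.5` cutoff are illustrative choices, not something the pipeline specifies.

```python
from statistics import median


def filter_outliers(readings, threshold=3.5):
    """Drop readings whose modified z-score exceeds the threshold.

    readings: list of (timestamp, value) tuples from a single sensor.
    threshold: assumed cutoff; tune per sensor type.
    """
    values = [v for _, v in readings]
    med = median(values)
    # Median absolute deviation: a spread estimate that spikes barely affect.
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return list(readings)  # no spread to judge against; keep everything
    # 0.6745 rescales MAD so the score is comparable to a standard z-score.
    return [
        (t, v) for t, v in readings
        if 0.6745 * abs(v - med) / mad <= threshold
    ]


# Hypothetical inlet-temperature samples with one sensor glitch at t=3.
samples = [(0, 21.0), (1, 21.2), (2, 20.9), (3, 85.0), (4, 21.1)]
clean = filter_outliers(samples)
```

A median-based filter is used here because a single large spike would inflate a mean and standard deviation enough to hide itself. A production system would more likely apply this over a sliding window before writing to the TSDB.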

2. Hybrid Analysis Engine

- Role: Eliminates analytical blind spots by running physical laws, machine learning predictions, and expert knowledge in parallel.

- Key Components: Physics-Informed Machine Learning (PIML), Anomaly Detection, RUL (Remaining Useful Life) Prediction, and RAG-enhanced Ground Truth analysis.
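
The idea of running several tracks side by side can be sketched as follows. This is a simplified illustration under stated assumptions, not the pipeline's actual engine: the physics track uses the steady-state heat balance Q = ṁ · c_p · ΔT for air, the "ML" track is a plain z-score standing in for a learned model, and the rule track is a fixed limit. All function names, tolerances, and limits are hypothetical.

```python
from statistics import mean, stdev

AIR_CP = 1005.0  # J/(kg*K), approximate specific heat of air


def physics_track(inlet_c, power_w, airflow_kg_s, measured_outlet_c, tol_c=2.0):
    """Compare the measured outlet temperature with the heat-balance estimate."""
    expected = inlet_c + power_w / (airflow_kg_s * AIR_CP)
    residual = measured_outlet_c - expected
    return {"expected_c": expected, "residual_c": residual,
            "anomaly": abs(residual) > tol_c}


def ml_track(history, measured_outlet_c, z_limit=3.0):
    """Stand-in for a learned model: z-score against recent history.

    Needs at least two historical readings with non-zero spread.
    """
    z = (measured_outlet_c - mean(history)) / stdev(history)
    return {"z": z, "anomaly": abs(z) > z_limit}


def rule_track(measured_outlet_c, limit_c=45.0):
    """Expert rule: a fixed alarm limit (the value here is an assumption)."""
    return {"anomaly": measured_outlet_c > limit_c}


def analyze(inlet_c, power_w, airflow_kg_s, history, measured_outlet_c):
    """Run every track on the same observation and return all verdicts."""
    return {
        "physics": physics_track(inlet_c, power_w, airflow_kg_s, measured_outlet_c),
        "ml": ml_track(history, measured_outlet_c),
        "rules": rule_track(measured_outlet_c),
    }


result = analyze(
    inlet_c=22.0, power_w=10050.0, airflow_kg_s=1.0,
    history=[31.8, 32.1, 32.0, 31.9, 32.2], measured_outlet_c=38.0,
)
```

In this example the fixed rule stays silent because 38 °C is under its limit, while the physics and statistical tracks both flag the reading. That is the kind of blind spot the parallel design is meant to cover.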

3. Decision Fusion & Prescription

- Role: Synthesizes multi-track analysis to move beyond simple alerts, generating specific, actionable “prescriptions.”

- Key Components: Decision Fusion, Prescriptive Action, LLM-based Prescription, and Priority Scoring to rank urgency.
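
One simple way to picture decision fusion and priority scoring is a weighted average of per-track confidences, scaled by how critical the asset is. The sketch below assumes exactly that. The weights, the `criticality` field, and the asset names are invented for illustration, and the LLM-based prescription step is left out.

```python
from dataclasses import dataclass

# Illustrative track weights; a real system would calibrate these.
WEIGHTS = {"physics": 0.4, "ml": 0.4, "rules": 0.2}


@dataclass
class Finding:
    asset: str
    track_scores: dict   # track name -> anomaly confidence in [0, 1]
    criticality: float   # assumed asset importance weight in [0, 1]


def fuse(track_scores, weights=WEIGHTS):
    """Weighted average over the tracks that actually reported a score."""
    total = sum(weights[t] for t in track_scores)
    return sum(weights[t] * s for t, s in track_scores.items()) / total


def prioritize(findings):
    """Return (priority, asset) pairs, most urgent first."""
    scored = [(fuse(f.track_scores) * f.criticality, f.asset) for f in findings]
    return sorted(scored, reverse=True)


findings = [
    Finding("UPS-01", {"physics": 0.3, "ml": 0.5, "rules": 0.2}, criticality=0.9),
    Finding("CRAH-03", {"physics": 0.9, "ml": 0.8, "rules": 0.0}, criticality=1.0),
    Finding("PDU-07", {"ml": 0.95}, criticality=0.5),
]
ranking = prioritize(findings)
```

Normalizing by the weights of the tracks that reported means an asset covered by only one track (like the hypothetical `PDU-07`) is not penalized for the missing ones.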

4. Operation Application & Feedback Loop

- Role: Establishes a closed-loop system that measures success rates post-execution to continuously refine models.

- Key Components: Success Rate Tracking, RCA (Root Cause Analysis), Model Retraining, and Physics/Rule updates based on real-world performance.
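
A minimal sketch of success-rate tracking, assuming each executed prescription is later marked as succeeded or failed. The class name, window size, and retraining threshold are hypothetical. The post does not say how success is measured or when retraining is triggered.

```python
from collections import deque


class SuccessTracker:
    """Rolling success rate over the most recent prescription outcomes."""

    def __init__(self, window=50, retrain_below=0.8):
        self.outcomes = deque(maxlen=window)  # oldest outcomes fall off
        self.retrain_below = retrain_below    # assumed retraining trigger

    def record(self, succeeded):
        self.outcomes.append(bool(succeeded))

    @property
    def success_rate(self):
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        # Only judge once the window is full, to avoid reacting to a few samples.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.success_rate < self.retrain_below


tracker = SuccessTracker(window=4, retrain_below=0.75)
for outcome in (True, True, False):
    tracker.record(outcome)
early_flag = tracker.needs_retraining()   # window not yet full
tracker.record(False)
late_flag = tracker.needs_retraining()    # 2 of 4 succeeded
```

A rolling window is used so that the rate reflects recent behavior rather than the system's whole history, which matters once models and rules start being updated.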

5. Phased Control Automation

- Role: Transfers control authority from humans to AI in stages, based on accumulated performance data, to limit risk.

- Automation Levels:

  - L1. Assistant Mode: System provides guidance only; 100% human execution.

  - L2. Semi-Autonomous: System prepares optimized values; a human gives final approval.

  - L3. Fully Autonomous: System operates without human intervention (enabled once the success rate exceeds 90%).
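
The level gating can be sketched as a simple threshold check. Only the 90% threshold for L3 comes from the description above. The 70% boundary between L1 and L2 and the function names are assumptions made for this example.

```python
def automation_level(success_rate, l3_threshold=0.90, l2_threshold=0.70):
    """Map a tracked success rate (0..1) to an automation level.

    l3_threshold follows the stated ">90%" rule; l2_threshold is assumed.
    """
    if success_rate > l3_threshold:
        return "L3"  # fully autonomous
    if success_rate > l2_threshold:
        return "L2"  # system proposes, human approves
    return "L1"      # guidance only, human executes


def requires_human_approval(success_rate):
    """Anything below L3 keeps a human in the loop."""
    return automation_level(success_rate) != "L3"


levels = [automation_level(r) for r in (0.55, 0.82, 0.90, 0.95)]
```

Note that a rate of exactly 90% stays at L2 here, since the stated condition is strictly greater than 90%. A real deployment would likely also require a minimum number of tracked actions before promoting a level.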

Strategic Insight

The hallmark of this architecture is the integration of Physics-Informed ML and LLM-based reasoning. By combining the consistency of physical laws with the adaptive reasoning of Large Language Models, the pipeline aims to reduce the “black box” problem of purely data-driven AI: predictions are constrained by physics, and prescriptions are expressed in readable language. That makes it a better fit for mission-critical infrastructure such as AI data centers.

#DataCenter #AIOps #AutonomousInfrastructure #PhysicsInformedML #DigitalTwin #LLM #PredictiveMaintenance #DataCenterOptimization #TechVisualization #SmartFacility #EngineeringExcellence