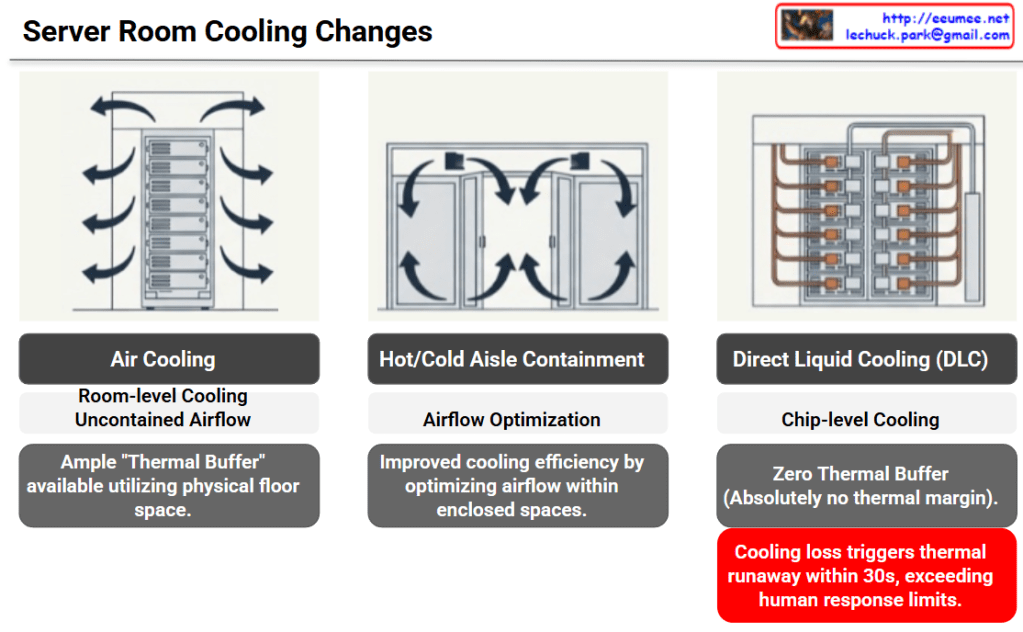

The image illustrates the evolution of data center cooling across three stages and the risk that grows with it: a drastic reduction in the available thermal buffer.

Here is a breakdown of each cooling method shown:

1. Air Cooling

- Method: The most traditional approach, providing room-level cooling with uncontained airflow.

- Characteristics: The air volume of the server room acts as a sponge for heat, so the floor space itself provides an ample “Thermal Buffer.” If the cooling system fails, temperatures take time to reach critical levels, which leaves operators a window to respond.

2. Hot/Cold Aisle Containment

- Method: Physically separates the cold intake air from the hot exhaust air to prevent them from mixing.

- Characteristics: Focuses on Airflow Optimization. It significantly improves cooling efficiency by directing and controlling the airflow within enclosed spaces.

3. Direct Liquid Cooling (DLC)

- Method: A high-density, chip-level cooling approach that brings liquid coolant directly to the primary heat-generating components (like CPUs or GPUs).

- Characteristics: Cooling efficiency is maximized, but there is Zero Thermal Buffer. The surrounding air and room volume provide no thermal margin at all.
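To make the idea of a shrinking thermal buffer concrete, here is a minimal back-of-the-envelope sketch. It uses the lumped relation time = heat capacity × allowed temperature rise ÷ heat load, and assumes that no heat is removed after the failure. The function names and every input value (room volume, coolant mass, heat loads, allowed rise) are illustrative assumptions, not figures from the slide, and the model ignores the thermal mass of equipment and building structure.

```python
# Rough, lumped estimate of how long a thermal buffer lasts after cooling stops.
# All numbers below are hypothetical inputs chosen only for illustration.

AIR_DENSITY_KG_PER_M3 = 1.2    # approximate density of air at room conditions
AIR_CP_J_PER_KG_K = 1005.0     # approximate specific heat of air
WATER_CP_J_PER_KG_K = 4186.0   # approximate specific heat of water


def air_heat_capacity_j_per_k(volume_m3: float) -> float:
    """Heat capacity of the room air alone (structure and equipment ignored)."""
    return volume_m3 * AIR_DENSITY_KG_PER_M3 * AIR_CP_J_PER_KG_K


def coolant_heat_capacity_j_per_k(mass_kg: float) -> float:
    """Heat capacity of stagnant water-like coolant left in the loop."""
    return mass_kg * WATER_CP_J_PER_KG_K


def seconds_to_critical(heat_capacity_j_per_k: float,
                        allowed_rise_k: float,
                        heat_load_w: float) -> float:
    """time = C * dT / P, assuming the heat load is absorbed with no removal."""
    if heat_load_w <= 0:
        raise ValueError("heat_load_w must be positive")
    return heat_capacity_j_per_k * allowed_rise_k / heat_load_w


# Hypothetical air-cooled room: 1000 m^3 of air, 15 K of headroom, 50 kW load.
room_s = seconds_to_critical(air_heat_capacity_j_per_k(1000.0), 15.0, 50_000.0)

# Hypothetical DLC rack: 5 kg of stagnant coolant, 20 K of headroom, 80 kW load.
rack_s = seconds_to_critical(coolant_heat_capacity_j_per_k(5.0), 20.0, 80_000.0)

print(f"room air buffer: {room_s:.1f} s")
print(f"DLC loop buffer: {rack_s:.1f} s")
```

With these assumed inputs the room air alone buys roughly six minutes, while the stagnant coolant buys only about five seconds. Real facilities will differ, but the gap in order of magnitude is the point the slide is making.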

💡 Core Implication (The Red Warning Box)

The ultimate takeaway of this slide is highlighted in the bottom right corner.

In a DLC environment, a loss of cooling triggers thermal runaway within 30 seconds. This speed fundamentally exceeds human response limits. It is no longer feasible for a facility manager to hear an alarm, diagnose the issue, and manually intervene before catastrophic failure occurs in modern, high-density servers.
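As a rough illustration of what taking the human out of the loop could look like, the sketch below maps each sensor reading directly to a protective action. It is a hypothetical example under stated assumptions: the `Reading` fields, the `Action` values, and all thresholds are invented for illustration and do not correspond to any real DCIM or BMS API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, Optional, Tuple


class Action(Enum):
    NONE = "none"
    THROTTLE = "throttle"   # e.g. cap power on the affected rack
    SHUTDOWN = "shutdown"   # e.g. emergency power-off of the affected rack


@dataclass(frozen=True)
class Reading:
    t_s: float          # seconds since monitoring started
    flow_lpm: float     # coolant flow in liters per minute
    chip_temp_c: float  # hottest chip temperature in the rack


def decide(reading: Reading,
           min_flow_lpm: float = 10.0,       # hypothetical threshold
           throttle_temp_c: float = 85.0,    # hypothetical threshold
           shutdown_temp_c: float = 95.0) -> Action:  # hypothetical threshold
    """Pick an action from a single reading, with no human confirmation step."""
    if reading.chip_temp_c >= shutdown_temp_c:
        return Action.SHUTDOWN
    if reading.flow_lpm < min_flow_lpm or reading.chip_temp_c >= throttle_temp_c:
        return Action.THROTTLE
    return Action.NONE


def first_response(readings: Iterable[Reading]) -> Optional[Tuple[float, Action]]:
    """Return the time and action of the first reading that triggers a response."""
    for reading in readings:
        action = decide(reading)
        if action is not Action.NONE:
            return reading.t_s, action
    return None


# Simulated one-second samples: flow collapses at t = 2 s.
samples = [
    Reading(0.0, 30.0, 60.0),
    Reading(1.0, 30.0, 61.0),
    Reading(2.0, 2.0, 70.0),
    Reading(3.0, 0.0, 96.0),
]
print(first_response(samples))
```

The design choice worth noting is that the sketch reacts to loss of flow itself, not only to rising temperature, so the response starts on the first bad sample instead of after the chips have already heated up.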

Summary

- Evolution of Efficiency: Data center cooling is shifting from broad, room-level air cooling to highly efficient, chip-level Direct Liquid Cooling (DLC).

- Loss of Thermal Buffer: This transition completely eliminates the physical thermal margin, meaning there is zero room for error if the cooling system fails.

- Automation is Mandatory: Because a loss of cooling in a DLC environment triggers thermal runaway within 30 seconds, faster than humans can react, AI-driven automated operational agents are now essential to protect infrastructure.

#DataCenter #DataCenterCooling #DirectLiquidCooling #ThermalRunaway #AIOps #InfrastructureManagement

With Gemini