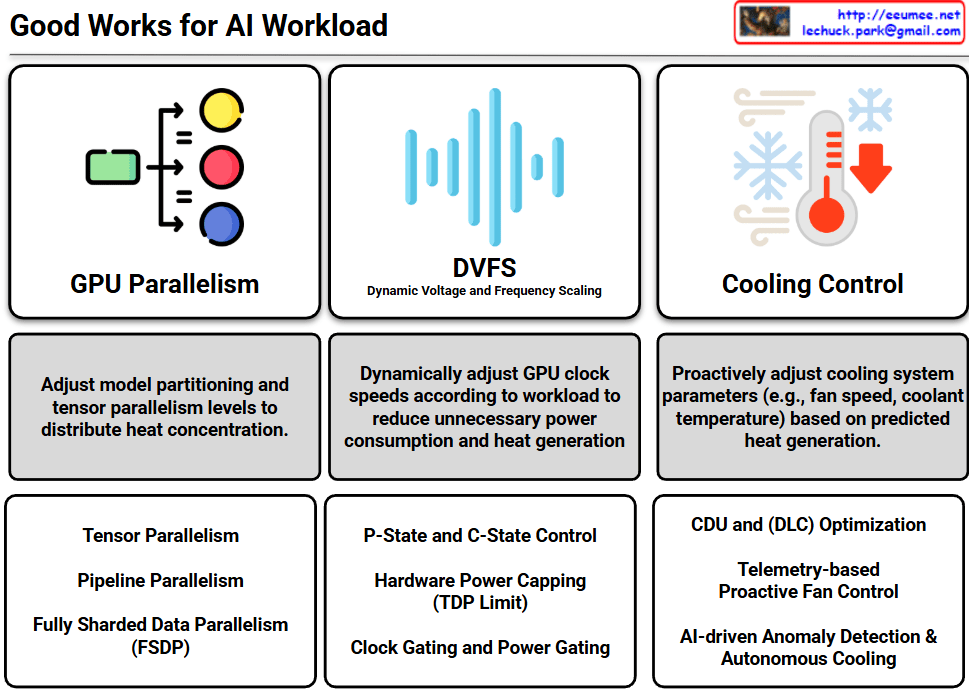

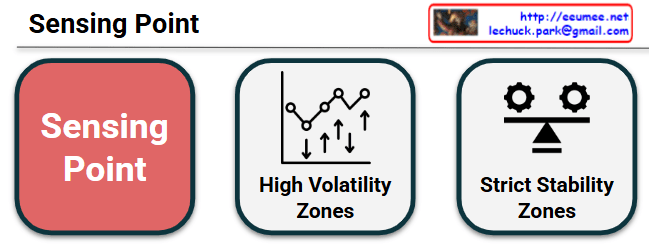

This image is a diagram that contrasts two core characteristics of “Sensing Points,” the locations where data is collected and status is monitored within a system or infrastructure environment.

Here is a breakdown of each component:

- Sensing Point (Red Block): The central theme of this diagram. It represents the measurement points where physical and logical sensors are deployed to collect data for system monitoring and autonomous operations.

- High Volatility Zones: Represented by a fluctuating line graph and up/down arrows. These are highly dynamic areas where state changes are large and rapid, such as sudden surges in GPU power consumption or localized thermal changes driven by heavy AI workloads. The primary goal of sensing in these zones is to minimize data collection latency (the Time Constant) so that rapid changes are captured as they happen and the system can respond quickly.

- Strict Stability Zones: Represented by interlocking gears and a balanced scale. This refers to the foundational areas of the system where balance must be strictly maintained, such as the baseline temperature of a cooling system or the main power distribution network. Because volatility must be tightly controlled here, the purpose of sensing is focused on ensuring the overall integrity of the infrastructure by detecting subtle imbalances or early signs of anomalies.
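
To make the contrast between the two zones concrete, here is a minimal Python sketch. It is not taken from the diagram: the function names, thresholds, sampling intervals, and sample readings are all illustrative assumptions. It pairs a rate-of-change check (for high volatility zones, sampled quickly) with an exponentially weighted moving average (EWMA) drift check (for strict stability zones, where slow deviation from a baseline matters more than speed).

```python
def rapid_change_alerts(samples, interval_s, max_rate):
    """Return indices where the change per second exceeds max_rate.

    Intended for high volatility zones: a short interval_s keeps
    detection latency low. All thresholds here are assumptions.
    """
    alerts = []
    for i in range(1, len(samples)):
        rate = abs(samples[i] - samples[i - 1]) / interval_s
        if rate > max_rate:
            alerts.append(i)
    return alerts


def drift_alerts(samples, baseline, tolerance, alpha=0.2):
    """Return indices where the EWMA deviates from baseline by more than tolerance.

    Intended for strict stability zones: smoothing suppresses noise so that
    a small but persistent imbalance still shows up.
    """
    alerts = []
    ewma = baseline
    for i, value in enumerate(samples):
        ewma = alpha * value + (1 - alpha) * ewma
        if abs(ewma - baseline) > tolerance:
            alerts.append(i)
    return alerts


# Hypothetical readings: GPU power in watts sampled every 0.1 s,
# coolant supply temperature in degrees Celsius sampled every 1 s.
gpu_power_w = [300, 310, 650, 640]
coolant_temp_c = [18.0, 18.2, 18.4, 18.6, 18.8, 19.0]

surge = rapid_change_alerts(gpu_power_w, interval_s=0.1, max_rate=1000)
drift = drift_alerts(coolant_temp_c, baseline=18.0, tolerance=0.3)
```

With these made-up readings, `surge` flags the jump from 310 W to 650 W (index 2), while `drift` flags the last two coolant samples (indices 4 and 5). The coolant never changes quickly enough to trip a rate-of-change check, which is why the two zones call for different sensing logic.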

Comprehensive Analysis:

Ultimately, this infographic illustrates a monitoring strategy for managing high-density environments such as AI Data Centers. It splits the monitoring targets into “areas requiring immediate tracking due to high volatility” and “areas requiring homeostasis through strict control,” which makes the structure of the strategy easy to grasp at a glance. The takeaway is that each domain needs its own tailored measurement and operational standards (like AIOps).

#DataCenter #InfrastructureArchitecture #SensingPoint #Telemetry #SystemMonitoring #AutonomousOperations #HighDensityComputing #TechVisualized

With Gemini