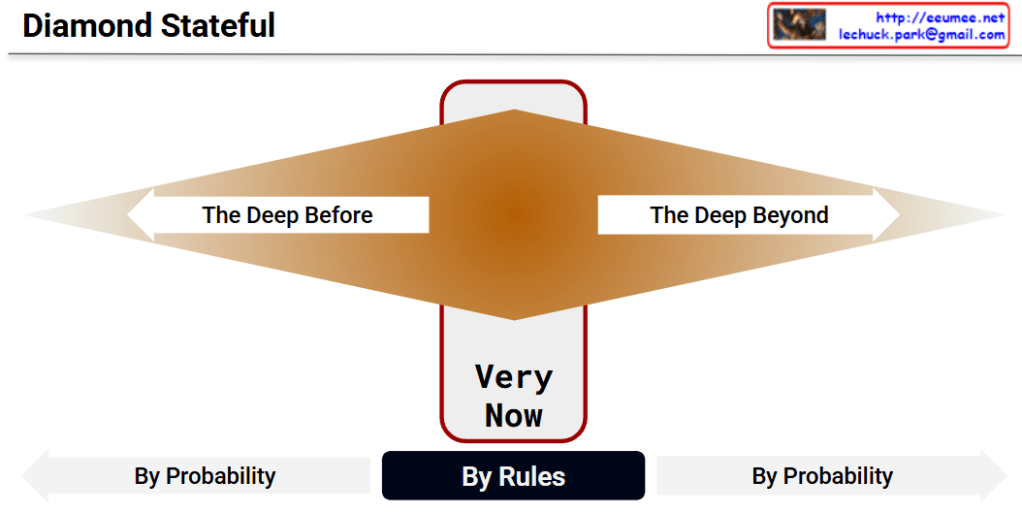

Understanding the “Diamond Stateful” Framework

This diagram, titled “Diamond Stateful,” represents a conceptual framework for managing time, context, and system state. It shows how deterministic control and probabilistic reasoning are balanced across the past, present, and future.

Here is a breakdown of the core components:

- The Present (“Very Now”): The widest point of the diamond, its vertical center, represents the exact current moment. This state is governed “By Rules”: the present system is deterministic, strictly defined, and “Stateful.” Because the current state is held explicitly, we can read and change it with certainty, using explicit logic and operational rules.

- The Past (“The Deep Before”): The left side of the diamond tapers off into the past. The further back we look, the less complete and reliable historical context and data become. Reconstructing or interpreting the past is therefore governed “By Probability” (e.g., statistical inference, heuristics, or context retrieval).

- The Future (“The Deep Beyond”): The right side of the diamond tapers off into the future. Because the future has not yet happened, upcoming states cannot be predicted, nor new outcomes generated, with rigid rules. The future is therefore also handled “By Probability” (e.g., predictive algorithms, generative AI, or statistical forecasting).
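To make the three regions concrete, here is a minimal Python sketch under stated assumptions. The order lifecycle (`pending`, `paid`, `shipped`, `delivered`, `cancelled`), the names `ALLOWED`, `apply_event`, `past_weights`, and `forecast`, the exponential decay with time constant `tau`, and the first-order Markov forecast are all hypothetical choices for illustration; the framework itself does not prescribe any of them.

```python
import math
from collections import Counter, defaultdict

# Hypothetical order lifecycle, used only to illustrate the framework.
# "By Rules": the present state may change only along these explicit edges.
ALLOWED = {
    "pending": {"paid", "cancelled"},
    "paid": {"shipped", "cancelled"},
    "shipped": {"delivered"},
    "delivered": set(),
    "cancelled": set(),
}


def apply_event(current: str, target: str) -> str:
    """The Present ("Very Now"): a deterministic, rule-checked state update."""
    if target not in ALLOWED[current]:
        raise ValueError(f"illegal transition {current} -> {target}")
    return target


def past_weights(ages: list[float], tau: float = 10.0) -> list[float]:
    """The Past ("The Deep Before"): trust in a record decays with its age.

    Exponential decay with time constant `tau` is an assumed heuristic.
    """
    return [math.exp(-age / tau) for age in ages]


def forecast(histories: list[list[str]], current: str) -> dict[str, float]:
    """The Future ("The Deep Beyond"): next-state probabilities estimated
    from observed transitions (a first-order Markov assumption)."""
    counts = defaultdict(Counter)
    for history in histories:
        for prev, nxt in zip(history, history[1:]):
            counts[prev][nxt] += 1
    total = sum(counts[current].values())
    if total == 0:
        return {}  # no evidence: the model makes no prediction
    return {state: n / total for state, n in counts[current].items()}
```

For example, `apply_event("pending", "paid")` returns `"paid"`, while `apply_event("delivered", "pending")` raises `ValueError`. Given the histories `[["pending", "paid", "shipped"], ["pending", "cancelled"], ["pending", "paid", "cancelled"]]`, `forecast(histories, "paid")` returns `{"shipped": 0.5, "cancelled": 0.5}`. The present is a single value changed only through a lookup table, so its behavior is fully predictable; the past and future functions return weights and probabilities rather than a single certain answer.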

Key Takeaway:

The core philosophy of the “Diamond Stateful” model is to secure and manage the present moment with strict, definitive rules (Stateful), while using probability-based models to navigate the growing uncertainty of both the distant past and the unknown future.
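One way to read this takeaway as code, again only a sketch under assumptions: the probabilistic side may only propose a next state, and a deterministic rule table decides whether that proposal becomes the present. The names `ALLOWED`, `propose_next`, and `commit`, the order states, and the example model output are hypothetical.

```python
import random

# Hypothetical rule table: the only transitions the present state may take.
ALLOWED = {
    "pending": {"paid", "cancelled"},
    "paid": {"shipped", "cancelled"},
    "shipped": {"delivered"},
    "delivered": set(),
    "cancelled": set(),
}


def propose_next(probabilities: dict[str, float], rng: random.Random) -> str:
    """Probabilistic side: sample a candidate next state from a model's output.

    `probabilities` stands in for any predictor (statistical or generative);
    the candidate it returns is not guaranteed to be valid.
    """
    states = list(probabilities)
    weights = [probabilities[s] for s in states]
    return rng.choices(states, weights=weights, k=1)[0]


def commit(current: str, candidate: str) -> str:
    """Deterministic side: a candidate becomes the present only if the rules allow it."""
    return candidate if candidate in ALLOWED[current] else current


if __name__ == "__main__":
    rng = random.Random(42)
    state = "paid"
    # Assumed model output; "delivered" would skip "shipped" and is illegal here.
    model_output = {"shipped": 0.6, "delivered": 0.3, "cancelled": 0.1}
    candidate = propose_next(model_output, rng)
    state = commit(state, candidate)
    print(candidate, "->", state)
```

Here `commit("paid", "delivered")` rejects the illegal jump and keeps the state at `"paid"`, while `commit("paid", "shipped")` returns `"shipped"`. Keeping the gate deterministic means a bad prediction can waste a step but cannot corrupt the current state.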

#StateManagement #SystemArchitecture #DeterministicVsProbabilistic #DataFramework #SystemDesign #TechConcepts #FutureOfData