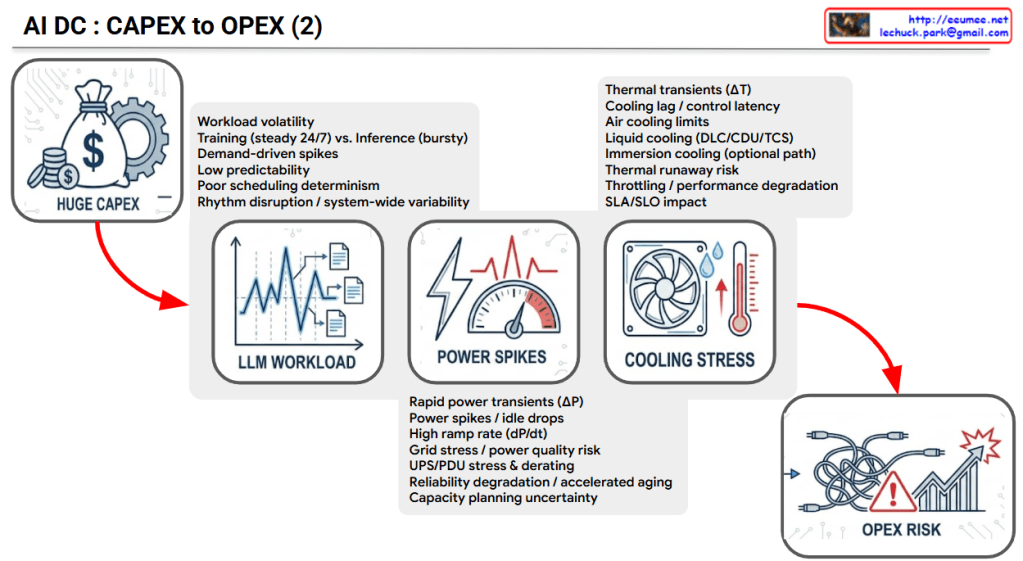

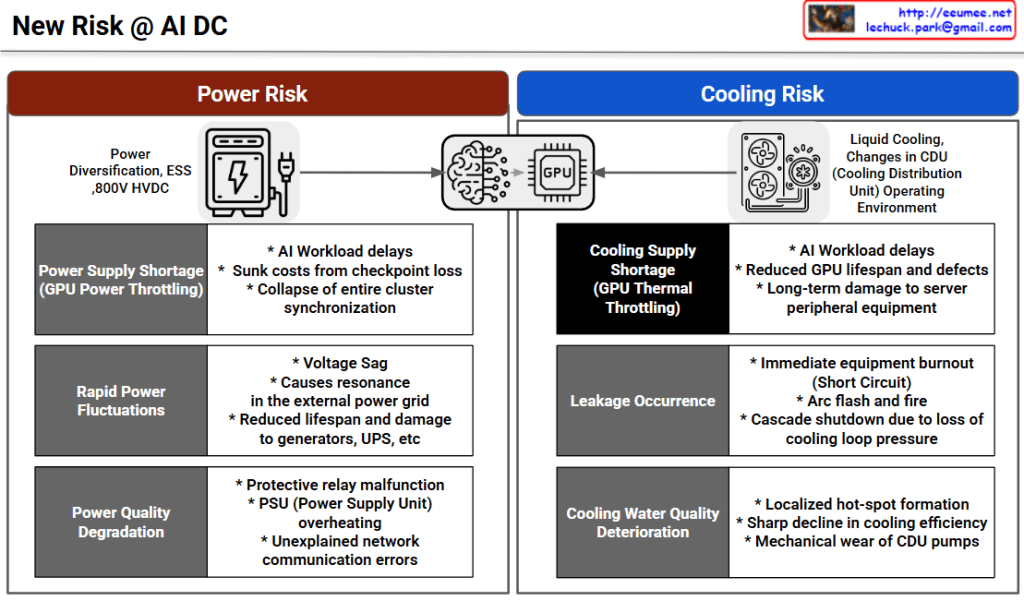

Overview: New Risks at AI Data Centers

The image outlines the infrastructure challenges faced by modern AI Data Centers (AI DC), specifically focusing on the high demands placed on hardware like GPUs. It divides these challenges into two primary categories: Power Risk and Cooling Risk.

The central graphic illustrates that the core AI processing units (Brains/GPUs) are entirely dependent on these two foundational elements.

⚡ Power Risk

This section highlights issues related to power supply and power infrastructure, such as power diversification, energy storage systems (ESS), and 800 V high-voltage DC (HVDC) distribution.

- Power Supply Shortage (GPU Power Throttling): When the facility cannot deliver enough power, GPUs reduce their clock speeds to stay within the available power budget.

- Impacts: Delays in AI workloads, financial losses due to lost data checkpoints, and the collapse of synchronization across the entire computing cluster.

- Rapid Power Fluctuations: Sudden spikes or drops in power, for example when many GPUs ramp their load up or down at the same moment.

- Impacts: Voltage sag, electrical resonance in external grids, and reduced lifespan or physical damage to backup power systems like generators and UPS (Uninterruptible Power Supplies).

- Power Quality Degradation: When the provided electricity is “noisy” or unstable.

- Impacts: Malfunctions in protective electrical relays, overheating of server Power Supply Units (PSUs), and unexplained network communication errors.
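To see why GPU power throttling can hurt an entire cluster and not just one server, here is a minimal sketch. It assumes a synchronous job in which every GPU must finish its share of a step before the next step begins, and it assumes compute time scales inversely with clock speed, which is a simplification. The function name `sync_step_time` and all numbers are hypothetical, chosen only for illustration.

```python
def sync_step_time(base_step_s, clock_fractions):
    """Return the step time (seconds) of a synchronous job.

    Every GPU has to finish before the next step starts, so the
    slowest (most throttled) GPU sets the pace for all of them.
    Assumption: compute time scales inversely with clock fraction.
    """
    if not clock_fractions:
        raise ValueError("need at least one GPU")
    slowest = min(clock_fractions)
    if slowest <= 0:
        raise ValueError("clock fractions must be positive")
    return base_step_s / slowest


# Hypothetical example: 8 GPUs, one throttled to 60% of its normal clock.
fractions = [1.0] * 7 + [0.6]
step = sync_step_time(1.0, fractions)
print(f"step time: {step:.2f} s, throughput: {1.0 / step:.0%} of normal")
```

Under these assumptions, a single throttled GPU out of eight pulls the whole job down to roughly 60% of its normal throughput, which is one way to read the "collapse of synchronization" impact listed above.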

❄️ Cooling Risk

This section focuses on the challenges of removing the massive heat generated by AI workloads, specifically looking at liquid cooling and changes in Coolant Distribution Unit (CDU) environments.

- Cooling Supply Shortage (GPU Thermal Throttling): When the cooling system cannot remove heat fast enough, GPUs reduce their clock speeds to stay within safe operating temperatures.

- Impacts: Delays in AI workloads, reduced lifespan and increased defects in GPUs, and long-term damage to surrounding server equipment.

- Leakage Occurrence: Physical leaks in the liquid cooling system.

- Impacts: Immediate equipment burnout (short circuits), risk of electrical arc flashes and fires, and cascading system shutdowns due to a loss of pressure in the cooling loop.

- Cooling Water Quality Deterioration: When the liquid used for cooling becomes contaminated or degrades.

- Impacts: Formation of localized “hot-spots” where cooling fails, a sharp decline in overall cooling efficiency, and mechanical wear and tear on the CDU pumps.
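A rough sense of what a "cooling supply shortage" means in a liquid loop comes from the basic heat balance Q = ṁ · c_p · ΔT: the heat removed equals coolant mass flow times specific heat times the coolant temperature rise. The sketch below applies that relation. It assumes pure water (real loops often use a water-glycol mix with different properties), and the 100 kW rack load and 10 K temperature rise are hypothetical values, not figures from the image.

```python
WATER_CP_J_PER_KG_K = 4186.0      # specific heat of water (approximate)
WATER_DENSITY_KG_PER_M3 = 997.0   # density of water near 25 °C (approximate)


def required_flow_lpm(heat_kw, delta_t_k):
    """Coolant flow (L/min) needed to carry heat_kw at a rise of delta_t_k."""
    if heat_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat must be >= 0 and delta_t must be > 0")
    mass_flow_kg_s = heat_kw * 1000.0 / (WATER_CP_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_PER_M3 * 1000.0 * 60.0


def coolant_temp_rise_k(heat_kw, flow_lpm):
    """Coolant temperature rise (K) for a given heat load and flow rate."""
    if flow_lpm <= 0:
        raise ValueError("flow must be > 0")
    mass_flow_kg_s = flow_lpm / 60.0 / 1000.0 * WATER_DENSITY_KG_PER_M3
    return heat_kw * 1000.0 / (WATER_CP_J_PER_KG_K * mass_flow_kg_s)


# Hypothetical rack: 100 kW of heat, 10 K allowed coolant temperature rise.
flow = required_flow_lpm(100.0, 10.0)
print(f"required flow: {flow:.0f} L/min")

# If a fault cuts the flow in half, the temperature rise doubles.
print(f"rise at half flow: {coolant_temp_rise_k(100.0, flow / 2):.1f} K")
```

The second calculation shows the mechanism behind thermal throttling in this simplified model: with the same heat load and half the flow, the coolant leaves twice as hot, so the GPUs have less thermal headroom.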

📝 Summary

- AI Data Centers face critical new infrastructure risks divided into two main categories: supplying massive amounts of power and managing extreme heat.

- Power-related risks (shortages, fluctuations, and poor quality) lead to severe workload delays, cluster synchronization failures, and damage to backup power systems such as generators and UPS.

- Cooling-related risks (insufficient cooling, leaks, and poor water quality) cause thermal throttling, severe hardware damage, and potentially catastrophic fires.

#AIDataCenter #DataCenterInfrastructure #GPUPower #LiquidCooling #DataCenterRisk #ThermalThrottling #TechInfrastructure

With Gemini