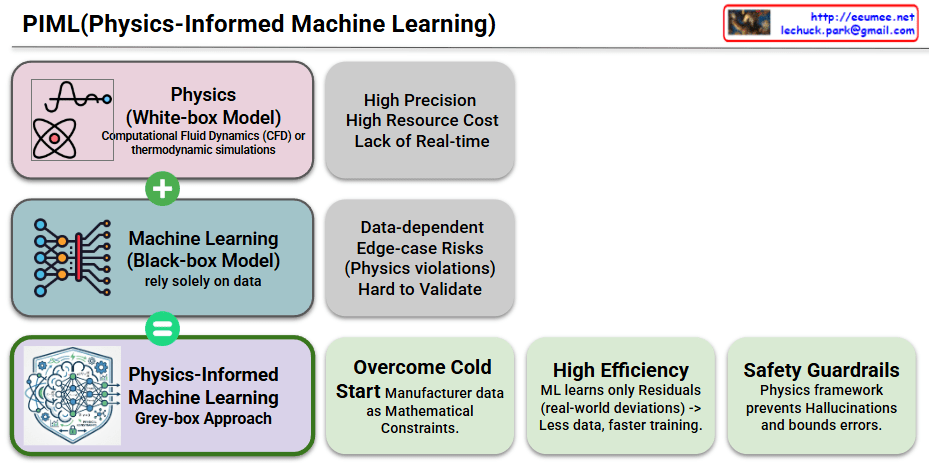

PIML (Physics-Informed Machine Learning) Explained

This diagram illustrates how PIML combines physics-based models with data-driven machine learning so that each offsets the other's weaknesses.

1. Top: Physics (White-box Model)

- Definition: These are models where the underlying principles are fully explained by mathematical equations, such as Computational Fluid Dynamics (CFD) or thermodynamic simulations.

- Characteristics:

  - High Precision: Because they are derived from fundamental physical laws, they are accurate wherever their modeling assumptions hold.

  - High Resource Cost: They are computationally intensive, requiring significant processing power and time.

  - Lack of Real-time Processing: Complex simulations usually run too slowly to be used for real-time prediction or control.
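
To make the precision/cost trade-off concrete, here is a minimal sketch of a white-box model: Newton's law of cooling integrated with explicit Euler steps. The cooling scenario, the constants (`T_ENV`, `T0`, `K`), and the function name `simulate_cooling` are illustrative assumptions, not something taken from the diagram.

```python
import math

# Assumed values for illustration: ambient temp, initial temp, cooling rate.
T_ENV, T0, K = 20.0, 90.0, 0.5

def simulate_cooling(t_end, n_steps):
    """Integrate dT/dt = -K * (T - T_ENV) with explicit Euler steps."""
    dt = t_end / n_steps
    temp = T0
    for _ in range(n_steps):
        temp += dt * (-K * (temp - T_ENV))
    return temp

# Closed-form solution at t = 4, used only to measure the simulation error.
exact = T_ENV + (T0 - T_ENV) * math.exp(-K * 4.0)

coarse_error = abs(simulate_cooling(4.0, 10) - exact)
fine_error = abs(simulate_cooling(4.0, 1000) - exact)
print(f"10 steps: error {coarse_error:.4f} | 1000 steps: error {fine_error:.4f}")
```

The finer run is more accurate but takes 100 times as many steps. A full CFD or thermodynamic simulation pays the same kind of cost across every cell of its mesh, which is why such models are hard to run in real time.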

2. Middle: Machine Learning (Black-box Model)

- Definition: These models rely solely on large amounts of training data to find correlations and make predictions, without using underlying physical principles.

- Characteristics:

  - Data-dependent: Their performance depends heavily on the quality and quantity of the data they are trained on.

  - Edge-case Risks: In situations not covered by the data (edge cases), they can make illogical predictions that violate physical laws.

  - Hard to Validate: It is difficult to understand their internal workings, making it challenging to verify the reliability of their results.
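
The edge-case risk can be sketched with a toy example. Below, a cubic polynomial stands in for a black-box model and is fitted to noise-free synthetic cooling data on the interval 0 to 4. The cooling scenario, the constants, and the choice of a polynomial are assumptions made for illustration only.

```python
import numpy as np

# Assumed values for illustration: ambient temp, initial temp, cooling rate.
T_ENV, T0, K = 20.0, 90.0, 0.5

def true_temperature(t):
    """Ground truth: an object cooling passively toward ambient temperature."""
    return T_ENV + (T0 - T_ENV) * np.exp(-K * t)

# The "black box" only ever sees data from t = 0 to t = 4.
t_train = np.linspace(0.0, 4.0, 50)
coeffs = np.polyfit(t_train, true_temperature(t_train), deg=3)

inside = np.polyval(coeffs, 2.0)    # within the training range
outside = np.polyval(coeffs, 10.0)  # far outside the training range

print(f"t=2:  predicted {inside:.2f}, true {true_temperature(2.0):.2f}")
print(f"t=10: predicted {outside:.2f}, true {true_temperature(10.0):.2f}")
```

Inside the training range the fit tracks the data closely. At t = 10 it predicts a temperature below ambient, which a passively cooling object can never reach. The model has no notion of that limit because nothing in its training data encodes it.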

3. Bottom: Physics-Informed Machine Learning (Grey-box Approach)

- Definition: This approach integrates the knowledge of physical laws (equations) into a machine learning model as mathematical constraints, combining the best of both worlds.

- Benefits:

  - Overcomes the Cold Start Problem: Because known physics enters the model as mathematical constraints, PIML can produce usable predictions even when training data is scarce, as in the initial (“Cold Start”) phase.

  - High Efficiency: Instead of learning physics from scratch, the ML model can learn only the residuals, that is, the deviations between the physics-based model's predictions and real-world measurements. This typically makes learning faster and requires less data.

  - Safety Guardrails: The integrated physics framework acts as a set of safety guardrails. Its constraints keep the model from making physically impossible predictions (“Hallucinations”) and help bound its errors. How strong that protection is depends on whether the constraints are strictly enforced or only penalized during training.
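
The residual-learning and guardrail ideas can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: the cooling scenario, the "real" system `true_system`, the quadratic feature basis, and the use of least squares as a stand-in for an ML model are all hypothetical choices. The guardrail here is a simple hard clip to the physically possible range, which is only one of several ways to impose a constraint.

```python
import numpy as np

# Assumed values for illustration: ambient temp, initial temp, nominal rate.
T_ENV, T0, K_NOMINAL = 20.0, 90.0, 0.5

def physics_model(t):
    """White-box baseline: Newton's law of cooling with a nominal rate."""
    return T_ENV + (T0 - T_ENV) * np.exp(-K_NOMINAL * t)

def true_system(t):
    """Hypothetical real system: cools slightly slower than the baseline."""
    return T_ENV + (T0 - T_ENV) * np.exp(-0.45 * t)

def features(t):
    """Small quadratic basis used to model the residual."""
    return np.column_stack([np.ones_like(t), t, t**2])

# Scarce data: only six measurements of the real system.
t_train = np.linspace(0.0, 4.0, 6)
residual = true_system(t_train) - physics_model(t_train)

# Stand-in for the ML model: least-squares fit of the residual only.
weights, *_ = np.linalg.lstsq(features(t_train), residual, rcond=None)

def grey_box_predict(t):
    """Physics baseline plus learned residual, clipped to the possible range."""
    raw = physics_model(t) + features(t) @ weights
    return np.clip(raw, T_ENV, T0)  # guardrail: never below ambient or above T0

t_test = np.linspace(0.0, 4.0, 41)
err_physics = np.max(np.abs(physics_model(t_test) - true_system(t_test)))
err_grey = np.max(np.abs(grey_box_predict(t_test) - true_system(t_test)))
far_prediction = grey_box_predict(np.array([50.0]))

print(f"max error, physics only: {err_physics:.3f}")
print(f"max error, grey box:     {err_grey:.3f}")
print(f"prediction at t=50:      {far_prediction[0]:.2f}")
```

With only six data points, the correction shrinks the error of the physics baseline, because the model has to learn a small deviation rather than the whole cooling curve. Far outside the training range the learned residual becomes unreliable, but the clip keeps the output within the physically possible range.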

#AI #PIML #MachineLearning #Physics #HybridAI #DataScience #ExplainableAI #XAI #ComputationalPhysics #Simulation

with Gemini