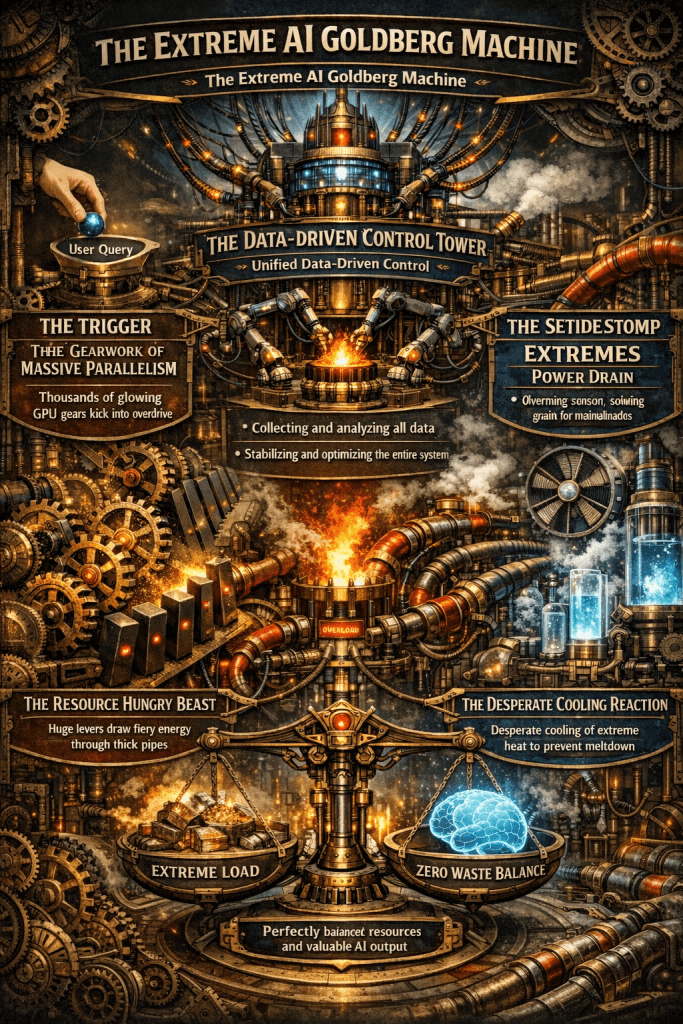

A single AI response triggers a massive chain reaction of compute, power, and cooling.

Only unified, data-driven control can stabilize this fragile system and eliminate waste.

The Computing for the Fair Human Life.

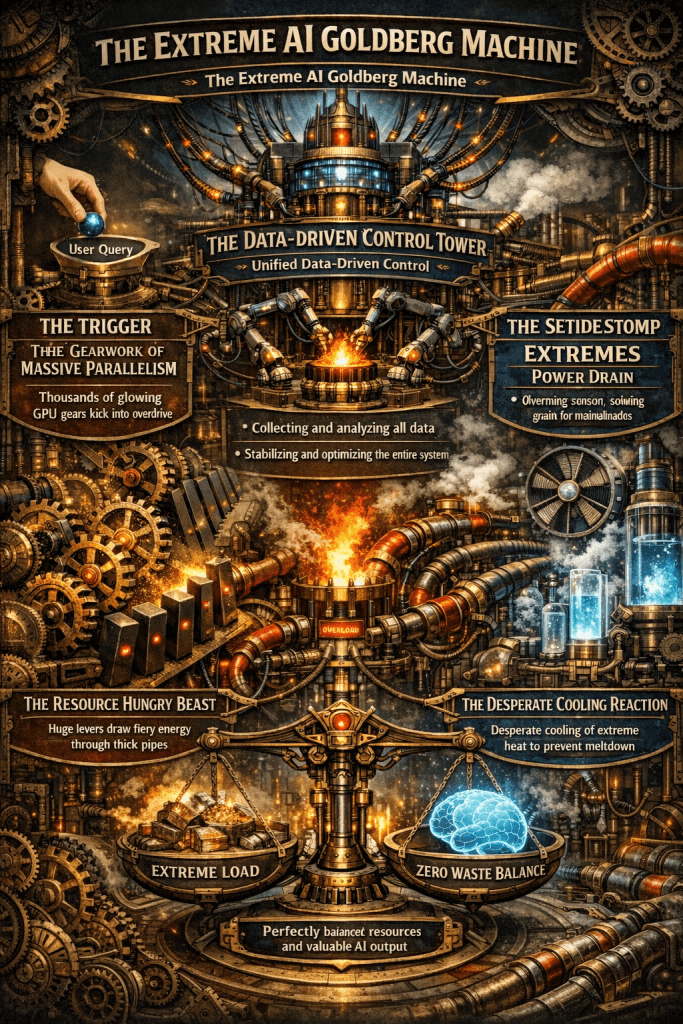

This infographic illustrates the radical shift in operational paradigms between Legacy Data Centers and AI Data Centers, highlighting the transition from “Human-Speed” steady-state management to “Machine-Speed” real-time automation.

| Category | Legacy DC | AI DC | Delta / Impact |
| --- | --- | --- | --- |
| Power Density | 5 ~ 15 kW / Rack | 40 ~ 120 kW / Rack | 8x ~ 10x Density |
| Thermal Ramp Rate | 0.5 ~ 2.0 °C / Min | 10 ~ 20 °C / Min | Extreme Heat Surge |
| Thermal Ride-through | 10 ~ 20 Minutes | 30 ~ 90 Seconds | ~90% Buffer Loss |
| Cooling UPS Backup | 20 ~ 30% (Partial) | 100% (Full Redundancy) | Mission-Critical Cooling |
| Telemetry Sampling Interval | 1 ~ 5 Minutes | < 1 Second (Real-time) | 60x+ Finer Resolution |
| Coolant Flow Rate | N/A (Air-cooled) | 60 ~ 150 LPM (Liquid) | Liquid-to-Chip Essential |
| Automated Failsafe Response Time | 5 ~ 10 Minutes | 5 ~ 10 Seconds | Ultra-fast Shutdown |

With rack densities reaching 120 kW, air cooling is no longer viable. The shift to Liquid-to-Chip cooling with flow rates up to 150 LPM is mandatory to manage the 10–20°C per minute thermal ramp rates.
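The 150 LPM figure can be sanity-checked with the steady-state heat balance Q = ṁ·c_p·ΔT. The sketch below assumes a water-like coolant (density about 1.0 kg/L, c_p about 4186 J/(kg·K)); real loops often use glycol mixes with a lower c_p, so treat the numbers as illustrative. The function names are hypothetical.

```python
# Assumed coolant properties (water-like); glycol mixes have a lower cp.
DENSITY_KG_PER_L = 1.0
CP_J_PER_KG_K = 4186.0


def coolant_delta_t(heat_kw: float, flow_lpm: float,
                    density: float = DENSITY_KG_PER_L,
                    cp: float = CP_J_PER_KG_K) -> float:
    """Coolant temperature rise (K) needed to remove heat_kw at flow_lpm.

    Steady-state heat balance: Q = m_dot * cp * dT.
    """
    mass_flow_kg_s = flow_lpm * density / 60.0  # L/min -> kg/s
    return heat_kw * 1000.0 / (mass_flow_kg_s * cp)


def required_flow_lpm(heat_kw: float, delta_t_k: float,
                      density: float = DENSITY_KG_PER_L,
                      cp: float = CP_J_PER_KG_K) -> float:
    """Flow (L/min) needed to remove heat_kw with a coolant rise of delta_t_k."""
    mass_flow_kg_s = heat_kw * 1000.0 / (cp * delta_t_k)
    return mass_flow_kg_s / density * 60.0


if __name__ == "__main__":
    for flow in (60, 150):
        print(f"120 kW at {flow} LPM -> coolant dT = {coolant_delta_t(120, flow):.1f} K")
```

Under these assumptions, a 120 kW rack at 60 LPM implies a coolant rise of roughly 29 K, while 150 LPM brings it down to roughly 11.5 K, which suggests why the upper end of the flow range matters at the highest densities.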

In a Legacy DC, operators have a ride-through window of 10–20 minutes (the “Golden Hour”) to respond to cooling failures. In an AI DC, this buffer collapses to 30–90 seconds, making sub-second telemetry and automated failsafe protocols the only way to prevent hardware damage.
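The collapse of the buffer follows from simple arithmetic: ride-through time is thermal margin divided by ramp rate, and the worst-case unobserved rise between telemetry samples is ramp rate times sampling interval. The sketch below assumes a hypothetical 15 °C margin between operating temperature and the damage threshold; the function names and thresholds are illustrative, not taken from any specific monitoring product.

```python
def ride_through_seconds(margin_c: float, ramp_c_per_min: float) -> float:
    """Seconds until a thermal margin is consumed at a constant ramp rate."""
    return margin_c / ramp_c_per_min * 60.0


def unobserved_rise_c(ramp_c_per_min: float, sample_interval_s: float) -> float:
    """Worst-case temperature rise that can occur between two telemetry samples."""
    return ramp_c_per_min * sample_interval_s / 60.0


def must_act_now(current_c: float, limit_c: float,
                 ramp_c_per_min: float, action_latency_s: float) -> bool:
    """True if waiting one action latency would reach or exceed the limit."""
    projected_c = current_c + ramp_c_per_min * action_latency_s / 60.0
    return projected_c >= limit_c


if __name__ == "__main__":
    MARGIN_C = 15.0  # hypothetical headroom to the damage threshold
    print("legacy ride-through:", ride_through_seconds(MARGIN_C, 1.0), "s")
    print("AI ride-through:", ride_through_seconds(MARGIN_C, 20.0), "s")
    print("rise missed by 5-min polling:", unobserved_rise_c(20.0, 300), "C")
    print("rise missed by 1-s sampling:", round(unobserved_rise_c(20.0, 1), 2), "C")
```

With a 15 °C margin, a 1 °C/min legacy ramp leaves 15 minutes while a 20 °C/min AI ramp leaves 45 seconds; at that ramp, 5-minute polling could miss a 100 °C rise entirely, whereas 1-second sampling bounds the blind spot to about a third of a degree.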

#AIDataCenter #AIOps #LiquidCooling #InfrastructureOptimization #DataCenterDesign #HighDensityComputing #ThermalManagement #DigitalTransformation

With Gemini

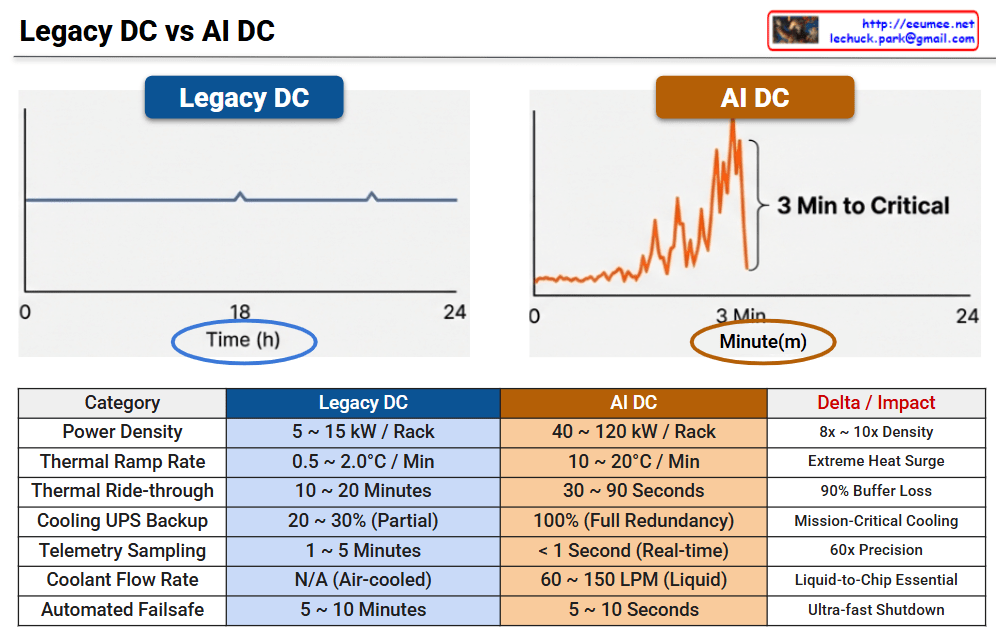

The image illustrates a logical framework titled “Labeling for AI World,” which maps how human cognitive processes are digitized and utilized to train Large Language Models (LLMs). It emphasizes the transition from natural human perception to optimized AI integration.

This track represents the traditional human experience:

This track represents the technical pipeline for AI development:

The most critical element of the diagram is the central blue box, which acts as a bridge between human logic and machine processing:

The diagram demonstrates that Data Labeling, guided by Corpus and Ontology, is the essential mechanism that translates human cognition into the digital realm. It ensures that LLMs are not just processing raw numbers, but are optimized to understand the world through a human-centric logical framework.
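To make the idea concrete, here is a toy sketch of ontology-guided labeling: a hypothetical is-a hierarchy constrains which concepts an annotator may attach to a span of corpus text, so every label is machine-readable and anchored to a human-defined concept. The ontology, the `Label` type, and the helper names are illustrative assumptions, not part of the diagram.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical toy ontology: concept -> parent concept ("is-a"); None marks the root.
ONTOLOGY: dict[str, Optional[str]] = {
    "Entity": None,
    "Person": "Entity",
    "Organization": "Entity",
    "Location": "Entity",
    "City": "Location",
}


@dataclass(frozen=True)
class Label:
    text: str
    start: int
    end: int
    concept: str


def ancestors(concept: str, ontology: dict[str, Optional[str]] = ONTOLOGY) -> list[str]:
    """Walk the is-a chain from a concept up to the root."""
    chain: list[str] = []
    current: Optional[str] = concept
    while current is not None:
        if current not in ontology:
            raise KeyError(f"unknown concept: {current}")
        chain.append(current)
        current = ontology[current]
    return chain


def label_span(sentence: str, surface: str, concept: str,
               ontology: dict[str, Optional[str]] = ONTOLOGY) -> Label:
    """Attach an ontology concept to the first occurrence of `surface` in a sentence."""
    if concept not in ontology:
        raise ValueError(f"{concept!r} is not defined in the ontology")
    start = sentence.find(surface)
    if start < 0:
        raise ValueError(f"{surface!r} does not occur in the sentence")
    return Label(surface, start, start + len(surface), concept)


if __name__ == "__main__":
    corpus = ["Seoul is a city in Korea."]
    label = label_span(corpus[0], "Seoul", "City")
    print(label, ancestors(label.concept))
```

Rejecting concepts outside the ontology is the design point: it keeps annotators from inventing ad hoc labels, so the labeled corpus stays consistent with one shared model of the world.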

#AI #DataLabeling #LLM #Ontology #Corpus #CognitiveComputing #AIOptimization #DigitalTransformation

With Gemini

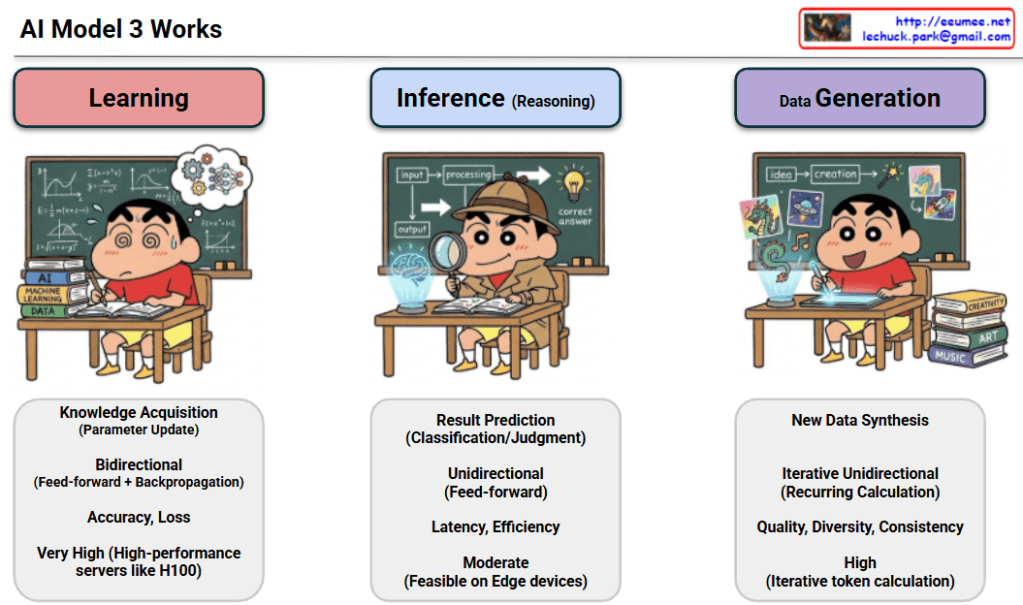

The provided image illustrates the three core stages of how AI models operate: Learning, Inference, and Data Generation.
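As a minimal illustration of the three stages, the sketch below uses a toy bigram language model as a stand-in for a real neural network: learning counts word transitions in a corpus, inference turns those counts into a next-word probability distribution, and data generation samples from that distribution one word at a time. Real LLMs learn these distributions with deep networks over subword tokens; only the three-stage shape carries over.

```python
import random
from collections import Counter, defaultdict

BOS, EOS = "<s>", "</s>"


def learn(corpus: list[str]) -> dict[str, Counter]:
    """Learning: count next-word transitions observed in the training corpus."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        tokens = [BOS] + sentence.split() + [EOS]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return dict(counts)


def infer(model: dict[str, Counter], prev: str) -> dict[str, float]:
    """Inference: turn counts into a probability distribution over the next word."""
    followers = model.get(prev)
    if not followers:
        return {}
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()}


def generate(model: dict[str, Counter], rng: random.Random, max_len: int = 20) -> list[str]:
    """Data generation: sample one word at a time until end-of-sentence or max_len."""
    out: list[str] = []
    prev = BOS
    for _ in range(max_len):
        dist = infer(model, prev)
        if not dist:
            break
        words, probs = zip(*dist.items())
        prev = rng.choices(words, weights=probs, k=1)[0]
        if prev == EOS:
            break
        out.append(prev)
    return out


if __name__ == "__main__":
    model = learn(["the cat sat", "the dog sat"])
    print(infer(model, "the"))
    print(generate(model, random.Random(0)))
```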

#AI #MachineLearning #GenerativeAI #DeepLearning #TechExplained #AIModel #Inference #DataScience #Learning #DataGeneration

With Gemini

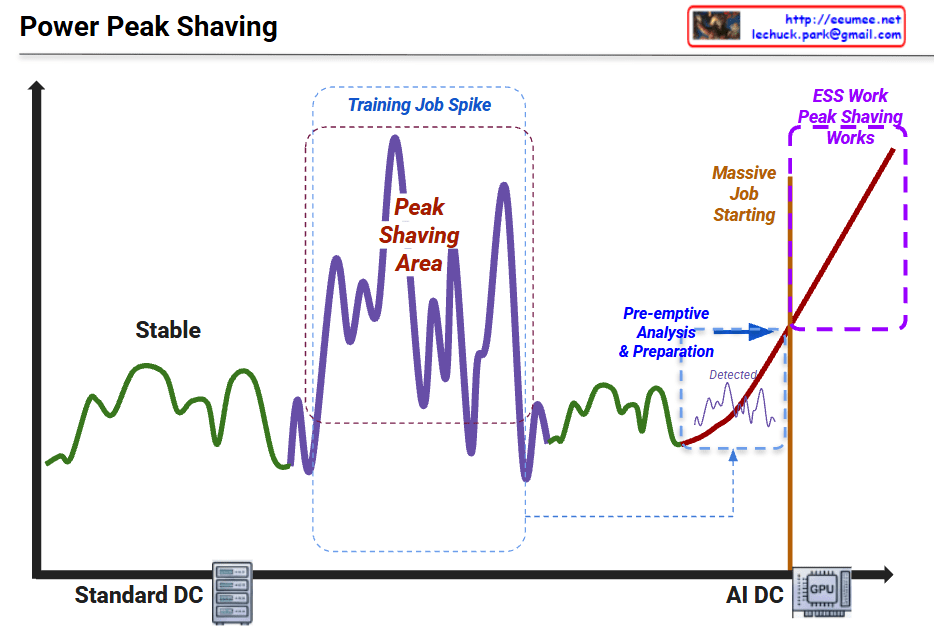

This graph illustrates the shift in power consumption patterns from traditional data centers to AI-driven data centers and the necessity of “Peak Shaving” strategies.

1. Standard DC (Green Line – Left)

2. Training Job Spike (Purple Line – Middle)

3. AI DC & Massive Job Starting (Red Line – Right)

4. ESS Work & Peak Shaving (Purple Dotted Box – Top Right)
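Peak shaving can be sketched as a simple threshold rule: when facility load exceeds a grid limit, the ESS discharges to cover the excess; when load falls below it, the ESS recharges using only the remaining headroom. The sketch assumes a hypothetical `Battery` model and ignores round-trip losses and load forecasting, which production ESS dispatch would include.

```python
from dataclasses import dataclass


@dataclass
class Battery:
    """Hypothetical ESS model (losses and degradation ignored)."""
    capacity_kwh: float
    max_power_kw: float
    soc_kwh: float  # current stored energy


def peak_shave(load_kw: list[float], grid_limit_kw: float,
               battery: Battery, step_h: float = 1 / 60) -> list[float]:
    """Return the grid draw for each time step after ESS peak shaving.

    Above the limit the battery discharges to cover the excess; below it,
    the battery recharges using only the headroom under the limit.
    """
    grid_kw: list[float] = []
    for load in load_kw:
        if load > grid_limit_kw:
            # Discharge, bounded by excess load, inverter power, and stored energy.
            discharge = min(load - grid_limit_kw, battery.max_power_kw,
                            battery.soc_kwh / step_h)
            battery.soc_kwh -= discharge * step_h
            grid_kw.append(load - discharge)
        else:
            # Recharge, bounded by headroom, inverter power, and free capacity.
            charge = min(grid_limit_kw - load, battery.max_power_kw,
                         (battery.capacity_kwh - battery.soc_kwh) / step_h)
            battery.soc_kwh += charge * step_h
            grid_kw.append(load + charge)
    return grid_kw


if __name__ == "__main__":
    ess = Battery(capacity_kwh=100.0, max_power_kw=50.0, soc_kwh=100.0)
    load = [80.0, 120.0, 130.0, 60.0]  # hypothetical hourly load, kW
    print(peak_shave(load, grid_limit_kw=100.0, battery=ess, step_h=1.0))
```

The battery only shaves peaks while it holds energy: a sustained training spike that outlasts the stored capacity pushes grid draw back above the limit, so ESS sizing has to match the expected length of AI job surges.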

#DataCenter #AI #PeakShaving #EnergyStorage #ESS #GPU #PowerManagement #SmartGrid #TechInfrastructure #AIDC #EnergyEfficiency

With Gemini

This diagram illustrates how power and cooling demand change across the execution stages of an AI workload. It divides the process into five phases, explaining how data center infrastructure (power and cooling) responds from the start to the completion of an AI job.

Here are the key details for each phase:

This diagram demonstrates that managing AI infrastructure goes beyond simply “running a job.” It requires active control of the infrastructure (e.g., PreCooling, Throttling, Ramp-down) to handle the specific characteristics of AI workloads, such as rapid power spikes and high heat generation.

Phase 1 (PreCooling) for proactive heat management and Phase 4 (Throttling) for hardware protection are the core mechanisms determining the stability and efficiency of an AI Data Center.
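The two core mechanisms can be sketched as simple control rules. Below, PreCooling ramps the coolant supply setpoint down during a lead window before a scheduled job starts, and Throttling scales a GPU power cap linearly between a soft and a hard temperature limit, in the spirit of TDP-based power capping and DVFS. All thresholds, setpoints, and function names are hypothetical; a real controller would apply the cap through the GPU vendor's power-management interface and act on measured temperatures.

```python
def precool_setpoint_c(normal_setpoint_c: float, seconds_to_job_start: float,
                       lead_time_s: float = 120.0, max_offset_c: float = 3.0) -> float:
    """PreCooling: ramp the coolant supply setpoint down before a job starts."""
    if seconds_to_job_start >= lead_time_s:
        return normal_setpoint_c
    progress = 1.0 - max(seconds_to_job_start, 0.0) / lead_time_s
    return normal_setpoint_c - max_offset_c * progress


def throttled_power_cap_w(temp_c: float, tdp_w: float,
                          soft_limit_c: float = 80.0, hard_limit_c: float = 90.0,
                          min_fraction: float = 0.5) -> float:
    """Throttling: scale the power cap down linearly between soft and hard limits."""
    if temp_c <= soft_limit_c:
        return tdp_w
    if temp_c >= hard_limit_c:
        return tdp_w * min_fraction
    frac = (temp_c - soft_limit_c) / (hard_limit_c - soft_limit_c)
    return tdp_w * (1.0 - frac * (1.0 - min_fraction))


if __name__ == "__main__":
    for t in (300, 120, 60, 0):
        print(f"T-{t}s setpoint: {precool_setpoint_c(30.0, t):.1f} C")
    for temp in (75.0, 85.0, 95.0):
        print(f"{temp} C -> cap {throttled_power_cap_w(temp, 700.0):.0f} W")
```

A linear ramp between two limits avoids the abrupt on/off behavior of a single threshold, which would otherwise cause the power cap (and the facility load) to oscillate around the limit.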

#AI #ArtificialIntelligence #GPU #HPC #DataCenter #AIInfrastructure #DataCenterOps #GreenIT #SustainableTech #SmartCooling #PowerEfficiency #PowerManagement #ThermalEngineering #TDP #DVFS #Semiconductor #SystemArchitecture #ITOperations

With Gemini

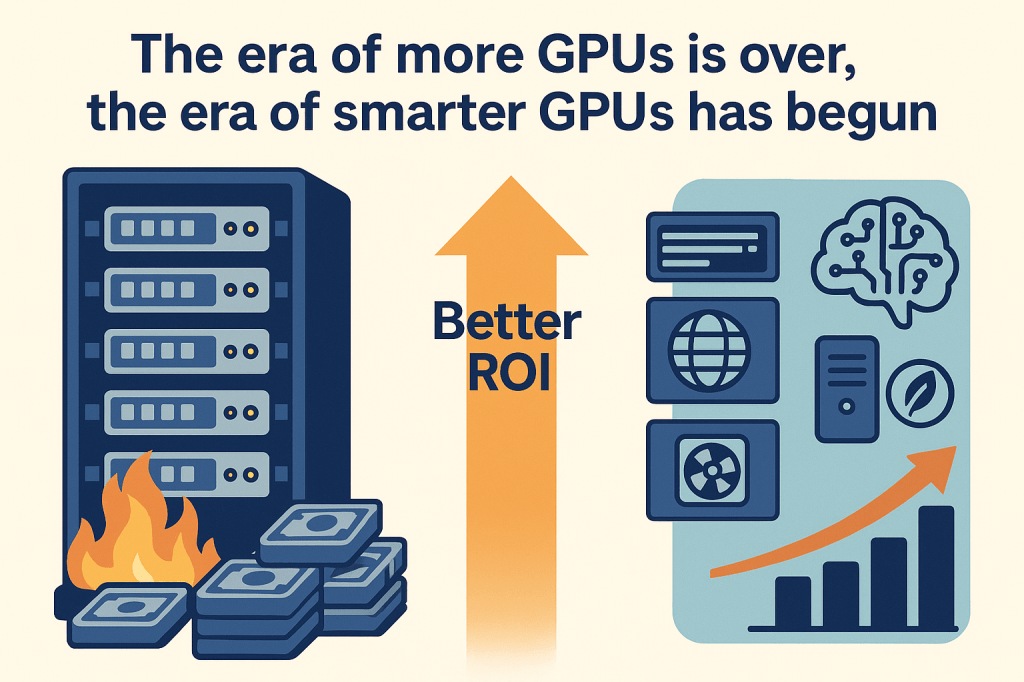

This illustration contrasts an old approach of endlessly adding more GPU servers, burning money for little gain, with a new era where AI-driven optimization of software, network, cooling and power delivers smarter GPUs and a much better ROI.