AI Workload Cooling Systems: Bidirectional Physical-Software Optimization

This image summarizes four recent studies on the bidirectional optimization relationship between LLM workloads and cooling systems. Together they suggest that physical cooling infrastructure and software workloads are closely coupled and are best optimized jointly.

🔄 Core Concept of Bidirectional Optimization

Direction 1: Physical Cooling → AI Performance Impact

- Cooling methods directly affect LLM/VLM throughput and stability

Direction 2: AI Software → Cooling Control

- LLMs themselves act as intelligent controllers for cooling systems

📊 Research Analysis

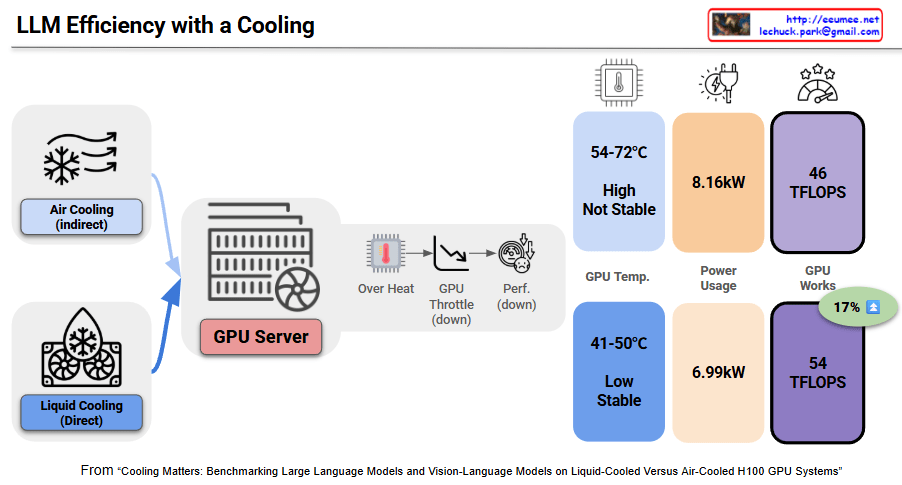

1. Physical Cooling Impact on AI Performance (2025 arXiv)

[Cooling HW → AI SW Performance]

- Experiment: Liquid vs Air cooling comparison on H100 nodes

- Physical Differences:

  - GPU Temperature: Liquid 41-50°C vs Air 54-72°C (up to 22°C difference)

  - GPU Power Consumption: 148-173 W reduction

  - Node Power: ~1 kW savings

- Software Performance Impact:

  - Throughput: 54 (liquid) vs 46 (air) TFLOPs/GPU (+17% improvement)

  - Sustained and predictable performance through reduced throttling

  - Improved performance/watt (perf/W) ratio

→ Better physical cooling directly raises the sustained throughput of AI workloads
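The reported figures can be sanity-checked with a few lines of arithmetic. The sketch below recomputes the throughput gain from the 54 vs 46 TFLOPs/GPU numbers and the gap between the upper ends of the two temperature ranges. The perf/W part rests on two assumptions that are not from the study: a hypothetical air-cooled GPU draw (`air_gpu_watts`), and reading the reported power reduction as a per-GPU figure.

```python
def relative_gain(new: float, old: float) -> float:
    """Fractional improvement of `new` over `old`."""
    return (new - old) / old


# Figures quoted in the summary above (liquid vs air).
liquid_tflops, air_tflops = 54.0, 46.0
liquid_temp_max, air_temp_max = 50.0, 72.0

throughput_gain = relative_gain(liquid_tflops, air_tflops)
peak_temp_gap = air_temp_max - liquid_temp_max


def perf_per_watt_gain(air_gpu_watts: float, watts_saved: float) -> float:
    """perf/W gain under an ASSUMED air-cooled GPU power draw."""
    air_ppw = air_tflops / air_gpu_watts
    liquid_ppw = liquid_tflops / (air_gpu_watts - watts_saved)
    return relative_gain(liquid_ppw, air_ppw)


print(f"throughput gain: {throughput_gain:.1%}")
print(f"peak temperature gap: {peak_temp_gap:.0f} °C")
# 650 W is a hypothetical draw; 148 W is the low end of the reported reduction.
print(f"perf/W gain (assumed 650 W): {perf_per_watt_gain(650.0, 148.0):.1%}")
```

Because throughput rises while power falls, the perf/W gain is larger than the throughput gain alone for any plausible baseline draw; the exact value depends entirely on the assumed baseline.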

2. AI Controls Cooling Systems (2025 arXiv)

[AI SW → Cooling HW Control]

- Method: Offline Reinforcement Learning (RL) for automated data center cooling control

- Results: 14-21% cooling energy reduction over a 2,000-hour real-world deployment

- Bidirectional Effects:

  - AI algorithms optimally control physical cooling equipment (CRAC, pumps, etc.)

  - Saved energy → capacity to run more LLM jobs

  - More power headroom for expanding AI computation

→ AI software intelligently controls physical cooling to improve overall system efficiency
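To make "offline RL" concrete: the controller is learned purely from logged operating data, without experimenting on the live plant. This summary does not describe the study's own algorithm, so the sketch below swaps in plain tabular batch Q-iteration on an invented log. The states, actions, and rewards are all hypothetical, and each state-action pair is assumed to appear exactly once with a deterministic outcome.

```python
from collections import defaultdict

# Hypothetical logged transitions: (state, action, reward, next_state).
# States are coarse temperature bands; actions are cooling levels.
# Reward = -(energy cost) - (penalty of 10 while the room is hot). Values invented.
LOG = [
    ("cool", "low", -1.0, "ok"),
    ("cool", "high", -3.0, "cool"),
    ("ok", "low", -1.0, "hot"),
    ("ok", "high", -3.0, "cool"),
    ("hot", "low", -11.0, "hot"),
    ("hot", "high", -13.0, "ok"),
]
ACTIONS = ("low", "high")


def fitted_q(log, gamma=0.9, sweeps=200):
    """Batch Q-iteration: apply Bellman backups using logged data only."""
    q = defaultdict(float)
    for _ in range(sweeps):
        new_q = defaultdict(float)
        for s, a, r, s2 in log:
            # Each (s, a) occurs once in LOG, so plain assignment is enough.
            new_q[(s, a)] = r + gamma * max(q[(s2, b)] for b in ACTIONS)
        q = new_q
    return q


def greedy_policy(q, states):
    """Pick the highest-valued action in each state."""
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in states}


q = fitted_q(LOG)
policy = greedy_policy(q, ("cool", "ok", "hot"))
print(policy)
```

On this toy log the learned policy saves energy when the room is cool and spends it before the room overheats. Practical offline RL methods add safeguards for actions the log never covers, which this sketch omits.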

3. LLM as Cooling Controller (2025 OpenReview)

[AI SW ↔ Cooling HW Interaction]

- Innovative Approach: Using LLMs as interpretable controllers for liquid cooling systems

- Simulation Results:

  - Temperature Stability: +10-18% improvement vs RL

  - Energy Efficiency: +12-14% improvement

- Bidirectional Interaction Significance:

  - LLMs interpret real-time physical sensor data (temperature, flow rate, etc.)

  - Multi-objective trade-off optimization between cooling requirements and energy saving

  - Interpretability: LLM decision-making process is human-understandable

  - Result: Reduced throttling/interruptions → improved AI workload stability

→ A complete closed loop: AI controls the physical system, and the results feed back into AI performance
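One way to picture an LLM acting as an interpretable controller is a loop that turns sensor readings into a prompt, asks the model for an action plus a stated reason, and validates the reply before it reaches the actuator. The sketch below is an assumption-laden illustration, not the study's design: the prompt format, the `pump_speed_pct` field, and `stub_llm` (a canned stand-in for a real model call) are all hypothetical.

```python
import json


def build_prompt(sensors: dict) -> str:
    """Turn sensor readings into a text prompt (hypothetical format)."""
    readings = ", ".join(f"{k}={v}" for k, v in sorted(sensors.items()))
    return (
        "You control a liquid cooling loop. Readings: " + readings + ". "
        'Reply with JSON: {"pump_speed_pct": <0-100>, "reason": "<text>"}'
    )


def parse_action(reply: str, fallback_pct: float = 100.0):
    """Parse the model reply; fall back to full cooling if it is unusable."""
    try:
        data = json.loads(reply)
        pct = float(data["pump_speed_pct"])
        reason = str(data.get("reason", ""))
    except (ValueError, KeyError, TypeError):
        return fallback_pct, "fallback: unparseable reply"
    return min(100.0, max(0.0, pct)), reason  # clamp to the actuator range


def stub_llm(prompt: str) -> str:
    """Stand-in for a real model call; returns a canned reply."""
    return '{"pump_speed_pct": 135, "reason": "coolant outlet temperature rising"}'


def control_step(sensors: dict, llm=stub_llm):
    """One control iteration: sense, ask the model, validate the action."""
    return parse_action(llm(build_prompt(sensors)))


speed, reason = control_step({"outlet_temp_c": 48.5, "flow_lpm": 11.2})
print(speed, "-", reason)
```

The `reason` field is what makes the decision inspectable by an operator, and the clamp plus fail-safe fallback keep a malformed or out-of-range reply from driving the hardware directly.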

4. Physical Cooling Innovation Enables AI Training (E-Energy’25 PolyU)

[Cooling HW → AI SW Training Stability]

- Method: Immersion cooling applied to LLM training

- Physical Benefits:

  - Dramatically reduced fan/CRAC overhead

  - Lower PUE (Power Usage Effectiveness) achieved

  - Uniform and stable heat removal

- Impact on AI Training:

  - Enables stable long-duration training (eliminates thermal spikes)

  - Quantitative power-delay trade-off optimization per workload

  - Continuous training environment without interruptions

→ Advanced physical cooling technology makes large-scale LLM training more feasible
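PUE is the ratio of total facility power to IT equipment power, so cutting fan and CRAC overhead pulls it toward 1.0. The sketch below shows the calculation; the kW figures are hypothetical values chosen only to illustrate the formula and are not measurements from the study.

```python
def pue(it_kw: float, cooling_kw: float, other_kw: float = 0.0) -> float:
    """PUE = total facility power / IT equipment power (always >= 1)."""
    if it_kw <= 0:
        raise ValueError("IT power must be positive")
    return (it_kw + cooling_kw + other_kw) / it_kw


# Hypothetical loads in kW, chosen only to illustrate the formula.
air_cooled = pue(it_kw=1000.0, cooling_kw=400.0, other_kw=100.0)
immersion = pue(it_kw=1000.0, cooling_kw=50.0, other_kw=100.0)
print(f"air-cooled PUE: {air_cooled:.2f}, immersion PUE: {immersion:.2f}")
```

With these made-up inputs the cooling overhead alone moves PUE from 1.50 to 1.15, which is the kind of shift the "Lower PUE" bullet refers to.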

🔁 Physical-Software Interdependency Map

┌───────────────────────────────────────────────────────────┐
│                 Physical Cooling Systems                  │
│    (Liquid cooling, Immersion, CRAC, Heat exchangers)     │
└──────────────┬────────────────────────┬───────────────────┘
               ↓                        ↑
    Temp↓ Power↓ Stability↑     AI-based Control
               ↓               RL/LLM Controllers
┌──────────────┴────────────────────────┴───────────────────┐
│                  AI Workloads (LLM/VLM)                   │
│ Performance↑ Throughput↑ Throttling↓ Training Stability↑  │
└───────────────────────────────────────────────────────────┘

💡 Key Insights: Bidirectional Optimization Synergy

1. Bottom-Up Influence (Physical → Software)

- Better cooling → maintains higher clock speeds/throughput

- Temperature stability → predictable performance, no training interruptions

- Power efficiency → enables simultaneous operation of more GPUs
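The last point is simple budget arithmetic: under a fixed power envelope, every watt saved per node leaves room for more nodes. The sketch below uses the ~1 kW node-level saving quoted earlier; the 90 kW budget and the 10 kW air-cooled node draw are hypothetical values picked for illustration.

```python
def nodes_within_budget(budget_kw: float, node_kw: float) -> int:
    """Number of whole nodes that fit under a fixed power budget."""
    return int(budget_kw // node_kw)


BUDGET_KW = 90.0    # hypothetical power envelope for a row of racks
AIR_NODE_KW = 10.0  # hypothetical per-node draw under air cooling
SAVINGS_KW = 1.0    # the ~1 kW node-level saving quoted earlier

air_nodes = nodes_within_budget(BUDGET_KW, AIR_NODE_KW)
liquid_nodes = nodes_within_budget(BUDGET_KW, AIR_NODE_KW - SAVINGS_KW)
print(f"air: {air_nodes} nodes, liquid: {liquid_nodes} nodes")
```

Under these assumptions the same envelope hosts 10 nodes instead of 9, before counting any reduction in facility-level cooling power.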

2. Top-Down Influence (Software → Physical)

- AI algorithms provide real-time optimal control of cooling equipment

- LLM’s interpretable decision-making ensures operational transparency

- Adaptive cooling strategies based on workload characteristics

3. Virtuous Cycle Effect

Better cooling → AI performance improvement → smarter cooling control

→ Energy savings → more AI jobs → advanced cooling optimization

→ Sustainable large-scale AI infrastructure

🎯 Practical Implications

These studies demonstrate:

- Cooling is no longer passive infrastructure: It’s an active determinant of AI performance

- AI optimizes its own environment: Meta-level self-optimizing systems

- Hardware-software co-design is essential: Isolated optimization is suboptimal

- Sustainability and performance can be achieved together: Synergy, not trade-off

📝 Summary

Taken together, these four studies suggest that next-generation AI data centers will need to operate as integrated systems in which physical cooling and software workloads interact in real time. The relationship runs both ways: better cooling enables higher and more stable AI performance, and AI algorithms in turn control cooling more efficiently. The resulting virtuous cycle improves performance, energy efficiency, and sustainable scalability for large-scale AI infrastructure.

#EnergyEfficiency #GreenAI #SustainableAI #DataCenterOptimization #ReinforcementLearning #AIControl #SmartCooling

With Claude