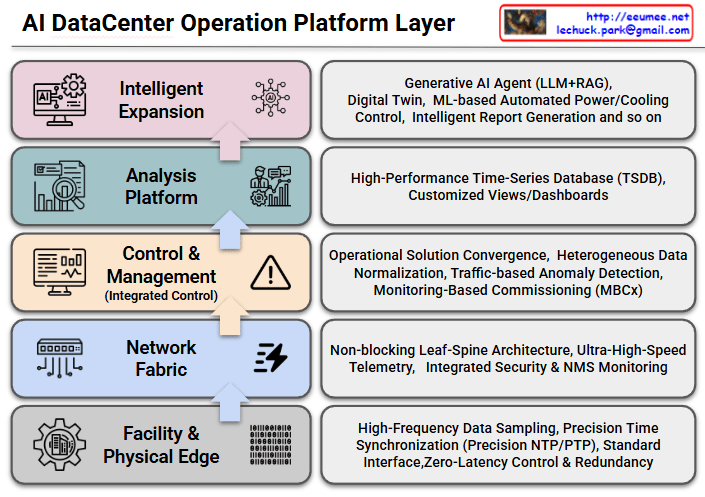

The image illustrates the architecture of an AI DataCenter Operation Platform in five layers, from the physical foundation at the bottom up to the artificial intelligence application layer at the top.

The upward-pointing arrows show raw data flowing from the infrastructure up through each layer, where it is progressively refined until the AI layer at the top can act on it.

Here is the breakdown of the core roles and components of each layer:

- Layer 1: Facility & Physical Edge

- Role: The foundational layer responsible for collecting data and controlling the physical infrastructure equipment of the data center, such as power and cooling systems.

- Key Elements: High-Frequency Data Sampling, Precision Time Synchronization (NTP/PTP), Standard Interfaces, and Zero-Latency Control & Redundancy. This layer focuses on sampling data and issuing control commands to hardware with minimal delay, accurate timestamps, and redundant control paths.

- Layer 2: Network Fabric

- Role: The nervous system of the data center. It reliably and rapidly transmits the massive amounts of collected data to the upper platforms without bottlenecks.

- Key Elements: Non-blocking Leaf-Spine Architecture, Ultra-High-Speed Telemetry, and Integrated Security & NMS (Network Management System) Monitoring. These elements work together to efficiently handle large-scale traffic.

- Layer 3: Control & Management (Integrated Control)

- Role: The layer that integrates and normalizes heterogeneous data streaming in from various facilities and solutions to execute practical operations and management.

- Key Elements: Operational Solution Convergence, Heterogeneous Data Normalization, Traffic-based Anomaly Detection, and Monitoring-Based Commissioning (MBCx). It acts as a critical gateway to identify infrastructure issues early and improve overall operational efficiency.

- Layer 4: Analysis Platform

- Role: The stage where refined data is stored, analyzed, and visualized, allowing administrators to intuitively grasp the system’s status at a glance.

- Key Elements: Utilizes a High-Performance Time-Series Database (TSDB) to record state changes over time and provides Customized Views/Dashboards for tailored monitoring.

- Layer 5: Intelligent Expansion

- Role: The top layer and end goal of the platform, where AI autonomously operates and optimizes the data center using the well-organized data provided by the lower layers.

- Key Elements: Generative AI Agent (LLM+RAG), Digital Twin technology, ML-based Automated Power/Cooling Control, and Intelligent Report Generation.
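To make the Heterogeneous Data Normalization step in Layer 3 more concrete, here is a minimal sketch in Python. The two vendor payload shapes, the field names, and the `Reading` record are all hypothetical and invented for illustration; the diagram does not specify any format. The idea is simply that readings arriving in different units and timestamp encodings are converted into one common record before they reach the analysis layer.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Reading:
    """Common record shape (hypothetical) used after normalization."""
    source: str
    metric: str
    value: float
    unit: str
    ts: datetime  # always stored in UTC


def from_vendor_a(payload: dict) -> Reading:
    # Hypothetical vendor A: Fahrenheit, epoch milliseconds.
    celsius = (payload["temp_f"] - 32.0) * 5.0 / 9.0
    ts = datetime.fromtimestamp(payload["ts_ms"] / 1000.0, tz=timezone.utc)
    return Reading(payload["dev"], "supply_air_temp", round(celsius, 2), "degC", ts)


def from_vendor_b(payload: dict) -> Reading:
    # Hypothetical vendor B: Celsius, ISO 8601 timestamp with offset.
    ts = datetime.fromisoformat(payload["time"]).astimezone(timezone.utc)
    return Reading(payload["id"], "supply_air_temp", float(payload["tempC"]), "degC", ts)


# Two differently shaped payloads that describe the same kind of measurement.
a = from_vendor_a({"dev": "crac-01", "temp_f": 75.2, "ts_ms": 1700000000000})
b = from_vendor_b({"id": "crac-02", "tempC": 24.0, "time": "2023-11-14T22:13:20+00:00"})
```

After normalization, both readings share the same metric name, unit, and UTC timestamp representation, so the upper layers can store and compare them without vendor-specific logic.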

This blueprint summarizes the overall solution architecture in three steps: precisely collecting and transmitting raw data from hardware facilities (Layers 1-2); standardizing, storing, and analyzing that data (Layers 3-4); and ultimately achieving autonomous operations through ML-based automated power and cooling control, supported by a Generative AI Agent and a Digital Twin (Layer 5).
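The store, analyze, and control flow summarized above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the in-memory `TimeSeriesStore` stands in for a real TSDB, a rolling z-score stands in for the platform's anomaly detection, and a fixed temperature band stands in for the ML-based cooling control. All names, thresholds, and sample values are hypothetical.

```python
from collections import defaultdict, deque
from statistics import mean, pstdev
from typing import List


class TimeSeriesStore:
    """In-memory stand-in (hypothetical) for the Layer 4 time-series database."""

    def __init__(self, window: int = 60):
        # Keep only the most recent `window` samples per series.
        self._series = defaultdict(lambda: deque(maxlen=window))

    def write(self, key: str, ts: float, value: float) -> None:
        self._series[key].append((ts, value))

    def values(self, key: str) -> List[float]:
        return [v for _, v in self._series[key]]


def is_anomaly(history: List[float], value: float, z_limit: float = 3.0) -> bool:
    """Flag a value whose z-score against recent history exceeds z_limit."""
    if len(history) < 5:
        return False  # not enough data to judge
    sigma = pstdev(history)
    if sigma == 0:
        return value != history[0]
    return abs(value - mean(history)) / sigma > z_limit


def cooling_action(inlet_temp_c: float, target_c: float = 25.0, band_c: float = 2.0) -> str:
    """Simple band rule standing in for the ML-based control of Layer 5."""
    if inlet_temp_c > target_c + band_c:
        return "increase_cooling"
    if inlet_temp_c < target_c - band_c:
        return "decrease_cooling"
    return "hold"


store = TimeSeriesStore()
for i, temp in enumerate([24.0, 24.1, 23.9, 24.0, 24.2, 24.1]):
    store.write("rack-01/inlet_temp", float(i), temp)

history = store.values("rack-01/inlet_temp")
spike_flagged = is_anomaly(history, 31.0)    # sudden jump in inlet temperature
normal_flagged = is_anomaly(history, 24.1)   # value within the usual range
action = cooling_action(31.0)
```

In a real deployment each stand-in would be replaced by the corresponding platform component; the point of the sketch is only the order of the steps: write samples, compare new values against recent history, then decide on a control action.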


With Gemini