The Computing for the Fair Human Life.

This diagram presents an advanced three-layer architecture for digital operations, emphasizing continuous feedback loops and real-time decision-making.

The system operates through three interconnected environments that continuously update each other, creating an intelligent operational ecosystem.

The Micro Layer creates a virtual replica (Digital Twin) of physical assets for real-time monitoring and simulation.

Data Sources (Servers, Networks, Manufacturing Equipment, IoT Sensors) → High Resolution Data Quality → Real-time Real-Model → Digital Twin

The Macro Layer analyzes real-time data, identifies events, and makes intelligent autonomous decisions using AI.

Well-Defined Deterministic Processing → Deterministic Event Log + Add-on RAG → AI Agent
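The deterministic stage of this pipeline can be pictured with a minimal Python sketch. It assumes events are produced by fixed threshold rules, so the same readings always yield the same event log. The `Event` type, the `RULES` thresholds, and the keyword-based `retrieve` helper are hypothetical stand-ins for illustration; in particular, `retrieve` only approximates the retrieval step that a real RAG add-on would perform.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Event:
    timestamp: int   # position in the trace (hypothetical unit)
    source: str      # asset that produced the reading
    kind: str        # name of the rule that fired
    value: float     # reading that crossed the threshold


# Hypothetical fixed thresholds; a real deployment would define its own rules.
RULES: Dict[str, float] = {"temperature_c": 80.0, "cpu_util": 0.95}


def detect_events(readings: List[dict]) -> List[Event]:
    """Apply fixed rules in input order, so identical readings give an identical log."""
    log: List[Event] = []
    for reading in readings:
        for metric, limit in RULES.items():
            value = reading.get(metric)
            if value is not None and value > limit:
                log.append(
                    Event(reading["t"], reading["source"], f"{metric}_high", value)
                )
    return log


def retrieve(log: List[Event], query: str, k: int = 3) -> List[Event]:
    """Toy retrieval: keyword match on the event kind, most recent first."""
    hits = [event for event in log if query in event.kind]
    return sorted(hits, key=lambda event: event.timestamp, reverse=True)[:k]
```

An AI Agent would then receive the retrieved events as context rather than the raw sensor stream, which keeps its input reproducible and auditable.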

The Human Layer enables human oversight, strategic decision-making, and intervention when needed.

Digital Twin (Micro) + AI Agent (Macro) → Human Layer for Well-Cognitive Decision Making

All three layers are connected through Continuous Update pathways (red arrows), creating a closed-loop system in which each layer compensates for the others’ limitations.

This architecture integrates three operational environments: the Micro Layer uses real-time data to maintain Digital Twins of physical assets, the Macro Layer employs LLM-based AI Agents with RAG to analyze events and generate intelligent recommendations, and the Human Layer ensures well-cognitive decision-making through human-in-the-loop oversight. All three layers continuously update each other and feed decisions back to the operational systems, creating a self-improving closed-loop architecture. This synergy combines real-time precision, artificial intelligence, and human expertise to achieve optimal digital operations.
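One way to picture the closed loop is the following Python sketch. It is illustrative only: the `DigitalTwin` class, the rule-based `agent_recommend` function (standing in for an LLM-based agent), the `approve` callback (standing in for the human reviewer), and the 10-degree modeled effect of a throttle action are all assumptions, not part of the diagram.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class DigitalTwin:
    """Micro layer: mirrors the latest temperature reading per asset."""
    state: Dict[str, float] = field(default_factory=dict)

    def update(self, asset: str, temperature_c: float) -> None:
        self.state[asset] = temperature_c


def agent_recommend(twin: DigitalTwin, limit_c: float = 80.0) -> List[str]:
    """Macro layer stand-in: a fixed rule in place of an AI agent."""
    return [
        f"throttle:{asset}"
        for asset, temp in sorted(twin.state.items())
        if temp > limit_c
    ]


def run_cycle(
    twin: DigitalTwin,
    readings: Dict[str, float],
    approve: Callable[[str], bool],
) -> List[str]:
    """One pass of the loop: sense, recommend, human approval, feedback."""
    for asset, temp in readings.items():
        twin.update(asset, temp)
    applied: List[str] = []
    for action in agent_recommend(twin):
        if approve(action):  # Human layer: nothing is applied without approval
            asset = action.split(":", 1)[1]
            # Hypothetical modeled effect of the action, fed back into the twin.
            twin.update(asset, twin.state[asset] - 10.0)
            applied.append(action)
    return applied
```

Calling `run_cycle` repeatedly with fresh readings gives the continuous-update behavior: each approved action changes the twin's state, which in turn changes what the agent recommends on the next pass.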

#DigitalTwin #AIAgent #HumanInTheLoop #ClosedLoopSystem #LLM #RAG #RetrievalAugmentedGeneration #RealTimeOperations #DigitalTransformation #Industry40 #SmartManufacturing #CognitiveComputing #ContinuousImprovement #IntelligentAutomation #DigitalOperations #AI #IoT #PredictiveMaintenance #DataDrivenDecisions #FutureOfManufacturing

With Claude

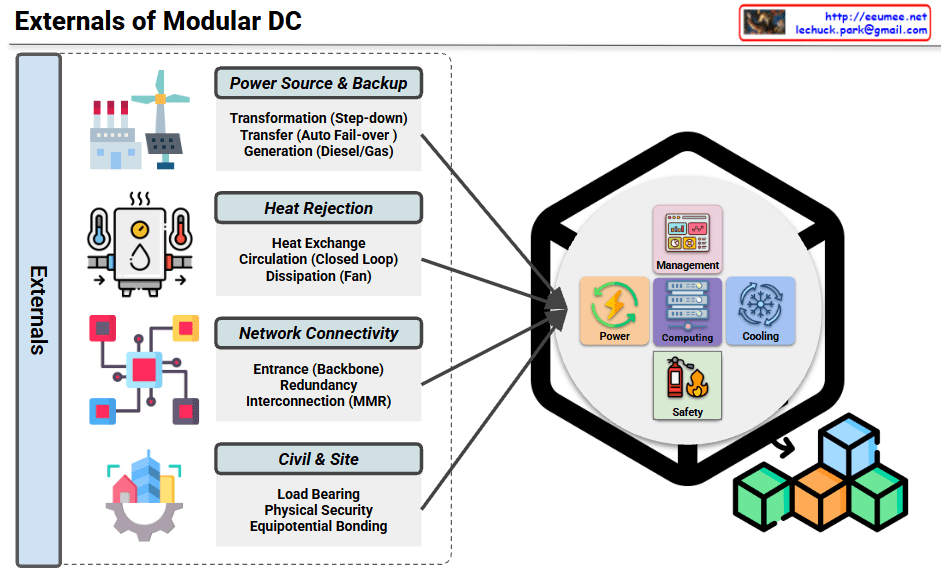

Externals of Modular DC Infrastructure

This diagram illustrates the external infrastructure systems that support a Modular Data Center (Modular DC).

The power system ensures a stable power supply and emergency backup capabilities.

The heat rejection system removes heat generated in the data center to the outside environment.

The network system provides connectivity and telecommunication infrastructure with external networks.

The civil/site infrastructure handles building foundation and physical security requirements.

The module integrates the following management elements: power, computing, cooling, and safety.

Modular data centers require four critical external infrastructure systems: power supply with backup generation, heat rejection for thermal management, network connectivity for communications, and civil/site infrastructure for physical foundation and security. These external systems work together to support the internal management components (power, computing, cooling, and safety) within the modular unit. This architecture enables rapid deployment while maintaining enterprise-grade reliability and scalability.

#ModularDataCenter #DataCenterInfrastructure #DCInfrastructure #EdgeComputing #HybridIT #DataCenterDesign #CriticalInfrastructure #PowerBackup #CoolingSystem #NetworkRedundancy #PhysicalSecurity #ModularDC #DataCenterSolutions #ITInfrastructure #EnterpriseIT


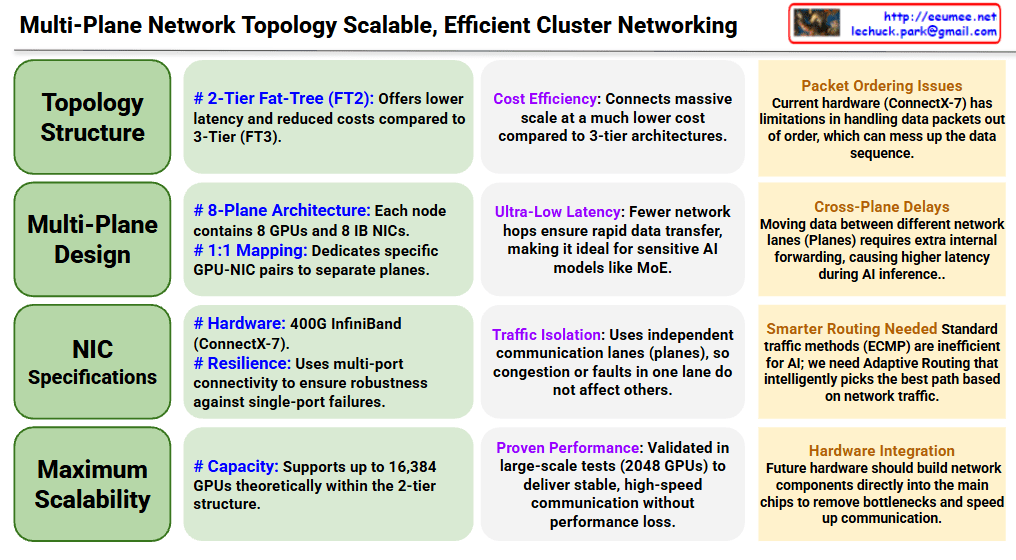

Topology Structure

Multi-Plane Design

NIC Specifications

Maximum Scalability

Cost Efficiency: Connects massive scale at much lower cost compared to 3-tier architectures

Ultra-Low Latency: Fewer network hops ensure rapid data transfer, ideal for latency-sensitive AI models such as Mixture-of-Experts (MoE)

Traffic Isolation: Independent communication lanes (planes) prevent congestion or faults in one lane from affecting others

Proven Performance: Validated in large-scale tests with 2048 GPUs, delivering stable and high-speed communication

Packet Ordering Issues: Current hardware (ConnectX-7) has limitations in handling out-of-order data packets

Cross-Plane Delays: Moving data between different network planes requires extra internal forwarding, causing higher latency during AI inference

Smarter Routing Needed: Standard load balancing (ECMP, Equal-Cost Multi-Path) is inefficient for AI traffic; Adaptive Routing, which selects the best path based on current network traffic, is needed

Hardware Integration: Future hardware should build network components directly into main chips to remove bottlenecks and speed up communication
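The contrast between ECMP and Adaptive Routing noted above can be sketched in a few lines of Python. This is a simplified model, not how any particular switch implements either scheme: `ecmp_path` assumes a CRC32 hash over a flow tuple, and `adaptive_path` assumes the sender can see a per-path load figure. All names here are hypothetical.

```python
import zlib
from typing import List, Tuple

Flow = Tuple[str, str, int, int]  # (src, dst, src_port, dst_port)


def ecmp_path(flow: Flow, num_paths: int) -> int:
    """Static ECMP-style choice: hash the flow identifier, ignoring current load."""
    key = "|".join(map(str, flow)).encode()
    return zlib.crc32(key) % num_paths


def adaptive_path(path_load: List[float]) -> int:
    """Adaptive choice: pick the least-loaded path (ties go to the lowest index)."""
    return min(range(len(path_load)), key=lambda i: path_load[i])


def place_adaptive(flow_sizes: List[float], num_paths: int) -> List[float]:
    """Place flows one by one on the currently least-loaded path."""
    load = [0.0] * num_paths
    for size in flow_sizes:
        load[adaptive_path(load)] += size
    return load
```

Because the hash ignores load, a few large flows that happen to collide on one path stay there for their lifetime. The adaptive variant avoids this by consulting the load, but it can deliver packets of one flow over different paths, which is where the packet-ordering limitation listed above becomes relevant.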

This document presents a multi-plane network topology using 2-tier Fat-Tree architecture that scales AI clusters up to 16,384 GPUs cost-effectively with ultra-low latency. The 8-plane design with 1:1 GPU-NIC mapping provides traffic isolation and resilience, though challenges remain in packet ordering and cross-plane communication. Future improvements require smarter routing algorithms and deeper hardware-network integration to optimize AI workload performance.
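The 16,384-GPU figure is consistent with simple fat-tree arithmetic, sketched below in Python. The 64-port switch radix is an assumption that does not appear in the text above; the sketch also assumes a non-blocking two-tier design in which each leaf switch uses half its ports for endpoints and half for uplinks, with one link per leaf-spine pair.

```python
def two_tier_endpoints(radix: int) -> int:
    """Endpoints supported by a non-blocking 2-tier fat-tree of radix-port switches."""
    if radix <= 0 or radix % 2:
        raise ValueError("radix must be a positive even number")
    downlinks_per_leaf = radix // 2  # other half of the leaf ports go to spines
    max_leaves = radix               # each spine port connects one leaf
    return downlinks_per_leaf * max_leaves


def multi_plane_gpus(radix: int, planes: int) -> int:
    """Total GPUs with 1:1 GPU-NIC mapping, each NIC attached to one plane."""
    return planes * two_tier_endpoints(radix)


print(two_tier_endpoints(64))   # 2048 endpoints per plane
print(multi_plane_gpus(64, 8))  # 16384 GPUs across 8 planes
```

Under these assumptions each plane holds 64 × 32 = 2,048 endpoints, and 8 independent planes give 8 × 2,048 = 16,384 GPUs without adding a third switching tier.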

#AIInfrastructure #DataCenterNetworking #HPC #InfiniBand #GPUCluster #NetworkTopology #FatTree #ScalableComputing #MLOps #AIHardware #DistributedComputing #CloudInfrastructure #NetworkArchitecture #DeepLearning #AIatScale

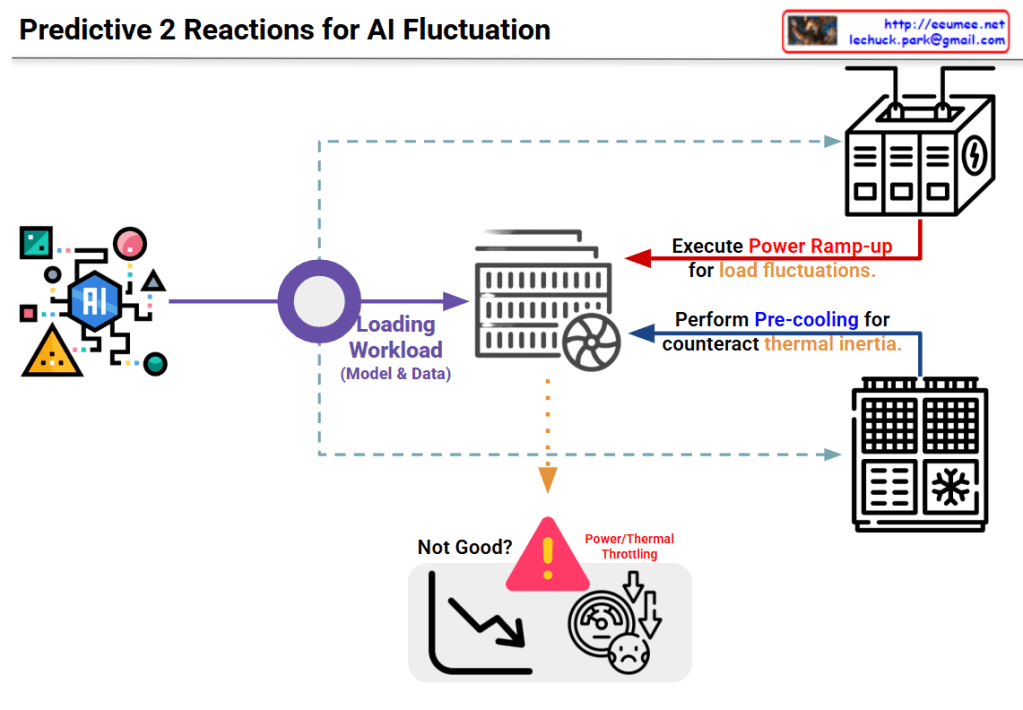

This diagram illustrates a two-stage predictive strategy to address load fluctuation issues in AI systems.

Input Stage:

Processing Stage:

The warning area at the bottom center shows the problems that occur without these responses, such as thermal throttling and performance degradation.

This system proposes an intelligent infrastructure management approach that predicts rapid fluctuations in AI workloads and proactively adjusts power and cooling before actual loads occur, thereby preventing performance degradation.
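The idea of acting before the load arrives can be sketched as a small planner in Python. It assumes a per-step load forecast is already available; the function name, the `jump_kw` threshold, the `lead_steps` value, and the choice to start pre-cooling earlier than the power ramp-up are all illustrative assumptions rather than details taken from the diagram.

```python
from typing import List


def plan_actions(
    forecast_kw: List[float],
    lead_steps: int = 2,
    jump_kw: float = 50.0,
) -> List[List[str]]:
    """Return, per time step, the proactive actions to start at that step."""
    actions: List[List[str]] = [[] for _ in forecast_kw]
    for t in range(1, len(forecast_kw)):
        # A predicted rise of at least jump_kw counts as a load jump.
        if forecast_kw[t] - forecast_kw[t - 1] >= jump_kw:
            # Cooling responds slowly, so start it lead_steps ahead of the jump.
            actions[max(0, t - lead_steps)].append("pre_cool")
            # Power is ramped one step ahead of the jump.
            actions[t - 1].append("power_ramp_up")
    return actions
```

For a forecast of `[100, 100, 100, 200, 200]` the planner schedules `pre_cool` at step 1 and `power_ramp_up` at step 2, both before the jump at step 3. A reactive controller would only respond at step 3, after temperatures had already begun to rise.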

This diagram presents a predictive two-stage reaction system for AI workload management that combines proactive power ramp-up and pre-cooling to prevent thermal throttling. By anticipating load fluctuations before they occur, the system maintains optimal performance without degradation. The approach represents a shift from reactive to predictive infrastructure management in AI computing environments.

#AIInfrastructure #PredictiveComputing #ThermalManagement #PowerManagement #AIWorkload #DataCenterOptimization #ProactiveScaling #AIPerformance #ThermalThrottling #SmartCooling #MLOps #AIEfficiency #ComputeOptimization #InfrastructureAsCode #AIOperations


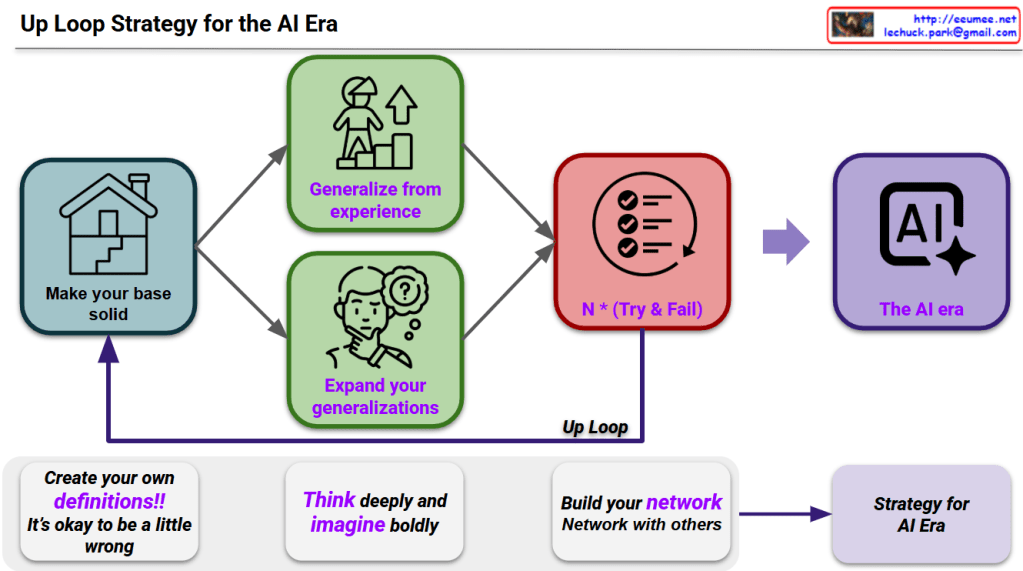

This diagram presents a learning and growth strategy for preparing for the AI era.

1. Make your base solid

2. Two developmental paths

3. N × (Try & Fail)

4. The AI era

5. Up Loop

“Create your own definitions!! It’s okay to be a little wrong”

“Think deeply and imagine boldly”

“Build your network, Network with others”

These three elements combine → Strategy for AI Era

This strategy emphasizes learning through continuous trial and error rather than perfection, self-directed learning, and networking. It aims to develop the competencies needed for the AI era through iterative improvement.

This framework advocates for a continuous learning cycle: build solid foundations, generalize and expand your knowledge, then embrace multiple failures as learning opportunities. The strategy rejects perfectionism in favor of bold experimentation, deep thinking, and collaborative networking. Success in the AI era comes from iterative improvement through this “up loop” rather than seeking perfect answers from the start.

#AIStrategy #GrowthMindset #ContinuousLearning #IterativeImprovement #UpLoop #AIEra #LearnFromFailure #NetworkBuilding #LifelongLearning #FutureOfWork #AdaptiveThinking #ExperimentalMindset #AIReadiness #PersonalDevelopment #ProfessionalGrowth
