The Computing for the Fair Human Life.

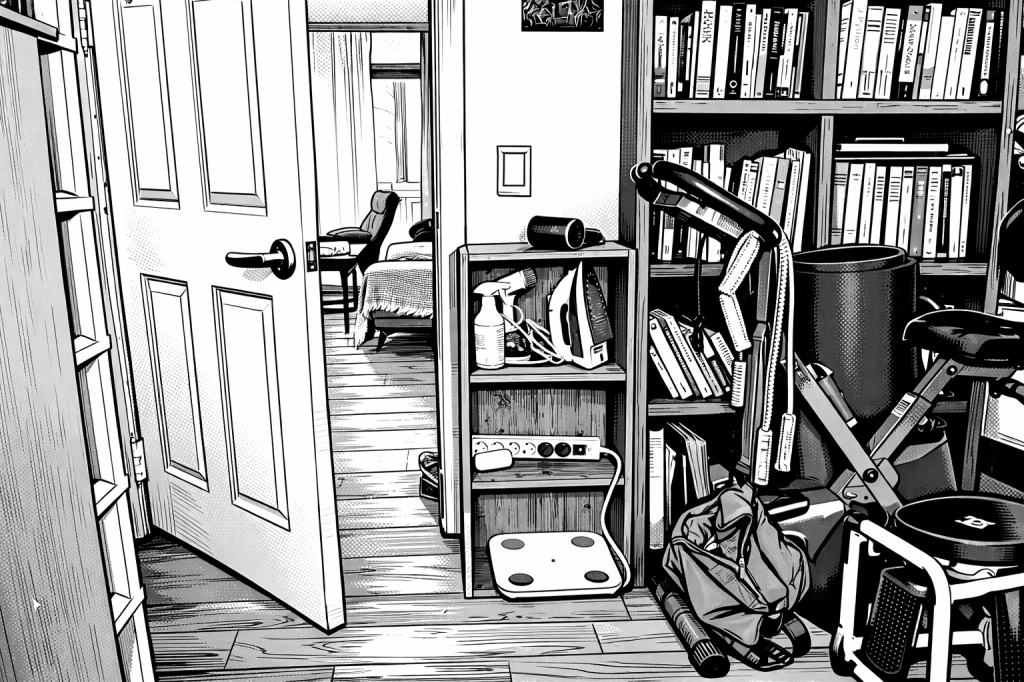

This diagram illustrates a workflow that handles system logs/events by dividing them into real-time urgent responses and periodic deep analysis.
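To make the split concrete, here is a minimal Python sketch of the routing step. It assumes each event carries a numeric severity and that a fixed threshold decides what counts as urgent. The `Event` fields, the 0 to 10 severity scale, `URGENT_THRESHOLD`, and the per-source count standing in for "deep analysis" are all hypothetical placeholders; the diagram does not specify them.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List

# Hypothetical cut-off on an assumed 0-10 severity scale.
URGENT_THRESHOLD = 8

@dataclass
class Event:
    source: str
    message: str
    severity: int

@dataclass
class EventRouter:
    threshold: int = URGENT_THRESHOLD
    realtime: List[Event] = field(default_factory=list)  # urgent path
    batch: List[Event] = field(default_factory=list)     # buffered for periodic analysis

    def route(self, event: Event) -> str:
        """Send urgent events to the real-time path, everything else to the batch buffer."""
        if event.severity >= self.threshold:
            self.realtime.append(event)  # in practice this would fire an alert immediately
            return "realtime"
        self.batch.append(event)
        return "batch"

    def flush_batch(self) -> List[Event]:
        """Hand buffered events to the periodic analysis job and clear the buffer."""
        drained, self.batch = self.batch, []
        return drained

def summarize_by_source(events: List[Event]) -> Counter:
    """Placeholder 'deep analysis': count buffered events per source."""
    return Counter(e.source for e in events)
```

In a real pipeline, `flush_batch` would be called on a schedule, and the periodic step would be much richer than a count (for example, searching past incidents to support root-cause analysis).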

This section indicates a shift from simple monitoring to intelligent AIOps.

The processed insights are delivered to the user through four channels:

#EventProcessing #SystemArchitecture #VectorSearch #Observability #RCA

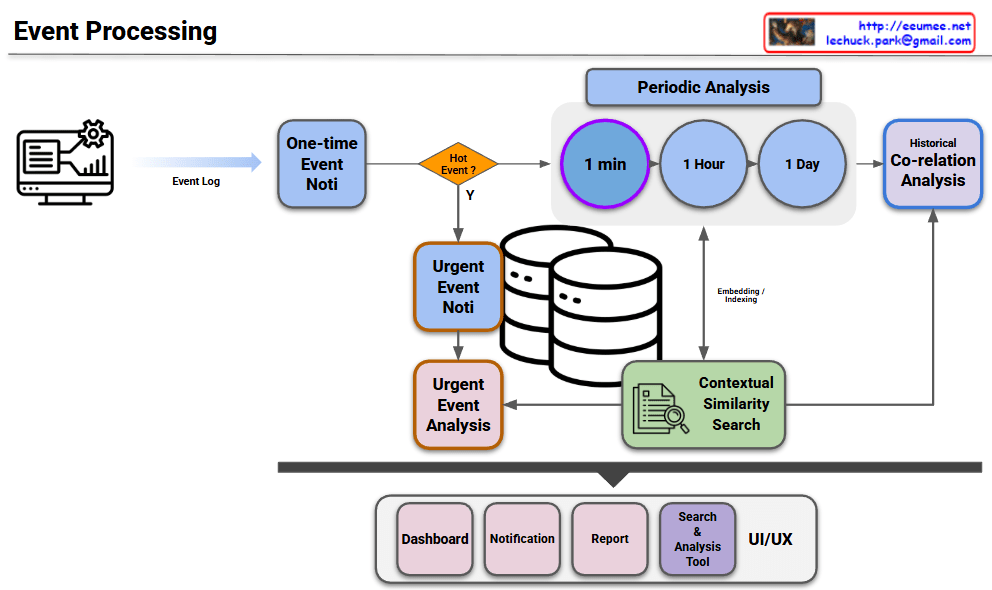

For a Co-location (Colo) service provider, the challenge is managing high-density AI workloads without having direct access to the customer’s proprietary server data or software stacks. This second image provides a specialized architecture designed to overcome this “data blindness” by using infrastructure-level metrics.

In a co-location environment, internal server data (such as LLM Job Schedules, GPU/HBM telemetry, and internal temperatures) is often restricted for security and privacy reasons. This creates a "Black Box" for the provider. The architecture shown here shifts the focus from the Server Inside to the Server Outside, where the provider has full control and visibility.

Because the provider cannot see when an AI model starts training, they must rely on power supply telemetry as a proxy for workload activity.
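One simple way to turn power telemetry into a "workload started" signal is to compare a short window of recent rack power against a longer baseline window just before it. The sketch below assumes evenly sampled readings in kW; the function name, window sizes, and the 1.5x rise ratio are illustrative assumptions, not values from the diagram.

```python
from statistics import mean
from typing import List, Optional

def detect_load_onset(power_kw: List[float], baseline_window: int = 10,
                      recent_window: int = 3, rise_ratio: float = 1.5) -> Optional[int]:
    """Return the index of the sample at which a power rise is first flagged,
    or None if the recent mean never reaches rise_ratio times the baseline."""
    for end in range(baseline_window + recent_window, len(power_kw) + 1):
        split = end - recent_window
        baseline = mean(power_kw[split - baseline_window:split])  # older samples
        recent = mean(power_kw[split:end])                        # newest samples
        if baseline > 0 and recent >= rise_ratio * baseline:
            return end - 1  # index of the newest sample, i.e. when the rise is detected
    return None
```

With these settings a single spike can trigger a detection, so a real deployment would tune the windows and ratio against the actual power sampling rate.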

Since the provider is missing the “More Proactive” software-level data, the Analysis with ML component becomes even more critical.
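The diagram does not say which model the Analysis with ML block uses. As a deliberately simple stand-in, the sketch below fits an ordinary least-squares line to recent power samples and extrapolates a few steps ahead. A production system would more likely use a learned forecaster; the hypothetical `forecast_power_kw` only shows the shape of the input (a power history) and output (a future power estimate).

```python
from typing import Sequence

def forecast_power_kw(history_kw: Sequence[float], steps_ahead: int) -> float:
    """Fit y = intercept + slope * t by least squares and extrapolate steps_ahead samples."""
    n = len(history_kw)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    x_mean = (n - 1) / 2                  # mean of sample indices 0..n-1
    y_mean = sum(history_kw) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(range(n), history_kw))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return intercept + slope * (n - 1 + steps_ahead)
```

Feeding this estimate to the cooling controller lets it act on where power is heading rather than where it is now, which is the role the software-level signals would otherwise play.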

| Feature | Ideal Model (Image 1) | Practical Colo Model (Image 2) |
|---|---|---|
| Visibility | Full-stack (Software to Hardware) | Infrastructure-only (Power & Air/Liquid) |
| Primary Metric | LLM Job Queue / GPU Temp | Power Trend (kW) / Rack Density |
| Tenant Privacy | Low (Requires data sharing) | High (Non-intrusive) |
| Control Precision | Extremely High | High (Dependent on Power Sampling Rate) |

#Colocation #DataCenterManagement #PredictiveCooling #AICooling #InfrastructureOptimization #PUE #LiquidCooling #MultiTenantSecurity

With Gemini

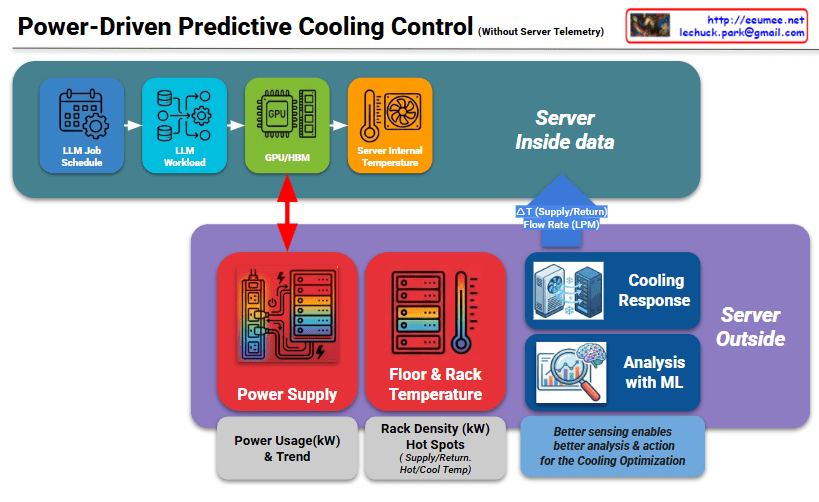

The image illustrates a logical framework titled “Labeling for AI World,” which maps how human cognitive processes are digitized and utilized to train Large Language Models (LLMs). It emphasizes the transition from natural human perception to optimized AI integration.

This track represents the traditional human experience:

This track represents the technical pipeline for AI development:

The most critical element of the diagram is the central blue box, which acts as a bridge between human logic and machine processing:

The diagram demonstrates that Data Labeling, guided by Corpus and Ontology, is the essential mechanism that translates human cognition into the digital realm. It ensures that LLMs are not just processing raw numbers, but are optimized to understand the world through a human-centric logical framework.
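A small sketch of how an ontology can guide labeling: labels attached to corpus spans are checked against the ontology, and valid labels are expanded with their parent concepts so a model sees the hierarchy as well as the leaf label. The toy ontology, concept names, and function names are hypothetical and only illustrate the idea.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical toy ontology: each concept maps to its parent (None marks the root).
ONTOLOGY: Dict[str, Optional[str]] = {
    "Entity": None,
    "Hardware": "Entity",
    "GPU": "Hardware",
    "Event": "Entity",
    "Failure": "Event",
}

def ancestors(concept: str, ontology: Dict[str, Optional[str]] = ONTOLOGY) -> List[str]:
    """Walk parent links from a concept up to the root."""
    chain: List[str] = []
    parent = ontology[concept]
    while parent is not None:
        chain.append(parent)
        parent = ontology[parent]
    return chain

def validate_labels(labels: List[Tuple[str, str]],
                    ontology: Dict[str, Optional[str]] = ONTOLOGY) -> List[Tuple[str, str]]:
    """Return (span, label) pairs whose label is not defined in the ontology."""
    return [(span, label) for span, label in labels if label not in ontology]

def expand_labels(labels: List[Tuple[str, str]],
                  ontology: Dict[str, Optional[str]] = ONTOLOGY) -> List[Tuple[str, List[str]]]:
    """Attach the full concept path to every valid label."""
    return [(span, [label] + ancestors(label, ontology))
            for span, label in labels if label in ontology]
```

Rejecting undefined labels keeps annotators inside the shared vocabulary, and the expanded paths carry the ontology's structure into the training data.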

#AI #DataLabeling #LLM #Ontology #Corpus #CognitiveComputing #AIOptimization #DigitalTransformation


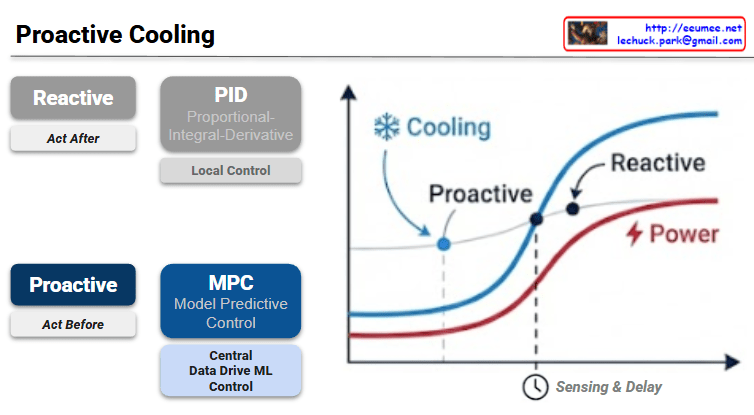

The provided image illustrates the fundamental shift in data center thermal management from traditional Reactive methods to AI-driven Proactive strategies.

The slide contrasts two distinct approaches to managing the cooling load in a high-density environment, such as an AI data center.

| Feature | Reactive (Traditional) | Proactive (Advanced) |
|---|---|---|
| Philosophy | Act After: Responds to changes. | Act Before: Anticipates changes. |
| Mechanism | PID Control: Proportional-Integral-Derivative. | MPC: Model Predictive Control. |
| Scope | Local Control: Focuses on individual units/sensors. | Central ML Control: Data-driven, system-wide optimization. |
| Logic | Feedback-based (error correction). | Feedforward-based (predictive modeling). |
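The difference between the two philosophies can be shown on a toy model. The sketch below assumes a first-order thermal model (temperature moves in proportion to load minus cooling), a step increase in IT load, and, for the MPC side, a perfect load forecast, which is optimistic. The PID loop only reacts to error it has already measured. The MPC loop searches a grid of cooling levels, predicts temperature over a horizon, and applies only the first move: a receding horizon with the input held constant across the horizon, a common simplification. All constants and names are illustrative assumptions.

```python
from typing import List, Sequence

GAIN = 0.1     # assumed degC change per kW of net heat per step (toy model)
T_REF = 25.0   # assumed temperature setpoint, degC
U_MAX = 40.0   # assumed maximum cooling capacity, kW

def next_temp(temp: float, load_kw: float, cooling_kw: float) -> float:
    """Toy first-order thermal model: temperature moves with net heat."""
    return temp + GAIN * (load_kw - cooling_kw)

def clamp(u: float) -> float:
    return max(0.0, min(U_MAX, u))

def run_pid(loads: Sequence[float], kp: float = 2.0, ki: float = 0.2,
            kd: float = 0.5, bias: float = 10.0) -> List[float]:
    """Reactive: cooling responds only to the error already measured."""
    temp, integral, prev_err = T_REF, 0.0, 0.0
    temps = [temp]
    for load in loads:
        err = temp - T_REF  # positive when too hot
        integral += err
        u = clamp(bias + kp * err + ki * integral + kd * (err - prev_err))
        prev_err = err
        temp = next_temp(temp, load, u)
        temps.append(temp)
    return temps

def run_mpc(loads: Sequence[float], horizon: int = 10) -> List[float]:
    """Proactive: pick the constant cooling level that minimizes predicted
    squared error over the horizon, using the (assumed perfect) load forecast."""
    temp = T_REF
    temps = [temp]
    for k in range(len(loads)):
        forecast = loads[k:k + horizon]
        best_u, best_cost = 0.0, float("inf")
        for u in range(int(U_MAX) + 1):  # coarse grid search over 0..40 kW
            t, cost = temp, 0.0
            for q in forecast:
                t = next_temp(t, q, u)
                cost += (t - T_REF) ** 2
            if cost < best_cost:
                best_u, best_cost = float(u), cost
        temp = next_temp(temp, loads[k], best_u)  # apply only the first move
        temps.append(temp)
    return temps

# Step change in IT load, e.g. a training job starting at step 20.
loads = [10.0] * 20 + [30.0] * 40
```

On this step load the PID trajectory overshoots the setpoint once the load arrives (it reaches 27 °C one step after the step), while the MPC trajectory begins pre-cooling as soon as the step enters its forecast horizon and stays at or below the setpoint.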

The graph on the right visualizes the efficiency gap between these two methods:

In modern data centers, especially those handling fluctuating AI workloads (like LLM training or high-concurrency inference), the “Sensing & Delay” in traditional PID systems can lead to significant energy waste and hardware stress. MPC leverages historical data and real-time telemetry to:

#DataCenter #AICooling #ModelPredictiveControl #MPC #ThermalManagement #EnergyEfficiency #SmartInfrastructure #PUEOptimization #MachineLearning


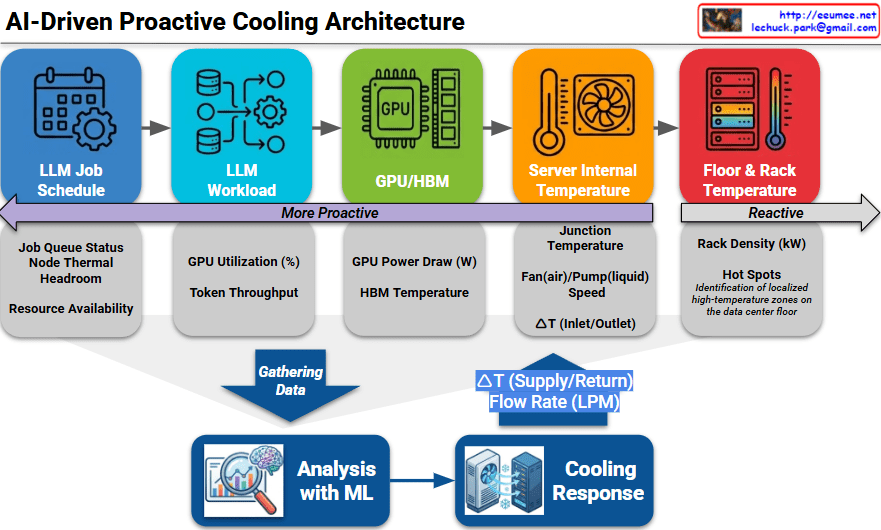

The provided image illustrates an AI-Driven Proactive Cooling Architecture, detailing a sophisticated pipeline that transforms operational data into precise thermal management.

The architecture categorizes data sources along a spectrum, moving from “More Proactive” (predicting future heat) to “Reactive” (measuring existing heat).
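The spectrum can be read as a priority order: use the most proactive source that is actually available. The sketch below assumes three sources, with power placed between the job schedule and temperature; that placement and the names are assumptions for illustration. In the co-location case, where the job schedule is hidden from the provider, the same logic falls back to power telemetry.

```python
from enum import IntEnum
from typing import Optional, Set

class Source(IntEnum):
    """Assumed ordering along the spectrum: lower value means earlier warning."""
    LLM_JOB_SCHEDULE = 0  # predicts heat before it exists (most proactive)
    POWER = 1             # rises as heat is being generated
    TEMPERATURE = 2       # measures heat that already exists (reactive)

def most_proactive(available: Set[Source]) -> Optional[Source]:
    """Return the earliest-warning source that is available, or None."""
    for source in Source:  # iterates in definition order, most proactive first
        if source in available:
            return source
    return None
```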

The bottom section of the diagram shows how this multi-layered data is converted into action:

By shifting the control logic “left” (toward the LLM Job Schedule), data centers can eliminate the thermal lag inherent in traditional systems. This is particularly critical for AI infrastructure, where GPU power consumption can spike almost instantaneously, often faster than traditional mechanical cooling systems can ramp up.
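Shifting control left can be as simple as issuing the cooling command ahead of each scheduled job by the time the cooling plant needs to ramp. The sketch assumes the scheduler exposes a start time and an expected power draw per job (`ScheduledJob` and `precool_plan` are hypothetical) and that the ramp time is a known constant.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Tuple

@dataclass
class ScheduledJob:
    """Hypothetical record exported by an LLM job scheduler."""
    name: str
    start: datetime
    expected_kw: float

def precool_plan(jobs: List[ScheduledJob],
                 cooling_ramp: timedelta) -> List[Tuple[datetime, str, float]]:
    """Return (command_time, job_name, target_kw) so cooling starts ramping
    cooling_ramp before each job's power spike."""
    plan = [(job.start - cooling_ramp, job.name, job.expected_kw) for job in jobs]
    return sorted(plan)  # earliest cooling command first
```

If the ramp time is at least as long as the mechanical cooling system's response, the extra cooling is already arriving when the GPUs spike, instead of chasing the temperature afterwards.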

#AICooling #DataCenterInfrastructure #ProactiveCooling #GPUManagement #LiquidCooling #LLMOps #ThermalManagement #EnergyEfficiency #SmartDC
