The Computing for the Fair Human Life.

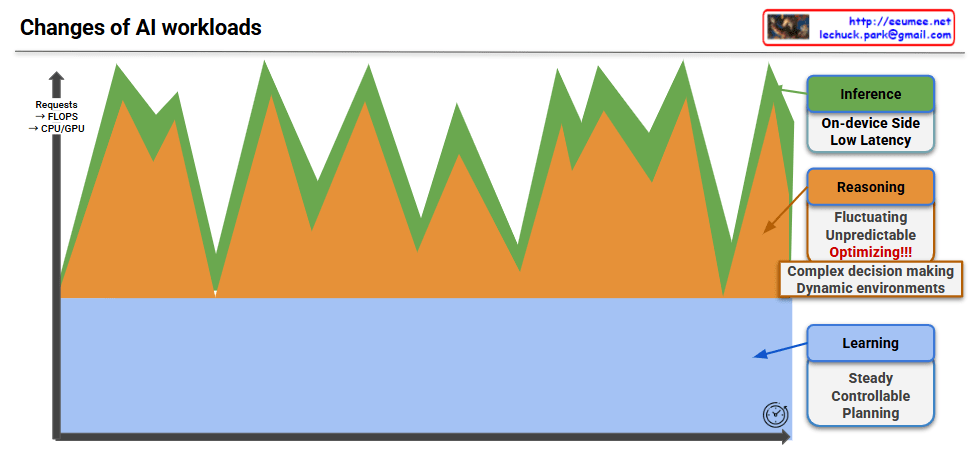

This image visualizes the three major AI workload types and their characteristics in a comprehensive graph.

Visualization Framework:

Properties: Steady, Controllable, Planning

Properties: Fluctuating, Unpredictable, Optimizing

Properties: On-device Side, Low Latency

Differentiated Resource Management Strategies Required:

The graph shows that effective AI system operation depends on resource allocation strategies tailored to the characteristics of each workload type.

The visualization emphasizes that AI workloads are not monolithic. They consist of distinct components with different demands, so resource management has to handle both their individual and their combined requirements.
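To make the idea of differentiated strategies concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the `Workload` class, the demand samples, the peak-to-mean measure, and the 1.5 threshold are all hypothetical and do not come from the diagram. It classifies a workload as steady or fluctuating from its demand history and picks a planning-style or optimizing-style strategy accordingly.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Workload:
    name: str            # hypothetical label, not taken from the diagram
    demand: list[float]  # hypothetical demand samples, e.g. GPUs needed per interval


def variability(w: Workload) -> float:
    """Peak-to-mean ratio of demand; 1.0 means perfectly steady."""
    return max(w.demand) / mean(w.demand)


def choose_strategy(w: Workload, threshold: float = 1.5) -> str:
    """Pick a strategy from how bursty the demand is (threshold is an assumption)."""
    if variability(w) <= threshold:
        # Steady, controllable demand: capacity can be planned ahead of time.
        return "reserve fixed capacity"
    # Fluctuating, unpredictable demand: capacity must be optimized at run time.
    return "scale elastically"


steady = Workload("steady", [8, 8, 9, 8])
bursty = Workload("fluctuating", [1, 2, 12, 1])

for w in (steady, bursty):
    print(w.name, round(variability(w), 2), choose_strategy(w))
```

The on-device, low-latency workload is left out on purpose: its constraint is about where the computation runs rather than how much capacity to provision, so it would need a different kind of rule.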

With Claude

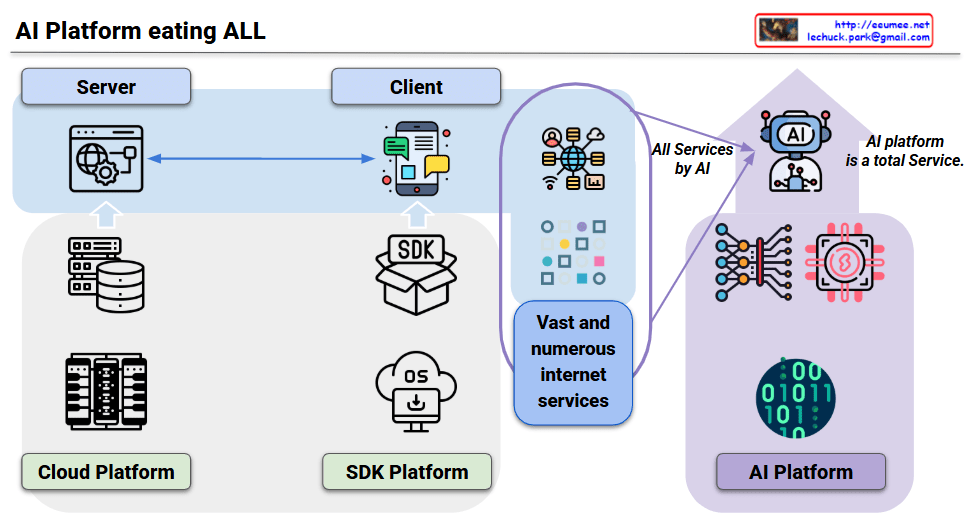

This diagram illustrates the fundamental paradigm shift in service development across three platform evolution stages.

Platform Evolution:

Key Transformation:

This is more than an evolution of tools. It is a fundamental reorganization of the service ecosystem, in which countless specialized services converge into one unified AI platform because of AI’s universal cognitive abilities. The AI platform becomes a total service provider, essentially “eating” all existing service categories.

With Claude

This diagram visualizes the core concept that all components must be organically connected and work together to successfully operate AI workloads.

Continuity of Data Flow

Cooperative Computing Resource Operations

Integrated Software Control Management

Infrastructure-based Stability Assurance

In AI systems, the weakest link determines overall performance. For example, no matter how powerful the GPU is, the system cannot reach its full potential if memory bandwidth is insufficient or cooling is inadequate. Balanced design and integrated management of all components are therefore crucial for AI workload success.

The diagram emphasizes that AI infrastructure is not just about having powerful individual components, but about creating a holistically optimized ecosystem where every element supports and enhances the others.
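The weakest-link point can be illustrated with a small Python sketch. It is a simplified model, not a measurement method: the component names and throughput figures are hypothetical, and it assumes each component can be summarized by the maximum rate it could sustain on its own.

```python
def effective_throughput(capacities: dict[str, float]) -> tuple[str, float]:
    """Return the bottleneck component and the rate the whole system can sustain.

    Simplifying assumption: the system runs no faster than its slowest component.
    """
    bottleneck = min(capacities, key=capacities.get)
    return bottleneck, capacities[bottleneck]


# Hypothetical figures: samples per second each component could sustain alone.
system = {"gpu_compute": 1000.0, "memory_bandwidth": 400.0, "cooling": 650.0}
before = effective_throughput(system)

# Doubling GPU compute alone does not help while memory bandwidth is the limit.
upgraded = dict(system, gpu_compute=2000.0)
after = effective_throughput(upgraded)

print(before)
print(after)
```

In this model, only raising the capacity of the limiting component changes the result, which is the case for balanced design over a single powerful part.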

With Claude

This image is an architecture diagram titled “ALL to LLM” that illustrates the digital transformation of industrial facilities and AI-based operational management systems.

Left Section (Industrial Equipment):

Central Processing:

Right Section (AI-based Operations):

Overall, this diagram represents the transformation from traditional manual or semi-automated facility operations to a fully digitized system. All operational data is converted to bit-level information and handled in an integrated operational system, where LLM-powered tools provide intelligent facility management and predictive maintenance.

With Claude

Left Side – Fundamental Operating Characteristics:

Center – Digital Transformation:

Right Side – Implementation Directions (Updated Interpretation):

This framework shows how digital technology can resolve the traditional trade-off between stability and efficiency. The approach is to establish safe operations as the foundation, use digitalization as the implementation method, and ultimately reduce both operating costs and energy costs.

The diagram shows a strategic pathway where digital transformation enables organizations to move beyond the traditional stability-efficiency dilemma toward a comprehensive cost optimization model.