From Claude with some prompting

This post focuses on the importance of a digital twin-based floor operation optimization system for high-performance computing rooms in AI data centers, with an emphasis on stability and energy efficiency. I'll highlight the key elements marked with exclamation points in the diagram.

Purpose of the system:

- Enhance stability

- Improve energy efficiency

- Optimize floor operations

Key elements (marked with exclamation points):

- Interface:

    - Efficient data collection interfaces using IPMI, Redfish, and NVIDIA DCGM

    - Real-time monitoring of high-performance servers and GPUs to ensure stability

- Intelligent/Smart PDU:

    - Precise power usage measurement contributing to energy efficiency

    - Early detection of anomalies to improve stability

- High resolution (under 1 second):

    - Sub-second data collection enabling real-time response

    - Immediate detection of rapid changes or anomalies to enhance stability
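To make the PDU and sub-second sampling points more concrete, here is a minimal Python sketch of one way early anomaly detection could work: each new power reading is compared against a rolling window of recent readings using a z-score. The class name, window size, and threshold are assumptions for illustration only, and collecting the readings themselves (from the PDU or the interfaces listed above) is left out. At 10 Hz, a 20-sample window covers two seconds of history.

```python
from collections import deque
from math import sqrt


class RollingZScoreDetector:
    """Flag a reading that deviates sharply from a rolling window of recent readings.

    Hypothetical helper: the window size and threshold are illustrative, not tuned values.
    """

    def __init__(self, window: int = 20, threshold: float = 4.0) -> None:
        self.samples = deque(maxlen=window)  # the oldest reading drops out automatically
        self.threshold = threshold

    def update(self, value: float) -> bool:
        """Add one reading; return True if it is anomalous relative to the window."""
        anomalous = False
        if len(self.samples) == self.samples.maxlen:
            mean = sum(self.samples) / len(self.samples)
            std = sqrt(sum((x - mean) ** 2 for x in self.samples) / len(self.samples))
            # A perfectly flat window has std == 0; skip the test instead of dividing by zero.
            if std > 0 and abs(value - mean) / std > self.threshold:
                anomalous = True
        # In this simple version every reading, anomalous or not, enters the window.
        self.samples.append(value)
        return anomalous


if __name__ == "__main__":
    # Synthetic PDU readings in watts at 10 Hz: a steady load followed by a sudden surge.
    readings = [8000.0, 8010.0, 7995.0, 8005.0, 8002.0] * 4 + [9500.0]
    detector = RollingZScoreDetector(window=20, threshold=4.0)
    for i, watts in enumerate(readings):
        if detector.update(watts):
            print(f"sample {i}: {watts:.0f} W flagged as anomalous")
    # prints: sample 20: 9500 W flagged as anomalous
```

A z-score against a short window reacts quickly to sudden jumps, which is where sub-second sampling pays off; slower drifts are better caught by trend analysis.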

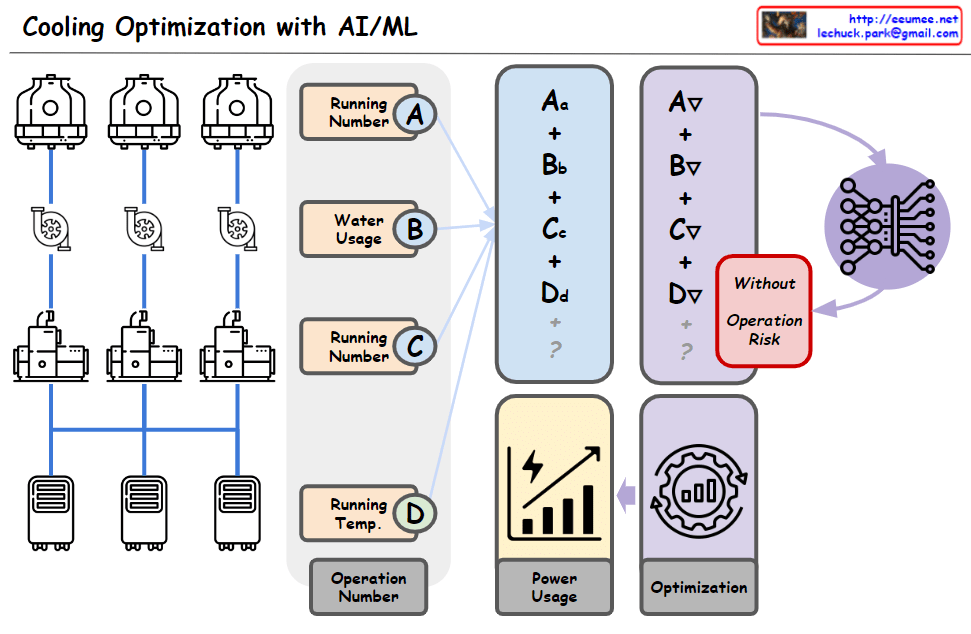

- Analysis with AI:

    - AI-based analysis of collected data to derive optimization strategies

    - Used for predictive maintenance and energy usage optimization

- Computing Room Digital Twin:

    - Virtual replica of the physical computing room for simulation and optimization

    - Scenario testing under various conditions to improve stability and efficiency
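As an illustration of scenario testing, the following Python sketch treats the room as a single lumped thermal mass and asks how long it takes to reach a temperature limit when only part of the cooling capacity is available, for example after a cooling-unit failure. This is a hypothetical, heavily simplified model, not the digital twin described in this post; every class name, parameter, and number in it is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RoomModel:
    """Hypothetical single-node (lumped) thermal model of a computing room."""

    heat_capacity_j_per_k: float  # effective thermal mass of room air plus equipment
    it_load_w: float              # heat dissipated by servers and GPUs
    cooling_capacity_w: float     # heat the cooling units can remove when all are running
    setpoint_c: float             # target room temperature
    control_gain_w_per_k: float   # extra cooling requested per kelvin above setpoint


def time_to_limit(
    room: RoomModel,
    start_temp_c: float,
    cooling_fraction: float,
    limit_c: float,
    max_s: float,
    dt_s: float = 1.0,
) -> Optional[float]:
    """Integrate room temperature with explicit Euler steps.

    cooling_fraction models the scenario, e.g. 0.5 if half the cooling units fail.
    Returns the seconds until limit_c is reached, or None if it is not reached within max_s.
    """
    temp = start_temp_c
    available_w = room.cooling_capacity_w * cooling_fraction
    for step in range(1, int(max_s / dt_s) + 1):
        # Proportional control: remove the IT load plus a correction for any excess heat,
        # clamped between zero and the cooling capacity that is still available.
        demand_w = room.it_load_w + room.control_gain_w_per_k * (temp - room.setpoint_c)
        removed_w = min(max(demand_w, 0.0), available_w)
        temp += (room.it_load_w - removed_w) / room.heat_capacity_j_per_k * dt_s
        if temp >= limit_c:
            return step * dt_s
    return None


if __name__ == "__main__":
    # Illustrative numbers only: 500 kW of IT load and 600 kW of installed cooling.
    room = RoomModel(
        heat_capacity_j_per_k=20e6,
        it_load_w=500e3,
        cooling_capacity_w=600e3,
        setpoint_c=24.0,
        control_gain_w_per_k=50e3,
    )
    for fraction in (1.0, 0.75, 0.5):
        t = time_to_limit(room, 24.0, fraction, limit_c=32.0, max_s=3600)
        outcome = "stays below 32 C for 1 h" if t is None else f"reaches 32 C after ~{t / 60:.1f} min"
        print(f"cooling at {fraction:.0%}: {outcome}")
```

With these made-up numbers, losing half the cooling leaves a 200 kW deficit, so the room warms by about 0.01 K per second and crosses 32 C in roughly 13 minutes. A real digital twin would replace the single temperature node with a zoned or CFD airflow model calibrated against sensor data.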

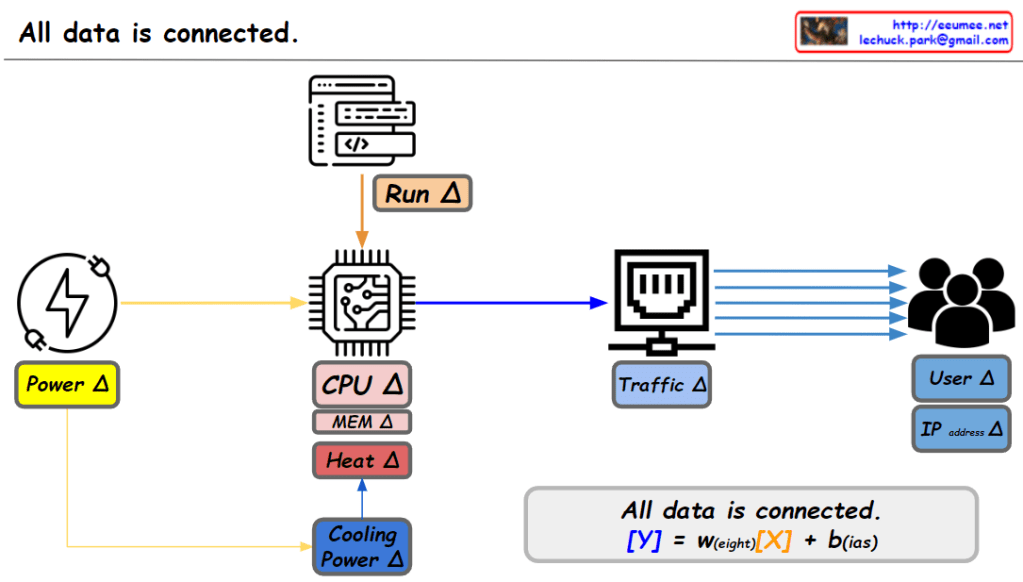

The system collects and analyzes data from high-power servers, power distribution units (PDUs), cooling facilities, and environmental sensors, and uses it to optimize the operation of AI data center computing rooms, enhance stability, and improve energy efficiency.
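One standard way to quantify the energy-efficiency side is Power Usage Effectiveness (PUE), defined by The Green Grid and standardized in ISO/IEC 30134-2 as total facility energy divided by IT equipment energy. The sketch below shows how PDU readings (IT load) and cooling and other facility readings could be combined into a PUE figure over a time window. The `PowerSample` record and the split into three power categories are assumptions; this post does not say which efficiency metrics the system reports.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class PowerSample:
    """Hypothetical record combining readings taken at the same instant."""

    timestamp_s: float
    it_w: float       # IT load measured at the PDUs (servers, GPUs, network gear)
    cooling_w: float  # chillers, CRAH/CRAC units, pumps
    other_w: float    # lighting, UPS and distribution losses, etc.


def energy_j(times: Sequence[float], powers: Sequence[float]) -> float:
    """Trapezoidal integration of power (W) over time (s), giving energy in joules."""
    total = 0.0
    for i in range(1, len(times)):
        total += 0.5 * (powers[i] + powers[i - 1]) * (times[i] - times[i - 1])
    return total


def pue(samples: List[PowerSample]) -> float:
    """Power Usage Effectiveness = total facility energy / IT equipment energy."""
    times = [s.timestamp_s for s in samples]
    it_energy = energy_j(times, [s.it_w for s in samples])
    facility_energy = energy_j(times, [s.it_w + s.cooling_w + s.other_w for s in samples])
    if it_energy <= 0:
        raise ValueError("need at least two samples with positive IT power")
    return facility_energy / it_energy


if __name__ == "__main__":
    samples = [PowerSample(float(t), 1000.0, 300.0, 100.0) for t in range(3)]
    print(f"PUE = {pue(samples):.2f}")  # prints: PUE = 1.40
```

A PUE of 1.0 would mean every watt reaches the IT equipment; everything above 1.0 is overhead such as cooling, which is why high-resolution cooling and PDU data matter for efficiency work.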

By leveraging digital twin technology, the system enables not only real-time monitoring but also predictive maintenance, energy usage optimization, and proactive response to potential issues. This leads to improved stability and reduced operational costs in high-performance computing environments.
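This post does not say which AI models drive predictive maintenance. As a deliberately simple stand-in, the sketch below fits a least-squares line to recent readings (for example GPU temperature sampled once a minute) and extrapolates when the trend will cross an alarm threshold, which is the basic idea behind trend-based predictive maintenance. The function names, sample values, and threshold are hypothetical.

```python
from typing import Optional, Sequence, Tuple


def fit_line(ts: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit y = slope * t + intercept."""
    n = len(ts)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two (t, y) pairs of equal length")
    mean_t = sum(ts) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in ts)
    if sxx == 0:
        raise ValueError("timestamps must not all be equal")
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(ts, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_t


def seconds_until_threshold(
    ts: Sequence[float], ys: Sequence[float], threshold: float
) -> Optional[float]:
    """Extrapolate the fitted trend; None means the reading is not trending upward."""
    slope, intercept = fit_line(ts, ys)
    if slope <= 0:
        return None
    crossing_t = (threshold - intercept) / slope
    # 0.0 means the trend line has already reached the threshold.
    return max(0.0, crossing_t - ts[-1])


if __name__ == "__main__":
    # Hypothetical GPU temperature readings (deg C), one per minute, creeping upward.
    ts = [0.0, 60.0, 120.0, 180.0]
    ys = [70.0, 71.0, 72.0, 73.0]
    eta = seconds_until_threshold(ts, ys, threshold=85.0)
    print(f"estimated time to 85 C: {eta / 60:.0f} min")
```

A linear trend is only a baseline; learned models can capture seasonality and interactions between sensors, but comparing against a simple baseline like this one helps show whether they add value.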

Ultimately, this system serves as critical infrastructure for the efficient operation of AI data centers, energy conservation, and stable service delivery. It addresses the unique challenges of managing high-density, high-performance computing environments, maintaining optimal performance while minimizing risks and energy consumption.