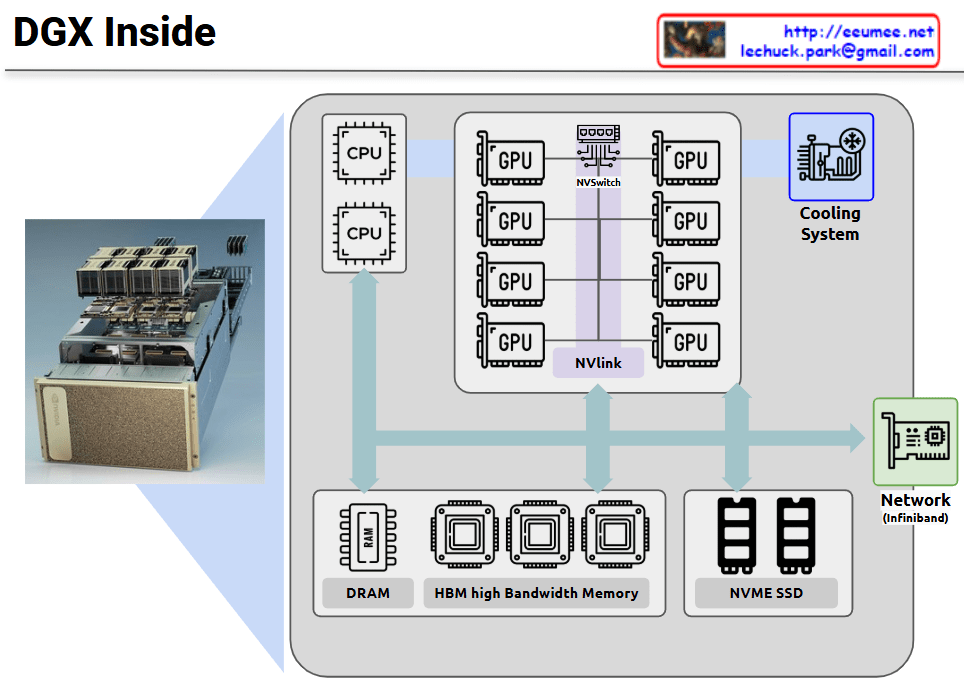

This diagram illustrates the core idea that all components must be tightly interconnected and work together to run AI workloads successfully.

Importance of Organic Interconnections

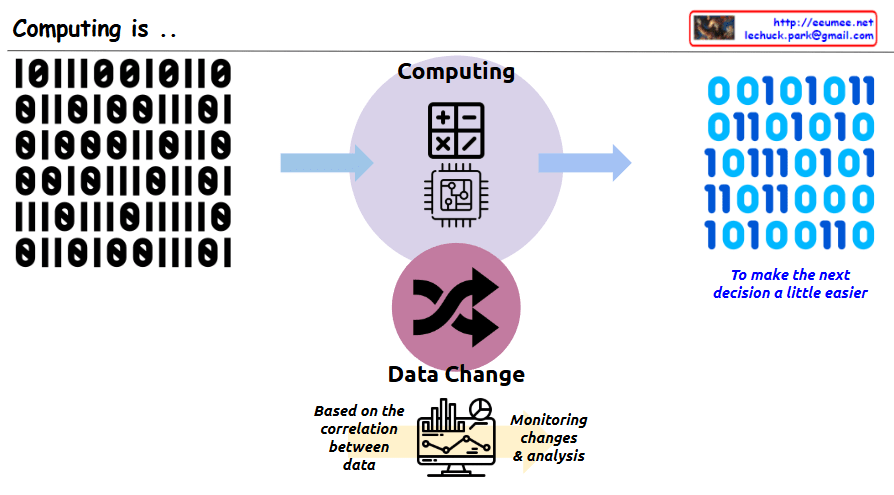

Continuity of Data Flow

- The data pipeline from Big Data → AI Model → AI Workload must operate seamlessly

- Bottlenecks at any stage directly impact overall system performance
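The bottleneck effect can be sketched numerically. The following is a minimal illustration, assuming a strictly serial pipeline in which each stage has a fixed sustained rate; the stage names and rates are hypothetical and not taken from the diagram.

```python
def pipeline_throughput(stage_rates):
    """Return (bottleneck stage, end-to-end rate) for a serial pipeline.

    In a serial pipeline, sustained throughput cannot exceed the rate
    of the slowest stage.
    """
    name = min(stage_rates, key=stage_rates.get)
    return name, stage_rates[name]


# Hypothetical sustained rates in samples per second (illustrative only).
stages = {
    "data_ingest": 12_000,
    "preprocessing": 4_500,
    "model_inference": 9_000,
}

bottleneck, rate = pipeline_throughput(stages)
print(f"bottleneck={bottleneck}, end-to-end rate={rate} samples/s")
```

Under these assumed numbers, speeding up ingest or inference would not change the end-to-end rate; only improving the slowest stage would.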

Cooperative Computing Resource Operations

- GPU/CPU computational power must be balanced with HBM memory bandwidth

- SSD I/O throughput must keep pace with the data transfer rate between memory and processors

- Performance degradation in one component limits the efficiency of the entire system
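One common way to reason about the balance between compute power and memory bandwidth is the roofline model, which bounds attainable performance by the smaller of peak compute and bandwidth multiplied by arithmetic intensity (FLOPs per byte moved). The sketch below assumes a hypothetical accelerator; the peak and bandwidth figures are illustrative, not the specification of any real device.

```python
def attainable_flops(peak_flops, mem_bandwidth, arithmetic_intensity):
    """Roofline bound: min(peak compute, bandwidth * FLOPs per byte)."""
    return min(peak_flops, mem_bandwidth * arithmetic_intensity)


# Hypothetical accelerator (assumed values, for illustration only).
PEAK = 100e12  # peak compute, FLOP/s
BW = 2e12      # HBM bandwidth, bytes/s
ridge = PEAK / BW  # intensity at which the limit shifts from memory to compute

for intensity in (4, 50, 200):
    bound = attainable_flops(PEAK, BW, intensity)
    limiter = "memory" if intensity < ridge else "compute"
    print(f"intensity={intensity:>3} FLOPs/byte: "
          f"{bound / 1e12:.0f} TFLOP/s ({limiter}-bound)")
```

A workload below the ridge point is limited by memory bandwidth, so extra compute capacity goes unused until the memory side is improved.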

Integrated Software Control Management

- Load balancing, integration, and synchronization coordinate hardware resources so that they are used efficiently

- Workload distribution and resource allocation are optimized in real time
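As a rough illustration of workload distribution, the sketch below uses a simple greedy heuristic: each job goes to the worker with the smallest current load, with the largest jobs placed first. This is a static, simplified stand-in for a real scheduler, and the job names and costs are hypothetical.

```python
import heapq


def assign_least_loaded(job_costs, num_workers):
    """Greedily assign each job to the currently least-loaded worker."""
    # Min-heap of (current load, worker id).
    heap = [(0.0, w) for w in range(num_workers)]
    heapq.heapify(heap)
    assignment = {w: [] for w in range(num_workers)}

    # Placing the largest jobs first tends to give a more even split.
    ordered = sorted(job_costs.items(), key=lambda kv: kv[1], reverse=True)
    for job, cost in ordered:
        load, worker = heapq.heappop(heap)
        assignment[worker].append(job)
        heapq.heappush(heap, (load + cost, worker))

    loads = {w: sum(job_costs[j] for j in jobs)
             for w, jobs in assignment.items()}
    return assignment, loads


# Hypothetical job costs in arbitrary time units.
jobs = {"train_a": 5, "train_b": 3, "eval_a": 2, "eval_b": 2}
assignment, loads = assign_least_loaded(jobs, num_workers=2)
print(assignment)
print(loads)
```

A production scheduler would also account for job arrival over time, memory limits, and data locality, none of which this sketch models.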

Infrastructure-based Stability Assurance

- Stable power supply ensures continuous operation of all computing resources

- Cooling systems keep high-performance hardware within its thermal limits, preventing performance loss from thermal throttling

- Facility control maintains consistency of the overall operating environment

Key Insight

In AI systems, the weakest link determines overall performance. For example, no matter how powerful the GPU is, the system cannot reach its full potential if memory bandwidth is insufficient or cooling is inadequate. Balanced design and integrated management of all components are therefore crucial to AI workload success.

The diagram emphasizes that AI infrastructure is not just about having powerful individual components, but about creating a holistically optimized ecosystem where every element supports and enhances the others.

With Claude