The Computing for the Fair Human Life.

From Claude with some prompting

This image presents a concept diagram titled “One Point”. It illustrates the process from the smallest unit in the universe to human data collection.

Key elements include:

At the bottom, there’s an infinity symbol with the phrase “not much different (infinite by the view of micro & macro)”. This suggests little difference between microscopic and macroscopic perspectives.

From Claude with some prompting

The image comprehensively illustrates the structure and major developments of the UNIX operating system, first developed in 1969. The key components and features are as follows:

This diagram traces UNIX from its basic structure through the significant technological advances that followed. By combining early design elements with functionality added later, it gives an overview of UNIX’s core concepts and features and makes the system’s progression easy to follow.

From Claude with some prompting

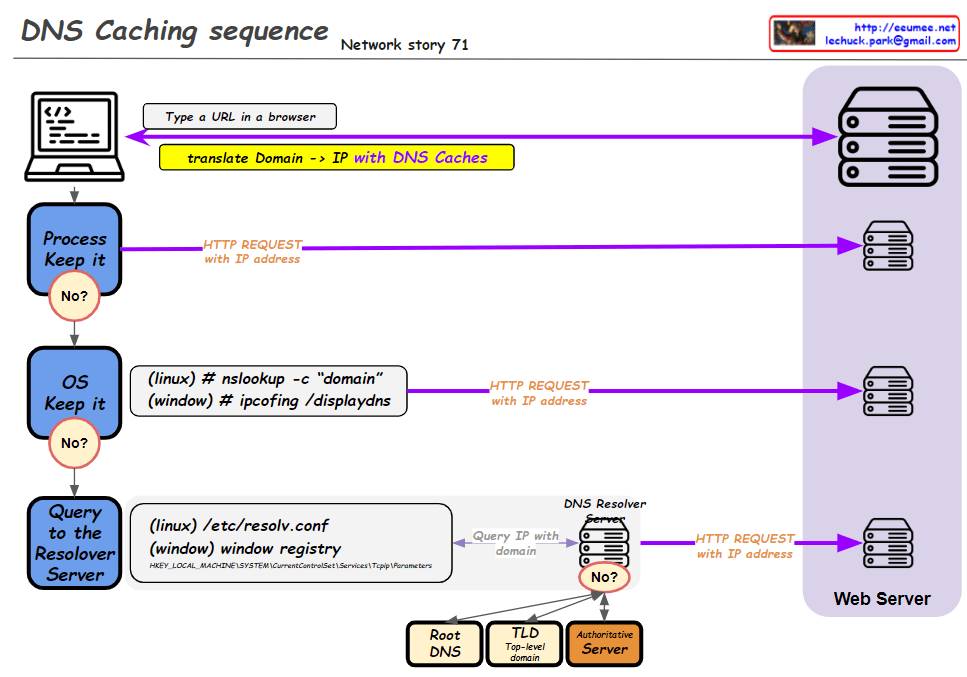

This improved diagram illustrates the DNS caching sequence more comprehensively. Here’s a breakdown of the process:

This diagram effectively shows the hierarchical nature of DNS resolution and the fallback mechanisms at each level. It demonstrates how the system progressively moves from local caches to broader, more authoritative sources when resolving domain names to IP addresses. The addition of the DNS hierarchy (Root, TLD, Authoritative) provides a more complete picture of the entire resolution process when local caches and the initial resolver query don’t yield results.
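The fallback order described above can be sketched in a few lines of Python. This is only an illustrative model, not how any real resolver is implemented: the cache names, the dictionaries standing in for servers, and the functions `resolve` and `walk_hierarchy` are all assumptions made for the example, and no network traffic is involved.

```python
from typing import Optional, Tuple

# Hypothetical in-memory stand-ins for the local caches (checked in order).
LOCAL_CACHES = [
    ("browser cache", {}),
    ("os cache", {"intranet.example": "10.0.0.5"}),
]

# Hypothetical cache held by the recursive resolver.
RESOLVER_CACHE = {"cached.example": "192.0.2.10"}

# Toy hierarchy: the root points to a TLD server, the TLD server points to
# an authoritative server, and the authoritative server holds the address.
ROOT = {"example": "tld-example"}
TLD = {"tld-example": {"www.example": "auth-www"}}
AUTHORITATIVE = {"auth-www": {"www.example": "192.0.2.20"}}


def walk_hierarchy(name: str) -> Optional[str]:
    """Follow Root -> TLD -> Authoritative for a name, or return None."""
    tld_label = name.rsplit(".", 1)[-1]
    tld_server = ROOT.get(tld_label)
    if tld_server is None:
        return None
    auth_server = TLD.get(tld_server, {}).get(name)
    if auth_server is None:
        return None
    return AUTHORITATIVE.get(auth_server, {}).get(name)


def resolve(name: str) -> Tuple[Optional[str], str]:
    """Return (address, source), trying the nearest cache first."""
    for label, cache in LOCAL_CACHES:
        if name in cache:
            return cache[name], label
    if name in RESOLVER_CACHE:
        return RESOLVER_CACHE[name], "resolver cache"
    address = walk_hierarchy(name)
    if address is not None:
        # Remember the answer so the next lookup stops at the resolver.
        RESOLVER_CACHE[name] = address
        return address, "authoritative"
    return None, "not found"
```

Calling `resolve("www.example")` twice shows the caching effect in this toy model: the first call walks the full hierarchy, and the second is answered from the resolver cache. A real resolver would also honor record TTLs, which this sketch leaves out.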

From Claude with some prompting

This image illustrates a more comprehensive structure of a new operating system integrated with AI. Here’s a summary of the key changes and features:

This structure offers several advantages:

This new OS architecture integrates AI as a core component, seamlessly combining traditional OS functions with advanced AI capabilities to present a next-generation computing environment.

From Claude with some prompting

This image, titled “AI DC Key”, illustrates the key components of an AI data center. Here’s an interpretation of the diagram:

This diagram visualizes the essential components of a modern AI data center and the key considerations for each. It shows how high-performance computing, efficient power management, advanced cooling technology, and optimized operations work together to process and analyze large-scale data, and it highlights the critical technologies or approaches for each element.