From Claude with some prompting

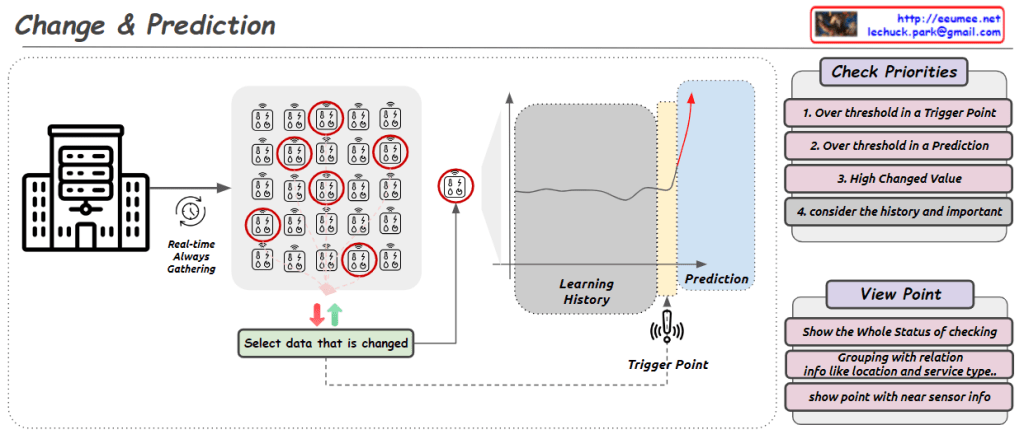

This image illustrates a process called “Change & Prediction”, which appears to be a system for monitoring and analyzing real-time data streams. Its key components are:

- Real-time data collection from a source, most likely sensors (represented by the building icon).

- Selection of the data points whose values have changed significantly.

- A “Learning History” component that tracks and learns from the incoming data over time.

- A “Trigger Point” that detects when data values cross certain thresholds.

- A “Prediction” component that likely forecasts future values based on the learned patterns.
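The flow from data collection through change selection, the trigger point, and prediction can be sketched in Python. This is a minimal illustration under stated assumptions, not the system in the image: `SensorTracker`, `trigger`, and `min_change` are hypothetical names, a fixed-size rolling window stands in for “Learning History”, and the forecast is a naive linear extrapolation rather than a learned model.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SensorTracker:
    """Hypothetical per-sensor tracker: history, change selection, trigger, prediction."""
    trigger: float      # hypothetical threshold for the "Trigger Point"
    min_change: float   # hypothetical cutoff for a "significant" change
    # Rolling window of recent readings, a simple stand-in for "Learning History".
    history: deque = field(default_factory=lambda: deque(maxlen=100))

    def observe(self, value: float) -> dict:
        """Record a new reading and report change, trigger, and prediction status."""
        previous = self.history[-1] if self.history else None
        self.history.append(value)
        change = 0.0 if previous is None else value - previous
        predicted = self.predict()
        return {
            "value": value,
            "change": change,
            "significant": abs(change) >= self.min_change,  # change selection
            "triggered": value >= self.trigger,              # current value crosses the threshold
            "predicted": predicted,
            # Forecast crosses the threshold even if the current value does not.
            "predicted_triggered": predicted is not None and predicted >= self.trigger,
        }

    def predict(self, steps_ahead: int = 1) -> Optional[float]:
        """Naive forecast: extend the average step across the stored history linearly."""
        if len(self.history) < 2:
            return None
        avg_step = (self.history[-1] - self.history[0]) / (len(self.history) - 1)
        return self.history[-1] + steps_ahead * avg_step


tracker = SensorTracker(trigger=30.0, min_change=2.0)
for reading in [20.0, 21.0, 25.0, 28.0]:
    status = tracker.observe(reading)
print(status)
```

Feeding the readings 20, 21, 25, 28 with `trigger=30.0` marks the last step as a significant change whose forecast (about 30.7) crosses the trigger even though the current value does not, which is the kind of case a prediction stage is meant to surface early.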

The “Check Priorities” box lists four criteria for determining which data points deserve attention: values exceeding a trigger threshold, predicted values crossing a threshold, large change values, and historical context.
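The four “Check Priorities” criteria can be combined into a simple score for ranking data points. The figure does not say how the criteria are weighted, so this sketch assumes one point per criterion met; `DataPoint`, `priority`, `rank`, `high_change`, and the use of a past-alert count as “historical context” are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DataPoint:
    sensor_id: str
    value: float
    change: float
    predicted: Optional[float]
    past_alerts: int  # hypothetical stand-in for "historical context"


def priority(p: DataPoint, trigger: float, high_change: float) -> int:
    """Hypothetical score: one point per 'Check Priorities' criterion met (equal weights assumed)."""
    score = 0
    if p.value >= trigger:
        score += 1  # 1. value already exceeds the trigger threshold
    if p.predicted is not None and p.predicted >= trigger:
        score += 1  # 2. forecast is expected to cross the threshold
    if abs(p.change) >= high_change:
        score += 1  # 3. large change value
    if p.past_alerts > 0:
        score += 1  # 4. history shows this sensor has alerted before
    return score


def rank(points: List[DataPoint], trigger: float, high_change: float) -> List[DataPoint]:
    """Order data points from most to least deserving of attention (ties keep input order)."""
    return sorted(points, key=lambda p: priority(p, trigger, high_change), reverse=True)


points = [
    DataPoint("a", 10.0, 0.5, 11.0, 0),
    DataPoint("b", 35.0, 5.0, 40.0, 2),
    DataPoint("c", 25.0, 4.0, 31.0, 0),
]
print([p.sensor_id for p in rank(points, trigger=30.0, high_change=3.0)])
```

A weighted sum, or a rule that any current threshold breach outranks everything else, would be equally plausible readings of the figure; equal weights simply keep the sketch small.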

The “View Point” section suggests options for visualizing the status, grouping related data points (e.g., by location or service type), and showing detailed sensor information.

Overall, this seems to depict an automated monitoring and predictive analytics system for identifying and responding to important changes in real-time data streams from various sources or sensors.