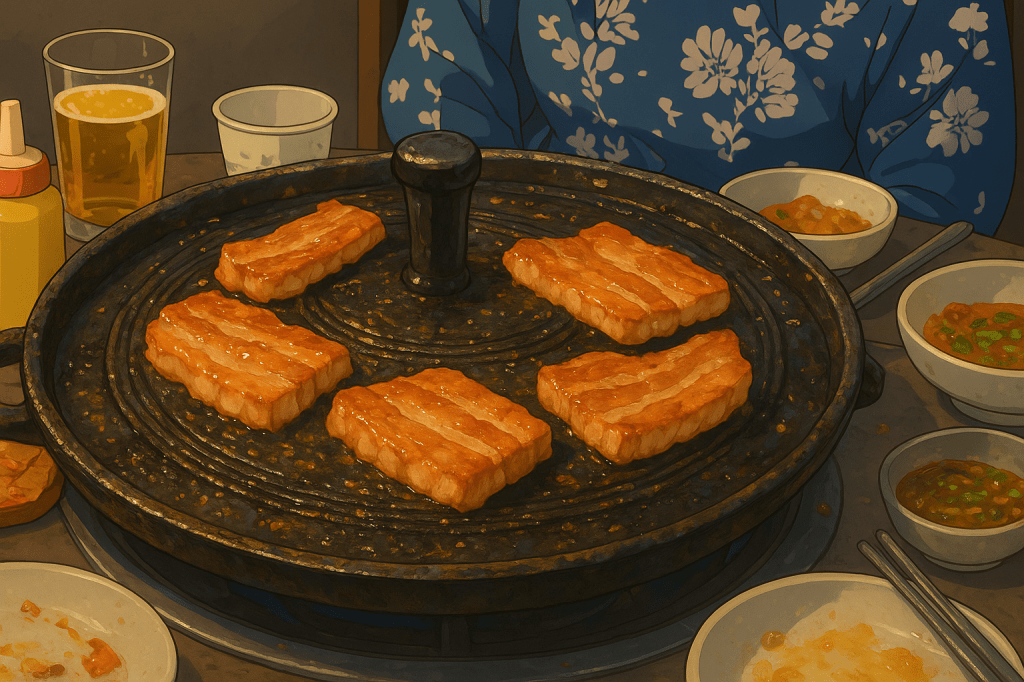

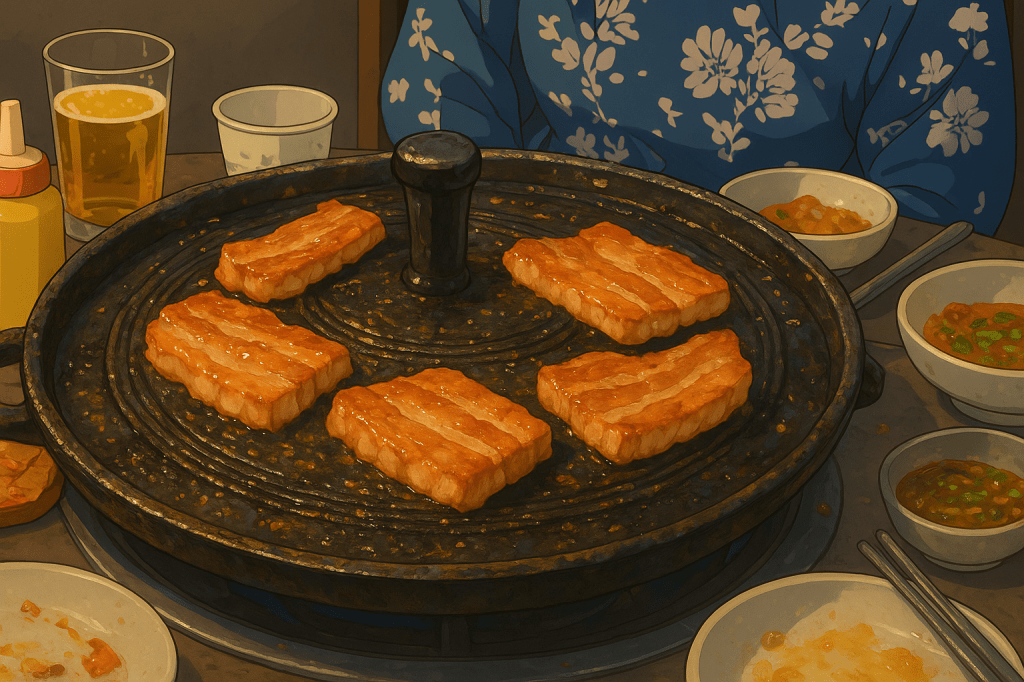

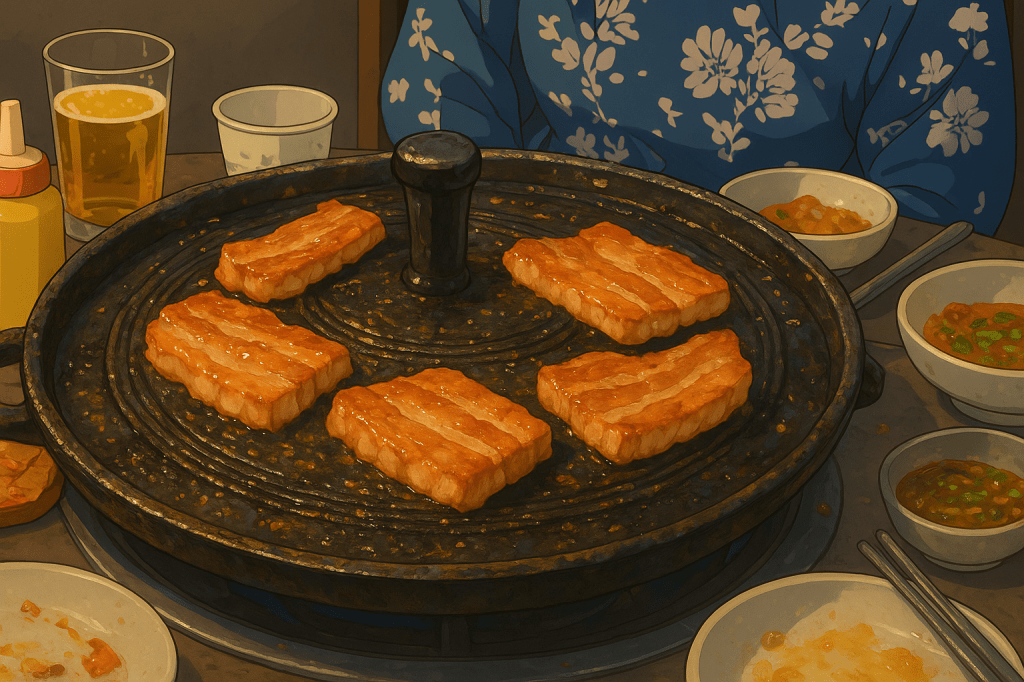

Samgyeopsal is always

The Computing for the Fair Human Life.

This diagram presents a framework that defines the essence of LLMs as “Massive Simple Parallel Computing” and outlines the resulting issues and the challenges that need to be addressed.

Massive: Enormous scale with billions of parameters

Simple: Fundamentally simple computational operations (matrix multiplications, etc.)

Parallel: Architecture capable of simultaneous parallel processing

Computing: All of this implemented through computational processes
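To make the four terms concrete, here is a minimal sketch in plain Python. The function names (`matvec`, `count_parameters`) and the layer sizes are assumptions chosen for illustration; they do not describe any real model.

```python
# Illustrative sketch only: a toy "layer" built from plain Python lists.
# The sizes below are made up; real LLMs use billions of weights.

def matvec(weights, x):
    """Multiply a matrix (list of rows) by a vector.

    Each output element is an independent dot product, so all rows
    could in principle be computed at the same time ("Parallel").
    """
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def count_parameters(layer_shapes):
    """Total number of weights across layers given (rows, cols) shapes."""
    return sum(rows * cols for rows, cols in layer_shapes)


# "Simple": the core operation is just multiply-and-add.
weights = [[1.0, 2.0], [0.5, -1.0], [0.0, 3.0]]
x = [2.0, 1.0]
y = matvec(weights, x)  # [4.0, 0.0, 3.0]

# "Massive": parameter counts grow with the product of layer dimensions.
hypothetical_shapes = [(4096, 4096)] * 4  # assumed sizes, not a real model
total = count_parameters(hypothetical_shapes)  # 67108864
```

Even four square layers of this assumed size already hold about 67 million weights, which hints at how quickly the "Massive" part dominates cost.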

Big Issues:

Very Required:

How can we solve all these requirements?

In other words, this framework poses the fundamental question about specific solutions and approaches to overcome the problems inherent in the essential characteristics of current LLMs. This represents a compressed framework showing the core challenges for next-generation AI technology development.

The diagram effectively illustrates how the defining characteristics of LLMs directly lead to significant challenges, which in turn demand specific capabilities, ultimately raising the critical question of implementation methodology.

With Claude

This diagram illustrates the evolution of mainstream data types throughout computing history, showing how the complexity and volume of processed data have grown exponentially across different eras.

Evolution of Mainstream Data by Computing Era:

The question marks on the right symbolize the fundamental uncertainty surrounding this final stage. Whether everything humans perceive – emotions, consciousness, intuition, creativity – can truly be fully converted into computational data remains an open question due to technical limitations, ethical concerns, and the inherent nature of human cognition.

Summary: This represents a data-centric view of computing evolution, progressing from simple numerical processing to potentially encompassing all aspects of human perception and experience, though the ultimate realization of this vision remains uncertain.

With Claude

Visual Analysis: RNN vs Transformer

The diagram succeeds by using:

The grid vs chain visualization immediately conveys why Transformers enable faster, more scalable processing than RNNs.

This diagram effectively illustrates the fundamental shift from sequential to parallel processing in neural architecture. The visual contrast between the RNN’s linear chain and the Transformer’s interconnected grid clearly demonstrates why Transformers revolutionized AI: they enable massive parallelization and handle long-range dependencies better.
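The chain versus grid contrast can be sketched with scalar toy models. This is a simplification under stated assumptions: the weights `w_h` and `w_x` are arbitrary, and the attention function omits the learned query, key, and value projections that a real Transformer uses.

```python
import math


def rnn_states(xs, w_h=0.5, w_x=1.0):
    """Toy scalar RNN: h_t = tanh(w_h * h_{t-1} + w_x * x_t).

    Step t cannot start until step t-1 has finished, so the loop is
    inherently sequential (the "chain").
    """
    h = 0.0
    states = []
    for x in xs:
        h = math.tanh(w_h * h + w_x * x)
        states.append(h)
    return states


def attention_row(i, xs):
    """Toy scalar self-attention output for position i.

    It reads only the inputs xs, never another position's output, so
    every row can be computed independently (the "grid").
    """
    scores = [xs[i] * xj for xj in xs]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return sum(e / total * xj for e, xj in zip(exps, xs))


xs = [0.1, -0.4, 0.7, 0.2]
chain = rnn_states(xs)

# Computing the rows in reverse order gives the same result as forward
# order, which is the property that allows parallel execution.
forward = [attention_row(i, xs) for i in range(len(xs))]
backward = [attention_row(i, xs) for i in reversed(range(len(xs)))][::-1]
```

The RNN loop has no such freedom: reordering its steps would change every hidden state after the first.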

With Claude

Before: CPU [Memory] ←PCIe→ [Memory] GPU (Separated)

After: CPU ←CXL→ GPU → Shared Memory Pool (Unified)

| Metric | PCIe 4.0 | CXL 2.0 | Improvement |
|---|---|---|---|
| Bandwidth | 64 GB/s | 128 GB/s | 2x |
| Latency | 1-2 μs | 200-400 ns | 5-10x |
| Memory Copy | Required | Eliminated | Complete Removal |
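The improvement column can be checked against the table's own numbers. The short calculation below uses only the values listed above. Note that the 5-10x latency figure depends on which endpoints of the two ranges are paired; the least favorable pairing gives 2.5x.

```python
# Values copied from the table above; units are GB/s and nanoseconds.
pcie_bandwidth, cxl_bandwidth = 64, 128
pcie_latency_ns = (1000, 2000)  # 1-2 microseconds
cxl_latency_ns = (200, 400)     # 200-400 nanoseconds

bandwidth_gain = cxl_bandwidth / pcie_bandwidth  # 2.0

# Pair every PCIe endpoint with every CXL endpoint.
latency_gains = sorted(p / c for p in pcie_latency_ns for c in cxl_latency_ns)
# [2.5, 5.0, 5.0, 10.0]
```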

AI/ML: 90% reduction in training data loading time, larger model processing capability

HPC: Real-time large dataset exchange, memory constraint elimination

Cloud: Maximized server resource efficiency through memory pooling

The diagram emphasizes CXL's two key technical improvements, zero-copy sharing and hardware-based cache coherency, as the changes that address the traditional PCIe bottlenecks.
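As a loose analogy for copy versus zero-copy access, the sketch below uses ordinary Python buffers. It is not CXL code and calls no device API. It only shows the difference between a consumer that holds its own copy and one that views the producer's memory directly.

```python
# Analogy only: this is ordinary process memory, not CXL or any device API.

buffer = bytearray(b"training-batch")

# "Before": the consumer gets its own copy, like staging data over PCIe.
copied = bytes(buffer)

# "After": the consumer gets a view onto the same memory, with no copy.
shared = memoryview(buffer)

# The producer updates the data in place (same length, so no resize).
buffer[0:8] = b"TRAINING"

copy_is_stale = copied[:8] == b"training"            # True
view_is_current = bytes(shared[:8]) == b"TRAINING"   # True
```

The copy goes stale as soon as the producer writes, while the view sees the update without any explicit transfer. That is roughly the behavior that shared, coherent memory is meant to provide in hardware.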

With Claude

“No Change, No Operation” – This diagram illustrates the fundamental IT operations principle that operations are driven by change detection.

Change Detection → Operational Need Assessment → Appropriate Response
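The three-stage flow above can be sketched as a small pipeline over configuration snapshots. All names here (`detect_changes`, `assess`, `respond`, the `watched` keys) are hypothetical and chosen for illustration; a real system would plug in its own detection and response logic.

```python
def detect_changes(previous, current):
    """Return {key: (old, new)} for every key whose value differs."""
    keys = set(previous) | set(current)
    return {
        k: (previous.get(k), current.get(k))
        for k in keys
        if previous.get(k) != current.get(k)
    }


def assess(changes, watched=("cpu_limit", "version")):
    """Keep only the changes that need an operational response."""
    return {k: v for k, v in changes.items() if k in watched}


def respond(needed):
    """Turn assessed changes into a sorted list of action descriptions."""
    return [
        f"review {k}: {old} -> {new}"
        for k, (old, new) in sorted(needed.items())
    ]


def operate(previous, current):
    """Change Detection -> Operational Need Assessment -> Response."""
    return respond(assess(detect_changes(previous, current)))


before = {"version": "1.0", "cpu_limit": 2, "note": "a"}

# No change, no operation: identical snapshots yield no actions.
unchanged = operate(before, dict(before))  # []

# Only the watched key that changed produces an action.
after = {"version": "1.1", "cpu_limit": 2, "note": "b"}
actions = operate(before, after)  # ['review version: 1.0 -> 1.1']
```

The unwatched `note` key changes as well but triggers nothing, which reflects the assessment step filtering out changes that carry no operational need.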

“Change Determines Operations”

This diagram demonstrates the evolution from Reactive Operations to Proactive Operations, where:

The framework recognizes change as the trigger for all operational activities, embodying the contemporary IT operations paradigm where:

This represents a shift toward Change-Driven Operations Management, where the operational workload directly correlates with the rate and nature of system changes, enabling more efficient resource utilization and better service reliability.

With Claude

Human language simplifies the world into words and symbols, but its limits soon appear. Through mathematical abstraction, these simplified ideas transform into precise equations. Only then can the hidden reality of quantum mechanics—waves, probability, and uncertainty—be revealed.