From Claude with some prompting

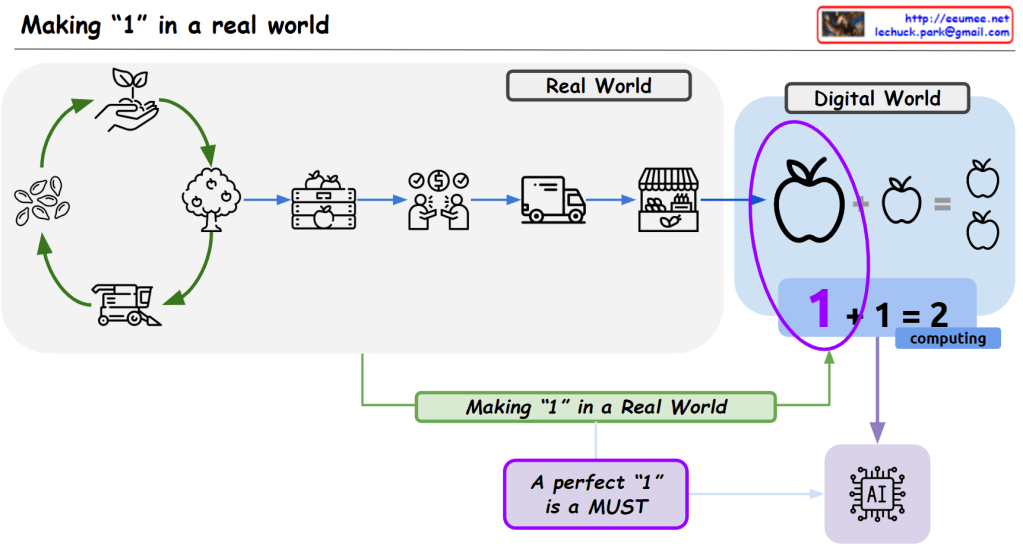

This image emphasizes how much progress in the digital world, and in artificial intelligence (AI) in particular, depends on obtaining high-quality data from the real world.

The real-world section depicts the long series of steps required to produce a “perfect 1,” that is, a single product of excellent quality (e.g., an apple): growing the trees, harvesting, transporting, and selling.

In contrast, the digital world reduces this intricate process to a simple computation (1 + 1 = 2). The image conveys, however, that securing flawless data from the real world is an important and arduous step if AI is to develop and improve.

In essence, the image is a reminder that the complex work of extracting high-quality data from the physical realm is essential to AI performance and should not be overlooked or underestimated.

The overall message is that for AI to advance, collecting pristine real-world data, with all the steps that involves, is a challenging requirement that must be prioritized.