From Claude with some prompting

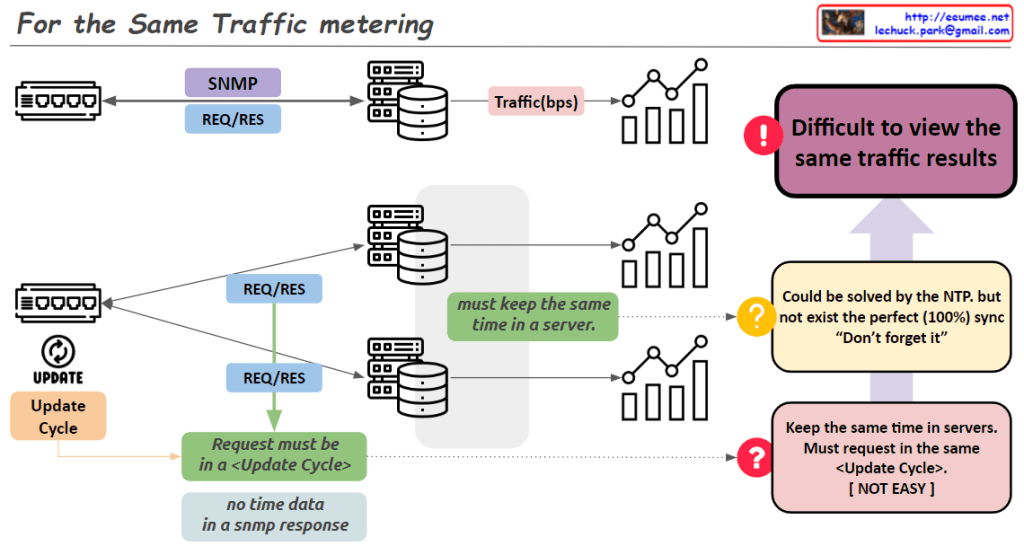

“For the Same Traffic Metering” – Key Points:

- Problem: Different collection servers using SNMP may not yield the same results for identical traffic.

- Main causes of discrepancy:

  - Network equipment refreshes its traffic counters periodically, not continuously.

  - An SNMP request returns the value from the most recent refresh, so requests must align with the equipment’s update cycle to return consistent values.

  - Synchronizing requests that precisely across multiple servers is difficult.

- Challenges for resolution:

  - Servers need accurate time synchronization.

  - All requests should occur within the same ‘Update Cycle’ of the equipment.

- Time synchronization:

  - NTP narrows the gap between server clocks, so it solves the issue only partially.

  - Perfect (100%) synchronization is not achievable in practice.

- Consequence: SNMP data collected from multiple servers may show different results for the same traffic.

- Key insight: The image shows that SNMP readings depend on when each request arrives relative to the equipment’s update cycle, which makes accurate data collection in network monitoring systems difficult.

- Implications: Network administrators and system designers must be aware of these limitations and consider them when collecting and interpreting data.
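The effect described above can be reproduced with a small simulation. This is only a sketch under stated assumptions: the 10-second update cycle, the 60-second poll interval, the traffic pattern, and all function names are made up for illustration and do not come from the image or from any particular device. No real SNMP library is used; `exposed_counter` simply stands in for what an SNMP request would return.

```python
UPDATE_CYCLE = 10   # assumed: seconds between counter refreshes on the device
POLL_INTERVAL = 60  # assumed: seconds between polls on each collection server


def true_bytes(t):
    """Cumulative bytes forwarded by second t (hypothetical traffic pattern).

    Steady 1000 B/s, plus an extra 4000 B/s burst during seconds [50, 70).
    """
    burst_seconds = max(0, min(t, 70) - 50)
    return 1000 * t + 4000 * burst_seconds


def exposed_counter(t):
    """Counter value a poll at time t would see: the value at the last refresh."""
    last_refresh = (t // UPDATE_CYCLE) * UPDATE_CYCLE
    return true_bytes(last_refresh)


def polled_deltas(offset, polls=3):
    """Per-interval byte counts seen by a server whose polls start at `offset` seconds."""
    times = [offset + i * POLL_INTERVAL for i in range(polls)]
    readings = [exposed_counter(t) for t in times]
    return [later - earlier for earlier, later in zip(readings, readings[1:])]


server_a = polled_deltas(offset=0)    # reference server
server_b = polled_deltas(offset=3)    # 3 s late, still in the same update cycle
server_c = polled_deltas(offset=12)   # 12 s late, lands in the next update cycle

print(server_a)  # [100000, 100000]
print(server_b)  # [100000, 100000]
print(server_c)  # [140000, 60000]
```

Server B polls inside the same update cycle as server A and reports identical figures. Server C lands one cycle later and splits the same burst differently across its two intervals, even though all three servers measured the same traffic and their totals agree.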

This summary highlights the complexities involved in ensuring consistent traffic metering across multiple collection points in a network environment.
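To put a rough number on how far time synchronization helps, the sketch below estimates how often two servers read different counter refreshes. It assumes the first server's poll lands at a uniformly random point in the equipment's update cycle, which is a modelling assumption and not something stated in the image; the function name and the example values are hypothetical.

```python
def straddle_probability(clock_offset_s, update_cycle_s):
    """Chance that two polls `clock_offset_s` apart see different counter refreshes.

    Assumes the earlier poll falls at a uniformly random phase of the update
    cycle. The polls straddle a refresh when the earlier one lands within
    `clock_offset_s` of the end of the cycle.
    """
    if update_cycle_s <= 0:
        raise ValueError("update_cycle_s must be positive")
    return min(1.0, abs(clock_offset_s) / update_cycle_s)


# Hypothetical example: clocks 2.5 s apart, 10 s update cycle.
print(straddle_probability(2.5, 10))  # 0.25
```

Under this model a smaller clock offset lowers the chance of a mismatch but never brings it to zero unless the offset itself is zero, which matches the point that NTP solves the problem only partially.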