From Claude with some prompting

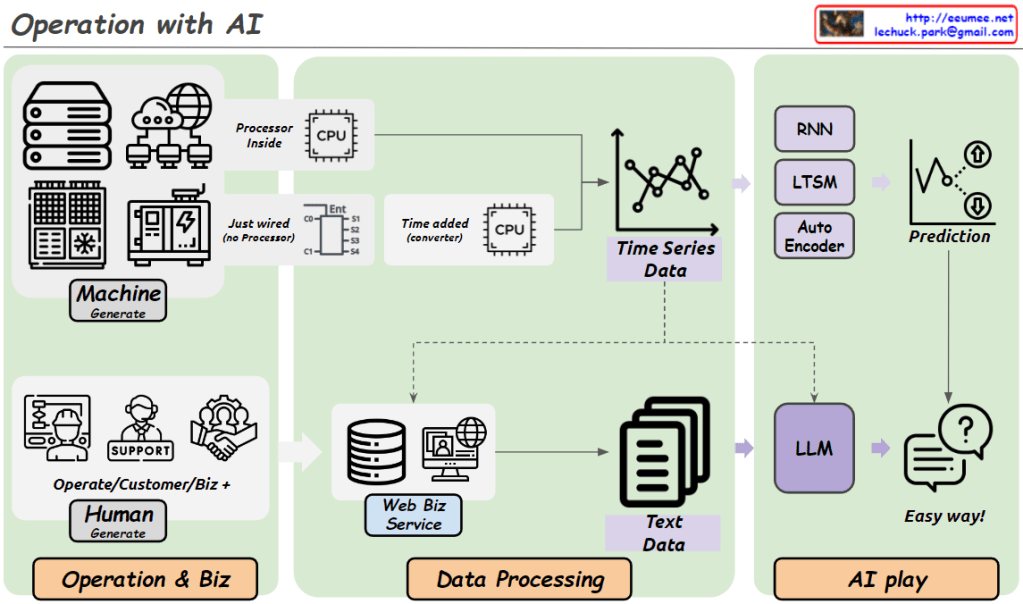

This image outlines a progressive approach to data monitoring and alert systems, starting with simple metrics and evolving to more complex AI-driven solutions. The key steps are:

- “Keeping a Temperature”: Recording system temperature readings as the baseline data.

- “Monitoring”: Continuous observation of temperature data.

- “Alerts with thresholds”: Simple threshold-based alerts.

- More complex metrics: Thresholds evaluated over 10-minute windows, change counts, averages, and derivatives (rates of change).

- “More Indicators”: Expanding to additional KPIs and metrics.

- “Machine Learning ARIMA/LSTM”: Implementing advanced predictive models.

- “Alerts with predictions”: AI-driven predictive alerts.
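To make the early stages concrete, here is a minimal Python sketch of a simple threshold alert and the windowed metrics mentioned above (average, change count, rate of change). The function names, the 30.0 limit, and the sample readings are all made up for illustration; the image does not specify any of them.

```python
from statistics import mean

def threshold_alert(value, limit=30.0):
    """Simple alert: fire when a single reading exceeds the limit (assumed value)."""
    return value > limit

def window_metrics(readings, limit=30.0):
    """Summarize one window of readings, e.g. the last 10 minutes."""
    # Count how many times the value changed between consecutive readings.
    changes = sum(1 for a, b in zip(readings, readings[1:]) if a != b)
    # Average change per step across the window (a crude derivative).
    steps = len(readings) - 1
    rate = (readings[-1] - readings[0]) / steps if steps > 0 else 0.0
    return {
        "average": mean(readings),
        "change_count": changes,
        "rate_of_change": rate,
        "over_limit_count": sum(1 for r in readings if r > limit),
    }

# Hypothetical temperature readings for one window.
readings = [27.0, 27.0, 28.5, 29.0, 31.0]
metrics = window_metrics(readings)
latest_alert = threshold_alert(readings[-1])
```

Nothing here needs a model: each metric is a few lines of arithmetic, which is the point of doing the easy steps first.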

The central message “EASY FIRST BEFORE THE AI !!” emphasizes starting with simpler methods before advancing to AI solutions.

The image also implies that the simpler metrics and indicators established early on later serve as training data for the AI models. Arrows connect every stage to the machine learning component, suggesting that the data collected throughout the process feeds the models’ learning and predictions.

This approach allows system complexity to build up gradually, and it means that by the time AI is introduced there is already a rich dataset to learn from, which should improve the models’ effectiveness and accuracy.
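As a rough illustration of how the earlier metrics could become training data, the sketch below turns a series of readings into (features, target) rows and raises an alert on a forecast rather than on the current value. It is only a sketch under stated assumptions: `naive_forecast` is a plain linear extrapolation standing in for a trained ARIMA or LSTM model, and all names, the 30.0 limit, and the sample series are hypothetical.

```python
def make_training_rows(series, window=3):
    """Build (features, target) pairs: each window of past values
    is summarized into metrics, and the next value is the target.
    Requires window >= 2."""
    rows = []
    for i in range(len(series) - window):
        past = series[i:i + window]
        features = {
            "average": sum(past) / window,
            "rate_of_change": (past[-1] - past[0]) / (window - 1),
            "last": past[-1],
        }
        rows.append((features, series[i + window]))
    return rows

def naive_forecast(features, steps=1):
    """Stand-in for a trained model (NOT ARIMA/LSTM):
    extend the recent trend linearly by `steps` readings."""
    return features["last"] + steps * features["rate_of_change"]

def predictive_alert(features, limit=30.0, steps=3):
    """Alert if the forecast, not the current value, crosses the limit."""
    return naive_forecast(features, steps) > limit

# Hypothetical readings rising by one degree per step.
series = [26.0, 27.0, 28.0, 29.0, 30.0]
rows = make_training_rows(series, window=3)
early_warning = predictive_alert(rows[0][0])
```

In this example the first window ends at 28.0, still below the limit, yet the predictive alert fires because the trend points above 30.0 within three steps. A real model would be fitted on rows like these instead of extrapolating by hand.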