AI transforms abstract, context-based terms into unified concepts, shaping new ways of human thinking.

The Computing for the Fair Human Life.

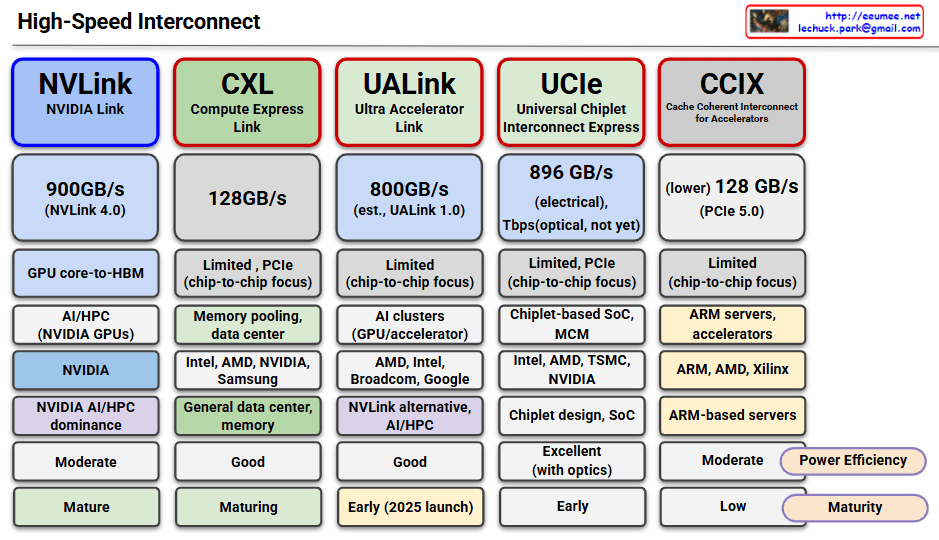

This image compares five major high-speed interconnect technologies:

Summary: All technologies are converging toward higher bandwidth, lower latency, and chip-to-chip connectivity to address the growing demands of AI/HPC workloads. The effectiveness varies by ecosystem, with specialized solutions like NVLink leading in performance while universal standards like CXL focus on broader compatibility and adoption.
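To make the bandwidth and latency trade-off concrete, here is a minimal sketch of a latency-plus-bandwidth transfer model. The two links and all their figures are hypothetical placeholders, not measured values for NVLink, CXL, or any other real interconnect.

```python
def transfer_time_s(size_bytes: float, bandwidth_bytes_per_s: float, latency_s: float) -> float:
    """Estimate one transfer as fixed latency plus size divided by bandwidth."""
    return latency_s + size_bytes / bandwidth_bytes_per_s


# Hypothetical links for illustration only (not real product figures).
links = {
    "link_a": {"bandwidth_bytes_per_s": 100e9, "latency_s": 1.0e-6},  # wide but slower to start
    "link_b": {"bandwidth_bytes_per_s": 25e9, "latency_s": 0.5e-6},   # narrow but quick to start
}


def compare(size_bytes: float) -> dict:
    """Return the estimated transfer time on each hypothetical link."""
    return {name: transfer_time_s(size_bytes, **params) for name, params in links.items()}


small = compare(1e3)  # 1 KB message: latency dominates
large = compare(1e9)  # 1 GB tensor: bandwidth dominates
```

Under these assumed numbers, the low-latency link wins for small messages and the high-bandwidth link wins for large ones, which is why both metrics matter for AI/HPC workloads.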

With Claude

This image is a conceptual diagram showing how the domain of “Difference” is continuously expanded.

Top Flow: Natural Emergence of Difference

Bottom Flow: Human Tools for Recognizing Difference

The interaction between these two drivers creates a process that continuously expands the domain of difference, shown in the center:

Emergence of Difference

↓ (Continuous Expansion)

Recognition of Difference

Differentiation & Distinction

The natural emergence of difference and the development of human recognition tools create mutual feedback that continuously expands the domain of difference.

As the handwritten note on the left indicates (“AI expands the boundary of perceivable difference”), the speed and scope of this expansion have increased dramatically, particularly in the AI era. This represents a cyclical expansion process where new differences emerging from nature are recognized through increasingly sophisticated tools, and these recognized differences in turn enable new natural changes.

With Claude

This image illustrates the evolution of computing architectures, comparing three major computing paradigms:

PIM (Processing-in-Memory), the core focus of the image, features the following characteristics:

Core Concept:

Key Advantages:

PIM is gaining attention as a next-generation computing paradigm that can significantly improve energy efficiency and performance compared to existing architectures, particularly for tasks involving massive repetitive simple operations such as AI/machine learning and big data analytics.
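One way to see where the efficiency gain could come from is a toy count of bytes crossing the memory bus for a large sum. This is a simplified sketch, not a model of any real PIM device: the bank layout, the 4-byte value size, and the function names are all assumptions made for illustration.

```python
def bytes_moved_conventional(n_values: int, bytes_per_value: int = 4) -> int:
    """Conventional path: every value travels from memory to the processor."""
    return n_values * bytes_per_value


def bytes_moved_pim(n_banks: int, bytes_per_value: int = 4) -> int:
    """Assumed PIM path: each bank reduces locally and sends one partial sum."""
    return n_banks * bytes_per_value


def pim_sum(banks: list) -> int:
    """Simulate the two-stage reduction: per-bank sums, then a final sum."""
    partials = [sum(bank) for bank in banks]  # would run inside each memory bank
    return sum(partials)                      # only the partials reach the host


banks = [[1, 2, 3], [4, 5, 6]]
total = pim_sum(banks)
conventional_bytes = bytes_moved_conventional(n_values=6)
pim_bytes = bytes_moved_pim(n_banks=len(banks))
```

In this toy case the result is identical, but the assumed PIM path moves 8 bytes instead of 24; the gap grows with the number of values stored per bank.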

With Claude

This image illustrates the purpose and outcomes of temperature prediction approaches in data centers, showing how each method serves different operational needs.

Input:

Process: Physics-based simulation through computational fluid dynamics

Results:

Input:

Process: Data-driven pattern learning and prediction

Results:

CFD: “Can we do this?” – Validates design feasibility and limits before implementation

ML: “What’s happening now?” – Monitors current operations and predicts immediate future

The diagram shows these are complementary approaches: CFD for design validation and ML for operational excellence, each serving distinct phases of data center lifecycle management.

With Claude

Top: CFD (Computational Fluid Dynamics) based approach

Bottom: ML (Machine Learning) based approach

CFD Method: Theoretical calculation through physical laws using physical space definitions, material properties, and boundary conditions as inputs

ML Method: Data-driven approach that learns from actual operational data and sensor information for prediction

The key distinction is that CFD performs simulation from predefined physical conditions, while ML learns from actual operational data collected during runtime to make predictions.
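The data-driven side can be sketched with a very small example: fit a straight line to logged sensor readings and use it to predict a temperature. The readings below are synthetic, and a single-feature linear fit stands in for whatever model a real deployment would use; both are assumptions for illustration.

```python
def fit_line(xs: list, ys: list) -> tuple:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Synthetic "sensor log" (not real data): IT load in kW and rack inlet temperature in °C.
load_kw = [10, 20, 30, 40]
temp_c = [21.0, 23.0, 25.0, 27.0]

slope, intercept = fit_line(load_kw, temp_c)


def predict_temp_c(load: float) -> float:
    """Predict inlet temperature for a given load using the learned line."""
    return slope * load + intercept


predicted = predict_temp_c(50)
```

Note that nothing here encodes airflow physics, room geometry, or boundary conditions; the model knows only what the logged data shows, which is the contrast with CFD that the text draws.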

With Claude

Computing is shifting from complex logic to massive parallel processing of simple matrix operations, especially in AI. As computation becomes faster, memory—its speed, structure, and reliability—becomes the new bottleneck and the most critical resource.
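A rough way to quantify the memory bottleneck is arithmetic intensity: floating-point operations per byte moved. The sketch below uses idealized byte counts (each operand read or written once, 4-byte elements) and a simple roofline-style bound. The peak compute and bandwidth figures are hypothetical, not those of any real chip.

```python
def matvec_intensity(n: int, bytes_per_elem: int = 4) -> float:
    """FLOPs per byte for an n x n matrix times a vector (idealized traffic)."""
    flops = 2 * n * n                              # one multiply and one add per matrix element
    bytes_moved = bytes_per_elem * (n * n + 2 * n)  # matrix + input vector + output vector
    return flops / bytes_moved


def matmul_intensity(n: int, bytes_per_elem: int = 4) -> float:
    """FLOPs per byte for an n x n by n x n matrix multiply (idealized traffic)."""
    flops = 2 * n ** 3
    bytes_moved = bytes_per_elem * 3 * n * n       # two inputs + one output, each touched once
    return flops / bytes_moved


def attainable_flops(intensity: float, peak_flops: float, mem_bandwidth: float) -> float:
    """Roofline-style bound: limited by compute or by memory traffic, whichever is lower."""
    return min(peak_flops, intensity * mem_bandwidth)


# Hypothetical machine: 1 TFLOP/s peak compute, 100 GB/s memory bandwidth.
PEAK_FLOPS = 1e12
MEM_BANDWIDTH = 100e9

matvec_bound = attainable_flops(matvec_intensity(1024), PEAK_FLOPS, MEM_BANDWIDTH)
matmul_bound = attainable_flops(matmul_intensity(1024), PEAK_FLOPS, MEM_BANDWIDTH)
```

On this assumed machine the matrix-vector product stays below 0.5 FLOP per byte and is capped by memory bandwidth at roughly 5% of peak, while the matrix-matrix product has enough data reuse to reach the compute limit. Making the compute units faster would help only the second case.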