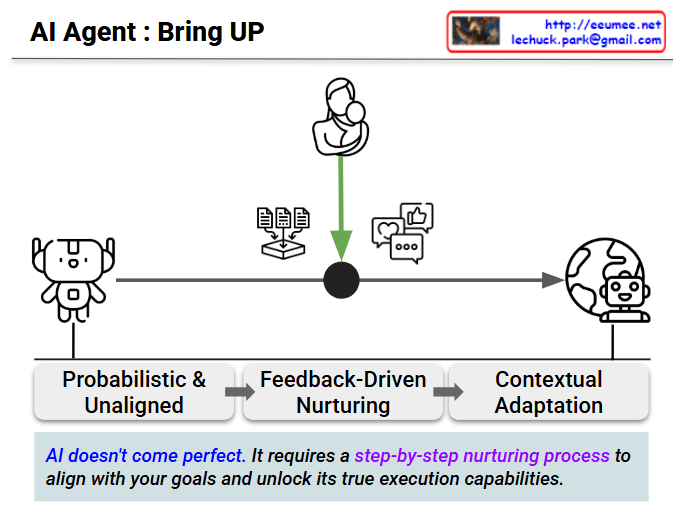

Visualizing the Evolution of an AI Agent: The “Bring UP” Process

This infographic, titled “AI Agent : Bring UP,” traces how an AI system develops from a raw, unaligned model into a functional, real-world agent. Its “nurturing” metaphor makes the point that building a reliable AI is not a plug-and-play event but a continuous process of guidance.

Here is the step-by-step breakdown of the AI’s journey:

1. The Starting Point: Probabilistic & Unaligned

- Visual: The basic, blank-faced robot on the far left.

- Meaning: This represents the raw AI (such as a base LLM). At this initial stage, the AI is a probabilistic engine: it predicts outputs from statistical likelihoods but has no built-in knowledge of the user’s intent, operational goals, or constraints. It is a powerful tool, but it is “unaligned.”

2. The Critical Phase: Feedback-Driven Nurturing

- Visual: The central nexus featuring a parent holding a child, flanked by documents (data) and social interaction icons (likes/comments).

- Meaning: This is the most crucial step: the “Human-in-the-Loop” process. The parent-child icon signals that an AI must be nurtured. Bridging the gap between a raw model and a useful agent requires two inputs: specific contextual data (the documents) and continuous, iterative human feedback (the interaction icons).

3. The Final Goal: Contextual Adaptation

- Visual: The advanced, confident robot standing in front of a globe on the right.

- Meaning: Having successfully passed through the nurturing phase, the AI is no longer just a text generator. It has adapted to complex, real-world contexts (the globe). It is now an aligned, goal-oriented “Agent” capable of understanding its environment and executing tasks accurately.
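
The three stages above can be illustrated with a small Python sketch. It is a toy under stated assumptions, not how a real agent is built: `ToyAgent`, `bring_up`, the candidate replies, the scoring weights, and the refund scenario are all hypothetical names and values invented for illustration. The "model" only reranks a fixed set of replies, and a lambda stands in for the human reviewer.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAgent:
    """Hypothetical stand-in for a model: returns its highest-scoring candidate reply."""

    prior: dict[str, float]  # Stage 1: raw statistical preference per candidate
    context: list[str] = field(default_factory=list)  # Stage 2: injected documents
    feedback: dict[str, float] = field(default_factory=dict)  # Stage 2: human ratings

    def score(self, reply: str) -> float:
        words = set(reply.lower().split())
        # Count the injected documents that share at least one word with the reply.
        overlap = sum(1 for doc in self.context if words & set(doc.lower().split()))
        return self.prior[reply] + 0.5 * overlap + self.feedback.get(reply, 0.0)

    def respond(self) -> str:
        return max(self.prior, key=self.score)

    def rate(self, reply: str, rating: float) -> None:
        self.feedback[reply] = self.feedback.get(reply, 0.0) + rating


def bring_up(agent, documents, approve, max_rounds=10):
    """Inject documents, then loop on human feedback until a reply is approved.

    Returns the number of rounds used, or None if no reply was approved.
    """
    agent.context.extend(documents)
    for round_no in range(1, max_rounds + 1):
        reply = agent.respond()
        if approve(reply):
            agent.rate(reply, 1.0)
            return round_no
        agent.rate(reply, -1.0)
    return None


agent = ToyAgent(prior={
    "Here is a generic answer": 0.6,
    "Refund issued immediately": 0.3,
    "Refund request sent for manager approval": 0.2,
})
raw_choice = agent.respond()  # Stage 1: no context, no feedback

rounds = bring_up(
    agent,
    documents=["Refund policy: refunds over $100 need manager approval"],
    approve=lambda reply: "manager approval" in reply,  # stands in for a human reviewer
)
final_choice = agent.respond()  # Stage 3: after nurturing
```

In this toy run, the raw agent prefers the generic reply, the injected document shifts it toward refund-related replies, and one round of negative feedback is enough to move it to the reply the reviewer accepts. The design choice worth noting is that context and feedback are kept as separate inputs to the score, mirroring the documents and interaction icons in the diagram.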

💡 The Key Takeaway

The most important message is captured in the footer: “AI doesn’t come perfect.”

Many people expect out-of-the-box perfection from AI, and this diagram argues against that expectation. To unlock an AI’s execution capabilities, you cannot skip the middle step: aligning the technology with your specific objectives takes step-by-step nurturing. Perfection is not the starting point; it is the result of continuous guidance.

#AIAgents #ArtificialIntelligence #AIAlignment #HumanInTheLoop #MachineLearning #TechVisualization #AIOps #LLM #TechLeadership #Innovation

With Gemini