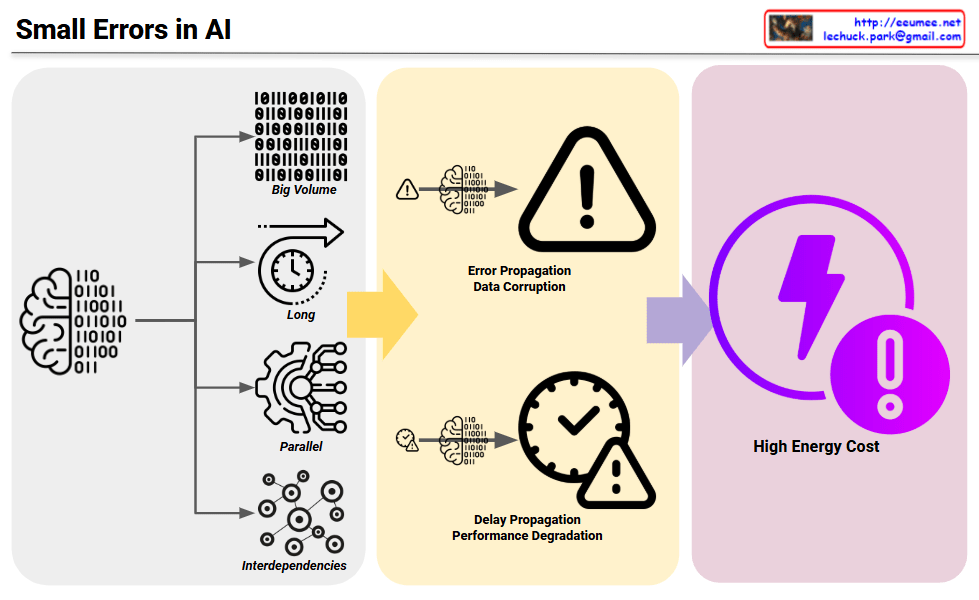

Four Core Characteristics of AI Tasks (Left)

AI systems have distinctive characteristics that make them particularly vulnerable to error amplification:

- Big Volume: Processing massive amounts of data

- Long Duration: Computations that run continuously for hours, days, or longer

- Parallel Processing: Simultaneous execution of multiple tasks

- Interdependencies: Complex interconnections where components influence each other
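Here is a rough way to see why volume and duration matter. Suppose each operation fails independently with a small probability p. Then the chance that a run of N operations contains at least one error is 1 - (1 - p)^N, which approaches certainty as N grows. The sketch below assumes independent, equally likely errors. That is a simplification, since real faults are often correlated. The function names and the example error rate are hypothetical.

```python
import math


def prob_at_least_one_error(p: float, n_ops: int) -> float:
    """Probability that at least one of n_ops operations fails.

    Assumes (hypothetically) that every operation fails independently with the
    same probability p. Computes 1 - (1 - p) ** n_ops via log1p/expm1 so the
    result stays accurate when p is tiny.
    """
    return -math.expm1(n_ops * math.log1p(-p))


def expected_errors(p: float, n_ops: int) -> float:
    """Expected number of failed operations under the same independence assumption."""
    return p * n_ops


if __name__ == "__main__":
    p = 1e-9  # hypothetical per-operation error rate
    for n_ops in (10**6, 10**9, 10**12):
        print(f"N={n_ops:.0e}: P(any error)={prob_at_least_one_error(p, n_ops):.4f}, "
              f"expected errors={expected_errors(p, n_ops):g}")
```

With p = 1e-9, a run of 10^9 operations has about a 63% chance (1 - e^-1) of containing at least one error. At 10^12 operations, an error is practically certain, and about a thousand are expected.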

Small Error Amplification (Middle)

Due to these AI characteristics, small initial errors become amplified in two critical ways:

- Error Propagation & Data Corruption: Minor errors spread throughout the system, significantly impacting overall data quality

- Delay Propagation & Performance Degradation: Small delays accumulate and cascade, severely degrading the performance of the entire system
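Both amplification paths can be sketched in a few lines.

- Error propagation follows the dependency graph: a corrupted value at one stage taints every stage reachable from it.
- Delay propagation appears when parallel workers synchronize at a barrier after each step, so each step lasts as long as its slowest worker.

The pipeline stage names, the barrier-per-step model, and the timings below are hypothetical illustrations and do not come from the diagram.

```python
from collections import deque


def tainted_stages(deps: dict[str, list[str]], corrupted: str) -> set[str]:
    """Return every stage reachable from `corrupted` (breadth-first search).

    `deps` maps each stage to the stages that consume its output, so a bad
    value at `corrupted` can reach all of them.
    """
    seen = {corrupted}
    queue = deque([corrupted])
    while queue:
        stage = queue.popleft()
        for downstream in deps.get(stage, []):
            if downstream not in seen:
                seen.add(downstream)
                queue.append(downstream)
    return seen


def synchronous_wall_time(step_times: list[list[float]]) -> float:
    """Total wall-clock time when all workers wait at a barrier after every step.

    step_times[s][w] is how long worker w needs for step s; a step ends only
    when its slowest worker finishes.
    """
    return sum(max(workers) for workers in step_times)


if __name__ == "__main__":
    # Hypothetical pipeline: each stage lists the stages that consume its output.
    pipeline = {
        "ingest": ["clean"],
        "clean": ["features"],
        "features": ["train_a", "train_b"],
        "train_a": ["evaluate"],
        "train_b": ["evaluate"],
    }
    print(sorted(tainted_stages(pipeline, "clean")))

    # 4 workers, 100 steps of 1.0 s each; then one worker runs 25% slower.
    balanced = [[1.0, 1.0, 1.0, 1.0] for _ in range(100)]
    straggler = [[1.0, 1.0, 1.0, 1.25] for _ in range(100)]
    print(synchronous_wall_time(balanced), synchronous_wall_time(straggler))
```

In this toy pipeline, one error in `clean` reaches four downstream stages. A single worker running 25% slower stretches the whole 4-worker job from 100 s to 125 s, because the other three workers sit idle at every barrier.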

Final Impact (Right)

- Very High Energy Cost: Corrupted results must be recomputed, and hardware keeps drawing power while it waits on delayed tasks, so energy consumption ends up far higher than anticipated
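A back-of-the-envelope energy model makes this link concrete. Energy is power multiplied by time, so a job pays for every second its hardware stays powered. That includes time spent idling behind a slow worker and time spent recomputing corrupted output. The sketch below makes several hypothetical assumptions:

- each worker has fixed busy and idle power draws;
- workers synchronize at a barrier after every step;
- a repair is modeled as a whole-job rerun.

The wattages, the `runs` parameter, and the function name are also hypothetical.

```python
def job_energy_joules(
    step_times: list[list[float]],
    busy_watts: float,
    idle_watts: float,
    runs: int = 1,
) -> float:
    """Estimate total energy (joules) for a synchronous parallel job.

    Hypothetical model: each step ends when its slowest worker finishes, and
    faster workers draw `idle_watts` while waiting. `runs` counts full
    executions, e.g. 2 if corrupted output forces one complete rerun.
    """
    total = 0.0
    for workers in step_times:
        step_end = max(workers)
        for t in workers:
            # Busy for its own step time, idle until the slowest worker finishes.
            total += busy_watts * t + idle_watts * (step_end - t)
    return total * runs


if __name__ == "__main__":
    balanced = [[1.0, 1.0, 1.0, 1.0] for _ in range(100)]
    straggler = [[1.0, 1.0, 1.0, 1.25] for _ in range(100)]
    print(job_energy_joules(balanced, busy_watts=700, idle_watts=100))
    print(job_energy_joules(straggler, busy_watts=700, idle_watts=100))
    print(job_energy_joules(straggler, busy_watts=700, idle_watts=100, runs=2))
```

Take 4 workers running 100 one-second steps at a hypothetical 700 W busy and 100 W idle. The balanced job uses 280,000 J. One worker running 25% slower raises that to 305,000 J. A single full rerun to repair corrupted output doubles the slowed job to 610,000 J.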

Key Message

The four inherent characteristics of AI (big volume, long duration, parallel processing, and interdependencies) create a perfect storm in which small errors can amplify exponentially, ultimately leading to enormously high energy costs. The diagram is a warning: in AI systems, small errors must be caught and prevented early, before they cascade into major problems.

With Claude