From Claude with some prompting

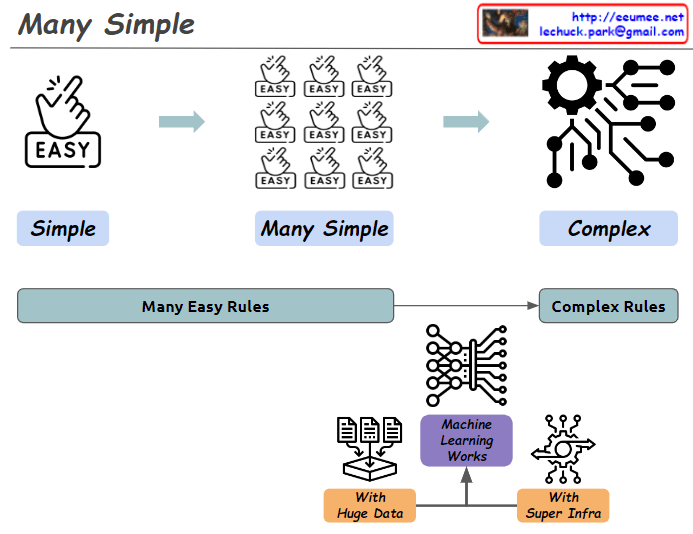

This image illustrates the concept of “Many Simple” and demonstrates how simple elements combine to create complexity.

- Top diagram:

  - “Simple”: Starts with a single “EASY” icon.

  - “Many Simple”: Shows multiple “EASY” icons grouped together.

  - “Complex”: Depicts a system of intricate gears and connections.

- Bottom diagram:

  - Shows the progression from “Many Easy Rules” to “Complex Rules”.

  - Centers around the concept of “Machine Learning Works”.

  - This is supported by “With Huge Data” and “With Super Infra”.

The image provides a simplified explanation of how machine learning operates. It visualizes many simple rules, applied across massive amounts of data with powerful infrastructure, combining into complex rules.
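The “Many Easy Rules” to “Complex Rules” idea can be sketched in a few lines of Python. This is only an illustration under assumptions not taken from the image: the choice of technique (a boosting-style sum of one-threshold rules, each fitted to what the previous rules got wrong), the toy target curve, and the helper names `fit_stump`, `fit_many_simple`, `predict`, and `mse` are all hypothetical. The data set is also tiny, so the “Huge Data” and “Super Infra” parts are not represented.

```python
import math

def fit_stump(xs, residuals):
    """Find one easy rule: 'if x <= t predict a, else predict b'."""
    best = None
    for t in xs[:-1]:  # xs is sorted, so both sides are never empty
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        a = sum(left) / len(left)
        b = sum(right) / len(right)
        err = sum((r - a) ** 2 for r in left) + sum((r - b) ** 2 for r in right)
        if best is None or err < best[0]:
            best = (err, t, a, b)
    return best[1:]

def fit_many_simple(xs, ys, n_rules):
    """Stack easy rules: each new rule is fitted to the remaining error."""
    rules = []
    residuals = list(ys)
    for _ in range(n_rules):
        t, a, b = fit_stump(xs, residuals)
        rules.append((t, a, b))
        residuals = [r - (a if x <= t else b) for x, r in zip(xs, residuals)]
    return rules

def predict(rules, x):
    """The 'complex rule' is just the sum of all the easy rules."""
    return sum(a if x <= t else b for t, a, b in rules)

def mse(rules, xs, ys):
    """Mean squared error of the combined rules on the data."""
    return sum((predict(rules, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Toy data (an assumption for illustration): a wavy curve sampled at 200 points.
xs = [i * 2 * math.pi / 199 for i in range(200)]
ys = [math.sin(x) + 0.5 * math.sin(3 * x) for x in xs]

one_rule = fit_many_simple(xs, ys, 1)
many_rules = fit_many_simple(xs, ys, 50)

print("error with 1 easy rule:  ", mse(one_rule, xs, ys))
print("error with 50 easy rules:", mse(many_rules, xs, ys))
```

Each individual rule is a single threshold comparison, yet the sum of fifty of them follows the curve more closely than any one rule can. Real systems differ mainly in scale: far more rules, far more data, and the infrastructure needed to fit them.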