From Claude with some prompting

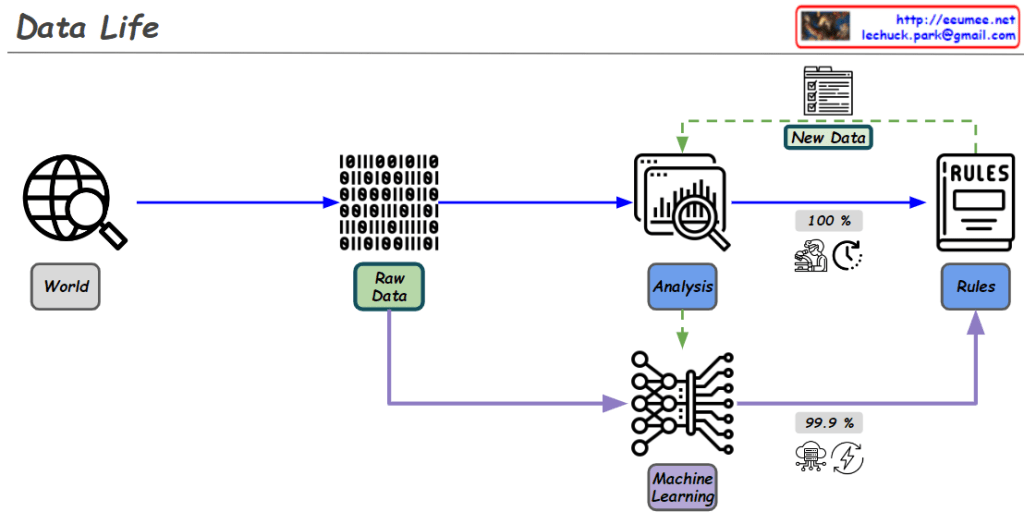

This image depicts the progressive development of human capabilities and knowledge, showing how humans have tried to understand and explain the world through numbers, mathematics, and computing technology.

- Human Groups: The image represents humans coming together in groups to explore and comprehend the world around them.

- Using Math: Humans have leveraged numbers and mathematical calculations in an effort to make sense of the world.

- Computing: Building on this mathematical foundation, humans developed computing technology, which has enhanced their analysis and understanding.

- High-Speed Infrastructure: The development of high-speed technological infrastructure has allowed human activities to evolve further.

- AI and Deep Learning: This series of technological advancements has led humans to a point where they may feel they have nearly reached the true essence of reality. However, the image suggests that the emergence of AI and deep learning technologies is now challenging this human-centric perspective, hinting that there may still be an infinite gap to traverse before fully grasping the fundamental nature of the world.

In essence, the image shows the stepwise progression of human knowledge and capabilities, anchored in numbers, math, and computing. It also highlights how the rise of advanced AI and deep learning, which may transcend the limitations of human understanding, is now disrupting these efforts.