
The Computing for the Fair Human Life.

From Claude with some prompting

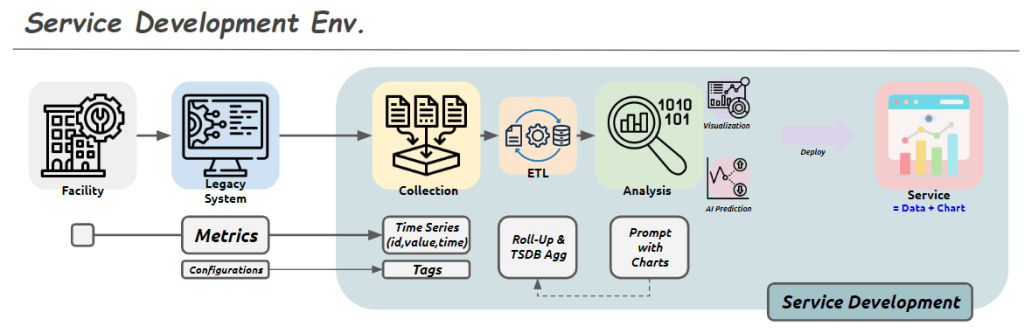

This image shows a diagram titled “Service Development Env.” (Service Development Environment). It illustrates the stages of a service development process:

The lower part of the diagram shows additional process steps:

Overall, this diagram illustrates the entire service development process from data collection to analysis, visualization, and final service deployment. Each stage represents the steps of processing, storing, analyzing data, and ultimately delivering it to end-users.

The flow suggests a progression from legacy systems and facilities, through data collection and processing, to advanced analysis and deployment of data-driven services.
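As a rough illustration of that flow, the stages can be chained as plain functions. This is only a minimal sketch: the function names, the in-memory list used as storage, and the sample sensor readings are all hypothetical and do not come from the diagram.

```python
from statistics import mean

def collect():
    """Stand-in for data collection from legacy systems and facilities (values are made up)."""
    return [
        {"sensor": "temp-1", "value": "21.5"},
        {"sensor": "temp-1", "value": "22.5"},
        {"sensor": "temp-2", "value": "bad"},
    ]

def process(raw):
    """Convert values to floats and drop records that cannot be parsed."""
    cleaned = []
    for rec in raw:
        try:
            cleaned.append({"sensor": rec["sensor"], "value": float(rec["value"])})
        except ValueError:
            continue
    return cleaned

def store(records, storage):
    """Append processed records to an in-memory list standing in for a data store."""
    storage.extend(records)
    return storage

def analyze(storage):
    """Compute the mean value per sensor."""
    by_sensor = {}
    for rec in storage:
        by_sensor.setdefault(rec["sensor"], []).append(rec["value"])
    return {sensor: mean(values) for sensor, values in by_sensor.items()}

def visualize(summary):
    """Render the analysis as plain-text lines (a placeholder for a dashboard)."""
    return [f"{sensor}: {avg:.1f}" for sensor, avg in sorted(summary.items())]

def deploy(lines):
    """Stand-in for service deployment: build the payload delivered to end users."""
    return "\n".join(lines)

storage = []
report = deploy(visualize(analyze(store(process(collect()), storage))))
print(report)
```

Each stage only depends on the output of the previous one, which is what lets a real system swap the in-memory list for a database or the text report for a dashboard without touching the other stages.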

From Claude with some prompting

This diagram illustrates the importance of standardization and platform development, highlighting two key objectives:

This structure offers several advantages:

In conclusion, this diagram visually represents two core strategies: establishing data reliability through standardization and enabling efficient service improvement and expansion through a dedicated platform. It emphasizes how standardization allows teams to trust and focus on using the data, while the platform makes it easier to improve existing services and develop new ones.

From Claude with some prompting

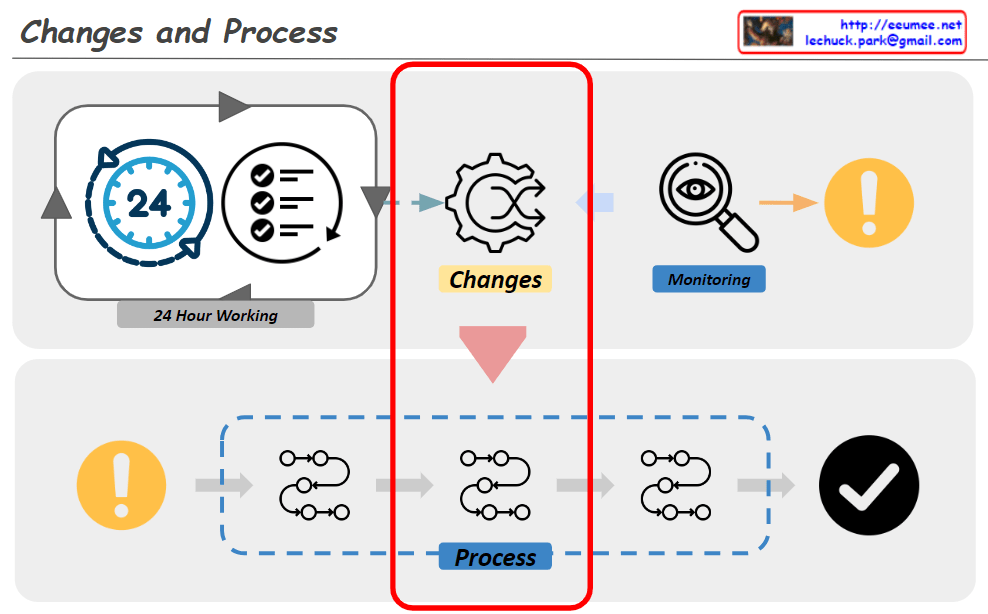

The diagram titled “Changes and Process” illustrates an organization’s system for detecting and responding to changes. The key components and flow are as follows:

This system operates cyclically: it monitors continuously to detect changes and activates an immediate response process when one is found. This approach helps the organization stay efficient and stable, and it shows how the organization can respond quickly and systematically to a changing environment.

The diagram emphasizes the interconnectedness of continuous operation, change management, monitoring, and the execution of structured processes, all working together to ensure effective adaptation to changes.
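The monitor, detect, respond cycle described above can be sketched in a few lines. This is an illustrative sketch only: the threshold rule, the function names, and the sample readings are assumptions made for the example, not details taken from the diagram.

```python
def detect_change(previous, current, threshold):
    """Report a change when the reading moves by more than the threshold (assumed rule)."""
    return abs(current - previous) > threshold

def respond(reading, log):
    """Stand-in for the structured response process: record that the change was handled."""
    log.append(f"responded to change at reading {reading}")

def monitor(readings, threshold):
    """One pass over a sequence of readings; a real system would run this continuously."""
    log = []
    baseline = readings[0]
    for reading in readings[1:]:
        if detect_change(baseline, reading, threshold):
            respond(reading, log)
            baseline = reading  # the new state becomes the normal operating baseline
    return log

# Hypothetical readings: two of them move more than 10 units from the baseline.
events = monitor([100, 101, 99, 120, 121, 90], threshold=10)
for event in events:
    print(event)
```

Resetting the baseline after each response is one possible design choice: it models the organization returning to steady operation in the new state rather than reacting to the same change repeatedly.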

From Claude with some prompting

The image “Computing Power 4-Optimizations” highlights four key areas for optimizing computing power, emphasizing a comprehensive approach that goes beyond infrastructure to include both hardware and software perspectives:

The diagram shows that optimizing computing power is not limited to hardware or infrastructure improvements. It stresses the need for a holistic approach, from chip design to code writing. Considering hardware (chip) and software (code) optimizations together is what allows overall system efficiency to be maximized, and this combined view is essential for addressing the power management challenges of modern computing systems.

From Claude with some prompting

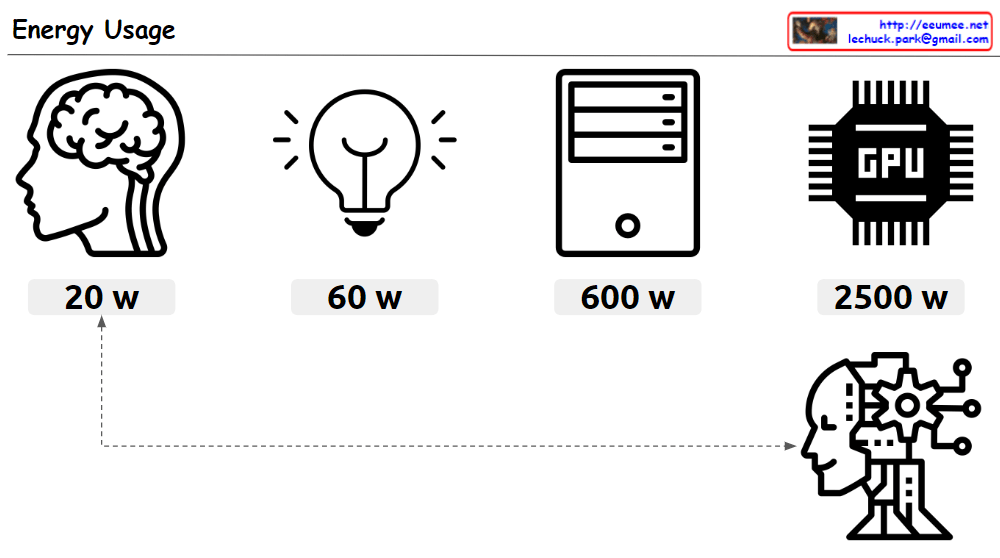


This image compares the energy usage of various devices and systems. Under the title “Energy Usage”, the following items are listed:

At the bottom of the image, there’s an icon suggestive of artificial intelligence or a robot. This icon is connected by a dotted line to the human brain icon, implying a comparison of energy usage between the human brain and AI systems.

The image emphasizes the energy efficiency of the human brain. While the brain operates on just 20 W, a high-performance computing device like a GPU draws 2500 W, which is 125 times as much. This suggests that artificial intelligence systems draw significantly more power than the human brain.
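The comparison can be worked through with simple arithmetic. The two wattage figures are the ones quoted from the image; the 24-hour, constant-draw assumption used to convert power into daily energy is added here purely for illustration.

```python
BRAIN_WATTS = 20    # figure quoted from the image
GPU_WATTS = 2500    # figure quoted from the image

def power_ratio(a_watts, b_watts):
    """How many times more power a draws than b."""
    return a_watts / b_watts

def daily_kwh(watts, hours=24):
    """Energy in kWh, assuming a constant draw for the given number of hours."""
    return watts * hours / 1000

ratio = power_ratio(GPU_WATTS, BRAIN_WATTS)
brain_kwh = daily_kwh(BRAIN_WATTS)
gpu_kwh = daily_kwh(GPU_WATTS)

print(f"GPU draws {ratio:.0f}x the power of the brain")
print(f"Brain: {brain_kwh:.2f} kWh/day, GPU: {gpu_kwh:.2f} kWh/day")
```

Under that assumption the brain uses 0.48 kWh per day and the GPU uses 60 kWh per day, so the 125x gap in power carries over directly to energy.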

In the top right corner of the image, an email address (lechuck.park@gmail.com) is displayed.

Overall, this image provides a striking visual comparison of energy consumption across different systems, highlighting the remarkable efficiency of the human brain in contrast to artificial computing systems.