Attitude towards AI

The Computing for the Fair Human Life.

This image is a diagram showing the key components of a Data Center (DC).

The diagram visually represents the core elements that make up a data center:

This diagram illustrates how a data center builds up from a physical building, through core elements such as network, computing, power, and cooling, to ultimately provide digital services.

With Claude

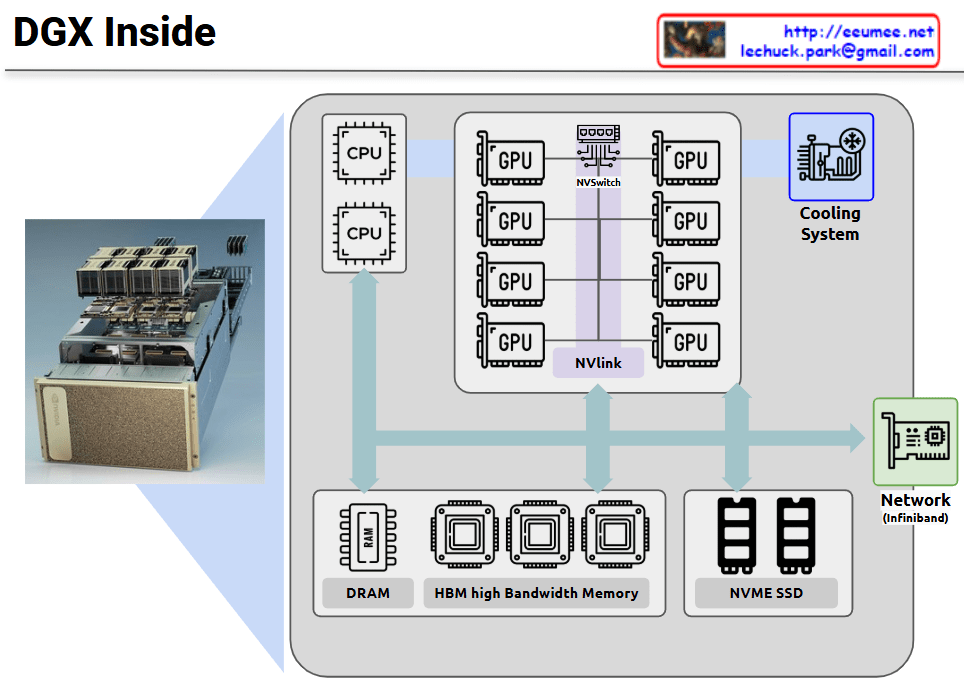

NVIDIA DGX is a specialized server system optimized for GPU-centric high-performance computing. This diagram illustrates the internal architecture of DGX, which maintains a server-like structure but is specifically designed for massive parallel processing.

The core of the DGX system consists of multiple high-performance GPUs interconnected not through conventional PCIe, but via NVIDIA’s proprietary NVLink and NVSwitch technologies. This configuration dramatically increases GPU-to-GPU communication bandwidth, maximizing parallel processing efficiency.

Key features:

This configuration is optimized for workloads requiring parallel processing such as deep learning, AI model training, and large-scale data analysis, enabling much more efficient GPU utilization compared to conventional servers.
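To make the bandwidth argument concrete, here is a minimal sketch that estimates how long one gradient synchronization takes at two different link speeds. It uses the standard bandwidth-only cost of a ring all-reduce, in which each GPU moves 2(N-1)/N of the message. The function name and both bandwidth figures are illustrative assumptions, not NVIDIA specifications, and the model ignores latency and compute overlap.

```python
def ring_allreduce_seconds(message_bytes: float, num_gpus: int,
                           link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce (latency ignored)."""
    if num_gpus < 2:
        return 0.0  # a single GPU has nothing to synchronize
    # In a ring all-reduce each GPU sends (and receives) 2 * (N - 1) / N
    # of the message size in total.
    traffic = 2 * (num_gpus - 1) / num_gpus * message_bytes
    return traffic / link_bytes_per_s


# Hypothetical placeholder bandwidths, NOT vendor specifications.
SLOW_LINK = 32e9    # bytes/s, stand-in for a conventional interconnect
FAST_LINK = 400e9   # bytes/s, stand-in for a switched GPU fabric

gradient_bytes = 2e9  # e.g. 1e9 parameters stored in 16-bit precision
slow = ring_allreduce_seconds(gradient_bytes, 8, SLOW_LINK)
fast = ring_allreduce_seconds(gradient_bytes, 8, FAST_LINK)
print(f"slow link: {slow * 1e3:.1f} ms, fast link: {fast * 1e3:.1f} ms")
```

Under this model the synchronization time scales inversely with link bandwidth, which is why the GPU-to-GPU interconnect matters so much for training workloads that synchronize on every step.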

With Claude

This image illustrates a comparison between two main data center architecture approaches: “Rack Cluster DC” and “Modular DC.”

The left side depicts the basic infrastructure elements: power supply components (transformers, generators), cooling systems, and network equipment. The right side presents two different data center configuration methods.

Both approaches display expansion units labeled “NEW” at the bottom, demonstrating the scalability of each approach.

This diagram visually compares the structural differences, scalability, and component arrangements between the traditional rack cluster approach and the modular approach to data center design.

With Claude

This diagram compares two GPU interconnect technologies, NVLink and InfiniBand, both of which are used to scale parallel computing.

On the left side, the “NVLink” section shows multiple GPUs connected vertically through purple interconnect bars. This represents the “Scale UP” approach, where GPUs are vertically scaled within a single system for tight integration.

On the right side, the “InfiniBand” section demonstrates how multiple server nodes connect through an InfiniBand network. This illustrates the “Scale Out” approach, where computing power expands horizontally across multiple independent systems.

Both technologies share the goal of expanding parallel processing capability, but they take different architectural approaches. NVLink provides high-speed, direct connections between GPUs within a single system, while InfiniBand connects multiple systems over a network to support distributed computing environments.

The optimization of these expansion configurations is crucial for maximizing performance in high-performance computing, AI training, and other compute-intensive applications. System architects must carefully consider workload characteristics, data movement patterns, and scaling requirements when choosing between these technologies or determining how to best implement them together in hybrid configurations.
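As a rough illustration of that decision, the sketch below checks whether a job fits inside a single node (scale up only) or has to span several nodes (scale out). The `Placement` type, the `plan_placement` function, and the choice of 8 GPUs per node are all hypothetical; a real planner would also weigh the workload characteristics and data movement patterns mentioned above.

```python
import math
from dataclasses import dataclass


@dataclass
class Placement:
    nodes: int              # number of server nodes the job occupies
    gpus_per_node: int      # GPUs used on each fully occupied node
    needs_scale_out: bool   # True if traffic must cross the inter-node network


def plan_placement(gpus_needed: int, gpus_per_node: int) -> Placement:
    """Decide whether a job stays within one node or spans several."""
    if gpus_needed < 1 or gpus_per_node < 1:
        raise ValueError("GPU counts must be positive")
    nodes = math.ceil(gpus_needed / gpus_per_node)
    return Placement(
        nodes=nodes,
        gpus_per_node=min(gpus_needed, gpus_per_node),
        needs_scale_out=nodes > 1,
    )


# Assumed node size of 8 GPUs, chosen only for illustration.
for job_size in (4, 8, 32):
    print(job_size, plan_placement(job_size, gpus_per_node=8))
```

A job that reports `needs_scale_out=False` can keep all GPU-to-GPU traffic on the intra-node links, while a larger job becomes a hybrid configuration that uses both the intra-node links and the inter-node network.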

With Claude

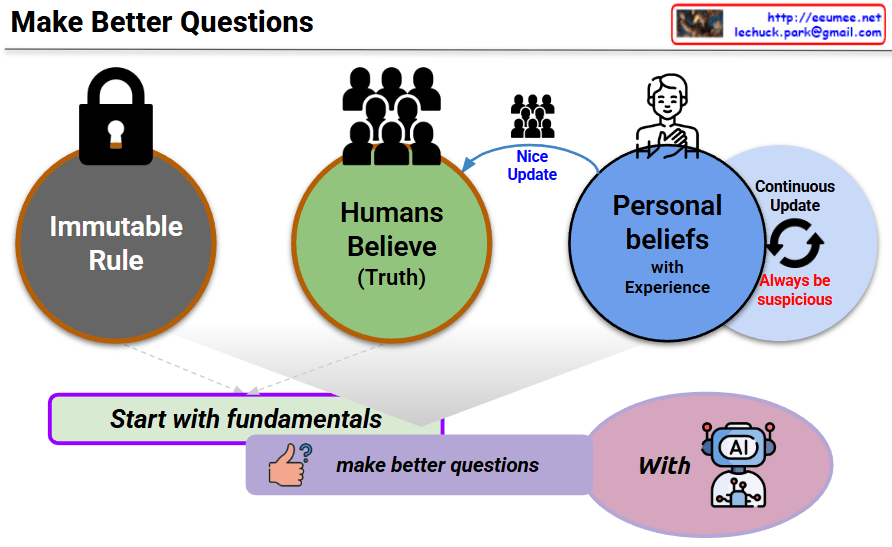

This diagram titled “Make Better Questions” illustrates a methodology for effective questioning. The key concepts are:

This diagram ultimately suggests a method for optimizing interactions with AI through constant skepticism and adherence to fundamentals while maintaining flexible thinking. It emphasizes the importance of not settling for fixed beliefs but continuously learning and evolving.

With Claude