The digital exists to reveal difference—through patterns of 0s and 1s.

The Computing for the Fair Human Life.

Most AI workloads are dominated by matrix processing, because matrix multiplication is the core operation in deep learning. Tensor cores, hardware units built to accelerate matrix multiply-accumulate operations, are therefore a key component of AI performance gains.
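To make the role of matrix multiplication concrete, here is a minimal pure-Python sketch of a single fully connected layer written as one matrix multiply plus a bias. The function names and the toy values are assumptions chosen for illustration; real frameworks run the same arithmetic on accelerated hardware rather than in Python loops.

```python
def matmul(a, b):
    """Multiply an (m x k) matrix by a (k x n) matrix, both given as lists of rows."""
    k = len(b)
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions must match")
    n = len(b[0])
    return [
        [sum(row[p] * b[p][j] for p in range(k)) for j in range(n)]
        for row in a
    ]


def dense_layer(x, w, bias):
    """One fully connected layer without activation: y = x @ w + bias."""
    y = matmul(x, w)
    return [[value + b_j for value, b_j in zip(row, bias)] for row in y]


# Hypothetical toy values: a batch of 2 inputs with 3 features, mapped to 2 outputs.
x = [[1.0, 2.0, 3.0],
     [4.0, 5.0, 6.0]]
w = [[1.0, 0.0],
     [0.0, 1.0],
     [1.0, 1.0]]
bias = [0.5, -0.5]

y = dense_layer(x, w, bias)
print(y)  # [[4.5, 4.5], [10.5, 10.5]]
```

Every output element is a sum of multiply-add pairs, which is the pattern that matrix-oriented hardware is designed to execute in bulk.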

With Claude

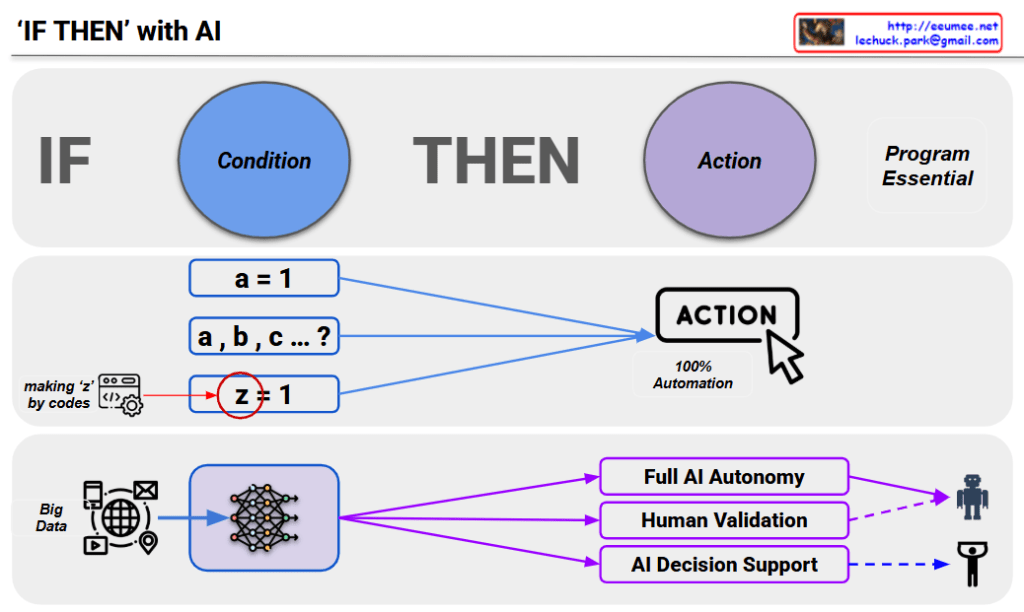

This image is a diagram titled “IF-THEN with AI” that explains conditional logic and automation levels in AI systems.

AI is ultimately a program. Like humans, who have always wanted to predict by sensing data, making judgments, and acting on them, it follows the same pattern. IF-THEN is essentially prediction: the foundation of programming, which involves recognizing a situation, making a judgment, and taking an action.
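The sense, judge, act pattern can be sketched as a tiny IF-THEN rule. This is only an illustration: the temperature scenario, the threshold, and the names `judge` and `control_step` are hypothetical and do not come from the diagram.

```python
def judge(temperature_c, threshold_c=30.0):
    """IF the sensed value exceeds the threshold THEN choose the matching action."""
    if temperature_c > threshold_c:
        return "cooling_on"
    return "cooling_off"


def control_step(read_sensor, act):
    """Run one sense -> judge -> act cycle and return the decision."""
    reading = read_sensor()    # sense: recognize the situation
    decision = judge(reading)  # judge: apply the IF-THEN rule
    act(decision)              # act: carry out the decision
    return decision


# Usage with a stand-in sensor and a list that records the actions taken.
actions = []
control_step(lambda: 35.0, actions.append)
control_step(lambda: 22.0, actions.append)
print(actions)  # ['cooling_on', 'cooling_off']
```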

Evolution stages of data/formulas:

Today we feed massive amounts of data into AI for analysis, although the results are probabilistic rather than deterministic.

Starting from Big Data through AI networks, three development directions:

Key Perspective: Whichever of these three development directions is taken, each decision still needs to be judged against the quality of the data used for the analysis. The diagram shows that automation level is not the only consideration; assessing reliability based on data quality is just as crucial.

This diagram illustrates the evolution from simple conditional programming to complex AI systems, emphasizing that AI fundamentally operates on IF-THEN logic for prediction and decision-making. The key insight is that regardless of automation level, the quality of input data remains critical for reliable AI decision-making processes.
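One way to picture data-quality-based reliability assessment is a gate placed in front of an automated decision. The sketch below is an assumption-heavy illustration: the quality score, the confidence value, both thresholds, and the two outcome labels are hypothetical and are not taken from the diagram.

```python
def decide(prediction_confidence, data_quality,
           min_quality=0.8, min_confidence=0.9):
    """Gate an automated action on data quality first, then on model confidence.

    Both inputs are assumed to be scores in the range 0.0 to 1.0.
    """
    if data_quality < min_quality:
        # Poor input data: the prediction is not trusted, however confident it looks.
        return "review_manually"
    if prediction_confidence >= min_confidence:
        return "act_automatically"
    return "review_manually"


print(decide(prediction_confidence=0.97, data_quality=0.95))  # act_automatically
print(decide(prediction_confidence=0.97, data_quality=0.40))  # review_manually
```

The second call shows the point of the gate: a confident prediction built on low-quality data is still sent for review.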

With Claude

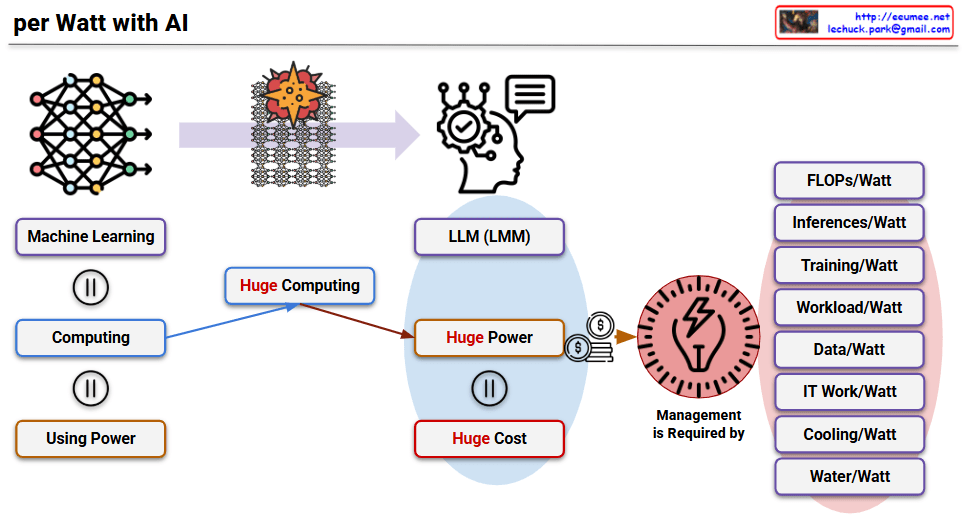

This image, titled “per Watt with AI”, is a diagram explaining the paradigm shift toward power efficiency brought by the AI era, particularly after the emergence of LLMs.

Core Structure of AI Development:

Characteristics of LLMs: As AI, particularly LLMs, have proven their effectiveness, tremendous progress has been made. However, due to their technical characteristics, they have the following structure:

With hardware advancements making this approach practically effective, power consumption has become a critical issue affecting even the global ecosystem. Therefore, power is now used as a performance indicator for all operations.

Performance-related:

Operations-related:

Infrastructure-related:

This diagram illustrates that in the AI era, power efficiency has become the core criterion for all performance evaluations, transcending simple technical metrics to encompass environmental, economic, and social perspectives.
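A "per watt" metric is simply a performance figure divided by the power drawn while delivering it. The following sketch compares two hypothetical accelerators; the throughput and power numbers are invented for illustration and do not describe any real product.

```python
def performance_per_watt(throughput, power_watts):
    """Return throughput per watt, e.g. (tokens/s) / W, which equals tokens per joule."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return throughput / power_watts


# Hypothetical figures: tokens per second and average power draw in watts.
system_a = performance_per_watt(throughput=1000.0, power_watts=400.0)
system_b = performance_per_watt(throughput=1200.0, power_watts=600.0)

print(system_a)  # 2.5 tokens per joule
print(system_b)  # 2.0 tokens per joule
```

In this made-up comparison, system B is faster in absolute terms, yet system A does more work per unit of energy, which is the distinction a per-watt view is meant to expose.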

With Claude

This image illustrates the three core processes of AI LLMs by drawing parallels to human learning and cognitive processes.

Learning

Reasoning

Inference

These three stages visually demonstrate how AI processes information in a manner similar to the natural human sequence of learning → thinking → conclusion, connecting AI’s technical processes to familiar human cognitive patterns.

With Claude

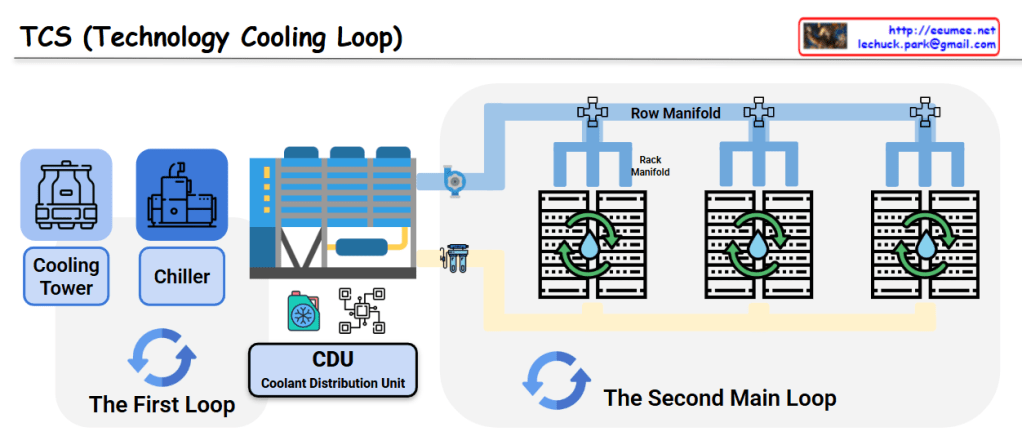

This image shows a diagram of the TCS (Technology Cooling System) loop structure.

The First Loop:

The Second Main Loop:

This is an efficient cooling system used in data centers and large-scale IT facilities. It systematically removes heat generated by server equipment to ensure stable operations through a two-loop architecture that separates the heat rejection process from the precision cooling delivery to IT equipment.
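The amount of coolant a loop must circulate follows from the standard heat balance Q = ṁ · c_p · ΔT. The sketch below solves it for the mass flow rate. It assumes plain water as the coolant with an approximate specific heat of 4186 J/(kg·K), and the 100 kW load and 10 K temperature rise are hypothetical values, not figures from the diagram.

```python
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water


def required_mass_flow_kg_s(heat_load_w, delta_t_k,
                            specific_heat=WATER_SPECIFIC_HEAT_J_PER_KG_K):
    """Solve Q = m_dot * c_p * delta_T for the coolant mass flow rate m_dot."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (specific_heat * delta_t_k)


# Hypothetical example: 100 kW of IT heat with a 10 K supply-to-return rise.
flow = required_mass_flow_kg_s(heat_load_w=100_000.0, delta_t_k=10.0)
print(round(flow, 2))  # 2.39 (kg/s)
```

A loop using a water-glycol mixture would need a different specific heat, so the constant is passed as a parameter rather than fixed.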

With Claude

Eeumee.net: Your Daily Dose of Tech Clarity!

Tired of complex tech concepts feeling like a tangled mess? At Eeumee.net, we simplify the intricate world of technology into easy-to-digest, one-page diagrams, delivered daily!

What makes eeumee.net unique?

Why visit eeumee.net?

Whether you’re a seasoned professional looking to quickly refresh a concept, a student trying to grasp new ideas, or simply someone who appreciates clear and concise explanations, Eeumee.net is your go-to resource. Stop struggling with vague definitions and start building a solid foundation of technical understanding, one diagram at a time.

Visit us today and bring clarity to your technical world!

Imaged by ChatGPT, Promotion text by Gemini