A Cleaning Day

The Computing for the Fair Human Life.

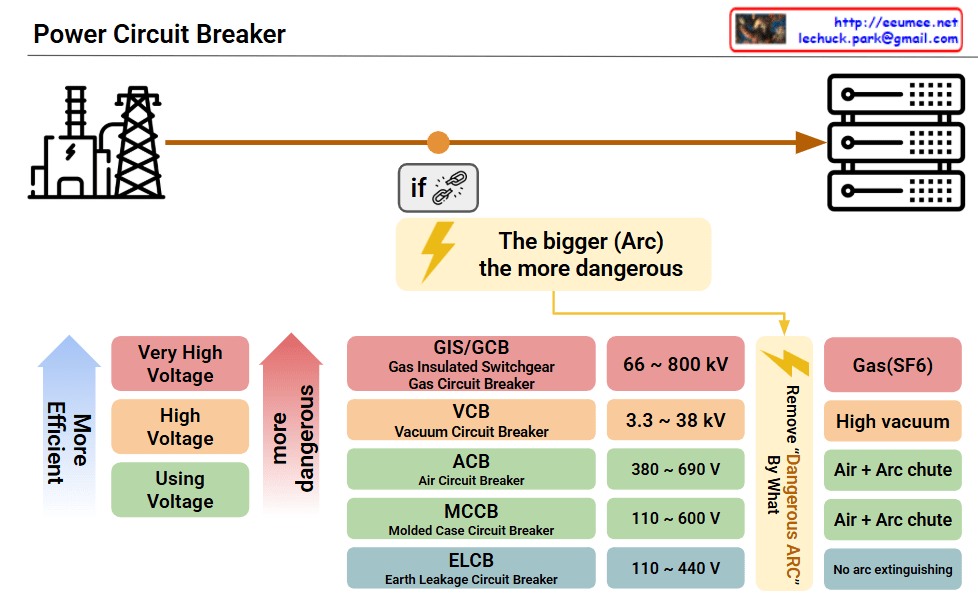

This image presents a Power Circuit Breaker classification diagram showing the types and characteristics of electrical circuit breakers used in power systems.

Power Flow: The diagram illustrates the electrical power path from power plant → transmission lines → circuit breakers → distribution panels.

The breakers are categorized by voltage levels and arc extinguishing methods:

The diagram emphasizes “The bigger (Arc) the more dangerous” – highlighting that higher voltages require more sophisticated and safer arc extinguishing technologies.

Summary: This technical diagram systematically categorizes power circuit breakers from ultra-high voltage (800 kV) to low voltage (110 V) applications, demonstrating how arc extinguishing complexity increases with voltage level. The chart serves as an educational reference showing that higher voltage systems require more advanced safety mechanisms like SF6 gas insulation, while lower voltage applications can use simpler air-based arc interruption methods.

With Claude

Analysis of Optimization Strategy Framework

Stable Environment → Minimal optimization sufficient

Volatile Environment → 60-80% optimization optimal

This framework demonstrates that “good enough” often outperforms “perfect” in real-world scenarios. The 60-80% optimization zone represents the intersection of achievability, efficiency, and business value, which is particularly important in today’s rapidly changing business landscape. True optimization isn’t about reaching 100%; it’s about finding the right balance between effort invested and value delivered, while maintaining the agility to adapt when requirements inevitably change.
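The balance between effort invested and value delivered can be sketched with a toy model. Everything in it is an assumption made for illustration and not part of the framework: value is taken to grow as the square root of the optimization level (diminishing returns), cost as a weighted square of it, and the names `net_value`, `best_effort`, and `cost_weight` are hypothetical.

```python
import math


def net_value(effort: float, cost_weight: float) -> float:
    """Toy net value at an optimization level `effort` in [0, 1].

    Assumed shapes (illustrative only): value grows as sqrt(effort),
    cost grows as cost_weight * effort ** 2.
    """
    return math.sqrt(effort) - cost_weight * effort ** 2


def best_effort(cost_weight: float, steps: int = 100) -> float:
    """Grid-search the effort level (in 1% steps) with the highest net value."""
    levels = [i / steps for i in range(steps + 1)]
    return max(levels, key=lambda e: net_value(e, cost_weight))


if __name__ == "__main__":
    # Hypothetical cost weights; a larger weight makes the last few
    # percent of optimization more expensive.
    for w in (0.3, 0.4, 0.5):
        print(f"cost_weight={w}: best effort ~ {best_effort(w):.0%}")
```

Under these assumed curves the peak sits below 100%, and it moves as the cost weight changes, so any specific range depends on the shapes chosen.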

(!) 60-80% is just a number. The best number changes with …

With Claude

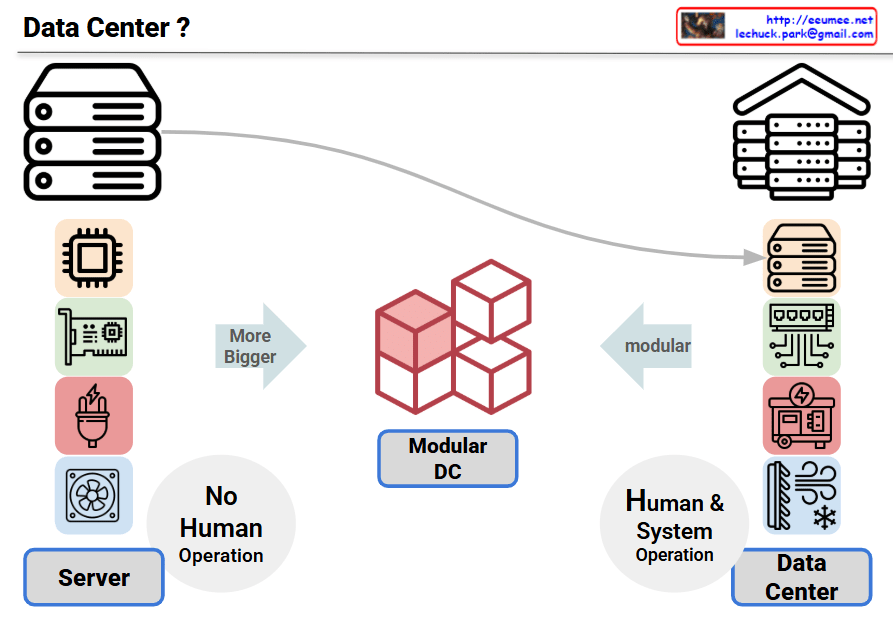

This infographic compares the evolution from servers to data centers, showing the progression of IT infrastructure complexity and operational requirements.

Left – Server

Center – Modular DC

Right – Data Center

Additional Perspective on Automation Evolution:

While the image shows data centers requiring human intervention, the actual industry trend points toward increasing automation:

Summary: This diagram illustrates the current transition from simple automated servers to complex data centers requiring human oversight, but the ultimate industry goal is achieving fully automated “lights-out” data center operations. The evolution shows increasing complexity followed by sophisticated automation that eventually reduces the need for human intervention.

With Claude

Hope to jump

This image visualizes humanity’s endless desire for ‘difference’ as the creative force behind ‘newness.’ The organic human brain fuses with the logical AI circuitry, and from their core, a burst of light emerges. This light symbolizes not just the expansion of knowledge, but the very moment of creation, transforming into unknown worlds and novel concepts.