From Copilot with some prompting

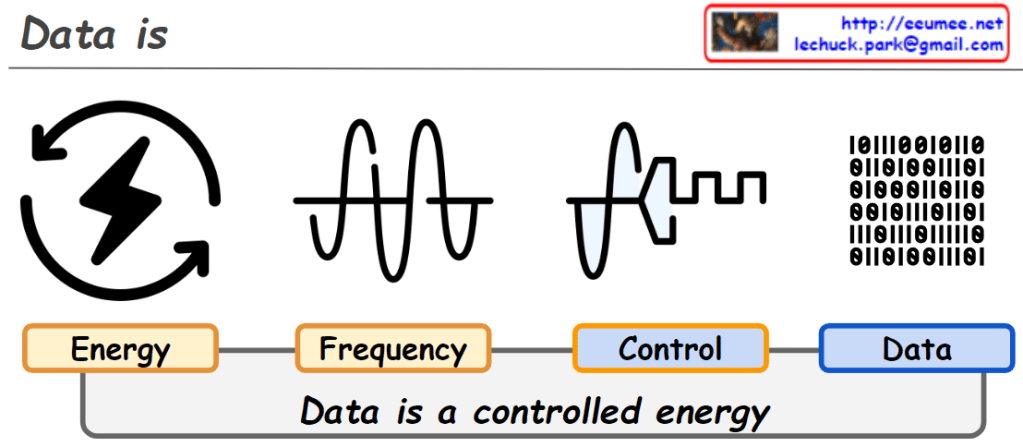

The image is a diagram titled “Facility Data Spec.” Here’s the description:

- Image Description:
  - The diagram depicts the process of defining data requirements centered around facility-based data management.
  - On the left side, there’s an icon representing a “Facility,” which is connected to elements such as data generation rate, hardware interface, and software protocol.
  - A relay network (symbolized by gears) is linked to the facility and leads to an IP port.
  - The IP port connects to a TCP/IP network represented by circuit lines, ultimately leading to a data point.
  - The data point includes elements like data explanations and data units.
  - At the top right corner of the image, there are URLs and an email address.
- Summary:
  - The diagram illustrates how data is generated within a facility, transmitted through various networks and protocols, and ultimately represented as data points.
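The elements named in the diagram can be written down as a small record type. The following is a minimal sketch, not something the diagram itself defines: every class name, field name, and sample value (`chiller-01`, `RS-485`, `Modbus RTU`, the address and port) is a hypothetical choice made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class DataPointSpec:
    """One data point: what it means and the unit it is reported in."""
    name: str
    explanation: str
    unit: str


@dataclass
class FacilityDataSpec:
    """Hypothetical record mirroring the elements named in the diagram."""
    facility: str
    generation_interval_s: float  # data generation rate, as seconds between samples
    hardware_interface: str
    software_protocol: str
    relay_host: str               # relay that exposes the facility on the TCP/IP network
    ip_port: int
    data_points: List[DataPointSpec] = field(default_factory=list)

    def endpoint(self) -> str:
        """Address at which the facility's data is reachable over TCP/IP."""
        return f"{self.relay_host}:{self.ip_port}"

    def samples_per_day(self) -> int:
        """Number of samples one data point produces in 24 hours."""
        return int(86_400 / self.generation_interval_s)


# All values below are made up for the example.
spec = FacilityDataSpec(
    facility="chiller-01",
    generation_interval_s=5.0,
    hardware_interface="RS-485",
    software_protocol="Modbus RTU",
    relay_host="10.0.0.20",
    ip_port=502,
    data_points=[
        DataPointSpec("supply_temp", "Chilled water supply temperature", "degC"),
    ],
)

print(spec.endpoint())
print(spec.samples_per_day())
```

Keeping the generation rate in the spec makes it possible to estimate data volume per facility before any network or storage is sized.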

The diagram is a useful starting point for specifying facility data requirements: for each facility, record how fast data is generated, which hardware interface and software protocol carry it, and what each data point means and in which unit it is reported.

From Gemini with some prompting

Facility-centric data definition for facility data management

Facility data management is the process of systematically collecting, storing, managing, and analyzing data generated from facilities. Facility data includes information on the facility’s condition, operation, and production. Facility data management can lead to the following benefits:

- Improved facility efficiency
- Prediction and prevention of facility failures
- Increased productivity
- Energy savings

Facility-centric data definition is a data definition approach that centers on facilities. It offers the following benefits:

- Data consistency
- Improved data accessibility
- Increased data usability

The diagram illustrates the following elements of facility-centric data definition:

- Facility: The facility is the entity that generates data. Different types of facilities exist, and each type generates different data.
- Data point: A data point is a unit of data generated by a facility. It includes the data name, data type, and data value.
- Network: The network connects facilities to data points. Data generated by a facility is transmitted to its data points over the network.
- Data repository: The data repository is where data is stored. Different types of data repositories exist, and each type has different characteristics.
- Data processing: Data processing is the analysis and use of data. It includes data cleaning, data analysis, and data visualization.
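The elements above can be sketched as a tiny pipeline: data points with a name, type, and value are collected in a repository, cleaned, and then analyzed. This is an illustrative sketch only; the `DataPoint` class, the `clean` and `average_by_name` helpers, and the `pump-01` readings are all hypothetical, and an in-memory list stands in for a real data repository.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class DataPoint:
    facility: str
    name: str               # data name
    dtype: str              # data type
    value: Optional[float]  # data value; None models a missing reading


def clean(points: List[DataPoint]) -> List[DataPoint]:
    """Data cleaning: drop readings whose value is missing."""
    return [p for p in points if p.value is not None]


def average_by_name(points: List[DataPoint]) -> Dict[str, float]:
    """Data analysis: mean value per data name."""
    grouped: Dict[str, List[float]] = {}
    for p in points:
        grouped.setdefault(p.name, []).append(p.value)
    return {name: mean(values) for name, values in grouped.items()}


# An in-memory list stands in for the data repository (made-up readings).
repository = [
    DataPoint("pump-01", "flow_rate", "float", 12.0),
    DataPoint("pump-01", "flow_rate", "float", None),
    DataPoint("pump-01", "flow_rate", "float", 14.0),
]

summary = average_by_name(clean(repository))
print(summary)
```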

Facility-centric data definition requirements for facility data management:

- Data consistency: Standardize data names, data types, and data values so that the same quantity is described the same way across facilities.
- Data accessibility: Select a data repository suited to the data, and manage data access permissions so that the people and systems that need the data can reach it.
- Data usability: Use data analysis tools and base decisions on the data, so that collected data is actually put to use.
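The consistency requirement can be made concrete with a check against a shared registry of standard data names and types. The sketch below is one possible approach under assumed conventions: the `STANDARD_POINTS` registry, the `validate` function, and the record layout (`name` and `value` keys) are hypothetical and not taken from the diagram.

```python
from typing import Any, Dict, List

# Hypothetical registry: standard data name -> required Python type of the value.
STANDARD_POINTS = {
    "supply_temp": float,
    "running": bool,
}


def validate(record: Dict[str, Any]) -> List[str]:
    """Return a list of consistency problems; an empty list means the record conforms."""
    name = record.get("name")
    if name not in STANDARD_POINTS:
        return [f"unknown data name: {name!r}"]

    expected = STANDARD_POINTS[name]
    value = record.get("value")
    # Compare exact types so that an int is not silently accepted as a bool or float.
    if type(value) is not expected:
        return [f"{name}: expected {expected.__name__}, got {type(value).__name__}"]
    return []


print(validate({"name": "supply_temp", "value": 6.5}))
print(validate({"name": "SupplyTemp", "value": 6.5}))
print(validate({"name": "running", "value": 1}))
```

Rejecting non-standard names such as `SupplyTemp` at ingestion time keeps every facility reporting under one vocabulary, which is what makes later cross-facility analysis possible.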

Conclusion:

Facility data management should start from facility-centric data definition requirements. Defining data around the facility improves data consistency, accessibility, and usability, which in turn supports better facility efficiency, higher productivity, and energy savings.