from Claude with some prompting

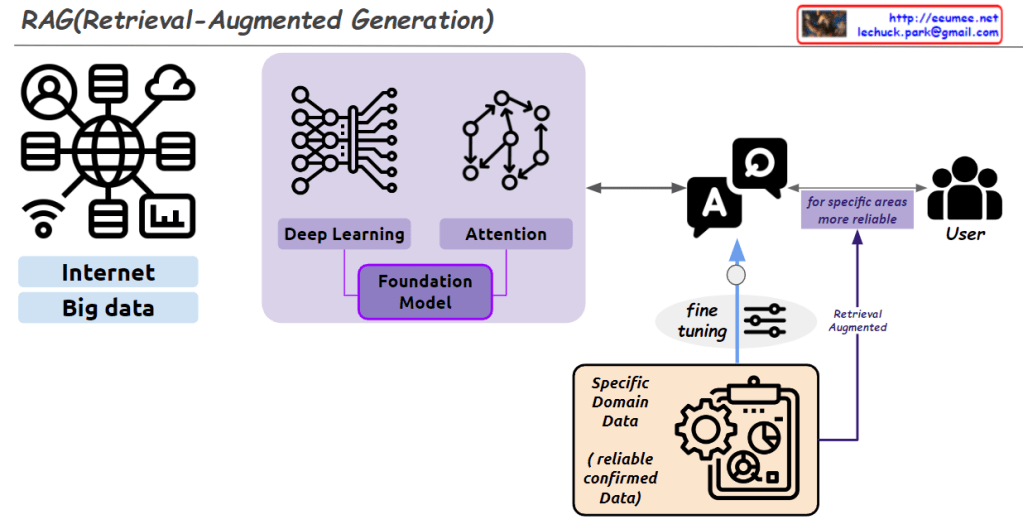

This diagram illustrates a personalized RAG (Retrieval-Augmented Generation) system that allows individuals to use their personal data with various LLM (Large Language Model) implementations. Key aspects include:

- User input: Represented by a person icon and notebook on the left, indicating personal data or queries.

- On-Premise storage: Contains LLM models that can be managed and run locally by the user.

- Cloud integration: An API connects to cloud-based LLM services, represented by icons in the “on cloud” section. These also symbolize different cloud-based LLM models.

- Flexible model utilization: The structure enables users to leverage both on-premise and cloud-based LLM models, allowing for combination of different models’ strengths or selection of the most suitable model for specific tasks.

- Privacy protection: A “Control a privacy Filter” icon emphasizes the importance of managing privacy filters to prevent inappropriate exposure of sensitive information to LLMs.

- Model selection: The “Use proper Foundation models” icon stresses the importance of choosing appropriate base models for different tasks.

This system empowers individual users to safely manage their data while flexibly utilizing various LLM models, both on-premise and cloud-based. It places a strong emphasis on privacy protection, which is crucial in RAG systems dealing with personal data.

The diagram effectively showcases how personal data can be integrated with advanced LLM technologies while maintaining control over privacy and model selection.