From Claude with some prompting

Here’s an interpretation of the image in English:

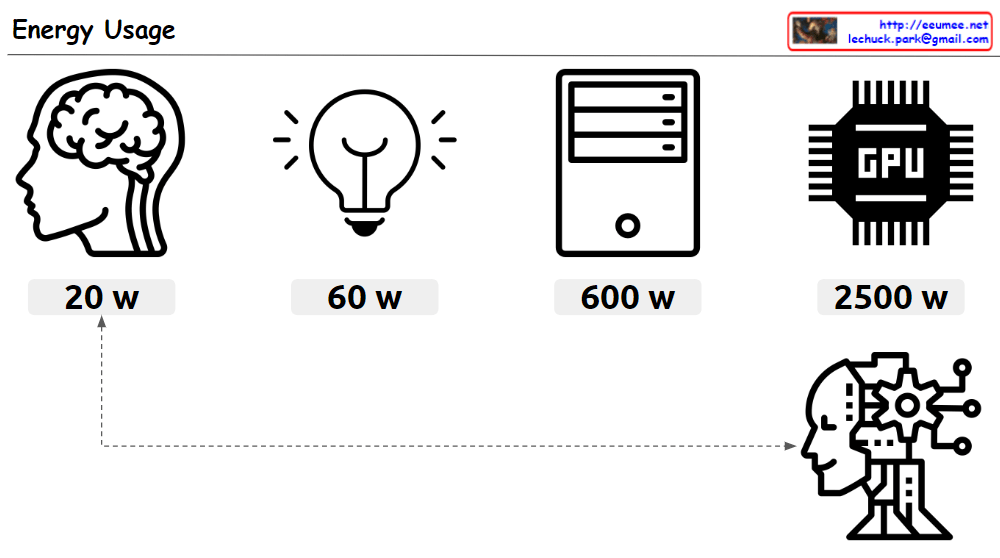

This image compares the energy usage of various devices and systems. Under the title “Energy Usage”, the following items are listed:

- An icon representing a human brain: 20 W (watts)

- A light bulb icon: 60 W

- An icon representing a computer tower: 600 W

- An icon representing a GPU (Graphics Processing Unit): 2500 W

At the bottom of the image, there’s an icon suggestive of artificial intelligence or a robot. This icon is connected by a dotted line to the human brain icon, implying a comparison of energy usage between the human brain and AI systems.

The image emphasizes the energy efficiency of the human brain. While the brain operates on just 20 W, a high-performance computing device like a GPU consumes 2500 W, which is 125 times as much. This suggests that artificial intelligence systems running on such hardware draw far more power than the human brain.
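The comparison can be checked with a few lines of arithmetic. The sketch below is only an illustration: the wattages are the ones listed in the image, while the function names and the assumption that every item runs continuously for 24 hours are hypothetical and not part of the image.

```python
# Power figures in watts, as listed in the image.
POWER_W = {
    "human brain": 20,
    "light bulb": 60,
    "computer tower": 600,
    "GPU": 2500,
}


def ratio_to_brain(power_w: dict[str, float]) -> dict[str, float]:
    """Return each item's power draw as a multiple of the brain's."""
    brain = power_w["human brain"]
    return {name: watts / brain for name, watts in power_w.items()}


def daily_energy_kwh(watts: float, hours: float = 24.0) -> float:
    """Convert a constant power draw into energy used over `hours`.

    Assumption (not from the image): the item runs at this power
    for the whole period.
    """
    return watts * hours / 1000.0


for name, ratio in ratio_to_brain(POWER_W).items():
    energy = daily_energy_kwh(POWER_W[name])
    print(f"{name}: {ratio:g}x the brain, {energy:.2f} kWh per day")
```

Under these assumptions, the GPU figure works out to 125 times the brain's power, or 60 kWh per day against 0.48 kWh for the brain.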

In the top right corner of the image, an email address (lechuck.park@gmail.com) is displayed.

Overall, this image provides a striking visual comparison of energy consumption across different systems, highlighting the remarkable efficiency of the human brain in contrast to artificial computing systems.