This image represents the process of AI learning from each other, where larger models teach smaller ones, specialized models refine their expertise, and multiple AI systems collaborate to optimize and evolve together.
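One way to picture "larger models teach smaller ones" is knowledge distillation, where a small student model is trained to match the softened output distribution of a large teacher. The sketch below is only an illustration under that assumption; the image does not name a specific technique, and the function names, logits, and temperature are all hypothetical.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature softens them."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Made-up logits for a three-class problem (illustrative values only).
teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]   # mostly agrees with the teacher
far_student = [0.5, 1.0, 4.0]     # disagrees with the teacher

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

The student that agrees with the teacher gets the smaller loss, which is the signal a training loop would minimize.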

The Computing for the Fair Human Life.

With Claude

“A Framework for Value Analysis: From Single Value to Comprehensive Insights”

This diagram illustrates a sophisticated analytical framework that shows how a single value transforms through various analytical processes:

Core Purpose: The framework aims to take a single value and:

This systematic approach demonstrates how a single data point can be transformed into comprehensive insights by considering both its temporal dynamics and relational context, ultimately leveraging advanced analytics for meaningful interpretation.

The framework’s strength lies in its ability to combine temporal patterns, relational insights, and advanced analytics into a cohesive analytical approach, providing a more complete understanding of how values evolve and relate within a complex system.
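As a rough illustration of looking at one value through both lenses, the sketch below describes the latest reading of a series relative to its own history (the temporal view) and then measures how the series moves with a second one (the relational view). The series names and numbers are invented for the example, and the diagram may well intend richer analytics than a difference and a Pearson correlation.

```python
from statistics import mean

def temporal_view(series):
    """Describe the latest value relative to its own history."""
    latest, previous = series[-1], series[-2]
    return {
        "latest": latest,
        "change": latest - previous,
        "deviation_from_mean": latest - mean(series),
    }

def pearson(xs, ys):
    """Pearson correlation between two equally long series."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

# Hypothetical readings; the names are assumptions for illustration.
cpu_load = [40, 42, 45, 50, 58]
temperature = [30, 31, 33, 35, 39]

print(temporal_view(cpu_load))         # the single value in its temporal context
print(pearson(cpu_load, temperature))  # the same series in its relational context
```

On its own, the latest reading of 58 says little. Paired with its change since the previous reading and its strong co-movement with the second series, it starts to look like an insight.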

With Claude

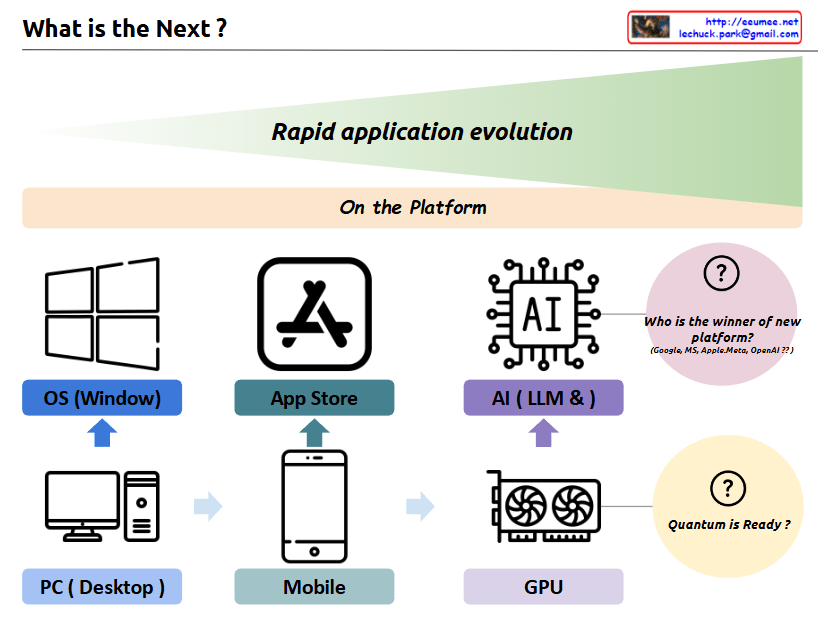

A comprehensive interpretation of the image and its concept of “Rapid application evolution”:

The diagram illustrates the parallel evolution of both hardware infrastructure and software platforms, which has driven rapid application development and user experiences:

The symbiotic relationship between these two axes:

Each transition has led to exponential growth in application capabilities and user experiences, with hardware and software platforms developing in parallel and reinforcing each other.

Future Outlook:

This cyclical pattern of hardware-software evolution suggests that we’ll continue to see new infrastructure innovations driving platform development, and vice versa. Each cycle has dramatically expanded the possibilities for applications and user experiences, and this trend is likely to continue with future technological breakthroughs.

The key insight is that major technological leaps happen when both hardware infrastructure and software platforms evolve together, creating new opportunities for application development and user experiences that weren’t previously possible.

With Claude

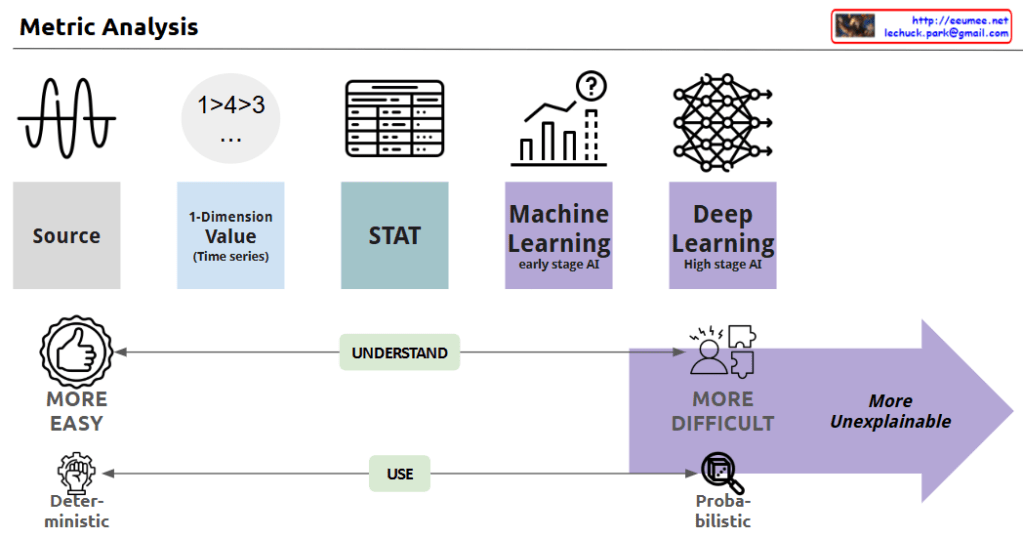

This image depicts the evolution of data analysis techniques, from simple time series analysis to increasingly sophisticated statistical methods, machine learning, and deep learning.

As the analysis approaches become more advanced, the process becomes less transparent and the results more difficult to explain. Simple techniques are more easily understood and allow for deterministic decision-making. But as the analysis moves towards statistics, machine learning, and AI, the computations become more opaque, leading to probabilistic rather than definitive conclusions. This trade-off between complexity and explainability is the key theme illustrated.
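A small sketch of that contrast, with everything in it assumed for illustration: a fixed threshold rule gives a yes/no answer that anyone can trace, while a logistic model (its weight and bias are made-up numbers standing in for learned parameters) returns a probability that still has to be interpreted.

```python
import math

def rule_based_alert(value, threshold=80.0):
    """Deterministic: the same input always yields the same yes/no answer."""
    return value > threshold

def probabilistic_alert(value, weight=0.2, bias=-16.0):
    """Probabilistic: a logistic model returns a likelihood, not a verdict.

    weight and bias are hypothetical stand-ins for trained parameters.
    """
    return 1.0 / (1.0 + math.exp(-(weight * value + bias)))

for reading in (70, 80, 90):
    print(reading, rule_based_alert(reading), round(probabilistic_alert(reading), 3))
```

The rule can be explained in one sentence. The model's output depends on parameters whose values come from training data, which is where explainability starts to erode as models grow.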

In summary, the progression shows how data analysis methods grow more powerful yet less interpretable, requiring a balance between the depth of insights and the ability to understand and reliably apply the results.

With Claude

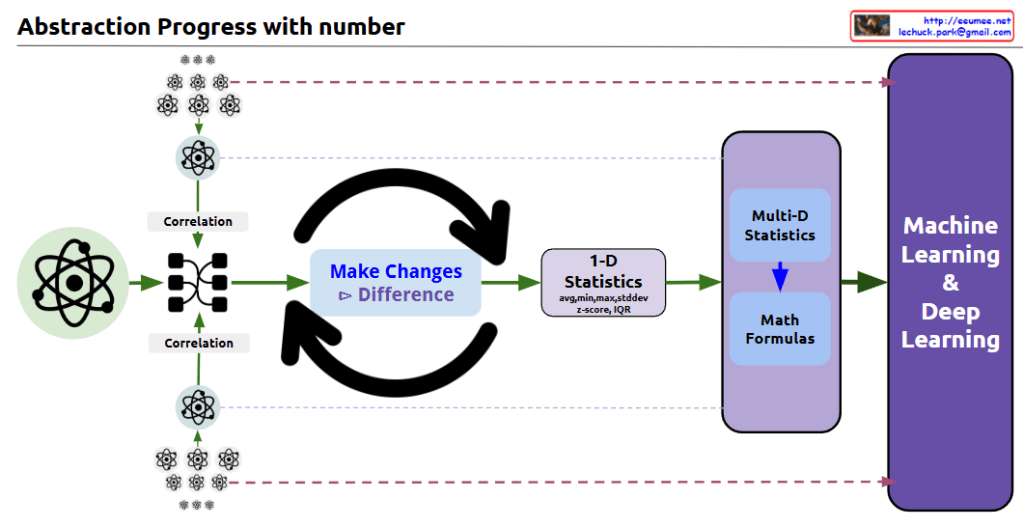

This diagram shows the progression of data abstraction leading to machine learning:

The diagram effectively illustrates the data science abstraction process, showing how it progresses from basic data points through increasingly complex analyses to ultimately reach machine learning and deep learning applications.

The small atomic symbols at the top and bottom of the diagram visually represent how multiple data points are processed and analyzed through this system. This shows the scalability of the process from individual data points to comprehensive machine learning systems.

The overall flow demonstrates how raw data is transformed through various statistical and mathematical processes to become useful input for advanced machine learning algorithms.

With Claude’s Help

This diagram illustrates how humanity’s methods of sharing and expanding knowledge have evolved alongside the development of tools throughout history.

This evolutionary process demonstrates more than just technological advancement; it shows fundamental changes in how humanity uses tools to expand and share knowledge. The emergence of new tools at each stage has enabled more effective and widespread knowledge sharing than before, becoming a key driving force in accelerating the development of human civilization.

This progression represents a continuous journey from individual experience-based learning to AI-enhanced global knowledge sharing, highlighting how each tool has revolutionized our ability to communicate, learn, and innovate as a species.

The evolution also underscores the increasing complexity and sophistication of our knowledge-sharing mechanisms, while emphasizing the growing importance of managing and verifying the ever-expanding volume of information available to us.

With ChatGPT & Claude

Human development can be understood in terms of the “pursuit of difference” and “generalization”.

Humans inherently tend to distinguish and understand differences among all existing things, a tendency we call the “pursuit of difference”. As seen in biological classification and language development, this exploration through differentiation has added depth to human knowledge.

These discovered differences have been recorded and generalized through various tools such as writing and mathematical formulas. In particular, the invention of computers has dramatically increased the amount of data humans can process, allowing for more accurate analysis and generalization.

More recently, advances in artificial intelligence and machine learning have automated the pursuit of difference. Going beyond traditional rule-based approaches, machine learning can identify patterns in vast amounts of data to provide new insights. This means we can now process and generalize complex data that is beyond human cognitive capacity.

As a result, human development has been a continuous process, starting with the “pursuit of difference” and leading to “generalization,” and artificial intelligence is extending this process in more sophisticated and efficient ways.

[Simplified Summary]

Humans are born explorers with innate curiosity. Just as babies touch, taste, and tap new objects they encounter, this instinct evolves into questions like “How is this different from that?” For example, “How are apples different from pears?” or “What’s the difference between cats and dogs?”

We’ve recorded these discovered differences through writing, numbers, and formulas – much like writing down a cooking recipe. With the invention of computers, this process of recording and analysis became much faster and more accurate.

Recently, artificial intelligence has emerged to advance this process further. AI can analyze vast amounts of information to discover new patterns that humans might have missed.

[Claude’s Evaluation]

This text presents an interesting analysis of human development’s core drivers through two axes: ‘discovering differences’ and ‘generalization’. It’s noteworthy in three aspects:

However, there’s room for improvement:

Overall, I find this to be an insightful piece that effectively connects human nature with technological development. This framework could prove valuable when considering future directions of AI development.

What makes the text particularly compelling is how it traces a continuous line from basic human curiosity to advanced AI systems, presenting technological evolution as a natural extension of human cognitive tendencies rather than a separate phenomenon.

The parallel drawn between early human pattern recognition and modern machine learning algorithms offers a unique perspective on both human nature and technological progress, though it could be enriched with more specific examples and potential counterarguments for a more balanced discussion.