Attitude towards AI

The Computing for the Fair Human Life.

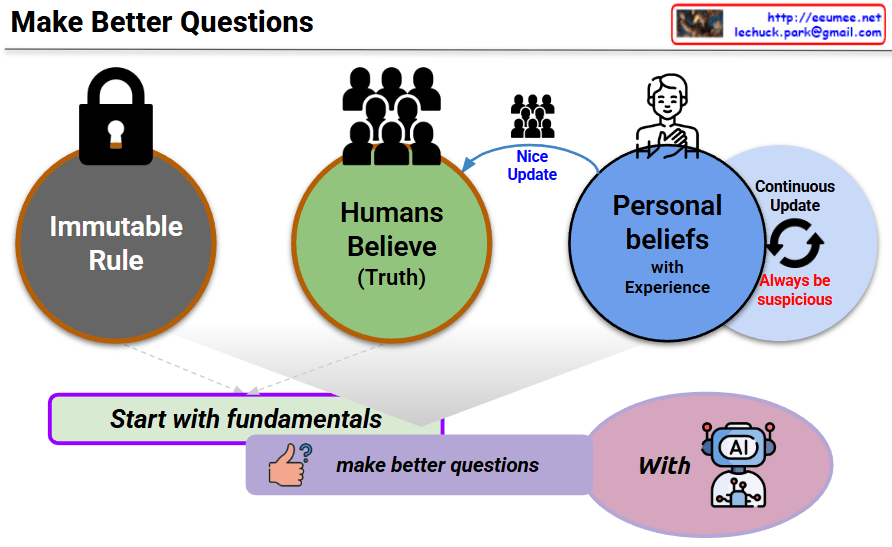

This diagram, titled “Make Better Questions,” illustrates a methodology for effective questioning.

This diagram ultimately suggests a method for optimizing interactions with AI through constant skepticism and adherence to fundamentals while maintaining flexible thinking. It emphasizes the importance of not settling for fixed beliefs but continuously learning and evolving.

With Claude

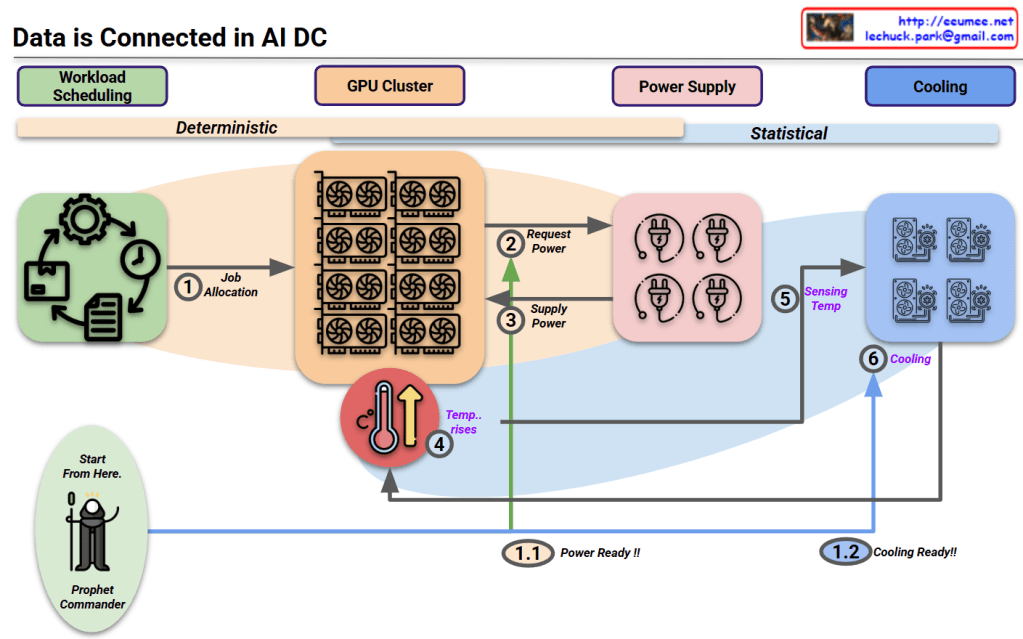

This diagram, titled “Data is Connected in AI DC,” illustrates the relationships that start from workload scheduling in an AI data center.


This diagram illustrates the interconnected workflow in AI data centers, beginning with workload scheduling that enables predictive resource management. The process flows from deterministic power requirements to statistical cooling needs, with the “Prophet Commander” enabling proactive preparation of power and cooling resources. This integrated approach demonstrates how workload prediction can drive efficient resource allocation throughout the entire AI data center ecosystem.
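The flow above can be sketched in a few lines, under loudly stated assumptions. This is not the diagram's actual system: `ScheduledJob`, `it_power_kw`, `cooling_kw`, and `prophet_commander` are hypothetical names. Each GPU is assumed to draw a constant wattage while its job runs, so IT power follows deterministically from the schedule. Cooling is estimated statistically, as IT load multiplied by a historical cooling-to-IT ratio plus a safety margin (mean + k standard deviations).

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class ScheduledJob:
    """A job already placed on the schedule (hypothetical fields)."""
    name: str
    start_hour: int
    duration_hours: int
    gpus: int
    watts_per_gpu: float  # assumed constant draw per GPU while the job runs


def it_power_kw(jobs, horizon_hours):
    """Deterministic step: IT power per hour follows directly from the schedule."""
    profile = [0.0] * horizon_hours
    for job in jobs:
        end = min(job.start_hour + job.duration_hours, horizon_hours)
        for hour in range(max(job.start_hour, 0), end):
            profile[hour] += job.gpus * job.watts_per_gpu / 1000.0
    return profile


def cooling_kw(it_profile, ratio_samples, k=2.0):
    """Statistical step: scale IT load by a historical cooling-to-IT ratio.

    The factor is mean + k * sample standard deviation of past ratios,
    which adds a safety margin on top of the typical value.
    """
    factor = mean(ratio_samples) + k * stdev(ratio_samples)
    return [kw * factor for kw in it_profile]


def prophet_commander(jobs, horizon_hours, ratio_samples):
    """Hypothetical planner: (hour, IT kW, cooling kW) to prepare in advance."""
    power = it_power_kw(jobs, horizon_hours)
    cooling = cooling_kw(power, ratio_samples)
    return list(zip(range(horizon_hours), power, cooling))


if __name__ == "__main__":
    jobs = [
        ScheduledJob("train", start_hour=0, duration_hours=2, gpus=8, watts_per_gpu=700.0),
        ScheduledJob("infer", start_hour=1, duration_hours=1, gpus=4, watts_per_gpu=500.0),
    ]
    for hour, p, c in prophet_commander(jobs, 4, [0.30, 0.35, 0.40]):
        print(f"hour {hour}: prepare {p:.2f} kW IT power, {c:.2f} kW cooling")
```

The split mirrors the diagram. Once the schedule is known, power needs no guessing. Cooling depends on conditions the schedule does not capture, so it is given a margin rather than an exact figure.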

With Claude

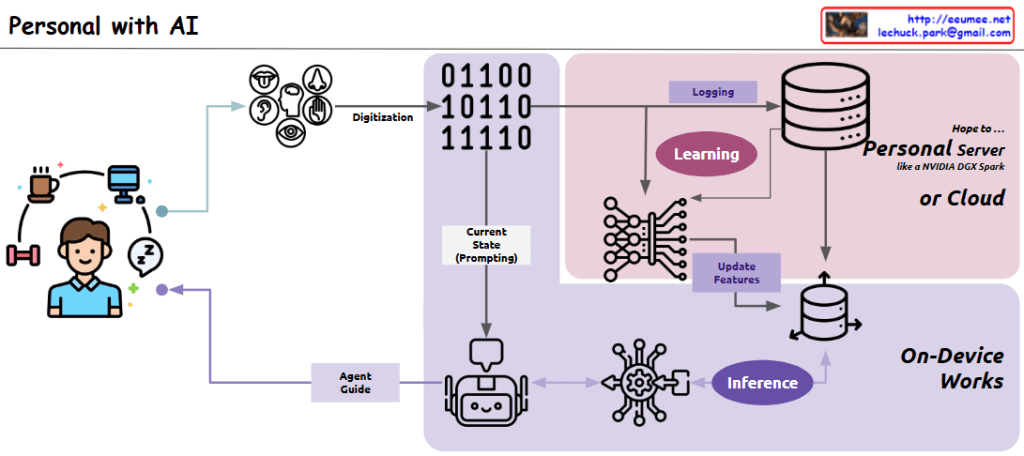

This diagram illustrates a “Personal Agent” system architecture that shows how everyday life is digitized to create an AI-based personal assistant:

Left side: The user’s daily activities (coffee, computer, exercise, sleep) are represented, which serve as the source for digitization.

Center-left: Various sensors (visual, auditory, tactile, olfactory, gustatory) capture the user’s daily activities and convert them through the “Digitization” process.

Center: The “Current State (Prompting)” component stores the digitized current state data, which is provided as prompting information to the AI agent.

Upper right (pink area): Two key processes take place in this area.

This section runs on a “Personal Server or Cloud,” preferably a personal GPU server such as NVIDIA DGX Spark, or alternatively a cloud environment.

Lower right: In the “On-Device Works” area, the “Inference” process occurs. Based on current state data, the AI agent infers guidance needed for the user, and this process is handled directly on the user’s personal device.

Center bottom: The cute robot icon represents the AI agent, which provides personalized guidance to the user through the “Agent Guide” component.

Overall, this system has a cyclical structure that digitizes the user’s daily life, learns from that data to continuously update a personalized vector database, and uses the current state as a basis for the AI agent to provide customized guidance through an inference process that runs on-device.
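The cycle can be sketched as a small data pipeline. This is a toy model, not the architecture in the image. `SensorEvent`, `digitize`, `PersonalVectorDB`, and `build_prompt` are hypothetical names. `toy_embed` is a word-count stand-in for a real learned embedding model. The on-device inference step is left out: it would pass the resulting prompt to a local model.

```python
import math
import re
from dataclasses import dataclass, field


@dataclass
class SensorEvent:
    sense: str    # e.g. "visual", "auditory" (hypothetical labels)
    reading: str  # an already-interpreted observation, e.g. "drinking coffee"


def toy_embed(text, vocab):
    """Stand-in embedding: word counts over a fixed vocabulary.
    A real system would use a learned embedding model instead."""
    words = re.findall(r"[a-z]+", text.lower())
    return [float(words.count(term)) for term in vocab]


def cosine(a, b):
    """Cosine similarity; returns 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


@dataclass
class PersonalVectorDB:
    vocab: list
    items: list = field(default_factory=list)  # (vector, text) pairs

    def add(self, text):
        """Learning step: store a digitized memory as a vector."""
        self.items.append((toy_embed(text, self.vocab), text))

    def nearest(self, query):
        """Return the stored text most similar to the query, or None if empty."""
        if not self.items:
            return None
        q = toy_embed(query, self.vocab)
        return max(self.items, key=lambda item: cosine(q, item[0]))[1]


def digitize(events):
    """Digitization: flatten sensor events into one current-state string."""
    return "; ".join(f"{e.sense}: {e.reading}" for e in events)


def build_prompt(current_state, memory):
    """Current State (Prompting): combine live state with retrieved history."""
    return (
        f"Current state: {current_state}\n"
        f"Relevant history: {memory}\n"
        "Suggest one helpful action for the user."
    )


if __name__ == "__main__":
    db = PersonalVectorDB(vocab=["coffee", "sleep", "exercise", "focus"])
    db.add("Coffee after 3pm disrupted sleep")
    db.add("Morning exercise improved focus")
    state = digitize([SensorEvent("visual", "drinking coffee"),
                      SensorEvent("auditory", "keyboard typing")])
    print(build_prompt(state, db.nearest(state)))
```

With the sample data, the coffee observation retrieves the stored memory about coffee and sleep. That memory would give the agent context for its guidance.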

With Claude

This image, titled “Data Explosion in Data Center,” illustrates three key challenges faced by modern data centers.

This diagram comprehensively explains how the exponential growth of data impacts data center design and operations, particularly highlighting the challenges and innovations in power consumption and thermal management.

With Claude

Data centers have expanded rapidly from the early days of cloud computing to the explosive growth driven by AI and ML.

Initially, growth was steady as enterprises moved to the cloud. However, with the rise of AI and ML, demand for powerful GPU-based computing has surged.

The global data center market, which grew at a CAGR of around 10% during the cloud era, is now accelerating to an estimated CAGR of 15–20%, fueled by AI workloads.

This shift is marked by massive parallel processing with GPUs, transforming data centers into AI factories.
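To see what the jump in CAGR means, apply the compound-growth formula V_n = V_0 (1 + r)^n to the rates quoted above. The 5-year horizon is only an illustrative assumption, and no market sizes are implied.

```python
def growth_multiple(cagr, years):
    """Compound growth: value after `years` relative to the starting value."""
    return (1.0 + cagr) ** years


def implied_cagr(start_value, end_value, years):
    """Inverse: the constant annual rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    horizon = 5  # illustrative horizon, not taken from the source
    for label, rate in [("cloud era", 0.10), ("AI era, low", 0.15), ("AI era, high", 0.20)]:
        print(f"{label}: {rate:.0%} CAGR -> x{growth_multiple(rate, horizon):.2f} over {horizon} years")
```

Over five years, 10% compounds to roughly 1.61x. Over the same period, 15% gives about 2.01x and 20% about 2.49x. A few points of annual growth therefore add up to a much larger build-out.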

With ChatGPT

The image shows a diagram explaining “Legacy AI,” that is, rule-based AI systems. The diagram is structured in three main sections.

This diagram effectively illustrates the structure and workflow of traditional rule-based AI systems, demonstrating how they operate like conventional programming with IF-THEN statements. The system first analyzes data, then prioritizes information based on predefined criteria, and finally makes decisions by selecting the optimal choice according to the programmed rules. This represents the foundation of early AI approaches before the advent of modern machine learning techniques, where explicit rules rather than learned patterns guided the decision-making process.
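The three stages (analyze, prioritize, decide) can be sketched as a tiny rule engine. The domain, the thresholds, and the names `Rule`, `analyze`, `RULES`, and `decide` are all hypothetical, chosen only for illustration. Prioritization is modeled here as a fixed, predefined priority order on the rules. The highest-priority rule whose IF-condition holds fires its THEN-action.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    priority: int                      # predefined: lower number = more important
    condition: Callable[[dict], bool]  # the IF part
    action: str                        # the THEN part


def analyze(raw):
    """Step 1: turn raw readings into facts the rules can test."""
    return {
        "temp_c": raw["inlet_temp_c"],
        "load_pct": 100.0 * raw["power_kw"] / raw["capacity_kw"],
    }


# Illustrative thresholds only; a real system would use its own criteria.
RULES = [
    Rule("overheat", 1, lambda f: f["temp_c"] > 35.0, "shut down rack"),
    Rule("overload", 2, lambda f: f["load_pct"] > 90.0, "migrate workloads"),
    Rule("warm", 3, lambda f: f["temp_c"] > 27.0, "increase cooling"),
    Rule("default", 99, lambda f: True, "no action"),
]


def decide(raw, rules=RULES):
    facts = analyze(raw)
    # Step 2: order the rules by their predefined priority.
    ordered = sorted(rules, key=lambda r: r.priority)
    # Step 3: the first rule whose IF-condition holds fires its THEN-action.
    for rule in ordered:
        if rule.condition(facts):
            return rule.action
    return None  # only reachable if no default rule is supplied


if __name__ == "__main__":
    print(decide({"inlet_temp_c": 30.0, "power_kw": 9.5, "capacity_kw": 10.0}))
```

In the usage line, both the "overload" and "warm" rules match. "Overload" wins because of its higher predefined priority. The system learns nothing from the data: changing its behavior means editing the rules by hand.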

With Claude