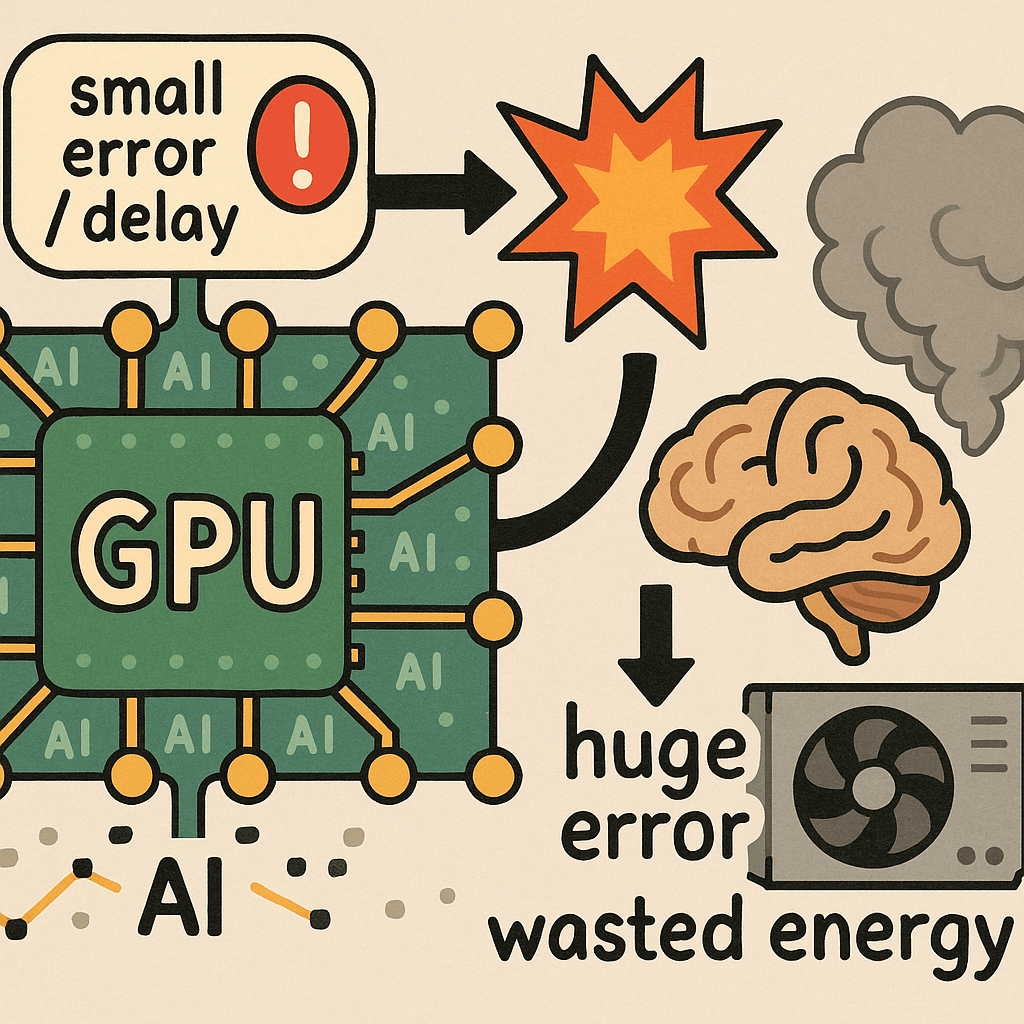

The image shows how even a small error or delay in GPU-based, large-scale parallel AI processing can cause major output failures and wasted energy. It highlights the importance of data quality, especially accuracy and precision, in AI systems.

The Computing for the Fair Human Life.


Humans explore to learn. AI waits for data.

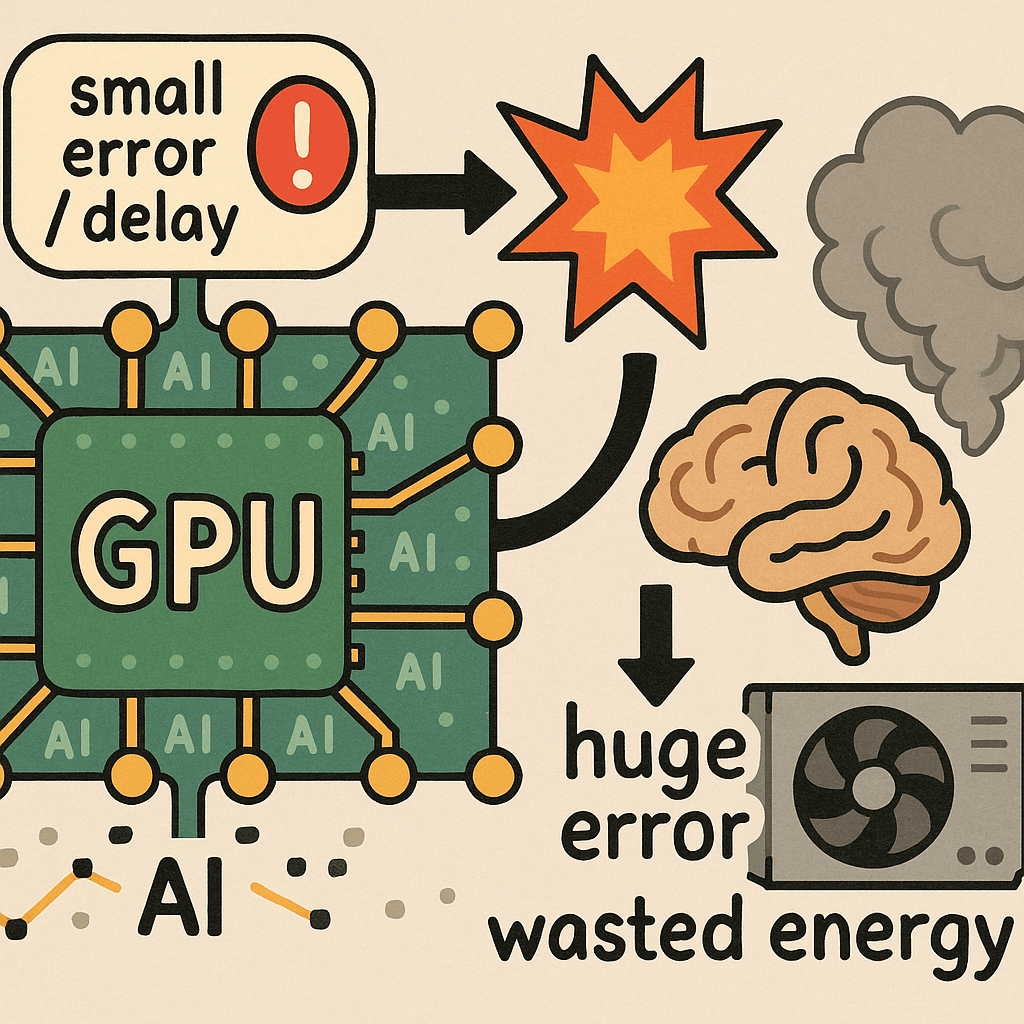

This image titled “Machine Changes” visually illustrates the evolution of technology and machinery across different eras.

The diagram progresses from left to right with arrows showing the developmental stages:

Stage 1 (Left): Manual Labor Era

Stage 2: Mechanization Era

Stage 3 (Blue section): Automation and Computer Era

Stage 4 (Purple section): AI and Smart Technology Era

Additional Insight: The transition from the CPU era to the GPU era marks a fundamental shift in what drives technological capability. In the CPU era, program logic was the critical factor – the sophistication of algorithms and code determined system performance. However, in the GPU era, training data has become paramount – the quality, quantity, and diversity of data used to train AI models now determines the intelligence and effectiveness of these systems. This represents a shift from logic-driven computation to data-driven learning.
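The contrast between logic-driven and data-driven behaviour can be sketched in a few lines. This is only an illustrative toy, not something taken from the infographic: the function names (`rule_based`, `fit_threshold`, `learned`), the temperature samples, and the midpoint-of-class-means rule are all assumptions chosen to keep the example small.

```python
def rule_based(temp_c):
    # Logic-driven: the behaviour is fixed by a hand-written rule.
    # The 30.0 cutoff is a hypothetical value picked by the programmer.
    return temp_c > 30.0


def fit_threshold(samples):
    # Data-driven: the cutoff is derived from labeled examples.
    # Assumed rule: midpoint between the mean of each class.
    hot = [t for t, is_hot in samples if is_hot]
    cold = [t for t, is_hot in samples if not is_hot]
    return (sum(hot) / len(hot) + sum(cold) / len(cold)) / 2


def learned(temp_c, threshold):
    # Same decision shape as rule_based, but the threshold comes from data.
    return temp_c > threshold


# Hypothetical training data: (temperature in Celsius, labeled "hot"?)
samples = [(18.0, False), (22.0, False), (28.0, True), (32.0, True)]
threshold = fit_threshold(samples)

print(rule_based(27.0))          # decided by the programmer's rule
print(learned(27.0, threshold))  # decided by the training data
```

In the first function, changing the outcome means editing the code. In the second, changing the labeled samples changes the outcome, which is why the quality of those samples matters so much.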

Overall, this infographic captures humanity’s technological evolution: Manual Labor → Mechanization → Automation → AI/Robotics, highlighting how the foundation of technological advancement has evolved from human skill to mechanical power to programmed logic to data-driven intelligence.

With Claude

This diagram illustrates a systematic framework titled “Monitoring is from changes.” It is structured as a hierarchy that starts with simple, certain methods and progresses toward increasingly complex analytical techniques.

The detection process follows the same progression, from change detection through anomaly detection to error detection, showing how monitoring systems identify and respond to increasingly serious issues.
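The three detection levels can be sketched as a single classifier over a stream of readings. This is a minimal sketch under stated assumptions, not the method from the diagram: the function name `classify`, the z-score test for anomalies, the `hard_limit` parameter for errors, and the sample readings are all hypothetical.

```python
from statistics import mean, stdev


def classify(history, new_value, hard_limit, z_threshold=3.0):
    """Return the most serious level triggered by new_value.

    Assumed definitions (not from the source diagram):
      error   - value exceeds a fixed hard limit
      anomaly - value is more than z_threshold standard deviations
                from the mean of the history
      change  - value differs from the previous reading
    """
    # Most serious check first: a hard limit violation is an error.
    if new_value > hard_limit:
        return "error"

    # Statistical check: needs at least two readings for a standard deviation.
    if len(history) >= 2:
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(new_value - mu) / sigma > z_threshold:
            return "anomaly"

    # Simplest, most certain check: did the value change at all?
    if history and new_value != history[-1]:
        return "change"

    return "no change"


# Hypothetical temperature readings in Celsius.
history = [20.0, 20.1, 19.9, 20.0, 20.2]

for value in (20.2, 20.1, 25.0, 95.0):
    print(value, classify(history, value, hard_limit=80.0))
```

The change check needs no model and cannot be wrong about what it reports, while the anomaly and error checks depend on a chosen threshold or limit, which mirrors the move from certain to more complex analysis.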

With Claude

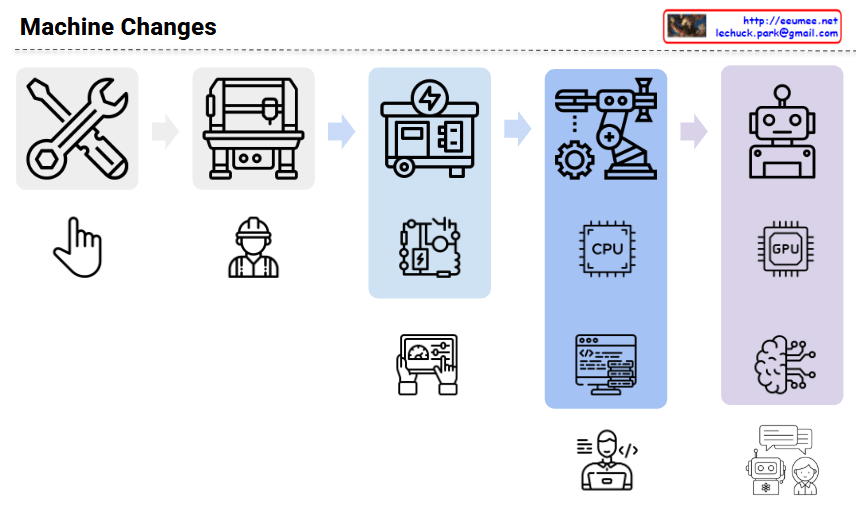

The image shows a data security diagram with three main approaches to securing data systems.

The diagram illustrates the evolution of data security approaches from simpler encryption and authentication methods, to more complex network security architectures, and finally to comprehensive end-to-end security solutions. It also questions whether more complex systems might actually introduce more vulnerabilities, suggesting that added complexity does not always mean better security.

With Claude

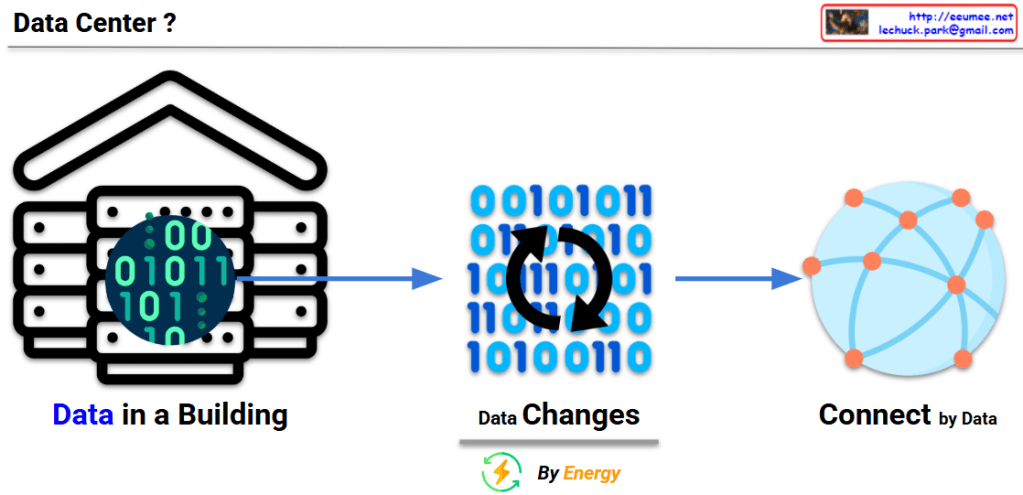

This image explains the fundamental concept and function of a data center.

This diagram visualizes the essential definition of a data center – a physical building that stores data, consumes energy to process that data, and plays a crucial role in connecting this data to the external world through the internet.

With Claude

This image titled “Data Explosion in Data Center” illustrates three key challenges faced by modern data centers.

This diagram comprehensively explains how the exponential growth of data impacts data center design and operations, particularly highlighting the challenges and innovations in power consumption and thermal management.

With Claude