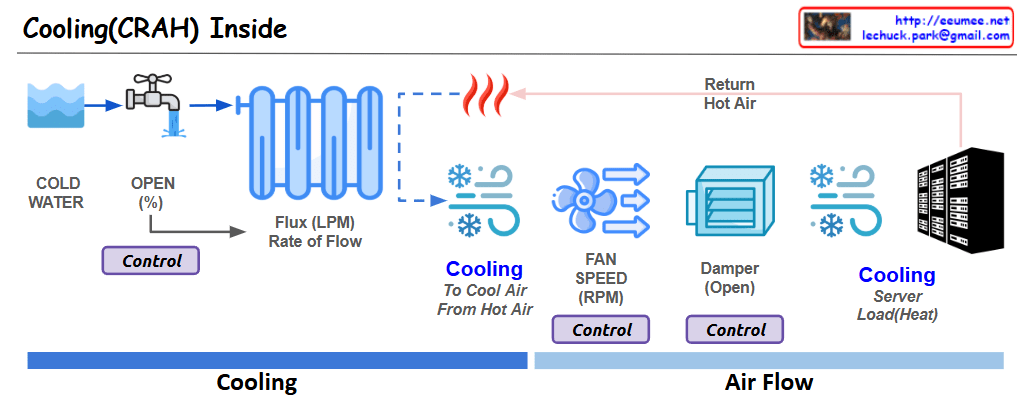

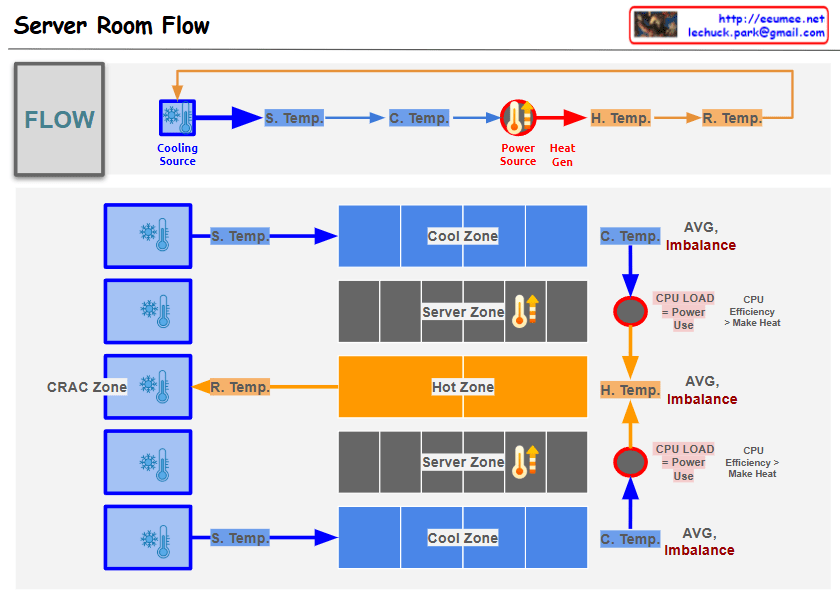

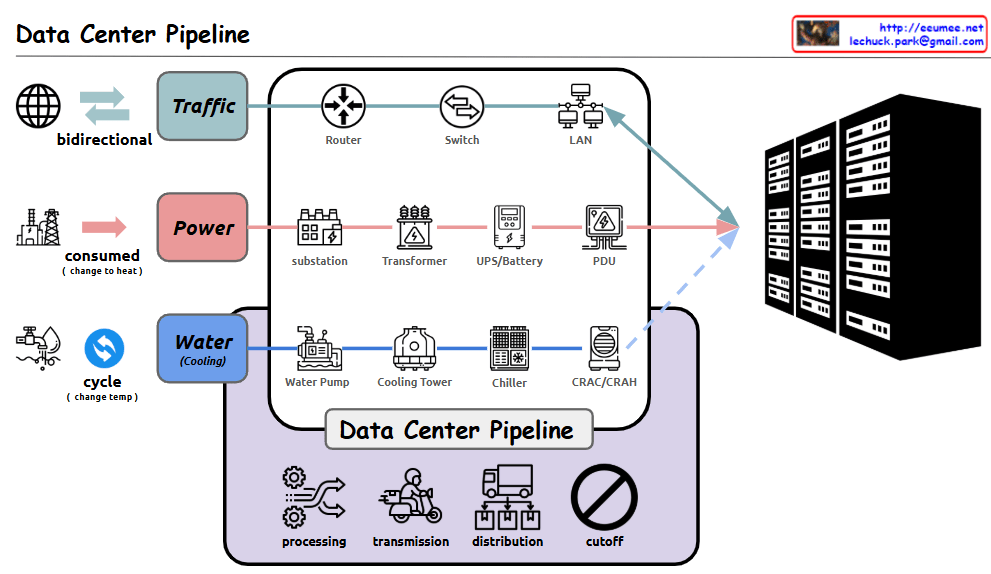

Data Center Cooling System Overview

Cooling System Operation Flow

- Cooling Tower: Produces cooling water by releasing the heat it carries to the outside atmosphere.

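- Chiller: Removes heat from the chilled-water loop to produce chilled water and rejects that heat to the cooling water. The condenser, where this heat is handed off to the cooling water, plays a crucial role in this process.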

- Air Handling Unit: Uses chilled water to cool air, producing the cool supply air for the server room.

- Server Room: The cooled air is ultimately supplied to the server room to remove heat from IT equipment.

Key Control and Conversion Equipment

- Pump: Circulates cooling and chilled water, regulating pressure and flow rate to maintain appropriate flow throughout the system.

- Header: Distributes cooling and chilled water to the connected equipment and collects the return flow, keeping distribution uniform across the system.

- Heat Exchanger/Condenser: Transfers heat between loops at various stages; in the chiller, the condenser hands heat off to the cooling water.

- Fan: Circulates cooling air to the server room.

Core Measurement Metrics

- Temperature: Monitors the temperature of cooling water, chilled water, and air at each stage to evaluate system efficiency.

- Water Flow Rate: Measures the amount of cooling and chilled water circulating in the system to ensure adequate cooling capacity.

- Supply/Return Temperature Differential: Measures the temperature difference before and after passing through each component to assess heat exchange efficiency.

- Power Usage: Monitors the power consumption of pumps, chillers, fans, and other equipment to manage energy efficiency.
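The flow-rate and temperature-differential metrics combine into a heat transfer rate through the standard relation Q = ṁ · c_p · ΔT. The sketch below is a minimal illustration under stated assumptions: the function name `heat_transfer_kw`, the units, and the constant water properties (1000 kg/m³, 4.186 kJ/(kg·K)) are illustrative choices, not values taken from the diagram.

```python
# Assumed, approximate properties of liquid water (illustrative constants).
WATER_DENSITY_KG_PER_M3 = 1000.0
WATER_SPECIFIC_HEAT_KJ_PER_KG_K = 4.186


def heat_transfer_kw(flow_m3_per_h: float,
                     supply_temp_c: float,
                     return_temp_c: float) -> float:
    """Heat picked up by a water loop, in kW, from Q = m_dot * c_p * delta_T.

    flow_m3_per_h: volumetric water flow rate in cubic meters per hour.
    supply_temp_c: water temperature leaving toward the load, in Celsius.
    return_temp_c: water temperature coming back from the load, in Celsius.
    """
    # Convert volumetric flow (m^3/h) to mass flow (kg/s).
    mass_flow_kg_per_s = flow_m3_per_h * WATER_DENSITY_KG_PER_M3 / 3600.0
    # Supply/return temperature differential (a difference in C equals K).
    delta_t_k = return_temp_c - supply_temp_c
    # kg/s * kJ/(kg*K) * K = kJ/s = kW
    return mass_flow_kg_per_s * WATER_SPECIFIC_HEAT_KJ_PER_KG_K * delta_t_k


if __name__ == "__main__":
    # Hypothetical chilled-water loop: 100 m^3/h, 7 C supply, 12 C return.
    print(f"{heat_transfer_kw(100.0, 7.0, 12.0):.1f} kW")
```

With these hypothetical inputs the loop carries roughly 581 kW. A shrinking differential at constant flow suggests the loop is moving more water than the load requires.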

These metrics are monitored in detail at individual components, such as pumps and condensers, to optimize the overall performance of the cooling system and improve energy efficiency.
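One common way to relate power usage to cooling output is a coefficient of performance (COP): heat removed divided by electrical power consumed. The following is a minimal sketch, assuming per-equipment power readings are already available in kW; the function name `system_cop` and the example figures are hypothetical.

```python
def system_cop(cooling_kw: float, power_kw_by_equipment: dict[str, float]) -> float:
    """Ratio of heat removed to total electrical power drawn by the cooling plant.

    cooling_kw: heat removed from the server room, in kW.
    power_kw_by_equipment: electrical power per equipment group, in kW.
    """
    total_power_kw = sum(power_kw_by_equipment.values())
    if total_power_kw <= 0:
        raise ValueError("total power must be positive")
    return cooling_kw / total_power_kw


if __name__ == "__main__":
    # Hypothetical readings for pumps, chillers, and fans.
    readings = {"chillers": 100.0, "pumps": 20.0, "fans": 25.0}
    print(f"System COP: {system_cop(580.0, readings):.2f}")
```

Tracking this ratio per equipment group as well as for the whole plant makes it easier to see which component is degrading efficiency.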

With Claude