With ChatGPT’s help

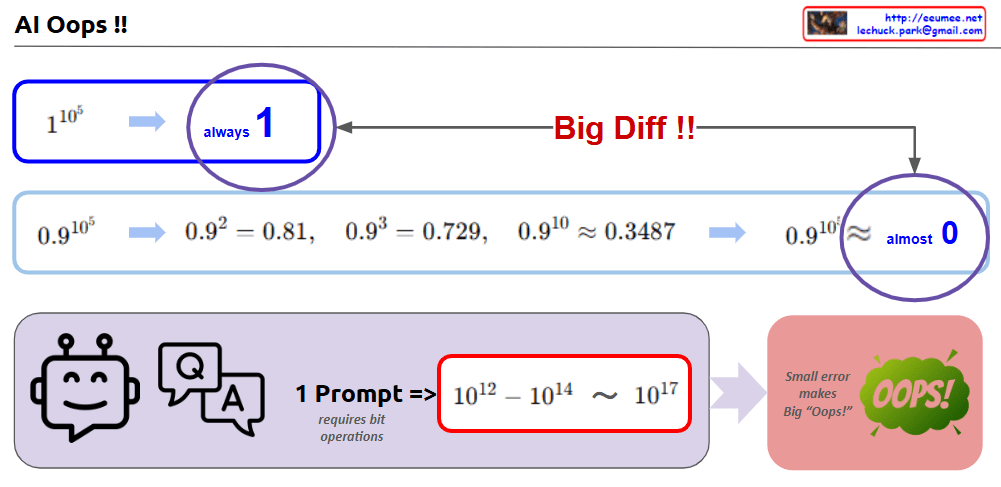

This image highlights how small errors in AI or other computations can grow into large differences or problems. Here’s a point-by-point explanation:

- Small changes lead to big differences
  - $1^{10^5}$: This always equals 1, no matter how many times the multiplication is repeated.
  - $0.9^{10^5}$: By contrast, repeatedly multiplying by 0.9 shrinks the value toward 0, which creates a huge gap. For example:
    - $0.9^2 = 0.81$
    - $0.9^3 = 0.729$
    - $0.9^{10} \approx 0.3487$
    - $0.9^{10^5} \approx 1.8 \times 10^{-4576}$, which is effectively 0


- The “Oops” in AI or calculations
  - A single incorrect computation or prompt can trigger an enormous amount of processing, on the order of $10^{12}$ to $10^{17}$ bit operations.
  - This shows how a small error can turn into a big “Oops!” across the whole system.
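The powers of 1 and 0.9 in the list above can be checked with a short Python sketch (an illustration added here, not something shown in the image). It also exposes a practical wrinkle: $0.9^{10^5}$ is far smaller than the smallest positive 64-bit float, so direct evaluation rounds to `0.0`, and the sketch recovers its magnitude by computing the base-10 logarithm instead.

```python
import math

# 1 raised to any power stays exactly 1 (integer arithmetic, no rounding).
one_pow = 1 ** 10**5

# 0.9 shrinks quickly as the exponent grows.
for n in (2, 3, 10):
    print(f"0.9^{n} = {0.9 ** n:.4f}")

# 0.9^(10^5) is far below the smallest positive float64 (about 5e-324),
# so evaluating it directly underflows to 0.0.
direct = 0.9 ** 10**5

# Log space keeps the magnitude: log10(0.9^n) = n * log10(0.9).
log10_value = 10**5 * math.log10(0.9)

print(one_pow)                # 1
print(direct)                 # 0.0
print(round(log10_value, 2))  # -4575.75, i.e. about 1.8e-4576
```

Working in log space like this is a common way to track quantities that would otherwise underflow ordinary floating-point types.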

Summary:

The image visually explains the importance of precision and how minor computational inaccuracies can cascade into significant consequences, especially in AI or large-scale calculations.
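As a hedged illustration of how minor inaccuracies cascade (an example chosen here, not taken from the image), the Python sketch below shows rounding error building up in ordinary floating-point addition. The value `0.1` has no exact binary representation, so each addition is off by a tiny amount, and the error grows with the number of additions. `math.fsum` from the standard library compensates for the lost low-order bits and returns the correctly rounded sum.

```python
import math

# Add 0.1 ten times with ordinary float addition.
naive_total = 0.0
for _ in range(10):
    naive_total += 0.1

# math.fsum tracks the lost low-order bits and returns the correctly rounded sum.
exact_total = math.fsum([0.1] * 10)

print(naive_total)         # 0.9999999999999999
print(naive_total == 1.0)  # False
print(exact_total == 1.0)  # True

# With a million additions, the accumulated drift is far larger than after ten.
n = 10**6
big_naive = 0.0
for _ in range(n):
    big_naive += 0.1
drift = abs(big_naive - math.fsum([0.1] * n))
print(f"drift after {n} additions: {drift:.2e}")
```

Each individual rounding error is around $10^{-17}$, yet after a million steps the total is visibly off, which mirrors the summary's point at a small scale.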