From ChatGPT with some prompting

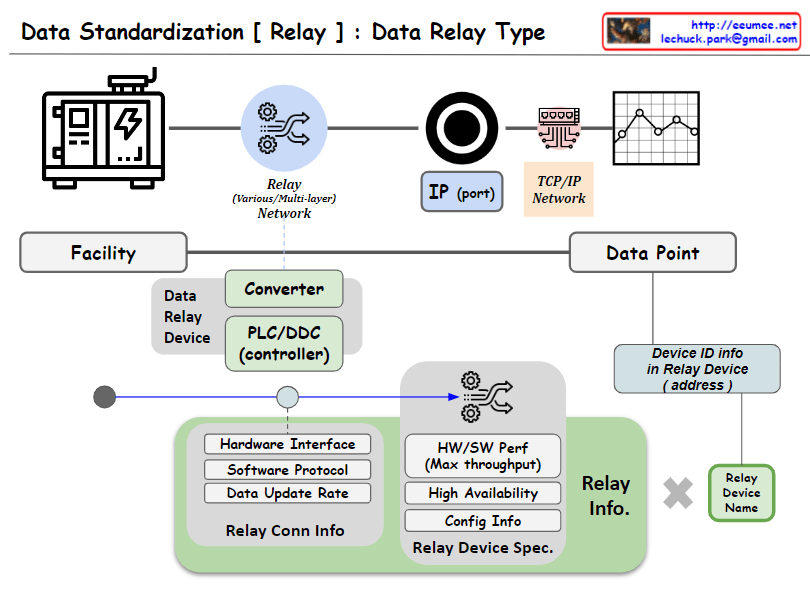

The image appears to illustrate how data is collected from a facility, focusing on the intermediate step in which devices such as PLCs (Programmable Logic Controllers) or DDCs (Direct Digital Controllers) convert or relay that data. The diagram breaks the role of these relay devices into the following elements:

Data Conversion (Converter): Converts raw data from the facility into a format that can be transmitted over a network, aligning the protocol or data format so that other devices can read it.

Communication Gateway (PLC/DDC controller): The relay device also acts as a gateway between the facility and the TCP/IP network. It manages the flow of data, transmits it in a form that other devices on the network can understand, and in some cases performs more complex processing on it.

Relay Information (Relay Info): Describes the functional and technical details of the converter: hardware interfaces, software protocols, data update rates, and relay connection information. It also covers the device’s performance capability (maximum throughput), availability, configuration information, and relay device specifications.

Device Identification Information (Device ID info): Each relay device has a unique identifier (its address), which is needed to distinguish and address individual devices on the network.

Relay Device Naming (Relay Device Name): Each device is given a recognizable name so that it can be easily identified and referred to within the system.
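To make these elements concrete, here is a minimal Python sketch of how they might fit together. Everything in it is an assumption for illustration and does not come from the diagram: the class names `RelayInfo` and `RelayDevice`, the function `convert_reading`, the example values (RS-485, Modbus RTU, the IP address), the 16-bit big-endian raw encoding, and the 0.1 scale factor are all hypothetical.

```python
import json
import struct
from dataclasses import dataclass


@dataclass(frozen=True)
class RelayInfo:
    """Functional and technical details of the converter (hypothetical fields)."""
    hardware_interface: str         # e.g. "RS-485" (example value, an assumption)
    protocol: str                   # e.g. "Modbus RTU" (example value, an assumption)
    update_interval_s: float        # how often the device refreshes its data
    max_throughput_msgs_per_s: int  # performance capability


@dataclass(frozen=True)
class RelayDevice:
    """A relay device: name, unique address, and its relay info."""
    name: str       # Relay Device Name
    address: str    # Device ID info (unique on the network)
    info: RelayInfo


def convert_reading(device: RelayDevice, raw: bytes, scale: float = 0.1) -> str:
    """Data conversion step: raw facility bytes -> network-friendly JSON.

    Assumes the raw value is an unsigned 16-bit big-endian count and that
    multiplying by `scale` yields the engineering value.
    """
    (count,) = struct.unpack(">H", raw)
    return json.dumps({
        "device": device.name,
        "address": device.address,
        "protocol": device.info.protocol,
        "value": round(count * scale, 3),
    })


if __name__ == "__main__":
    device = RelayDevice(
        name="chiller-plc-01",
        address="192.168.10.21",
        info=RelayInfo("RS-485", "Modbus RTU", 1.0, 100),
    )
    # Two raw bytes as they might arrive from the facility side.
    print(convert_reading(device, b"\x00\xeb"))
```

Keeping the relay info, address, and name in one immutable record is just one possible design. It lets the gateway side attach the same identifying fields to every message it forwards onto the TCP/IP network.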

Together, these elements standardize how data is communicated and processed, so that it can be collected efficiently and handled promptly. The diagram shows how they interact and what role each one plays in the data relay process.