From Claude with some prompting

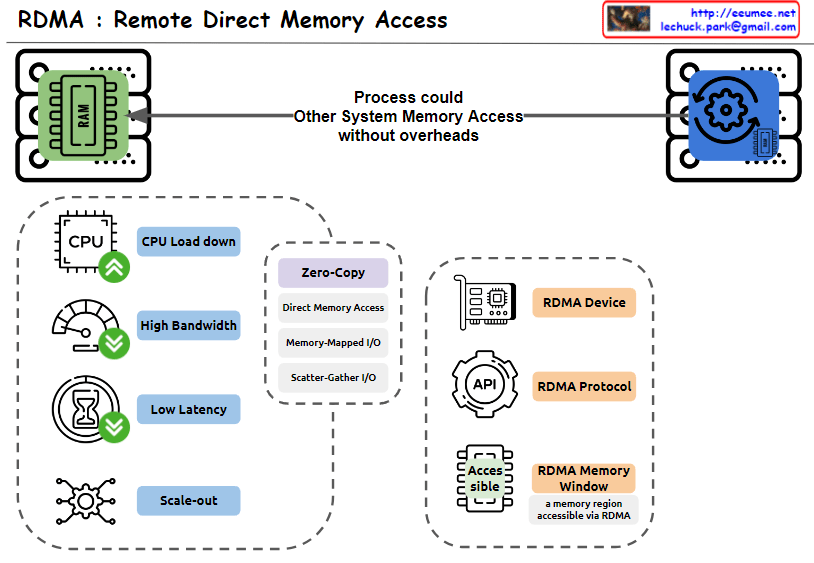

This image explains RDMA (Remote Direct Memory Access). Here’s an interpretation in English:

- The top of the image shows that RDMA allows one system to access the memory of another system without overheads (that is, without the remote CPU and operating system handling each transfer).

- The bottom left box lists the main advantages of RDMA:
  - CPU Load down
  - High Bandwidth
  - Low Latency
  - Scale-out

- The central box describes key features of RDMA:
  - Zero-Copy
  - Direct Memory Access
  - Memory-Mapped I/O
  - Scatter-Gather I/O

- The bottom right box lists RDMA-related components:
  - RDMA Device
  - RDMA Protocol
  - RDMA Memory Window (defined as “a memory region accessible via RDMA”)
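
Two of the features listed above, Zero-Copy and Scatter-Gather I/O, can be illustrated on a single machine. The sketch below is only a local analogy and not RDMA itself: it uses Python's `memoryview` to show two views sharing one buffer without copying, and the POSIX-only `os.writev` / `os.readv` calls to send and receive several buffers in one call. The buffer contents and variable names are made up for illustration, and the kernel still copies data through the pipe here, which is exactly the step a real RDMA device avoids.

```python
import os

# Zero-copy idea: memoryview slices refer to the same underlying buffer.
buf = bytearray(b"header--payload-")
view = memoryview(buf)
header, payload = view[:8], view[8:]   # no bytes are copied here
payload[0:7] = b"PAYLOAD"              # the write goes straight through to buf

# Scatter-gather idea: one call transfers several separate buffers.
r, w = os.pipe()
try:
    # Gather: write both views with a single system call.
    sent = os.writev(w, [header, payload])
    # Scatter: fill two separate destination buffers with a single system call.
    dst_a, dst_b = bytearray(8), bytearray(8)
    received = os.readv(r, [dst_a, dst_b])
finally:
    os.close(r)
    os.close(w)
```

After this runs, `buf` reflects the in-place write, and `dst_a` and `dst_b` hold the first and second eight bytes of what was sent.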

The image provides a concise overview of RDMA technology, highlighting its main features and benefits. It illustrates how RDMA enables direct memory access between systems, which reduces CPU load, raises bandwidth, and lowers latency.
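
To make the idea of "a memory region accessible via RDMA" more concrete, here is a toy, single-process model of one-sided access. Everything in it is hypothetical: the `RegisteredRegion` class, its methods, and the way the key is generated are illustrative names, not part of any RDMA library. The sketch assumes the common pattern in which a host registers a buffer, hands out a remote key, and the remote side reads or writes by presenting that key with an offset. In real RDMA the network adapter performs these checks and transfers, so the owning host's CPU does not run code like this per request.

```python
import secrets


class RegisteredRegion:
    """Toy stand-in for a memory region exposed for remote access (hypothetical)."""

    def __init__(self, size):
        self.buffer = bytearray(size)
        self.rkey = secrets.randbits(32)  # key a remote peer must present

    def _check(self, rkey, offset, length):
        # Reject requests with the wrong key or outside the registered range.
        if rkey != self.rkey:
            raise PermissionError("invalid remote key")
        if offset < 0 or length < 0 or offset + length > len(self.buffer):
            raise IndexError("access outside registered region")

    def remote_write(self, rkey, offset, data):
        self._check(rkey, offset, len(data))
        self.buffer[offset:offset + len(data)] = data

    def remote_read(self, rkey, offset, length):
        self._check(rkey, offset, length)
        return bytes(self.buffer[offset:offset + length])


# Usage: the "remote" side only needs the key and an offset.
region = RegisteredRegion(64)
region.remote_write(region.rkey, 0, b"hello")
data = region.remote_read(region.rkey, 0, 5)
```

The point of the model is the shape of the interaction: the owner sets up the region once, and every later transfer is described entirely by the requester.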