I’m sorry to you and I wish you happiness. But I …

The Computing for the Fair Human Life.

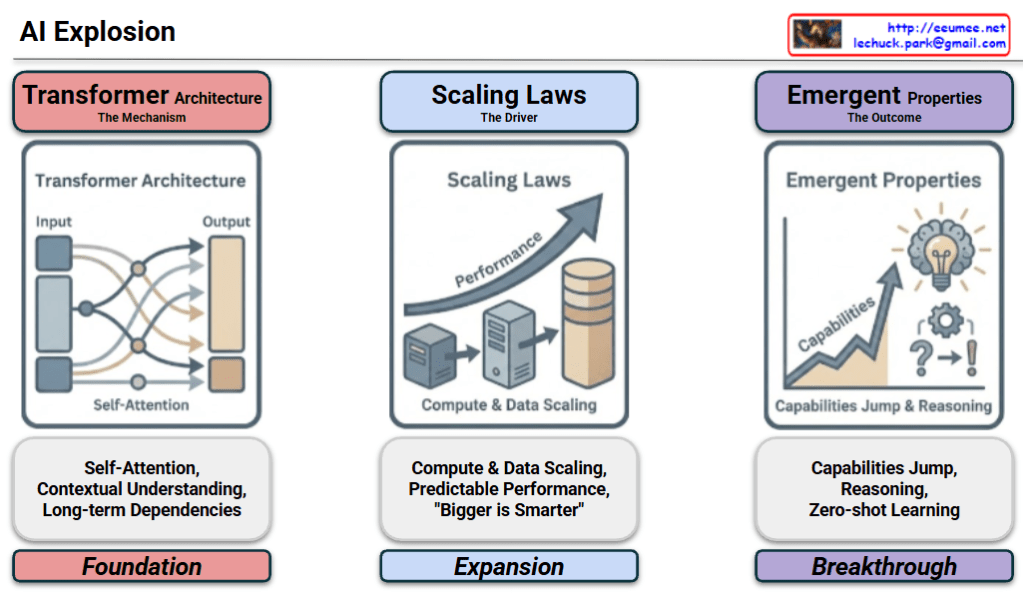

This diagram traces how modern AI, specifically large language models (LLMs), advanced so rapidly. It is organized as a logical flow: Foundation → Expansion → Breakthrough.

1. The Foundation: Transformer Architecture

2. The Expansion: Scaling Laws

3. The Breakthrough: Emergent Properties

The diagram illustrates the causal chain of AI evolution: the Transformer provided the capability to learn, Scaling Laws amplified that capability through size, and Emergent Properties were the revolutionary outcome of that scale.

#AIExplosion #LLM #TransformerArchitecture #ScalingLaws #EmergentProperties #GenerativeAI #TechTrends

With Gemini

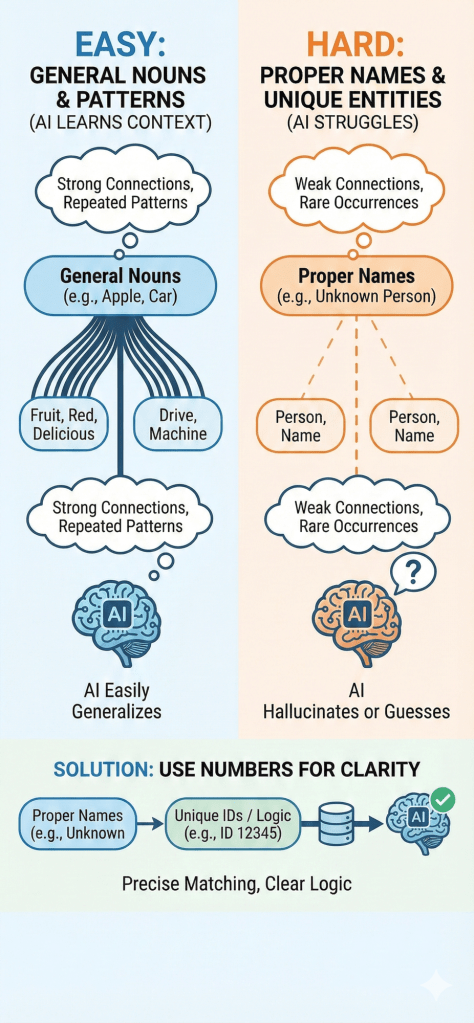

This infographic illustrates a fundamental difference in how AI handles different kinds of words. AI handles General Nouns (like “apple” or “car”) well because its understanding of them is built on strong, repeated contextual patterns. In contrast, AI struggles with Proper Nouns (like specific names), which have weak connections and little context, and this often leads to hallucinations. The visual suggests a solution: converting unique entities into Numbers or IDs, which offer the clear logic and precision that AI models handle better than ambiguous text.
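One way to picture the "convert unique entities into IDs" idea is a small lookup table that swaps proper nouns for stable identifiers before text reaches a model and swaps them back afterwards. The sketch below is only an illustration under assumptions: the `EntityRegistry` class, the `ENT_0001` ID format, and the sample names are all hypothetical and do not come from the infographic.

```python
import re


class EntityRegistry:
    """Hypothetical helper: maps proper nouns to stable IDs and back."""

    def __init__(self, prefix="ENT"):
        self.prefix = prefix
        self._name_to_id = {}
        self._id_to_name = {}

    def register(self, name):
        """Return the ID for a name, creating a new one on first sight."""
        if name not in self._name_to_id:
            entity_id = f"{self.prefix}_{len(self._name_to_id) + 1:04d}"
            self._name_to_id[name] = entity_id
            self._id_to_name[entity_id] = name
        return self._name_to_id[name]

    def encode(self, text):
        """Replace every registered name in the text with its ID."""
        if not self._name_to_id:
            return text
        # Longer names go first so "Ada Lovelace" wins over "Ada".
        names = sorted(self._name_to_id, key=len, reverse=True)
        pattern = re.compile(
            r"\b(?:" + "|".join(re.escape(n) for n in names) + r")\b"
        )
        return pattern.sub(lambda m: self._name_to_id[m.group(0)], text)

    def decode(self, text):
        """Replace known IDs with their original names; leave unknown IDs alone."""
        pattern = re.compile(r"\b" + re.escape(self.prefix) + r"_\d+\b")
        return pattern.sub(
            lambda m: self._id_to_name.get(m.group(0), m.group(0)), text
        )


registry = EntityRegistry()
registry.register("Ada Lovelace")
registry.register("Ada")

encoded = registry.encode("Ada Lovelace met Ada.")
decoded = registry.decode(encoded)
```

Here `encoded` holds the text with IDs in place of names, and `decoded` restores the original sentence. The names stay in an exact lookup table, so the model only has to carry the ID through unchanged.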

With Gemini

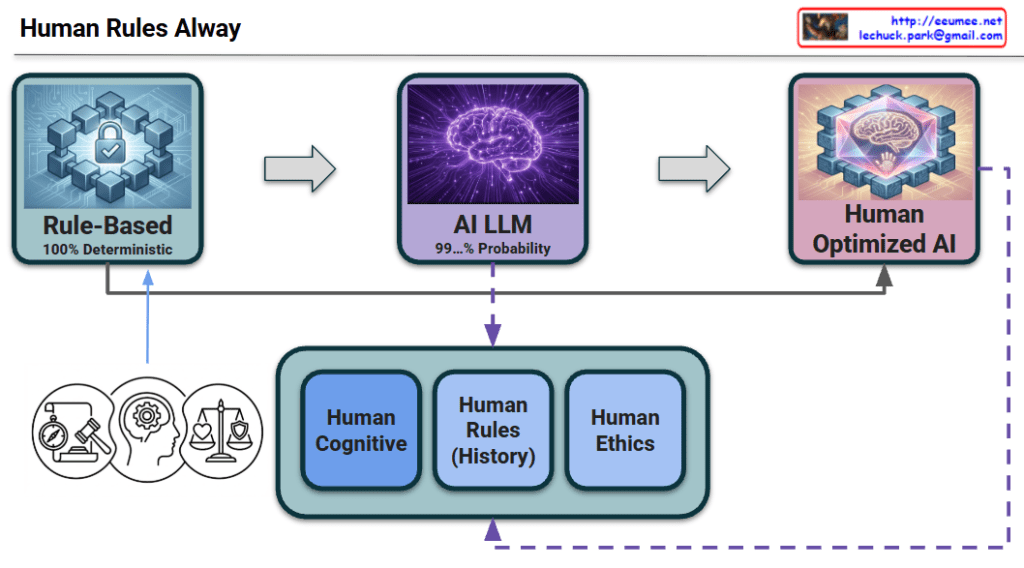

This diagram visualizes the history and future direction of intelligent systems. It illustrates the evolution from the era of manual programming to the current age of generative AI, and finally to the ultimate goal where human standards perfect the technology.

This section represents the mechanism that drives the evolution from Stage 2 to Stage 3.

This diagram declares that the future of AI lies not in discarding the old “Rule-Based” ways, but in fusing that deterministic precision with modern probabilistic power to create a truly optimized intelligence.
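As a rough illustration of what fusing deterministic rules with probabilistic output could look like, the sketch below lets a probabilistic component propose scored candidates and lets hard rules decide which ones are allowed. Everything in it is an assumption made for the example: the `Candidate` type, the `select_with_rules` function, the order-ID rule, and the scores are hypothetical, and no real model is called.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Candidate:
    text: str
    probability: float  # assumed score from some probabilistic model


Rule = Callable[[str], bool]


def select_with_rules(
    candidates: List[Candidate], rules: List[Rule]
) -> Optional[Candidate]:
    """Pick the most probable candidate that passes every deterministic rule."""
    valid = [c for c in candidates if all(rule(c.text) for rule in rules)]
    if not valid:
        return None  # caller can fall back to a rule-based default
    return max(valid, key=lambda c: c.probability)


def is_order_id(text: str) -> bool:
    """Deterministic rule (hypothetical format): 'ORD-' plus six digits."""
    return re.fullmatch(r"ORD-\d{6}", text) is not None


# The likelier candidate breaks the format rule, so the valid one is chosen.
candidates = [
    Candidate("ORD-12A456", 0.6),
    Candidate("ORD-123456", 0.3),
]
best = select_with_rules(candidates, [is_order_id])
```

In this arrangement the probabilistic side ranks the options while the rule-based side has the final veto, which is one simple reading of the hybrid approach the diagram describes.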

#AIEvolution #FutureOfAI #HybridAI #DeterministicVsProbabilistic #HumanInTheLoop #TechRoadmap #AIArchitecture #Optimization #ResponsibleAI