The Computing for the Fair Human Life.

From Claude with some prompting

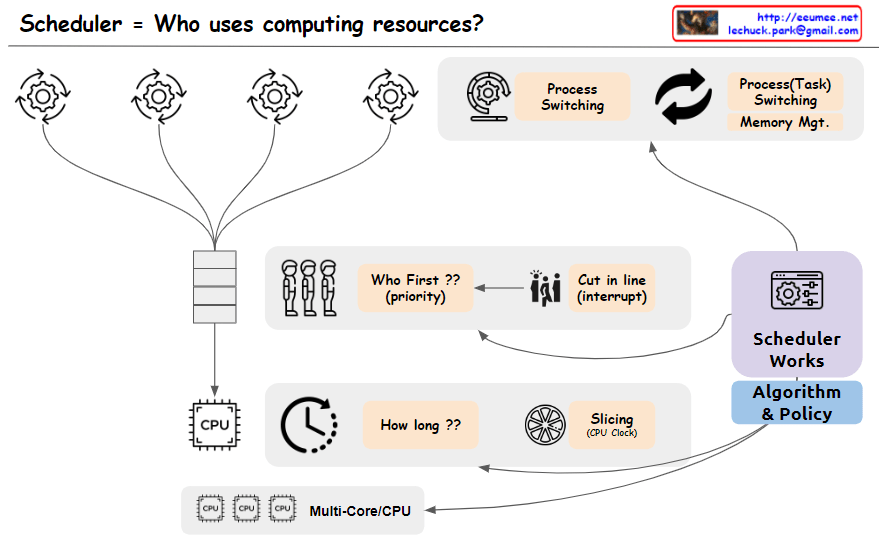

The image depicts a scheduler system that manages the allocation of computing resources, addressing the key question “Who uses computing resources?”. The main components shown are:

The image effectively covers the key concepts and components involved in scheduling computing resources, including task prioritization, interrupts, CPU time allocation, time slicing, process/task switching, scheduling algorithms and policies, and support for multi-core/multi-CPU systems. Memory management is assumed to be part of the task-switching process and is not explicitly depicted.
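A small simulation makes the time-slicing and task-switching ideas above concrete. This sketch assumes a single CPU and plain round-robin scheduling with a fixed quantum. That is one common policy among the scheduling algorithms the image alludes to. Priorities, interrupt sources other than the timer, and multi-core dispatch are left out. `Task` and `round_robin` are hypothetical names used only for illustration.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    burst: int  # remaining CPU time the task needs (arbitrary time units)


def round_robin(tasks, quantum):
    """Simulate round-robin time slicing on one CPU (hypothetical helper).

    Returns (timeline, finish): timeline lists (name, start, end) slices,
    finish maps each task name to the time it completed.
    """
    queue = deque(Task(t.name, t.burst) for t in tasks)  # copy so the input is not mutated
    clock = 0
    timeline = []
    finish = {}
    while queue:
        task = queue.popleft()          # dispatch: switch to the next ready task
        run = min(quantum, task.burst)  # run until the slice expires or the task ends
        timeline.append((task.name, clock, clock + run))
        clock += run
        task.burst -= run
        if task.burst > 0:
            queue.append(task)          # timer interrupt: preempt and requeue at the back
        else:
            finish[task.name] = clock   # task is done; record its completion time
    return timeline, finish


if __name__ == "__main__":
    timeline, finish = round_robin([Task("A", 5), Task("B", 3), Task("C", 1)], quantum=2)
    print(timeline)  # [('A', 0, 2), ('B', 2, 4), ('C', 4, 5), ('A', 5, 7), ('B', 7, 8), ('A', 8, 9)]
    print(finish)    # {'C': 5, 'B': 8, 'A': 9}
```

A smaller quantum gives every task a turn sooner but adds more context switches. A larger one reduces switching overhead at the cost of responsiveness.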

From Claude with some prompting

The image illustrates the role of a Data Processing Unit (DPU) in facilitating seamless and delay-free data exchange between different hardware components such as the GPU, NVME (likely referring to an NVMe solid-state drive), and other devices.

The key highlight is that the DPU enables “Data Exchange Parallely without a Delay” and provides “Seamless” connectivity between these components. In other words, the DPU acts as a high-speed interconnect that lets multiple data transfers run in parallel with minimal bottlenecks and latency.

The image emphasizes the DPU’s ability to provide a low-latency, high-bandwidth data processing channel, enabling efficient data movement and processing across various hardware components within a system. This seamless connectivity and delay-free data exchange are crucial for applications that require intensive data processing, such as data analytics, machine learning, or high-performance computing, where minimizing latency and maximizing throughput are critical.
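A back-of-the-envelope model shows why parallel, overlapped data exchange matters. It assumes two things, purely for illustration: each transfer either waits its turn on a single shared path, or gets its own independent path with no contention. The `Transfer` type, the bandwidth figures, and the per-transfer setup cost are hypothetical. None of them come from the image or from any real DPU.

```python
from dataclasses import dataclass


@dataclass
class Transfer:
    src: str
    dst: str
    size_gb: float        # payload size in gigabytes
    bandwidth_gbs: float  # path bandwidth in gigabytes per second (hypothetical)
    setup_s: float = 0.0  # fixed per-transfer overhead in seconds (hypothetical)

    def duration(self) -> float:
        return self.setup_s + self.size_gb / self.bandwidth_gbs


def serialized_time(transfers):
    """One transfer at a time, e.g. every copy staged through a single shared path."""
    return sum(t.duration() for t in transfers)


def parallel_time(transfers):
    """Idealized overlap: each transfer has its own path, so the slowest one dominates."""
    return max((t.duration() for t in transfers), default=0.0)


if __name__ == "__main__":
    transfers = [
        Transfer("NVMe", "GPU", size_gb=4.0, bandwidth_gbs=4.0),   # 1.0 s
        Transfer("Other", "GPU", size_gb=6.0, bandwidth_gbs=3.0),  # 2.0 s
        Transfer("GPU", "NVMe", size_gb=1.0, bandwidth_gbs=0.5),   # 2.0 s
    ]
    print(serialized_time(transfers), parallel_time(transfers))  # 5.0 2.0
```

In this example, serializing the three transfers takes 5 s. The idealized overlapped case finishes in 2 s, bounded by the slowest single transfer. Real systems land somewhere in between because of shared links, memory bandwidth, and protocol overhead.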

==================

The key features of the DPU highlighted in the image are:

The DPU aims to provide a high-speed, low-latency data processing channel, enabling efficient data movement and computation between various hardware components in a system. This can be particularly useful in applications that require intensive data processing, such as data analytics, machine learning, or high-performance computing.

From Claude with some prompting

This image provides an overview of different types of processors and their key characteristics. It compares CPUs, ASICs (Application-Specific Integrated Circuits), FPGAs (Field-Programmable Gate Arrays), and GPUs (Graphics Processing Units).

The CPU is described as a central processing unit for general-purpose computing, handling diverse tasks with high performance but at a low cost/price ratio.

The ASIC is an application-specific integrated circuit designed for specific tasks like cryptography and AI. It has low performance per price but is highly optimized for its intended use cases.

The FPGA is a reconfigurable processor that allows design changes and prototyping. It has medium performance per price and is suitable for data processing sequences.

The GPU is designed for graphics processing and parallel data processing. It excels at high-performance computing for graphics-intensive applications, but has a medium to high cost/price ratio.

The image highlights the key differences in terms of processing capability, specialization, reconfigurability, performance, and cost among these processor types.

From Gemini with some prompting

The image depicts the concept of time and its relationship to matter, light, and change. Here’s a breakdown of the image elements:

Image Analysis

The image conveys that time is intricately intertwined with matter, light, and change. Time is used to measure the movement of matter and light, while change signifies the passage of time.

Text Analysis

Overall Interpretation

The image is a multifaceted representation of the complexity and diversity of time. It goes beyond time as a mere tool for counting numbers and delves into its profound relationship with matter, light, and change.

From ChatGPT with some prompting

The image is a schematic representation of GPU applications across three domains, emphasizing the GPU’s strength in parallel processing:

Image Processing: GPUs are employed to perform parallel updates on image data, which is often in matrix form, according to graphical instructions, enabling rapid rendering and display of images.
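The per-pixel update pattern can be sketched in plain Python. The example assumes an 8-bit grayscale image stored as a list of rows. It uses a brightness adjustment as the "graphical instruction". `brighten` and `brighten_row` are hypothetical helpers.

Each row is independent, so rows are handed to a thread pool. In CPython the threads mostly take turns because of the global interpreter lock. This therefore illustrates the decomposition rather than a real speedup. A GPU would instead run roughly one thread per pixel.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten_row(row, delta):
    """Apply the same instruction (add delta, clamp to 0..255) to every pixel in a row."""
    return [min(255, max(0, p + delta)) for p in row]


def brighten(image, delta, workers=4):
    """Process rows concurrently; Executor.map returns results in input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: brighten_row(row, delta), image))


if __name__ == "__main__":
    image = [[0, 100, 250], [10, 20, 30]]
    print(brighten(image, 10))  # [[10, 110, 255], [20, 30, 40]]
```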

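Blockchain Processing: For blockchain, GPUs accelerate hash computation. Proof-of-work mining repeatedly hashes a candidate block header, which incorporates the previous block's hash and a digest of the new transactions, with different nonce values until the result meets the network's difficulty target. This is crucial in the mining race, where the goal is to find a valid new block hash before anyone else does.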
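In proof-of-work systems such as Bitcoin, this hash race is a search over nonce values. Every trial is independent, which is exactly the kind of work that spreads well across many GPU threads.

The toy sketch below makes simplifying assumptions. The "header" is a hypothetical text string of previous hash, transaction digest, and nonce, hashed once with SHA-256. Difficulty is expressed as a number of leading zero hex digits. Bitcoin itself double-hashes a binary 80-byte header and compares the result to a numeric target. The thread pool only shows how the nonce space is partitioned.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor


def block_hash(prev_hash: str, tx_digest: str, nonce: int) -> str:
    """SHA-256 over a toy header string (hypothetical format, not Bitcoin's)."""
    header = f"{prev_hash}|{tx_digest}|{nonce}".encode()
    return hashlib.sha256(header).hexdigest()


def search_range(prev_hash, tx_digest, difficulty, start, stop):
    """Try every nonce in [start, stop); return the first (nonce, hash) meeting the target."""
    target = "0" * difficulty
    for nonce in range(start, stop):
        h = block_hash(prev_hash, tx_digest, nonce)
        if h.startswith(target):
            return nonce, h
    return None


def mine(prev_hash, tx_digest, difficulty, workers=4, chunk=10_000, max_nonce=10_000_000):
    """Split the nonce space into chunks searched concurrently, round by round.

    Returns the smallest valid (nonce, hash), or None if none exists below max_nonce.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for base in range(0, max_nonce, chunk * workers):
            futures = [
                pool.submit(search_range, prev_hash, tx_digest, difficulty,
                            base + i * chunk, min(base + (i + 1) * chunk, max_nonce))
                for i in range(workers)
            ]
            found = [r for r in (f.result() for f in futures) if r is not None]
            if found:
                return min(found)  # lowest nonce found in this round
    return None


if __name__ == "__main__":
    print(mine("00" * 32, "tx-digest", difficulty=3))
```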

Deep Learning Processing: In deep learning, GPUs are used for their ability to process multidimensional data, like tensors, in parallel. This speeds up the complex computations required for neural network training and inference.
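A neural-network layer shows the same pattern at the tensor level. The hypothetical `dense_forward` below computes `relu(x·W + b)` for a batch of input vectors. Every sample, and every output element within it, can be computed independently, which is what GPU kernels exploit. Plain lists and a thread pool stand in for tensors and GPU threads here, so this sketches the structure only.

```python
from concurrent.futures import ThreadPoolExecutor


def dense_one(x, weights, bias):
    """One sample: y_j = relu(b_j + sum_i x_i * W[i][j]); weights has shape len(x) x len(bias)."""
    out = []
    for j in range(len(bias)):
        s = bias[j] + sum(x[i] * weights[i][j] for i in range(len(x)))
        out.append(max(0.0, s))  # ReLU activation
    return out


def dense_forward(batch, weights, bias, workers=4):
    """Run the layer on every sample of the batch concurrently, preserving order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda x: dense_one(x, weights, bias), batch))


if __name__ == "__main__":
    batch = [[1, 2], [3, -4]]
    weights = [[1, 0, -1], [0, 1, 1]]  # 2 inputs -> 3 outputs
    bias = [0.0, 0.0, 0.5]
    print(dense_forward(batch, weights, bias))  # [[1.0, 2.0, 1.5], [3.0, 0.0, 0.0]]
```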

A common thread across these applications is the GPU’s ability to handle multidimensional data structures—matrices and tensors—in parallel, significantly speeding up computations compared to sequential processing. This parallelism is what makes GPUs highly effective for a wide range of computationally intensive tasks.