From DALL-E with some prompting

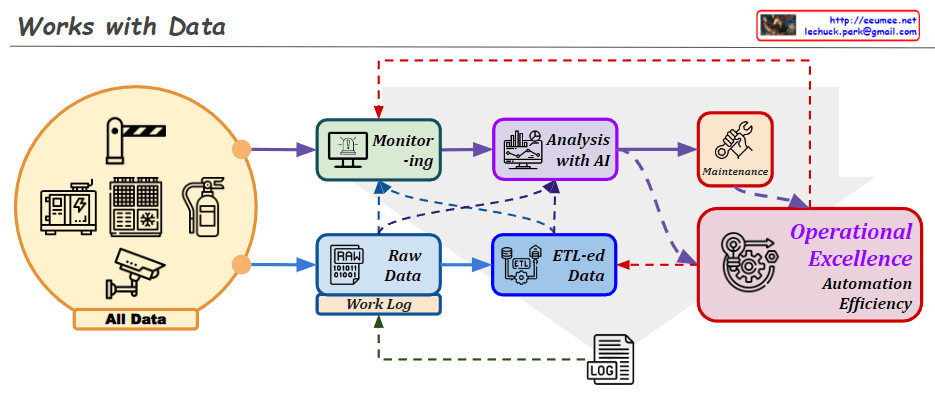

The image presents different data collection configurations in facility management systems:

- Direct Connection: Equipment directly sends data to the network without any intermediate device.

- Controller: A PLC (Programmable Logic Controller), DDC (Direct Digital Control) unit, or gateway collects data from the equipment and then sends it to the network.

- Dedicated Meter: Specialized meters are used to collect specific data, which is then transferred directly to the network.

- Dedicated Meter & Controller: Dedicated meters work together with a PLC/DDC/gateway, which collects the meter data and handles control before the data goes to the network.

- Internal Control System: An integrated control system manages and monitors data internally before the data reaches the network.

- Solution System: A standalone, self-contained system with full functionality for a specific operation.

This depiction emphasizes the progression from direct data routing to more complex systems involving multiple stages of data handling and integration.
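To make the progression concrete, the six configurations can be sketched as ordered data paths. This is only an illustrative model, not something taken from the image: the names `Config`, `DATA_PATHS`, `intermediate_stages`, and `describe` are hypothetical, and the stage lists are my reading of the descriptions above. In particular, the Solution System is assumed to end at the system itself, because the description does not mention a network hop.

```python
from enum import Enum


class Config(Enum):
    """The six data collection configurations named in the image."""
    DIRECT = "Direct Connection"
    CONTROLLER = "Controller"
    DEDICATED_METER = "Dedicated Meter"
    METER_AND_CONTROLLER = "Dedicated Meter & Controller"
    INTERNAL_CONTROL = "Internal Control System"
    SOLUTION_SYSTEM = "Solution System"


# Hypothetical stage lists: each path starts at the equipment and lists the
# stages the data passes through, in order. These are assumptions for
# illustration, derived from the bullet descriptions.
DATA_PATHS = {
    Config.DIRECT: ["equipment", "network"],
    Config.CONTROLLER: ["equipment", "PLC/DDC/gateway", "network"],
    Config.DEDICATED_METER: ["equipment", "dedicated meter", "network"],
    Config.METER_AND_CONTROLLER: [
        "equipment", "dedicated meter", "PLC/DDC/gateway", "network",
    ],
    Config.INTERNAL_CONTROL: ["equipment", "internal control system", "network"],
    # Assumption: a standalone system keeps its data inside itself.
    Config.SOLUTION_SYSTEM: ["equipment", "solution system"],
}


def intermediate_stages(config: Config) -> list[str]:
    """Return the stages that sit between the equipment and the network."""
    return [s for s in DATA_PATHS[config] if s not in ("equipment", "network")]


def describe(config: Config) -> str:
    """Render one configuration as a readable arrow chain."""
    return f"{config.value}: " + " -> ".join(DATA_PATHS[config])


if __name__ == "__main__":
    for config in Config:
        print(describe(config))
```

Counting the entries returned by `intermediate_stages` gives a rough measure of how many handling steps a configuration adds: none for a direct connection, two for the meter plus controller setup.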