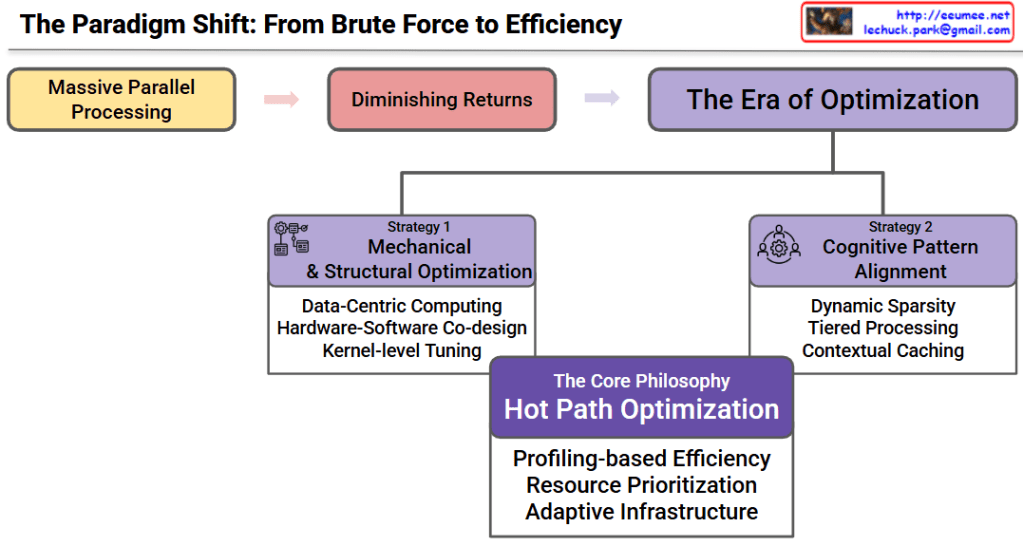

This diagram illustrates a shift under way in AI development: from a “brute-force” approach that relies on scaling infrastructure and consuming ever more energy, to a targeted, efficiency-first approach to optimization.

1. The Evolutionary Path in AI Infrastructure

The top flow outlines the historical and current trajectory of AI computing:

- Massive Parallel Processing: This represents the “Brute Force” era of AI. Progress was historically driven by simply throwing massive GPU clusters and enormous amounts of electrical power at models to achieve scale.

- Diminishing Returns: We are hitting a physical and energetic wall. Pumping more hardware and megawatts of power into data centers is yielding progressively smaller performance gains due to power density limits, cooling challenges, and silicon constraints.

- The Era of Optimization: The new frontier of AI development. Since we can no longer rely purely on adding more servers and power, the focus is shifting toward extracting more compute per watt and raising the utilization of existing infrastructure.

2. The Dual-Pillar Strategy for Efficiency

To navigate away from energy-heavy brute force, the diagram proposes two distinct but complementary optimization approaches:

Strategy 1: Mechanical & Structural Optimization

This focuses on the physical and foundational software layers to prevent energy and computational waste.

- Data-Centric Computing: Keeping data close to the processing units to reduce the massive energy cost of moving data across networks.

- Hardware-Software Co-design: Designing AI software and the silicon it runs on together, so that workloads reach high throughput without drawing excess power.

- Kernel-level Tuning: Tuning the operating system kernel (for example its scheduling, memory management, and I/O paths) to reduce overhead and latency.
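As a rough illustration of the data-centric idea above, the sketch below places a task on whichever node already holds most of its input, so fewer bytes cross the network. The node names, shard sizes, and the functions `bytes_moved` and `place_task` are hypothetical and exist only for this example; a real scheduler would also weigh load, bandwidth, and hardware type.

```python
from typing import Dict


def bytes_moved(shard_sizes: Dict[str, int], compute_node: str) -> int:
    """Bytes that must cross the network if the task runs on compute_node."""
    return sum(size for node, size in shard_sizes.items() if node != compute_node)


def place_task(shard_sizes: Dict[str, int]) -> str:
    """Pick the node that minimizes the bytes moved (the one holding the most input)."""
    return min(shard_sizes, key=lambda node: bytes_moved(shard_sizes, node))


# Hypothetical layout: node name -> bytes of the task's input stored on that node.
shards = {"node-a": 8_000, "node-b": 1_000, "node-c": 1_000}

best = place_task(shards)
print(best, bytes_moved(shards, best))   # node-a 2000
print(bytes_moved(shards, "node-b"))     # 9000
```

In this toy layout, running the task next to the largest shard moves 2,000 bytes instead of 9,000.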

Strategy 2: Cognitive Pattern Alignment

This focuses on algorithmic and logical efficiency, ensuring the AI models themselves are running “smarter.”

- Dynamic Sparsity: Skipping unnecessary calculations in AI models, such as multiplications involving zero values in a neural network, which reduces the compute required.

- Tiered Processing: Assigning tasks to the right level of hardware based on complexity, so high-power GPUs are only used when absolutely necessary.

- Contextual Caching: Intelligently predicting and storing data to speed up AI inference without repeatedly fetching it from main memory.
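The three ideas above can be sketched in a few lines each. This is a minimal illustration, not how any particular framework implements them: `sparse_dot`, `pick_tier`, `embed`, the tier names, and the 256-token threshold are all assumptions made for the example. The caching part uses plain memoization via `functools.lru_cache`, which stores results but does no prediction.

```python
from functools import lru_cache
from typing import List, Tuple


def sparse_dot(weights: List[float], activations: List[float]) -> Tuple[float, int]:
    """Dot product that skips zero activations; returns (result, multiplies performed)."""
    total, multiplies = 0.0, 0
    for w, a in zip(weights, activations):
        if a == 0.0:
            continue  # dynamic sparsity: no work for zero-valued inputs
        total += w * a
        multiplies += 1
    return total, multiplies


def pick_tier(prompt_tokens: int, small_limit: int = 256) -> str:
    """Tiered processing: route short requests to a cheaper tier (hypothetical names)."""
    return "cpu-small-model" if prompt_tokens <= small_limit else "gpu-large-model"


@lru_cache(maxsize=1024)
def embed(text: str) -> Tuple[int, ...]:
    """Stand-in for an expensive computation; repeated inputs are served from the cache."""
    return tuple(ord(c) for c in text)


print(sparse_dot([0.5, 2.0, 1.0, 4.0], [2.0, 0.0, 0.0, 1.0]))  # (5.0, 2)
print(pick_tier(40), pick_tier(4000))  # cpu-small-model gpu-large-model
embed("hello"); embed("hello")
print(embed.cache_info().hits)  # 1
```

Here the sparse dot product performs two multiplications instead of four, and the second `embed("hello")` call is answered from the cache.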

3. The Core Philosophy: Hot Path Optimization

At the foundation of this new era is Hot Path Optimization, which the diagram presents as the answer to the energy and infrastructure bottleneck.

Instead of keeping the entire AI data center running at maximum power, this philosophy dictates:

- Profiling-based Efficiency: Profiling the AI workload to identify its “Hot Paths”, the code paths that run most often and account for most of the compute cost.

- Resource Prioritization: Funneling the best hardware and power strictly into those critical paths, rather than wasting energy on idle or low-priority tasks.

- Adaptive Infrastructure: Creating an environment that dynamically scales power and resources in real time to match the needs of the AI model.
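A minimal sketch of the first two steps, assuming a profile has already been collected: find the fewest stages that account for a chosen share of total cost, then split a resource budget across them in proportion to that cost. The stage names, the cost figures, the 80% coverage threshold, and the functions `find_hot_paths` and `allocate` are hypothetical; a real system would likely keep a minimum allocation for the remaining stages.

```python
from typing import Dict, List


def find_hot_paths(profile: Dict[str, float], coverage: float = 0.8) -> List[str]:
    """Return the fewest stages whose combined cost reaches `coverage` of the total."""
    total = sum(profile.values())
    hot: List[str] = []
    covered = 0.0
    # Walk stages from most to least expensive until the target share is covered.
    for stage, cost in sorted(profile.items(), key=lambda kv: kv[1], reverse=True):
        if covered >= coverage * total:
            break
        hot.append(stage)
        covered += cost
    return hot


def allocate(budget: float, profile: Dict[str, float], hot: List[str]) -> Dict[str, float]:
    """Split a resource budget across the hot stages in proportion to their cost."""
    hot_total = sum(profile[s] for s in hot)
    return {s: budget * profile[s] / hot_total for s in hot}


# Hypothetical profile: stage name -> measured cost (arbitrary units).
profile = {"attention": 60.0, "mlp": 25.0, "tokenize": 5.0, "logging": 10.0}

hot = find_hot_paths(profile)
print(hot)  # ['attention', 'mlp']
print(allocate(170.0, profile, hot))
```

Re-running the two functions whenever a fresh profile arrives is one simple way to approximate the adaptive behaviour described in the last bullet.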

#AIInfrastructure #EnergyEfficiency #SustainableAI #OptimizationEra #GreenDataCenter #HotPathOptimization #ComputePerWatt #TechVisualization