From Claude with some prompting

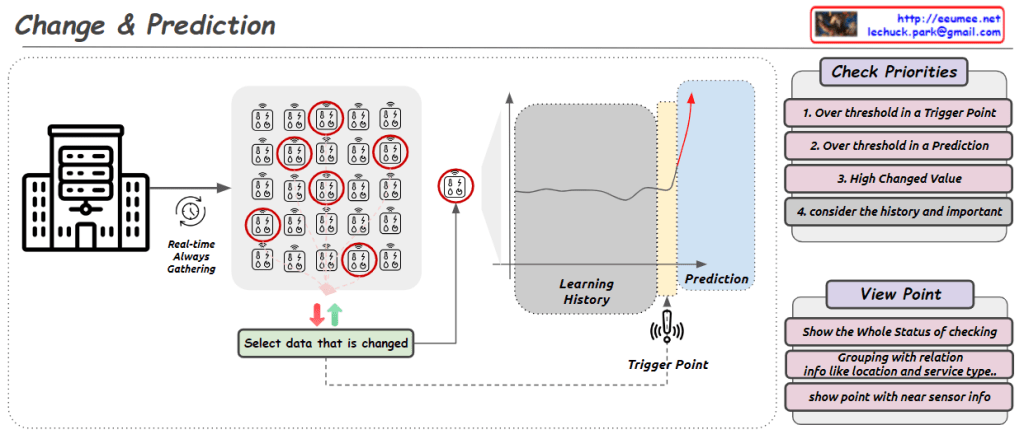

The image presents a “Trend & Predictions” process, illustrating a data-driven prediction system. The key aspect is the transition from manual validation to automation.

- Data Collection & Storage: Digital data is gathered from various sources and stored in a database.

- Manual Selection & Validation:
  1. The user manually selects which metric (data) to use.
  2. The user manually chooses which AI model to apply.
  3. The user analyzes and confirms the results using the selected data and model.

- Transition to Automation: Once optimal metrics and models are confirmed in the manual validation phase, the system learns and switches to automation mode. It then:
  1. Automatically collects and processes data based on the selected metrics.
  2. Automatically applies the validated models.
  3. Applies pre-set thresholds to the prediction results.
  4. Automatically detects and alerts on significant predictive patterns or anomalies based on those thresholds.

The core of this process is combining user expertise with system efficiency. Initially, users directly select metrics and models, validating results to “educate” the system. This phase determines which data is meaningful and which models are accurate.
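
The validation phase can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the system shown in the image: the three candidate models, the `cpu_load` series, and the use of mean absolute error as the accuracy measure are all hypothetical choices made for the example.

```python
from statistics import mean

# Hypothetical candidate models: each maps a history of readings
# to a forecast of the next reading.
def last_value(history):
    return history[-1]

def moving_average(history, window=3):
    return mean(history[-window:])

def linear_trend(history):
    # Extrapolate the most recent step one period ahead.
    return history[-1] + (history[-1] - history[-2])

CANDIDATES = {
    "last_value": last_value,
    "moving_average": moving_average,
    "linear_trend": linear_trend,
}

def mean_absolute_error(model, series, warmup=3):
    """Walk forward through the series, forecasting each point
    from the points that precede it, and average the errors."""
    errors = [abs(model(series[:i]) - series[i])
              for i in range(warmup, len(series))]
    return mean(errors)

def validate(series):
    """Score every candidate on the chosen metric's history and
    return the name of the most accurate one plus all scores."""
    scores = {name: mean_absolute_error(model, series)
              for name, model in CANDIDATES.items()}
    best = min(scores, key=scores.get)
    return best, scores

# Hypothetical metric chosen by the user for validation.
cpu_load = [10, 12, 14, 16, 18, 20, 22, 24]
best, scores = validate(cpu_load)
print(best, scores)
```

On this steadily rising series the trend-extrapolating model has the lowest error, so it would be the one a user confirms before handing over to automation. In practice the user would also inspect the results rather than rely on a single score.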

Once this “learning” stage is complete, the system transitions to automation mode. It now automatically collects and processes data and generates predictions using the user-validated metrics and models. It also applies preset thresholds to those predictions to automatically detect significant trend changes or anomalies.
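
A minimal sketch of this automation mode follows, again in Python. The names `MonitorConfig`, `check`, and `run`, the `cpu_load` metric, and the threshold of 80 are assumptions made for illustration; the image does not specify how predictions are compared against thresholds, so a simple "prediction exceeds threshold" rule is used here.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence

@dataclass
class MonitorConfig:
    """What the manual phase hands over: a validated metric,
    a validated model, and a preset alert threshold."""
    metric: str
    model: Callable[[Sequence[float]], float]
    threshold: float

def linear_trend(history: Sequence[float]) -> float:
    # Hypothetical validated model: extrapolate the latest step.
    return history[-1] + (history[-1] - history[-2])

def check(config: MonitorConfig, history: Sequence[float]) -> Optional[str]:
    """Predict the next value and return an alert message
    if the prediction crosses the preset threshold."""
    prediction = config.model(history)
    if prediction > config.threshold:
        return (f"ALERT {config.metric}: predicted {prediction:.1f} "
                f"exceeds threshold {config.threshold:.1f}")
    return None

def run(config: MonitorConfig, readings: Sequence[float],
        warmup: int = 2) -> List[str]:
    """Feed readings in one at a time, as a collector would,
    and gather any alerts raised along the way."""
    alerts: List[str] = []
    history: List[float] = []
    for value in readings:
        history.append(value)
        if len(history) < warmup:
            continue  # not enough data for the model yet
        message = check(config, history)
        if message is not None:
            alerts.append(message)
    return alerts

config = MonitorConfig(metric="cpu_load", model=linear_trend, threshold=80.0)
alerts = run(config, [60, 65, 70, 76, 79])
for line in alerts:
    print(line)
```

Because the alert is raised on the predicted value rather than the observed one, the user is warned before the metric itself reaches the threshold, which is the point of combining prediction with thresholding.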

The system can therefore monitor trends continuously and alert users whenever an important change is detected. Users can respond quickly, which enhances both the accuracy of predictions and the efficiency of the system.