From Claude with some prompting

Here’s an interpretation of the diagram in English, focusing on the major changes in internet traffic types:

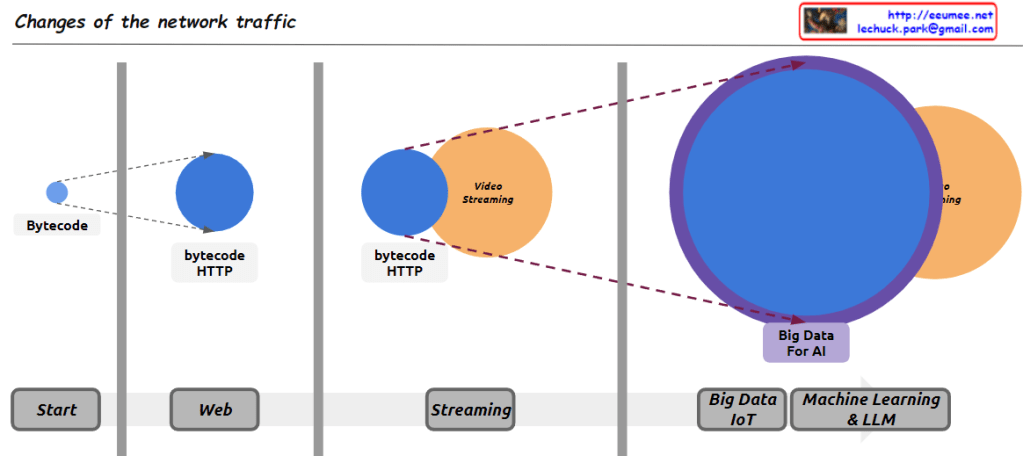

- Early Internet (Start): The small “Bytecode” circle represents the limited data exchange of the early internet. This period was primarily characterized by simple, text-based information exchange.

- Web Era (Web): The larger “bytecode HTTP” circle illustrates the surge in HTTP traffic with the advent of the World Wide Web. This represents increased traffic from web browsing, web-based email, and early online services.

- Streaming Age (Streaming): The addition of the “Video Streaming” circle signifies the explosive growth in video streaming traffic, driven by platforms like YouTube and Netflix. This marks a paradigm shift in internet bandwidth usage.

- Big Data and AI Era (Big Data IoT / Machine Learning & LLM): The largest circle, “Big Data For AI,” represents the enormous traffic increase due to IoT device proliferation, cloud computing ubiquity, and large-scale data processing for AI and machine learning. The diagram implies that this category now makes up the largest portion of internet traffic.

This diagram effectively shows the evolution of internet traffic from simple data exchange to web-based services, media streaming, and the current data-centric, AI-driven era.

Comments (points to be cautious about):

- Accuracy: It’s unclear whether the circle sizes accurately reflect actual traffic volumes. The diagram should be read as a conceptual representation rather than a measurement.

- Time scale: The time intervals between stages may not be uniform, which is not indicated in the diagram.

- Overlap: In reality, these traffic types coexist and are not as distinctly separated as the diagram suggests.

- Recent trends: The diagram doesn’t reflect traffic changes driven by more recent developments such as 5G or edge computing.

- Regional differences: These changes may not have occurred uniformly worldwide, which is not reflected in the diagram.

Keep these points in mind when interpreting the diagram. Overall, the image conveys the macroscopic trends in the evolution of internet traffic concisely.