From Claude with some prompting

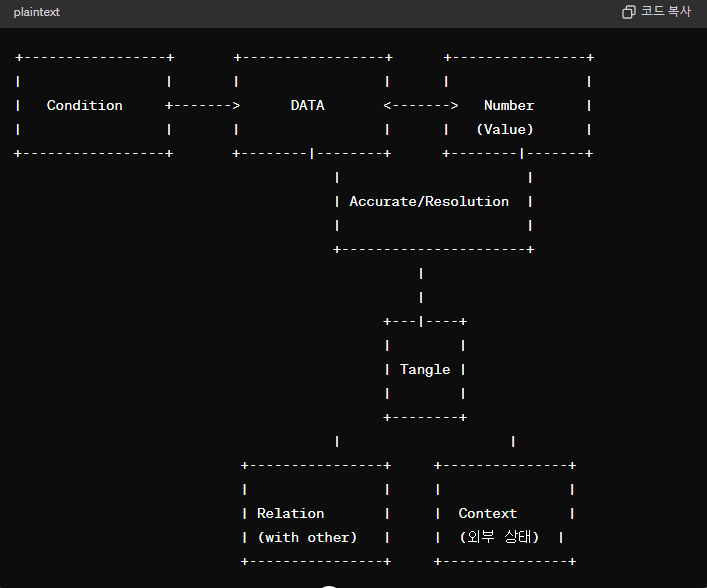

This image presents a definition of data that goes beyond the numerical value alone. It identifies three elements that must be considered to understand a piece of data clearly.

First, the accuracy and resolution of the data itself are crucial. “Number (Value)” is the numerical value, which must be precise and recorded at an appropriate level of resolution.

Second, data depends on external factors. “Condition” means that a value is tied to the state of other data, while “Relation with other” indicates that it is connected to other data sets.

Third, “Tangle” illustrates that data is not merely a simple number but is complexly intertwined with various elements. To clearly define data, these intricate interconnections and interdependencies must be accounted for.
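One way to make these three elements concrete is a small record type. The sketch below is only an illustration, not something shown in the image: `DataPoint`, its field names, and the `tangle` helper are hypothetical, and the sensor names are made-up sample data. It stores a value together with its resolution, the conditions it depends on, and its links to other data, then follows those links to show how far the "tangle" reaches.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class DataPoint:
    """Hypothetical record: a value plus the context the image says it needs."""
    name: str
    value: Decimal                      # "Number (Value)"
    resolution: Decimal                 # smallest step worth reporting, e.g. Decimal("0.1")
    conditions: dict[str, str] = field(default_factory=dict)  # "Condition"
    related: list[str] = field(default_factory=list)          # "Relation with other"

    def at_resolution(self) -> Decimal:
        # Round the raw value to the declared resolution.
        return self.value.quantize(self.resolution)


def tangle(points: dict[str, DataPoint], start: str) -> set[str]:
    """Return every data point reachable from `start` through `related` links."""
    seen: set[str] = set()
    stack = [start]
    while stack:
        name = stack.pop()
        if name in seen or name not in points:
            continue
        seen.add(name)
        stack.extend(points[name].related)
    return seen


# Made-up sample data: three sensor readings that reference one another.
points = {
    "temperature": DataPoint(
        "temperature", Decimal("21.37"), Decimal("0.1"),
        conditions={"sensor_state": "calibrated"},
        related=["humidity"],
    ),
    "humidity": DataPoint("humidity", Decimal("48.2"), Decimal("1"), related=["pressure"]),
    "pressure": DataPoint("pressure", Decimal("1013.25"), Decimal("0.01")),
}

print(points["temperature"].at_resolution())
print(sorted(tangle(points, "temperature")))
```

Here the temperature reading is only meaningful alongside its resolution and the calibration state it was taken under, and following its `related` links pulls in humidity and, through humidity, pressure.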

In essence, the image argues that data cannot be reduced to a numeric value. A complete definition covers the value's accuracy and resolution, its dependence on external conditions, its relationships with other data, and the way all of these are intertwined. Understanding data means considering these aspects together rather than one at a time.

Updated by GPT-4o