From Claude with some prompting

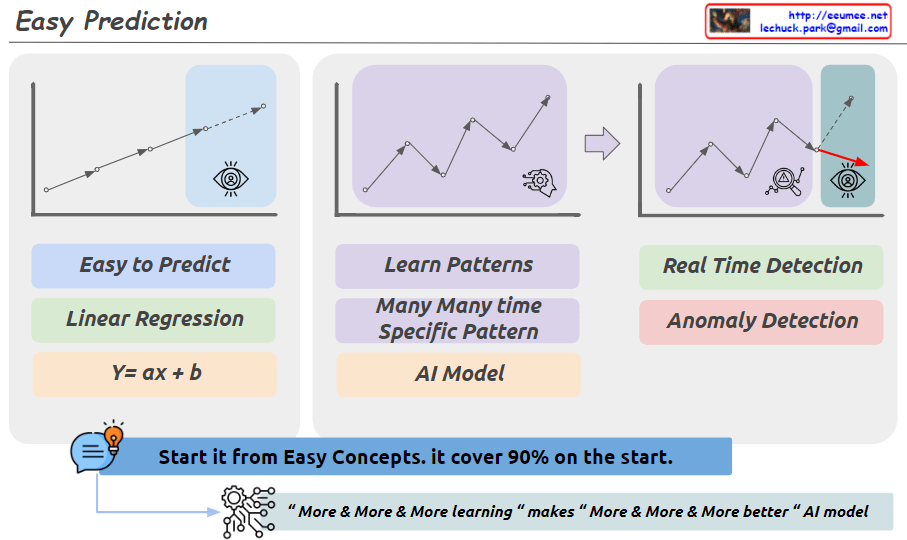

This image illustrates three main approaches to prediction and pattern recognition.

First, for easy prediction, a linear regression model (y = ax + b) is enough. The image represents this with a simple upward trendline. Although the concept is basic, the image emphasizes that it can cover 90% of cases.
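As a rough illustration of the y = ax + b idea, here is a minimal sketch of an ordinary least-squares line fit in plain Python. The function names `fit_line` and `predict` and the sample data are hypothetical, chosen only for this example; they do not come from the image.

```python
from statistics import mean


def fit_line(xs, ys):
    """Fit y = a*x + b by ordinary least squares and return (a, b)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")
    mean_x, mean_y = mean(xs), mean(ys)
    # Slope = covariance(x, y) / variance(x)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    if var == 0:
        raise ValueError("all x values are identical; slope is undefined")
    a = cov / var
    b = mean_y - a * mean_x
    return a, b


def predict(a, b, x):
    """Evaluate the fitted line at x."""
    return a * x + b


# Made-up sample data that lies exactly on y = 2x + 1
xs = [0, 1, 2, 3]
ys = [1, 3, 5, 7]
a, b = fit_line(xs, ys)
print(a, b, predict(a, b, 4))
```

Real data will not sit exactly on a line, so the fitted `a` and `b` describe the overall trend rather than every point.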

Second, learning complex patterns that recur over time requires an AI model. The image depicts this with a jagged line.

Third, real-time anomaly detection means identifying sudden spike patterns as they occur.
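One simple way to flag sudden spikes is to compare each new value against the mean and standard deviation of the values just before it. The sketch below assumes that approach (a trailing-window z-score). The image does not specify a method, and the function name `detect_spikes`, the window size, and the threshold are all illustrative choices.

```python
from statistics import mean, pstdev


def detect_spikes(values, window=5, threshold=3.0, min_std=1e-9):
    """Return indices whose value deviates from the trailing window's mean
    by more than `threshold` standard deviations of that window."""
    spikes = []
    for i in range(window, len(values)):
        recent = values[i - window:i]  # the `window` values before index i
        mu = mean(recent)
        # Floor the deviation so a perfectly flat window does not divide by zero
        sigma = max(pstdev(recent), min_std)
        if abs(values[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes


# Made-up readings with one obvious spike at index 7
readings = [10, 11, 10, 12, 11, 10, 11, 50, 11, 10]
print(detect_spikes(readings))  # flags index 7 (the value 50)
```

Because each point is judged only against values that came before it, the same check can run as data arrives. The trade-off is that a spike inflates the window's spread for the next few points, so anomalies that immediately follow one are harder to catch.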

Additionally, at the bottom of the image, a new phrase has been added: “More & More & More learning makes More & More & More better AI model.” This conveys the idea that as an AI model learns from more and more data, its performance continues to improve.

In summary, the image lays out a step-by-step approach: start with simple concepts to build a foundation, use AI models to learn complex patterns, and keep improving those models through continued training on more data. The emphasis is on starting with the basics while recognizing what advanced AI techniques can achieve when given extensive data to learn from.